I appreciate the patience you folks have shown. I see now that what the standard implies is that as frequency gets very low, a progressively higher level of distortion is tolerated. When you think about it, can a person really sense distortion at all in a 10 or 15 Hz impulse? Is there any distortion in an earthquake, or a cement truck rumbling by? Probably not, but maybe some studies have shown that people can, or maybe they just had to establish a definition somehow. I imagine at 10 Hz, I could tolerate 100% or multiples more. Things change a lot at 30 Hz, where the standard becomes much stricter. At 125 Hz, 2.7% seems like a lot of distortion to tolerate (more lenient than strict), but it may be easier to accept when other high-quality speakers in the system are reproducing the program material with less distortion (in which case the overall system distortion would be something less than 2.7%). Is that an argument for the Geddes approach to subwoofer integration?

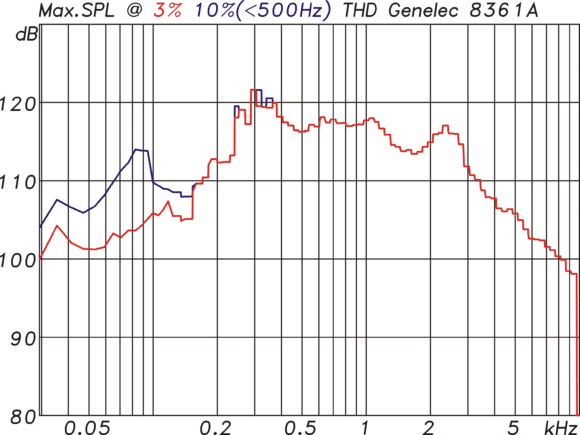

It seems an unfamiliar way to present the data. When a DAC or full-range speaker is tested, we’re used to seeing distortion measured either at a particular frequency with a sweep of output power levels, or at a fixed output level with a frequency sweep. But in the subwoofer world, you hold frequency constant and increase the output until you hit the ceiling on a defined distortion level. It must work OK to do it that way, but it takes a little re-alignment of thought to internalize.
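
To help me internalize it, here is a rough Python sketch of the "hold frequency, raise level until you hit the ceiling" idea. Everything in it is an assumption for illustration: `toy_distortion` is a made-up model (1% at 90 dB, doubling every 6 dB), not real driver data, `max_clean_output` is a hypothetical helper name, and the limits passed in are placeholders, not the standard's actual threshold table.

```python
def max_clean_output(distortion_pct, levels_db, limit_pct):
    """Step up through levels_db (ascending) at one fixed frequency and
    return the highest level whose distortion stays within limit_pct.
    Returns None if even the lowest level exceeds the limit."""
    best = None
    for level in levels_db:
        if distortion_pct(level) > limit_pct:
            break  # ceiling hit: stop raising the output
        best = level
    return best


def toy_distortion(level_db):
    # Hypothetical model, not measured data:
    # 1% distortion at 90 dB, doubling for every 6 dB of extra output.
    return 1.0 * 2 ** ((level_db - 90) / 6)


levels = range(90, 121)  # 1 dB steps from 90 to 120 dB

# A stricter limit (like the 2.7% figure mentioned for 125 Hz) stops the
# climb sooner than a looser limit would at a lower frequency.
strict = max_clean_output(toy_distortion, levels, 2.7)
loose = max_clean_output(toy_distortion, levels, 10.0)
print(strict, loose)
```

With the same toy driver, the looser ceiling lets the level climb about 11 dB higher before the test stops, which is how I read the result being reported as a maximum output per frequency rather than a distortion curve.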