This has nothing to do with the time resolution of PCM or subsample delays. It may touch on (if the detail is there) how to calculate acoustic impedance.

https://www.aes.org/e-lib/browse.cfm?elib=6008

I am not arguing about anything; it was my first post in this thread (OK, you edited the post). I was just interested in whether you knew the author, since you mentioned acoustic impedance measurement. The world is not limited to PCM subsample delays.

Sorry, I confused posters there for a minute.

I do not know that work (well, I can't read the actual paper), but I do know of what I suspect are very similar results described in English (sorry, I'm your typical monolingual American).

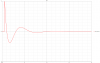

@pkane's DeltaWave does a good job with subsample shifts. Shifting a file first by 0.333 samples, then a second time by 0.667, gives a total shift of 1.000 sample. After rotating left by one sample, the difference to the original file is basically an infinite null, except for some Nyquist (fs/2) ripple with peaks every 512 samples (apparently the FFT block size used for shifting).
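
DeltaWave's internals aren't described here, so as an assumption the sketch below does a whole-file FFT shift (a linear phase ramp on the spectrum) with NumPy and repeats the 0.333 + 0.667 experiment. `fft_fractional_shift` is a hypothetical name. It reproduces the Nyquist residue: a real signal's Nyquist bin must stay real, so the fractional phase requested there is lost. It does not reproduce the peaks every 512 samples, because it does not work in blocks.

```python
import numpy as np

def fft_fractional_shift(x, delay):
    """Delay a real signal by `delay` samples (fractional allowed) by multiplying
    its spectrum with a linear phase ramp. The shift is circular (wraps around)."""
    n = len(x)
    spectrum = np.fft.rfft(x)
    k = np.arange(len(spectrum))
    spectrum *= np.exp(-2j * np.pi * k * delay / n)
    # For even n the last bin is Nyquist; a real output can only keep its real
    # part, so the fractional phase asked for there is lost.
    return np.fft.irfft(spectrum, n=n)

rng = np.random.default_rng(0)
x = rng.standard_normal(4096)

y = fft_fractional_shift(fft_fractional_shift(x, 0.333), 0.667)
residual = np.roll(y, -1) - x        # "rotate left by one sample", then subtract

R = np.fft.rfft(residual)
print(np.max(np.abs(R[:-1])))        # essentially zero: every bin below Nyquist nulls
print(abs(R[-1]))                    # non-zero: the Nyquist bin does not null
```

If the test signal has no energy at fs/2, the null is complete; the residue lives entirely in the Nyquist bin.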

So, in a practical example using Adobe Audition, one would upsample to 64x without a filter (since filtering would impose a frequency-response change), then calculate the required shift count and implement the remaining fractional shift (in upsampled sample periods) by linear interpolation, and then downsample again without filtering?
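
The bookkeeping in that plan can be sketched as below. `split_delay` is a hypothetical helper: it only splits a delay given in base-rate samples into whole periods at the oversampled rate plus the remainder that linear interpolation would cover. It also shows that a 7-period shift at 64x is 7/64 of a base-rate sample.

```python
import math

def split_delay(delay_samples, factor=64):
    """Split a delay (in base-rate samples) into whole periods at the
    oversampled rate plus a fractional remainder (0 <= frac < 1) that
    would be handled by linear interpolation."""
    total = delay_samples * factor       # delay measured in oversampled periods
    whole = math.floor(total)
    frac = total - whole
    return whole, frac

whole, frac = split_delay(0.333, 64)     # 21 whole periods, remainder about 0.312
print(whole, round(frac, 3))
print(7 / 64)                            # a 7-period shift at 64x, in base-rate samples
```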

I just tried it (without the interpolation, just shifting the 64x file by 7 samples) but got odd results: the result is polarity-inverted and about 11 dB lower in level.

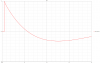

Sorry, but this is not correct either!

I think the step response is useful: the time it needs to reach 0, and what percentage of the maximum it later reaches, show whether a speaker is precise and can produce good stereo width or not.

On the subject of the stereo phantom image, width, elevation, spaciousness, envelopment, and so on, you will find much useful information in Floyd Toole's book "Sound Reproduction". And no, you cannot infer the stereo image width established by loudspeakers from the step response.

There are a lot of ways to do subsample or variable-length resampling. DC and Nyquist present issues for real signals when using FFT methods. Proakis' method of oversampling and filtering in the oversampled domain is pretty much bulletproof, and gives you an SNR of approximately n^4, where n is the oversampling factor. 64 is almost bulletproof, and at 128 the SNR pretty much exceeds that of any real signal. At that rate, you can simply use linear interpolation between the two nearest samples at 128 times the sampling rate. Of course, only calculate the samples you actually need.
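
A minimal sketch of oversample, filter, then linearly interpolate, computing only the two oversampled samples each output needs. The interpolation filter (a Kaiser-windowed sinc with half-width 16 and beta 8) and all function names are my assumptions, not Proakis' exact design. The n^4 figure matches how linear interpolation behaves: its error shrinks as 1/n^2 in amplitude, so about 12 dB of SNR per doubling of n (roughly 40·log10(n) dB up to a signal-dependent constant).

```python
import numpy as np

def kaiser_sinc(t, half_width=16, beta=8.0):
    """Kaiser-windowed sinc evaluated at arbitrary offsets t (in input samples).
    half_width and beta are assumed design values, not taken from Proakis."""
    t = np.asarray(t, dtype=float)
    w = np.zeros_like(t)
    inside = np.abs(t) < half_width
    w[inside] = np.i0(beta * np.sqrt(1.0 - (t[inside] / half_width) ** 2)) / np.i0(beta)
    return np.sinc(t) * w

def oversampled_sample(x, i, n, half_width=16):
    """Sample i of the n-times oversampled, low-pass-filtered signal (time i/n).
    Only this one sample is computed, not the whole oversampled sequence."""
    t = i / n
    base = int(np.floor(t))
    k = np.arange(base - half_width, base + half_width + 2)
    k = k[(k >= 0) & (k < len(x))]
    return float(np.dot(x[k], kaiser_sinc(t - k, half_width)))

def fractional_delay(x, delay, n=128, half_width=16):
    """Delay x by `delay` input samples: for each output, evaluate the two nearest
    oversampled samples and linearly interpolate between them."""
    x = np.asarray(x, dtype=float)
    y = np.empty(len(x))
    for m in range(len(x)):
        p = (m - delay) * n              # position on the oversampled grid
        i0 = int(np.floor(p))
        frac = p - i0
        a = oversampled_sample(x, i0, n, half_width)
        b = oversampled_sample(x, i0 + 1, n, half_width)
        y[m] = (1.0 - frac) * a + frac * b
    return y

# Usage: delay a low-frequency sine by 0.3 samples and compare with the exact answer.
m = np.arange(400)
x = np.sin(2 * np.pi * 0.05 * m)
y = fractional_delay(x, 0.3)
exact = np.sin(2 * np.pi * 0.05 * (m - 0.3))
print(np.max(np.abs(y - exact)[40:-40]))   # small, away from the edges
```

A production version would precompute the filter as a polyphase table instead of evaluating the window per sample; this form just keeps the steps visible.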

It turns out that Audition cannot handle extreme factors like 64x, which the developers did not foresee (though in general they did an excellent job).

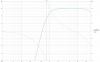

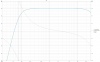

I would not like to be a prophet of phase linearity, but I'd say that currently, for decent active monitors or any high-level digital speakers, it's a must. It can be done at almost no additional cost, so why not?

Linear phase including the bass means high latency, which is often not acceptable for monitoring or even for enjoying combined video and audio.
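
The latency argument follows from a property of linear-phase FIR filters: a symmetric filter with N taps delays everything by (N - 1)/2 samples. Fine control at low frequencies needs frequency resolution roughly on the order of fs/N, so bass correction pushes N, and with it the delay, up. The tap counts below are illustrative assumptions, and `windowed_sinc_lowpass` is a hypothetical example filter, not any product's design.

```python
import numpy as np

def linear_phase_latency_ms(num_taps, fs):
    """Delay of a symmetric (linear-phase) FIR: (N - 1) / 2 samples, in ms."""
    return (num_taps - 1) / 2 / fs * 1000.0

def windowed_sinc_lowpass(num_taps, cutoff):
    """Hypothetical example filter: symmetric Hann-windowed sinc lowpass.
    cutoff is in cycles/sample (0 < cutoff < 0.5); num_taps should be odd."""
    m = np.arange(num_taps) - (num_taps - 1) / 2
    return 2 * cutoff * np.sinc(2 * cutoff * m) * np.hanning(num_taps)

# Illustrative tap counts at 48 kHz (assumed, not from any product):
for taps in (1025, 8193, 32769):
    print(taps, "taps ->", round(linear_phase_latency_ms(taps, 48000), 1), "ms")

# Numerical check of linear phase: removing a pure (N - 1) / 2 sample delay
# from the frequency response leaves it real-valued (imaginary part ~ 0).
h = windowed_sinc_lowpass(1025, 0.01)
nfft = 8192
H = np.fft.rfft(h, nfft)
w = 2 * np.pi * np.arange(len(H)) / nfft
H0 = H * np.exp(1j * w * (len(h) - 1) / 2)
print(np.max(np.abs(H0.imag)))
```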

A low-latency mode, as in the Kii, for live monitoring (where exact phase is not so important), and lipsync for the consumer AV case.

The time cost of linearizing the bass is not worth it in many applications.

In critical applications like a mastering studio, it will be done as a complete system with subwoofers and delay lines.

Yes, as a mode it surely can be done; my reply above was about why it has a cost or disadvantage when used fully.

I mean it might be one of the available presets, for example.

Or limit phase linearisation to, e.g., the midbass, where pinpoint localisation is at least troublesome for our ear-brain and phase error will not really matter.

On the other hand, unfortunately, the biggest audible differences for non-pathological designs are in the bass region, where group delay can exceed known psychoacoustic limits.

Alternatively, you can simply compensate each driver, and the crossover as well, digitally, using DSP, and it all works like a charm.

Still, the drivers should preferably be placed geometrically so that their acoustic centres have no significant distance differences: correcting such differences with a DSP delay works for only one angle and compromises the radiation pattern and the reflected sound.
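
To put numbers on the "only one angle" point, here is a far-field sketch for two drivers with an assumed 2 cm depth offset between acoustic centres and an assumed 15 cm vertical spacing. A delay chosen for 0° leaves a timing error that grows with the vertical listening angle. The geometry, the speed of sound, and the function names are all assumptions for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C (assumed)

def extra_path_m(depth_offset, vertical_spacing, angle_deg):
    """Far-field extra path from driver B relative to driver A.
    B sits `vertical_spacing` m above A and `depth_offset` m behind it;
    angle_deg is the vertical listening angle, positive above the axis."""
    th = math.radians(angle_deg)
    return depth_offset * math.cos(th) - vertical_spacing * math.sin(th)

def residual_ms(depth_offset, vertical_spacing, angle_deg):
    """Timing error left when the DSP delay is tuned for 0 degrees only."""
    fixed = extra_path_m(depth_offset, vertical_spacing, 0.0)  # what the delay removes
    err_m = extra_path_m(depth_offset, vertical_spacing, angle_deg) - fixed
    return err_m / SPEED_OF_SOUND * 1000.0

for angle in (0, 5, 10, 15, 30):
    print(angle, "deg:", round(residual_ms(0.02, 0.15, angle), 3), "ms")
```

With these assumed numbers the error is about 0.115 ms at 15°, which at an assumed 2 kHz crossover is roughly 83° of phase between the drivers.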