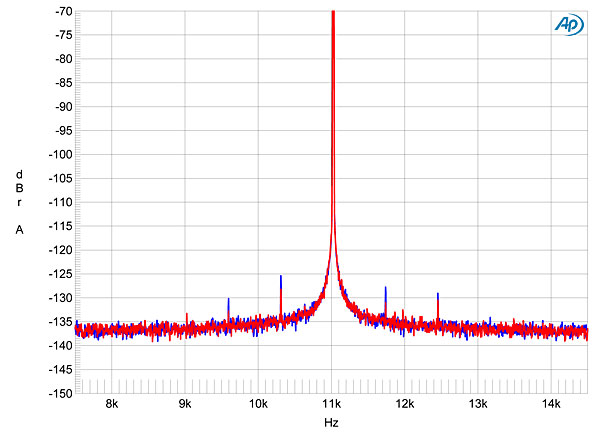

Measuring phase noise accurately can be a challenge, and after a very quick skim, IMO there are far too many variables in this to say with certainty that phase noise is worse when cold for all DACs, let alone how much it matters to the listener. I noticed it seemed to spike up at 1 hour; what's up with that? I will note that RF systems and test equipment routinely use temperature-controlled oscillators, often with built-in heaters, to ensure frequency stability. Oscillator topology is a big player, but phase noise is also heavily dependent upon the circuit design, particularly the oscillator's amplifier and biasing circuit. For my present work we measure it well enough in the open lab, but when I needed truly accurate measurements I worked in a screen room, or at least a well-constructed screen box, to isolate the device under test (DUT) from external noise sources. Of course, measuring phase noise in the -140 to -160 dBc/Hz range is a bit more challenging than in the -60 to -100 dBc/Hz range...
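To put those ranges in perspective, here is a rough sketch that converts the levels to linear power ratios. It assumes the figures are referenced to the carrier in a 1 Hz bandwidth (dBc/Hz, the usual convention for phase noise); the function name `db_to_power_ratio` is just something I made up for illustration.

```python
def db_to_power_ratio(level_db: float) -> float:
    """Convert a level in dB to a linear power ratio."""
    return 10.0 ** (level_db / 10.0)


# Assumption: levels are relative to the carrier, per 1 Hz of bandwidth.
for level in (-60, -100, -140, -160):
    ratio = db_to_power_ratio(level)
    print(f"{level:>5} dBc/Hz is about {ratio:.0e} of the carrier power per Hz")
```

Going from -100 to -160 is a factor of a million in power, which is why the test setup's own noise floor and any stray pickup start to dominate at the low end, and why shielding the DUT becomes worth the trouble.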

I have used test instruments that specify anywhere from no warmup to one hour to one day before use, the worst (longest) being a highly accurate microwave instrument that specified 48 hours before reaching rated specs. Did it really take that long? Almost certainly not, but we had to follow the manufacturer's guidance to be able to certify our devices. These days an hour suffices for most equipment (better designs, better parts, better compensation methods).