Below is a post made today in a thread I started a while ago. Note that I don't know this poster or whether what they say is valid; I'm just sharing it in case anyone is interested. I will not respond to any comments about it, because it is beyond my knowledge. I just thought someone here might find it interesting to read.

=============

A bit error rate of 1 in 10^12 would do the job for sure... "if" you can get to this performance in a real-life implementation. Personally, I think there is a lot that needs to be taken care of in a PC-to-DAC system set-up to approach this.
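To put that figure in perspective, here is a rough back-of-envelope sketch. It reads the number above as one bit error per 10^12 bits, and it assumes errors are independent and arrive at a steady average rate, which real links may not obey. The 480 Mbit/s value is the USB 2.0 high-speed signalling rate; the two PCM payload rates are illustrative choices, not figures from the post.

```python
def seconds_between_errors(bit_rate_bps: float, ber: float) -> float:
    """Mean time between bit errors for a given bit rate and bit error rate.

    Assumes independent errors at a constant average rate (a simplification).
    """
    return 1.0 / (bit_rate_bps * ber)


BER = 1e-12  # assumed reading: one errored bit per 10^12 bits

# Illustrative bit rates (the PCM payloads are assumptions for this example).
rates_bps = {
    "USB 2.0 high-speed line rate": 480e6,
    "16-bit / 44.1 kHz stereo PCM": 16 * 44_100 * 2,
    "24-bit / 192 kHz stereo PCM": 24 * 192_000 * 2,
}

for label, rate in rates_bps.items():
    hours = seconds_between_errors(rate, BER) / 3600
    print(f"{label}: about one bit error every {hours:.1f} hours")
```

Under these assumptions a fully loaded 480 Mbit/s link would see an error roughly every 35 minutes, while a CD-rate audio payload would see one roughly every 8 days. This says nothing about whether a given PC-to-DAC link actually achieves that error rate.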

As I mentioned above, the USB transmission eye detection margin is quite small for the receiver (DAC), at ~0.4 V. Differential noise between the power supplies of the USB link's transmitter (e.g. the PC, packed with noisy buck converters, high transient loads and even an SMPS if you're unlucky) and the receiver (the DAC, probably on a low-noise linear supply) really has the potential to impact eye detection margins.

Although the USB cable is shielded, there is also generally a GND loop between the PC and DAC via mains safety earth connections. The PC switching supply noise and ground leakage currents can pollute the safety earth and appear at the USB receiver circuit in the DAC (even though there "should" be a good ground reference established by the USB lead's shield).

Then there is EMI coupling into the D+ and D- pair as it traverses the motherboard, if a motherboard port is used, or a PCIe card, if one is used. EMI can also be coupled into the USB cable. I have had much frustrating experience of "good" quality coaxial cable being insufficiently shielded for low-jitter HF signalling. It is interesting that there are so many reports in forums of improvements in sound from multi-shielded USB cables, something I use here too.

The final area I think is very important is transmission timing, both phase noise and clock speed. The differential noise described above may or may not be enough to cause data errors through eye detection errors. Even if it does not cause data errors, the differential power noise in the transmitter's and receiver's supplies will cause threshold detection jitter in the USB data stream, and this does matter to sound quality (although I would agree this is not data error). I mentioned in my earlier post above that I have developed the ability to accurately set the relative frequency of the individual USB clock domains governing the transmitter and receiver. I have been working on this stuff for many years, and I know that as little as a 0.000005% difference in the speed of the USB transmitter's and receiver's clock domains can be heard. USB timing really matters if you are aiming for truly high-end sound quality.
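For scale, the 0.000005% figure can be converted into more familiar units. The sketch below is only unit arithmetic and says nothing about audibility. It assumes a nominal 480 MHz clock (matching the USB 2.0 high-speed bit rate) purely for illustration; the post does not state the actual clock frequencies involved.

```python
def percent_to_ppm(percent: float) -> float:
    """Convert a percentage to parts per million (1% = 10,000 ppm)."""
    return percent * 1e4


def frequency_offset_hz(nominal_hz: float, percent: float) -> float:
    """Absolute frequency difference for a given relative offset in percent."""
    return nominal_hz * percent / 100.0


def drift_seconds(elapsed_s: float, percent: float) -> float:
    """Timing drift accumulated between two clocks over elapsed_s seconds."""
    return elapsed_s * percent / 100.0


OFFSET_PERCENT = 0.000005  # figure quoted in the post
NOMINAL_HZ = 480e6         # assumed nominal clock, for illustration only

print(f"{percent_to_ppm(OFFSET_PERCENT):.2f} ppm")
print(f"{frequency_offset_hz(NOMINAL_HZ, OFFSET_PERCENT):.1f} Hz offset at 480 MHz")
print(f"{drift_seconds(3600, OFFSET_PERCENT) * 1e6:.0f} microseconds of drift per hour")
```

In other words, the quoted difference is 0.05 ppm, which at the assumed 480 MHz would be about 24 Hz, or about 180 microseconds of relative drift per hour.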

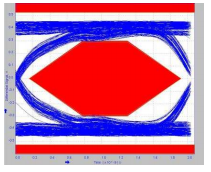

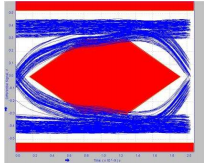

The point of the diagrams below is not to highlight that using a 3 or 5m cable could be a bad move (most people just don't go that long for audio); rather, my point is that something as simple as cable length alone can really degrade the eye detection margins. I think the issues listed above have far greater potential to harm eye margin performance than these example cable lengths.

sample USB transmission eye 9 inch cable

eye 3m cable

eye 5m cable

I don't have USB test equipment (way too expensive), but I have been modifying USB interfaces and audio servers at board level for > 14 years. I can't say beyond doubt that the above issues cause actual errors, but I have come across lots of evidence that the areas above really matter for quality.

I happened to be working up that board I posted about above prior to seeing this thread. I thought it would be fun to post about this. It is able to do much more than condition power and make or break shield links etc. I felt it's worth the time to see if anything useful can be pinned down.

Best regards,

OAudio.