KEF R3 Speaker Review

- Thread starter amirm

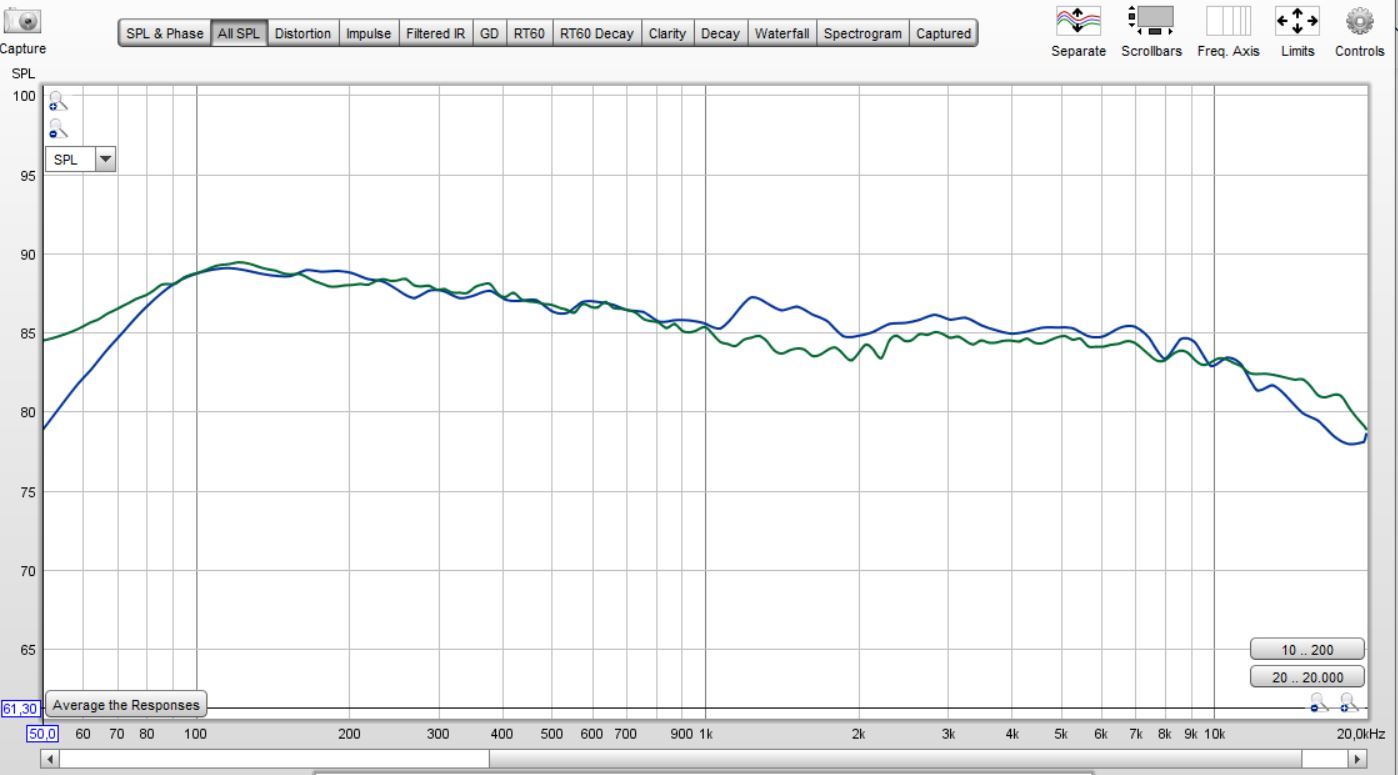

... or you can level-match the 100Hz-1kHz region and realise that the M16 is a bit higher in the 1kHz-10kHz region, after which it rolls off more steeply.

But the truth is that, barring the M16's hump at 1200-1800Hz and its sharper roll-off at the end, those two speakers are incredibly similar.

@distortion graph: so 2.83V is 86dB and 10V is 105dB?

Going from 2.83V to 10V should be +11 dB, so 86dB -> 97dB.
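A quick sketch of that arithmetic, assuming SPL rises 1:1 with drive voltage (no compression) and that 86dB is the 2.83V figure; the function name is just for illustration:

```python
import math


def voltage_step_db(v_from: float, v_to: float) -> float:
    """Level change in dB for a drive-voltage change, assuming SPL tracks voltage 1:1."""
    return 20.0 * math.log10(v_to / v_from)


gain = voltage_step_db(2.83, 10.0)  # about +10.96 dB
spl_at_10v = 86.0 + gain            # about 97 dB if 86 dB was the 2.83V reading
print(f"+{gain:.2f} dB -> {spl_at_10v:.1f} dB SPL")  # +10.96 dB -> 97.0 dB SPL
```

So a 105dB label at 10V would be about 8dB more than the voltage step alone explains, which supports the typo theory.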

Probably a typo. What is more interesting is that THD over the entire range is dominated by the 2nd harmonic, which is far more benign than if it were the 3rd. That would point to an extremely neutral-sounding speaker.

Indeed I agree it measures very well!
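For reference, THD combines the individual harmonics as a root-sum-square of their amplitude ratios, so whichever harmonic is largest dominates the figure. A minimal sketch with made-up harmonic levels (not the R3's measured values):

```python
import math


def thd_percent(harmonics_dbr: dict[int, float]) -> float:
    """THD in percent from harmonic levels in dB relative to the fundamental.

    Each level is converted to an amplitude ratio (10 ** (dB / 20)) and the
    ratios are combined as a root-sum-square.
    """
    ratios = [10 ** (level / 20.0) for level in harmonics_dbr.values()]
    return 100.0 * math.sqrt(sum(r * r for r in ratios))


def dominant_harmonic(harmonics_dbr: dict[int, float]) -> int:
    """Order of the loudest harmonic (e.g. 2 for H2)."""
    return max(harmonics_dbr, key=lambda n: harmonics_dbr[n])


# Hypothetical levels: H2 at -40 dB (1 %), H3 at -60 dB (0.1 %).
levels = {2: -40.0, 3: -60.0}
print(f"THD = {thd_percent(levels):.3f} %, dominated by H{dominant_harmonic(levels)}")
```

With these numbers the H3 component only lifts the total from 1.000% to about 1.005%, which is why a THD curve can be "driven by" a single harmonic.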

Here is the thing I believe would be interesting for most of us: as both the M16 and R3 have such similar estimated in-room responses and exhibit quite low distortion, I wonder if they could be told apart if EQ-ed to the same target and listened to blindly in a room.

tecnogadget

... or you can level-match the 100Hz-1kHz region and realise that the M16 is a bit higher in the 1kHz-10kHz region, after which it rolls off more steeply.

But the truth is that, barring the M16's hump at 1200-1800Hz and its sharper roll-off at the end, those two speakers are incredibly similar.

View attachment 54092

Looking at that graph we could say we have 2 really great speakers but not “absolute natural perfection”... we could guess:

- BUMP in 1200Hz-1800Hz for the M16s: gives them more presence in a region our ears are very sensitive to.

- SUCKOUT in 1200Hz-1800Hz for the R3s: could make them feel bright in the treble.

Let's not forget this analysis is being done on a “predicted” curve.

Here is the thing I believe would be interesting for most of us: as both the M16 and R3 have such similar estimated in-room responses and exhibit quite low distortion, I wonder if they could be told apart if EQ-ed to the same target and listened to blindly in a room.

I asked myself the same and strongly recommend your idea. IMHO carefully implemented parametric EQ is the way to go every time, no matter what reference speakers we are dealing with.

That's certainly not wrong, but the thing is you can get that stuff anywhere. There are about a million people doing reviews of speakers in this manner. But there's only one guy with a Klippel. While there certainly can be some value in subjective reviews, I'm perfectly fine with the one guy with a Klippel doing nothing but providing us with the data we pretty much can't get anywhere else. Squeeze the most value out of that thing and don't waste too much time doing other things, I say.

Fully agree with you, although for subjective impressions to also be useful to me, I would like more care in the listening tests: sensible placement and listening distance, placement according to the bass properties or EQ in the bass/modal region, stereo listening, and measurements at the listening position in the room.

I don't say anything different, and I actually ignore the subjective listening and only look at the measurements. I am just writing what I would expect to see if I wanted a subjective review as an add-on. Personally I would even prefer that the reviews had only objective measurements and that the "panther ratings" were based only on measurements, as this way the two get muddled up for inexperienced visitors.

Vuki

Something is not quite right with these two overlays...

Shouldn't both show overlaid predicted in-room responses? But it seems the predictions in one don't match the other.

Somehow we are using different data: I used the estimated listening response (Estimated In-Room Response.txt) from his zip files, which gives a very different result if I align them similarly:

It all depends on what you want to show. Take a look at this comparison. Obviously both speakers aimed for a flat PIR in the 1kHz-10kHz range, so I aligned them that way (let's ignore the M16 hump in the 1200-1800Hz range).

From that comparison it turned out that the M16 makes slightly less energy in the entire sub-1kHz region and that it also rolls off more steeply after 10kHz.

View attachment 54091
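A rough sketch of what "aligning" two PIR curves over a band means, assuming both curves have already been parsed from the .txt exports onto the same frequency grid (parsing not shown; the helper names are hypothetical). It shifts one curve by the mean dB difference inside the chosen band; averaging in dB rather than in power is itself an assumption:

```python
from typing import Sequence


def band_offset_db(freqs: Sequence[float], ref: Sequence[float], other: Sequence[float],
                   f_lo: float, f_hi: float) -> float:
    """Mean dB difference (ref - other) over the points with f_lo <= f <= f_hi.

    Assumes both curves are sampled on the same frequency grid.
    """
    diffs = [r - o for f, r, o in zip(freqs, ref, other) if f_lo <= f <= f_hi]
    if not diffs:
        raise ValueError("no frequency points inside the matching band")
    return sum(diffs) / len(diffs)


def level_match(freqs, ref, other, f_lo=1000.0, f_hi=10000.0):
    """Shift `other` so its average level in [f_lo, f_hi] equals that of `ref`."""
    offset = band_offset_db(freqs, ref, other, f_lo, f_hi)
    return [o + offset for o in other]


# Hypothetical PIR points (Hz, dB), not the real R3 / M16 data.
freqs = [100.0, 500.0, 1000.0, 5000.0, 10000.0]
pir_a = [88.0, 87.0, 85.0, 83.0, 81.0]
pir_b = [86.0, 85.5, 84.0, 82.0, 80.0]

print(level_match(freqs, pir_a, pir_b))                 # matched over 1kHz-10kHz
print(level_match(freqs, pir_a, pir_b, 100.0, 1000.0))  # matched over 100Hz-1kHz
```

Because the two made-up curves differ in tilt, the 1kHz-10kHz match needs a 1.0dB shift while the 100Hz-1kHz match needs 1.5dB, so the same data can look "higher in the mids" or "lower in the bass" depending on the band chosen.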

That's what I actually did in my initial post #84 (again with very different results to yours), which started this discussion.

... or you can level-match the 100Hz-1kHz region and realise that the M16 is a bit higher in the 1kHz-10kHz region, after which it rolls off more steeply.

Yes, that's why I also just wrote that in my following post.

View attachment 54096

View attachment 54097

Something is not quite right with these two overlays...

Shouldn't both show overlaid predicted in-room responses? But it seems the predictions in one don't match the other.

I think we're neglecting the point that these are one person's brief sighted impressions. I don't think he should be doing them at all; I'd rather see more speakers measured.

Even good speakers need care to sound their best. It took me more than 2 weeks to get my Revels to sound their best, while others such as my Genelecs sounded pretty good right off the bat, to my ears. It doesn't mean one is better than the other, as the objective measurements show.

Most of these things have to do with what is going on below the transition frequency. However, while the spinorama shows us a great deal, it does not show the lateral reflections as a separate curve. As our ears are in the lateral plane, that first sound to bounce off the sidewall is of great importance. I've seen Kevin Voecks stress this point on more than one occasion. While the R3 averages out very well, we've seen in independent measurements that it's not perfect in this regard. There are many potential factors at play, and it's easy to jump to conclusions.

Anyhow, that's my personal thought.

I agree. Personally I think lateral dispersion smoothness is much more important than vertical (for my uses anyway). I think speakers with really smooth vertical dispersion might "game the score" a bit relative to a speaker with better lateral response but weaker verticals (which may matter less to the listener).

However, while the spinorama shows us a great deal, it does not show the lateral reflections as a separate curve. As our ears are in the lateral plane, that first sound to bounce off the sidewall is of great importance.

Some of the "Revel Secret Sauce" may be limiting vertical dispersion a bit and focusing on wider lateral dispersion.

I have no doubt that the Revel will sound better; you can see it in the measurements. You should have a look at the directivity index plots.

Great, thanks for the nice review and spin data. We are also interested in what you hear in the subjective part, at least I am. To try to find explanations for why the M16 gets the golf panther while the R3 and 8341A don't get their expected football panther, I made the 3-second toggle animation below comparing the M16, R3 and 8341A. Maybe it shows too much, but I think it's a good look at the Spinorama. I have included the PIR as the upper orange curve, and also a rough curve of Toole's trained-listener preference from the first edition of his book; the M16 comes closest to that curve. Thanks if you take a look and see whether any of the directivity or Spinorama data makes sense relative to the listening test. In the lower animation the responses are filtered (8th order) to a telephone-like bandwidth and also smoothed flat as a pancake on axis, which I think, combined with the filtering, has a good normalizing effect.

Click inside the image for full resolution of the directivity patterns:

View attachment 54038

EQed flat on axis and filtered (BW, 8th order) with stopbands @80Hz / @7kHz; click inside the image for full resolution of the directivity patterns.

View attachment 54039
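If "BW" here means Butterworth (my assumption; the post doesn't name the filter type or tool) and 80Hz / 7kHz are taken as the -3dB corners, the band-limiting can be sketched as the magnitude of an ideal analog 8th-order high-pass cascaded with an 8th-order low-pass, i.e. roughly 48dB/octave skirts:

```python
import math


def butterworth_db(f: float, fc: float, order: int, kind: str) -> float:
    """Magnitude in dB of an ideal analog Butterworth filter at frequency f.

    |H|^2 = 1 / (1 + (f/fc)^(2n)) for a low-pass; the high-pass swaps f and fc.
    """
    if kind == "lowpass":
        ratio = f / fc
    elif kind == "highpass":
        ratio = fc / f
    else:
        raise ValueError("kind must be 'lowpass' or 'highpass'")
    return -10.0 * math.log10(1.0 + ratio ** (2 * order))


def band_limit_db(f: float, f_hp: float = 80.0, f_lp: float = 7000.0, order: int = 8) -> float:
    """Combined high-pass + low-pass attenuation (dB), cascading the two sections."""
    return butterworth_db(f, f_hp, order, "highpass") + butterworth_db(f, f_lp, order, "lowpass")


for f in (40.0, 80.0, 1000.0, 7000.0, 14000.0):
    print(f"{f:>7.0f} Hz: {band_limit_db(f):7.2f} dB")
```

One octave outside each corner (40Hz and 14kHz) the sketch gives about -48dB, while the midband is essentially untouched, which is what lets the animation focus on directivity in the 80Hz-7kHz band.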

(@amirm, it would be great if the DI curves were plotted with a more stretched level axis.)

You can see that the Revel achieves more constant directivity in the frequency range where typical rooms absorb more and more sound power. This should put the Revels ahead, since there are no real flaws in their frequency response in the listening window.
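To put a number on "more constant directivity", one can take the DI as the listening-window curve minus the sound-power curve (the sound power DI, as I understand CTA-2034 defines it) and look at its spread over a band. A minimal sketch with hypothetical, made-up curves on a shared frequency grid:

```python
def directivity_index(listening_window, other):
    """DI per frequency point in dB: listening window minus another spatial average
    (sound power for the sound power DI, early reflections for the ER DI)."""
    return [lw - x for lw, x in zip(listening_window, other)]


def di_spread_db(freqs, di, f_lo, f_hi):
    """Max minus min DI within [f_lo, f_hi]; smaller means more constant directivity."""
    band = [d for f, d in zip(freqs, di) if f_lo <= f <= f_hi]
    if not band:
        raise ValueError("no frequency points inside the band")
    return max(band) - min(band)


# Hypothetical curves in dB, not measured data.
freqs = [500, 1000, 2000, 4000, 8000]
lw = [86, 86, 86, 86, 86]
sp = [82, 81, 80, 79, 78]

sp_di = directivity_index(lw, sp)
print(sp_di, di_spread_db(freqs, sp_di, 1000, 8000))
```

A smoothly rising DI and a wobbly one can have the same spread, so this is only a rough summary; the DI curve itself still needs to be looked at.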

Loudspeakers like some B&W models will also sound better than presumably great speakers like the Genelec or KEF. Maybe even better than the Revels, since they use the trick of lowering the DI at higher frequencies, where typical rooms absorb a lot of energy; but most B&W speakers do not provide a linear direct frequency response, which is a minus. The use of super tweeters wasn't established out of nowhere...

In most of your analysis you didn't consider the room and speaker enough as a unit. The listening distance, the reverberation time over frequency and the mix of direct and later-arriving sound energy are very important to better predict the sound quality...

Thanks, now it looks identical to mine.

Sorry guys, I loaded the wrong PIR for the M16.

Here's how they look when the 1kHz-10kHz range is matched:

View attachment 54112

I’m the one who performed that blind test, and I agree — I couldn’t find any obvious major coloration differences once bass was normalized. They sounded remarkably similar actually, in terms of frequency response. But they did not sound similar in terms of “fidelity”, for lack of a better word.

This objective and subjective review from Amir is really fascinating to me, because of how closely it aligns with my KEF R3 experience: I initially bought the R3 because the published specs seemed to indicate a speaker that should outperform just about anything else out there — and now thanks to Amir, we’ve confirmed that KEF was not sugarcoating the measurements. The KEF R3 really does measure fantastically well.

And yet in my blind test, they didn’t really dominate, despite their phenomenal measurements suggesting that they should. They were clearly fantastic speakers, but the truth is when compared side by side, they lost pretty severely to the Ascend Sierra 2EX in a bass-normalized blind test. And this result is mirrored in Amir’s subjective listening where the R3 loses subjectively to another speaker (the Revel), which purely according to measurements shouldn’t be happening. And there are many other accounts you will find online mirroring this.

As for what could explain this? I do not know. Many of us suspect dispersion breadth to be the likely culprit, but we need more data to establish exactly how much more weight wide dispersion deserves than current preference scores give it. And thanks to Amir’s equipment and hard work, many of us are happy to look forward to much more valuable data.

FWIW, ultimately I ended up returning the KEF R3s, but I still have my Revel F206 and all my Ascend flagship speakers. I still have not done a blind test between the Revel F206 and Ascend RAAL Towers, but I do still plan to get around to it eventually.

Thanks for the input! Don't forget to send your speakers to Amir to get measured

That certainly looks like the M16 would sound better, i.e. more pleasing. But the R3 is more accurate. And I think we can agree by now that as a speaker gets more accurate, it gets more boring.

Sorry guys, I loaded the wrong PIR for the M16.

Here's how they look when the 1kHz-10kHz range is matched:

View attachment 54112

That certainly looks like the M16 would sound better, i.e. more pleasing

Listening to both without EQ, the M16 would certainly sound punchier, and with more presence in the 1-2kHz region it may seem more attractive, but IMO there is no doubt that the R3 is the better speaker, and that would show as soon as you EQ them both.

tecnogadget

Sorry guys, I loaded the wrong PIR for the M16.

Here's how they look when the 1kHz-10kHz range is matched:

View attachment 54112

Graphics don’t lie. It totally makes sense that the M16 would please most audiences: you get more presence in the critical 1k to 2k region and a bass boost centered at 100Hz.

It’s like adding some salt and seasoning to food, I consider it to be under the acceptable parameters of different tastes.

Both are great speakers with different voicing, and each will suit different applications/systems. I still prefer the R3 as a personal choice because of its performance and technology, but I can’t deny that the M16 has the advantage in the price department.
