Placeholder for a new thread to discuss ideas on how to make speaker measurements more meaningful.

Today's reviews and scoring are based on past approaches and have led to a lot of discussion in the review threads. Many of those discussions show a need to do something better. This thread is meant to consolidate them and (hopefully) drive out some concrete improvements.

Here is one small example (my own) from the Sointuva review thread...

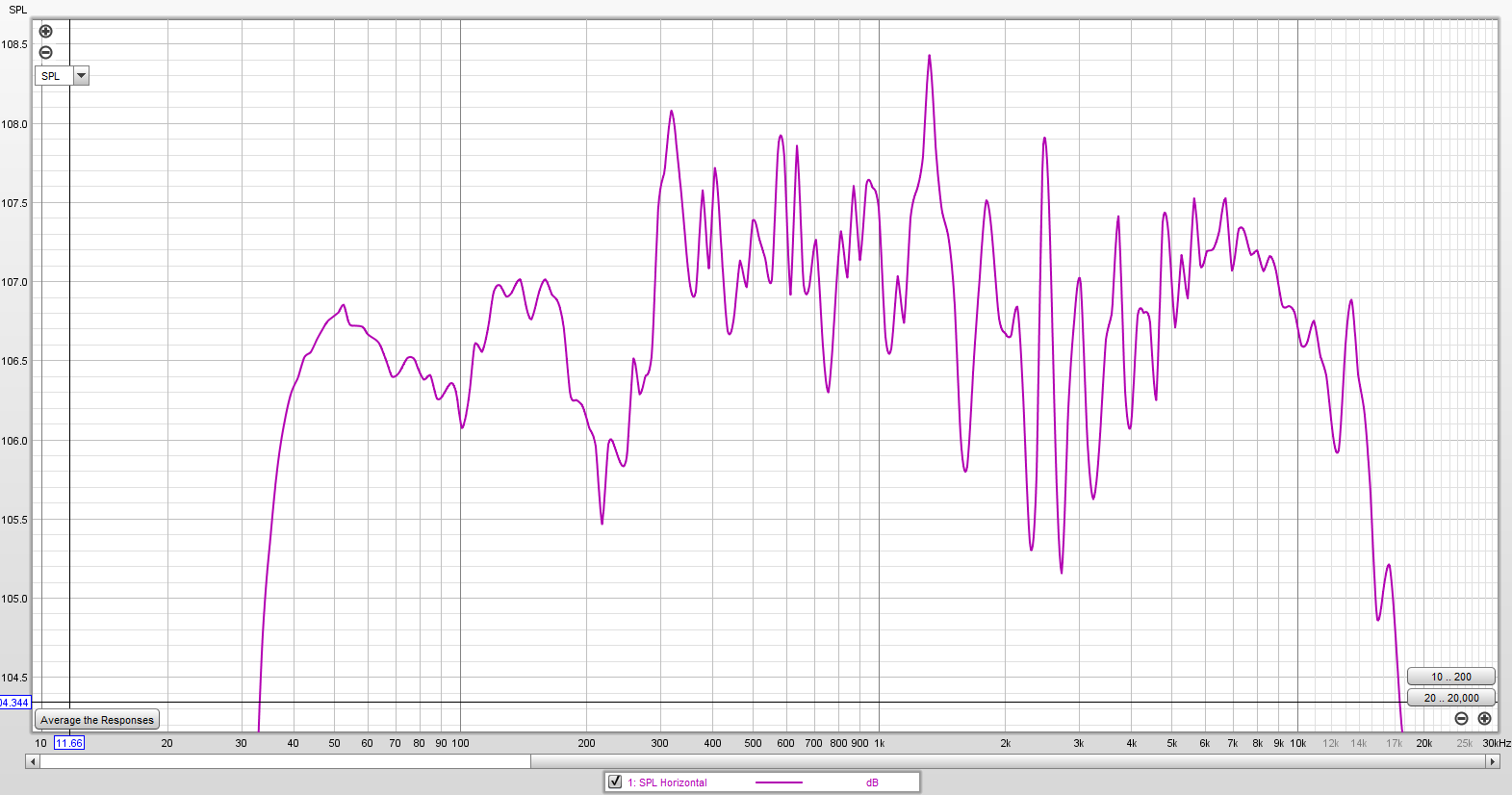

Some of the problem starts with how the data is presented, IMO. For instance, the usual 50 dB range of the vertical scale on a simple frequency response plot leads many to think the response is flatter than it really is. Here is on-axis Klippel data from a highly regarded active monitor (it looks quite flat when shown using the standard range), shown at a 4 dB range:

Does not look very flat now, does it? It is harder to eyeball a trend line too. I can tell you that if you fit a trend line to this data, it is neither flat nor zero slope.

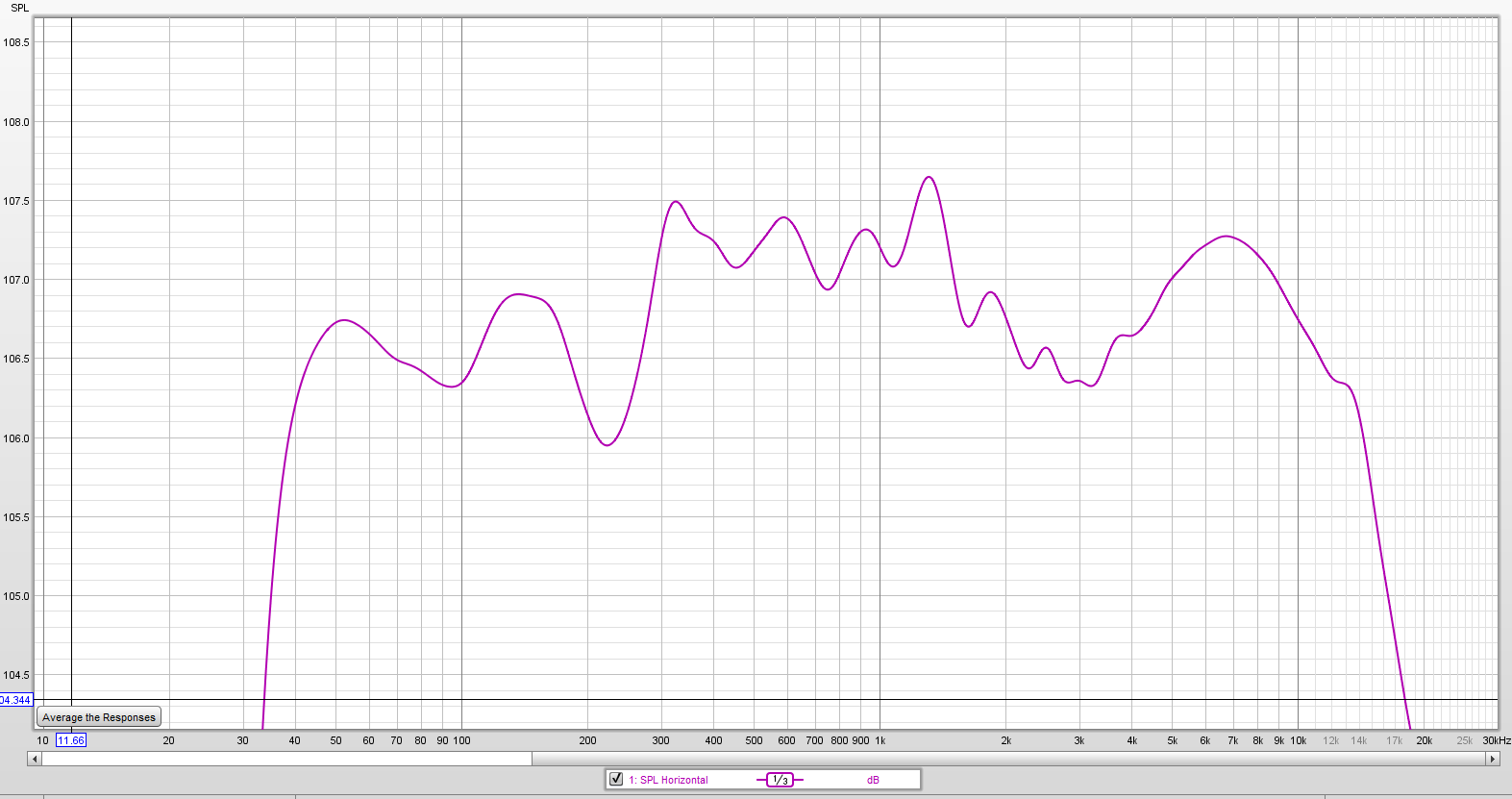
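For anyone who wants to check a trend line on their own exported data, here is a minimal sketch of an ordinary least-squares fit of level against log frequency, which gives a slope in dB per octave. The function name `fit_trend_db_per_octave` and the data are hypothetical. The numbers below are synthetic placeholders, not the monitor's measurements.

```python
import math

def fit_trend_db_per_octave(freqs_hz, levels_db):
    """Least-squares line through level (dB) versus log2(frequency).

    Returns (slope_db_per_octave, fitted_level_db_at_1khz).
    """
    # Work in octaves relative to 1 kHz so the intercept is the fitted level at 1 kHz.
    x = [math.log2(f / 1000.0) for f in freqs_hz]
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(levels_db) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, levels_db))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Synthetic placeholder data (assumption): a slight downward tilt plus ripple,
# sampled in 1/6-octave steps from 100 Hz to roughly 18 kHz.
freqs = [100.0 * 2 ** (i / 6) for i in range(46)]
levels = [85.0 - 0.2 * math.log2(f / 1000.0) + 0.5 * math.sin(3.0 * math.log2(f))
          for f in freqs]

slope, level_1k = fit_trend_db_per_octave(freqs, levels)
print(f"trend: {slope:+.2f} dB/octave, {level_1k:.1f} dB at 1 kHz")
```

Fitting against log2(frequency) rather than frequency in Hz keeps each octave equally weighted, which matches how the plots are drawn.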

Some might argue that the above is raw data and too harsh a presentation. So, here is the same data with 1/3-octave smoothing:

Wonder how many would flock to buy this speaker now?
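As a rough illustration of what that smoothing does, here is a minimal sketch, assuming "1/3" means 1/3 octave and assuming a plain rectangular window with power averaging. Measurement tools differ on both choices, so this is not necessarily how the plot above was produced. The function name and the data are hypothetical, and the data is synthetic.

```python
import math

def smooth_fractional_octave(freqs_hz, levels_db, fraction=3):
    """Smooth a magnitude response with a sliding 1/fraction-octave window.

    Each output point is the power average of every input point whose frequency
    lies within half a window either side of it on a log-frequency axis.
    """
    half_width = 2 ** (1.0 / (2 * fraction))  # frequency ratio spanning half the window
    smoothed = []
    for fc in freqs_hz:
        lo, hi = fc / half_width, fc * half_width
        # Convert dB to power before averaging, then back to dB.
        powers = [10 ** (lv / 10.0)
                  for f, lv in zip(freqs_hz, levels_db) if lo <= f <= hi]
        smoothed.append(10.0 * math.log10(sum(powers) / len(powers)))
    return smoothed

# Synthetic placeholder data (assumption): +/-1 dB of fine ripple around 85 dB,
# sampled in 1/24-octave steps from 100 Hz to 12.8 kHz.
freqs = [100.0 * 2 ** (i / 24) for i in range(169)]
raw = [85.0 + 1.0 * math.sin(40.0 * math.log2(f)) for f in freqs]

smoothed = smooth_fractional_octave(freqs, raw)
print(f"raw span: {max(raw) - min(raw):.2f} dB, "
      f"smoothed span: {max(smoothed) - min(smoothed):.2f} dB")
```

Ripple that is narrower than the window mostly averages out, while broader tilts and dips survive, which is why a smoothed curve can still look far from flat on a tight scale.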

It is not so pleasing to the eye any longer, but it is a much more revealing presentation than the usual scaling. If we start by presenting the data in a way that shows more of its true complexity, the technical consumer is forced to think more critically (or may realize that judging the speaker is not as simple as it was made to appear).
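To put a number on the scaling effect, here is a small sketch of the arithmetic: the share of the plot height that a given deviation occupies at each axis range. The 2 dB peak-to-peak figure is a hypothetical example (a nominal +/-1 dB response), not a value taken from the data above.

```python
def fraction_of_axis(deviation_db, axis_range_db):
    """Share of the vertical axis taken up by a peak-to-peak deviation."""
    return deviation_db / axis_range_db

deviation = 2.0  # hypothetical +/-1 dB response, i.e. 2 dB peak to peak
for axis_range in (50.0, 4.0):
    share = fraction_of_axis(deviation, axis_range)
    print(f"{axis_range:g} dB axis: a {deviation:g} dB swing fills "
          f"{share:.0%} of the plot height")
```

On a 50 dB axis that swing is 4% of the height, close to the thickness of the trace itself on a small plot. On a 4 dB axis it is half the plot.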

EDIT: I should note that any improvements may need to be vetted for their impact on the legacy speaker dataset.
