MaxwellsEq said:

Video compression is a great deal more aggressive than audio compression because the brain is easier to fool with video than with sound. Film is sampled 24 times every second; CD audio is sampled 44,100 times each second!

Video inside a TV studio runs at 270 Mbit/s for Standard Definition and 3 Gbit/s for HD. But you watch it on YouTube or via your STB at a handful of Mbit/s. These are compression ratios of several hundredfold.

Video and audio compression are surely way "more aggressive" than AD/DA. And nobody can say exactly what YouTube does, or did in 2010. The improvements between 2010 and 2019 are easily visible/audible though.

I have excellent technical knowledge of what YouTube was doing in 2010 and now. I have the advantage of being an engineer in both broadcasting and streaming worlds.

Please don't make this into a mystery, it's not. It's engineering, and engineers at YouTube, Netflix etc. frequently publish technical articles on how they do things and share this knowledge with their peers. There are only so many technologies available and only so many ways to build the engineering.

I will unpack the numbers.

1. Video

10 bits needed for each colour R G B = 30 bits per pixel.

HD has 1920 x 1080 for a frame = 2,073,600 pixels multiplied by 30 = 62,208,000 bits for a frame

For Progressive 50 frames per second = 50 x 62,208,000 = 3,110,400,000 bits every second

This is 3.11Gbit/s for uncompressed HD video. UHD is c 12Gbit/s!

Over the last few years, video from YouTube, Netflix et al. has used manifests to offer different qualities based on what your device and network can sustain. But in general, assume most non-UHD streams are between 3 and 5 Mbit/s for talking heads and between 5 and 15 or 20 Mbit/s for more action. Let's pick 10 Mbit/s.

So the 3,110,400,000 bit/s is reduced to 10,000,000 bit/s. This is a compression ratio of

311.
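For anyone who wants to check the sums, here is a minimal sketch of the arithmetic above. The function and variable names are my own, and the UHD figure assumes 3840 x 2160 at 50 frames per second with the same 30 bits per pixel, which is not spelled out in the post.

```python
# Uncompressed video bitrate, using the figures quoted in the post.
BITS_PER_PIXEL = 10 * 3  # 10 bits for each of R, G and B


def uncompressed_bps(width, height, fps, bits_per_pixel=BITS_PER_PIXEL):
    """Return the raw bitrate in bits per second for progressive video."""
    return width * height * bits_per_pixel * fps


hd_bps = uncompressed_bps(1920, 1080, 50)
# Assumption: UHD taken as 3840 x 2160 at 50p, same bit depth as HD.
uhd_bps = uncompressed_bps(3840, 2160, 50)
stream_bps = 10_000_000  # the 10 Mbit/s stream picked above

video_ratio = hd_bps / stream_bps

print(f"HD:  {hd_bps / 1e9:.2f} Gbit/s")   # HD:  3.11 Gbit/s
print(f"UHD: {uhd_bps / 1e9:.2f} Gbit/s")  # UHD: 12.44 Gbit/s
print(f"Compression ratio: {video_ratio:.0f}:1")  # Compression ratio: 311:1
```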

Perhaps it helps if I explain that this means 3.10 Gbit/s of information has been discarded from 3.11 Gbit/s of source data! And that is just one pass!

It's been known for decades that you should never run a lossy compression algorithm on an already compressed video signal. The algorithms above work by throwing away stuff your brain doesn't notice (for example, we don't need very high colour resolution, so we can throw a huge amount of it away).

BUT, the second time, the algorithm "assumes" there is information that can be thrown away, but that simply is no longer true. This time, stuff gets thrown away that you cannot do without. So looping a 3Gbit/s stream into a compressed stream at 10 Mbit/s, then doing it just once more will produce massive artefacts.

2. Sound

In sound, the same thing happens. A Red Book CD produces 44,100 samples per second, each with a bit depth of 16 (notice that pictures only have 10 bits per colour channel). There are 2 channels, so you need 1.411 Mbit/s. It's generally accepted that a 256 kbit/s MP4 stream is a good comparator with an uncompressed audio stream. This is only about

5.5x compression!
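The same sum for audio, as a quick sketch (variable names are mine). With the figures above it comes out at about 5.5:1, which is tiny next to the video ratio.

```python
# CD (Red Book) bitrate versus a 256 kbit/s lossy stream.
SAMPLE_RATE_HZ = 44_100
BIT_DEPTH = 16
CHANNELS = 2

cd_bps = SAMPLE_RATE_HZ * BIT_DEPTH * CHANNELS
lossy_bps = 256_000

audio_ratio = cd_bps / lossy_bps

print(f"CD: {cd_bps / 1e6:.3f} Mbit/s")               # CD: 1.411 Mbit/s
print(f"Compression ratio: {audio_ratio:.1f}:1")      # Compression ratio: 5.5:1
```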

Again, it's been known for years that you should never apply lossy compression to audio twice. For example, MP3s used in studios (rather than CDs or WAVs) sound wrong via compressed DAB or via compressed Internet streams!

SO, I would expect a couple of loops of YouTube serial compression to be heavily artefact-ed, because so much gets chucked away on the first compression, a second compression has nothing to work with so damages the image and sound.

BUT, if you are going uncompressed - to - uncompressed - to - uncompressed etc. I would expect almost no impact.

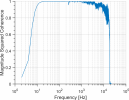

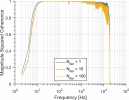

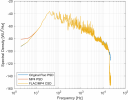

DA to AD to DA to AD should be fine for many hundreds of passes, assuming that both the DAC and ADC are linear to 20 bits or so. Gradually the result may sound different from the original, but the difference would NOT be digital artefacts as above. It would come from variations in how the least significant bits are interpreted, which might appear as changes to the noise floor.
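To give a rough feel for that last point, here is a toy simulation, not a model of any real converter. It assumes each DA to AD pass adds Gaussian analogue noise of 0.5 LSB RMS (a figure I have picked purely for illustration) before requantising to 16 bits, and it ignores nonlinearity, filtering and clock jitter. In this model the error behaves like a slowly rising noise floor, growing roughly with the square root of the number of passes.

```python
import math
import random


def da_ad_pass(samples, noise_lsb, rng):
    """One DA -> AD round trip: add analogue noise, requantise to 16-bit."""
    out = []
    for s in samples:
        v = round(s + rng.gauss(0.0, noise_lsb))
        out.append(max(-32768, min(32767, v)))  # clamp to the 16-bit range
    return out


def rms_error_lsb(a, b):
    """RMS difference between two sample lists, in 16-bit LSBs."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


rng = random.Random(0)  # fixed seed so the run is repeatable
# A 1 kHz sine at roughly half of full scale, sampled at 44.1 kHz.
original = [round(16000 * math.sin(2 * math.pi * 1000 * n / 44100))
            for n in range(2000)]

signal = original
errors = {}
for loop in range(1, 101):
    # Assumption: 0.5 LSB RMS of added noise per pass (illustrative only).
    signal = da_ad_pass(signal, 0.5, rng)
    if loop in (1, 10, 100):
        errors[loop] = rms_error_lsb(original, signal)

for loop, err in errors.items():
    print(f"after {loop:3d} passes: RMS error = {err:.2f} LSB")
```

Even after 100 passes the error in this sketch stays at a handful of LSBs out of 32,768, which is noise, not the blocky or swirly artefacts that a second lossy encode produces.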