> I agree - the results from the report look suspiciously like coin flips to me too.

A normal distribution for a null result? SHOCKING!


Serious Question: How can DAC's have a SOUND SIGNATURE if they measure as transparent? Are that many confused?

- Thread starter Willhelm_Scream

- Start date

- Joined

- Oct 10, 2020

- Messages

- 806

- Likes

- 2,638

> Granting the 5% was used vs. 1%, a result like 0.056%, (6/1000) chance of result being due to random chance is too low to be blown off.

Note that the values in the probabilities column of the table are not percentages, so 0.056 is actually 5.6%, i.e. 56/1000, and not 6/1000 as you suggested.

You can also easily check the calculations here (example for the 0.056 result you mention):

mediocrebutarrogant

Active Member

- Joined

- Feb 20, 2022

- Messages

- 127

- Likes

- 43

A DAC sending 1 Vrms and another sending 2 Vrms will sound different. Adjusting the pot to level match is not a solution, IMHO.

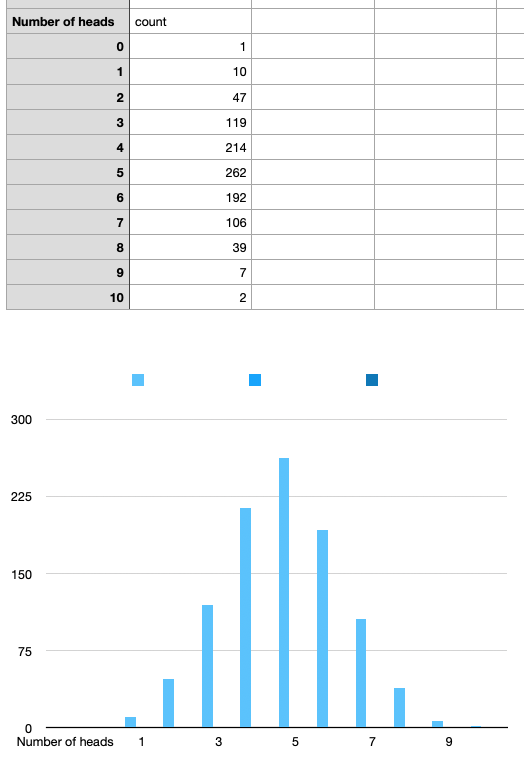

To get an idea, here is a spreadsheet simulation of a 10 coin toss repeated about 999 times. I had to run it about 3 times to get either an all heads or all tails (but then got 1 all tails and 2 all heads).

In an ABX test each of these would equate to a person getting it right 10/10 times (say heads) or wrong 10/10 times (tails), even if there was no difference. So over around 3 x 999 = 2997 runs, it was guessed correctly 10/10 (heads) 2 times.

So based on this data, about 0.067% of runs (2/2997) can produce 10/10 correct guesses by blind chance alone.
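For anyone who wants to repeat this without a spreadsheet, here is a minimal Python sketch. It assumes a fair coin stands in for a listener who is purely guessing; the function name `simulate_runs` and the seed are only illustrative and are not from the spreadsheet. It also prints the exact chance of 10/10, which is (1/2)^10 = 1/1024, or about 0.098%, so roughly 3 all-heads runs are expected in 2997.

```python
import random

def simulate_runs(n_runs, n_tosses=10, seed=0):
    """Count runs of n_tosses fair coin flips that are all heads or all tails."""
    rng = random.Random(seed)  # fixed seed only so the run is repeatable
    all_heads = all_tails = 0
    for _ in range(n_runs):
        heads = sum(rng.random() < 0.5 for _ in range(n_tosses))
        if heads == n_tosses:
            all_heads += 1
        elif heads == 0:
            all_tails += 1
    return all_heads, all_tails

# Exact chance of guessing 10/10 when there is no audible difference.
exact_p = 0.5 ** 10
expected_in_2997 = 2997 * exact_p

all_heads, all_tails = simulate_runs(2997)
print(f"exact P(10/10) = {exact_p:.3%}")
print(f"expected all-heads runs in 2997: {expected_in_2997:.2f}")
print(f"simulated: {all_heads} all heads, {all_tails} all tails")
```

The simulated counts will bounce around from seed to seed, which is the point: seeing 2 all-heads runs instead of the expected 3 or so is ordinary sampling noise.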


- Joined

- Oct 10, 2020

- Messages

- 806

- Likes

- 2,638

> A DAC sending 1 Vrms and another sending 2 Vrms will sound different. Adjusting the pot to level match is not a solution, IMHO.

Sure - nominal output level is a valid reason to select one device vs another, as it can be important for appropriate and optimal gain staging.

Ideally, the optimal output level of a DAC should be dictated by the input sensitivity of the next device in the chain, to avoid overload as well as sub-optimal SNR. So even from that perspective it makes sense to compare DACs at the same output level if all devices are supposed to be feeding the same input.

It is also very important to understand that 'louder' is typically perceived as 'better' to most people - even if the difference is slight. To avoid influence of this natural human bias, it is very important to level-match. That will tell us if the preference is only due to the loudness difference, or if there's any other difference in the sound signature. If the devices sound the same to us when precisely level-matched then we know the objective audible differences are down to output level differences (i.e. loudness) - and we can freely choose which device fits better with the rest of the system.
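To put a number on the 1 Vrms vs. 2 Vrms example, here is a small sketch of the level difference in decibels, using the standard voltage-ratio formula 20·log10(V1/V2). The function name and variables are illustrative only.

```python
import math

def level_difference_db(v_a, v_b):
    """Level of v_a relative to v_b in dB (voltage ratio)."""
    return 20 * math.log10(v_a / v_b)

# A 2 Vrms DAC compared with a 1 Vrms DAC.
diff_db = level_difference_db(2.0, 1.0)

# Linear gain to apply to the louder device to match the quieter one.
matching_gain = 10 ** (-diff_db / 20)

print(f"level difference: {diff_db:.2f} dB")
print(f"gain needed on the 2 Vrms device: {matching_gain:.2f}x")
```

A doubling of voltage is about 6 dB, which is far more than a slight loudness difference, so an unmatched comparison of these two devices mostly tests loudness.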

Robin L

Master Contributor

> Wearisome issue for sure, but the same-old.
>
> I'm not a scientist and I can't explain why ABX-style, blind tests would not refute subjective claims of sound differences. Seems they ought to, but there might be something about that testing method that, while it may prove that differences do exist, it can't certainly prove they don't, nor that under different listening conditions some people, at least, can hear those differences.
>
> Some might recall the famous testing reported in the January 1987 Stereo Review that compared a series of amplifiers. The general conclusion was that people cannot reliably tell the difference between amps. However, when you look at the detailed results, you can see it documented that some people were able to tell the difference between amps, in the case of some paired amps, at well above chance probability.
>
> My own subjective experience is that things that measure well do tend to sound the same -- or at least the differences are utterly insignificant. I replaced my Schiit Gungnir Multibit DAC with a Topping DX7s DAC/headphone unit because I could hear no significant difference -- this despite the fact that the Gungnir MB doesn't measure all that well. I replaced the DX7s with a D90 because the latter has slightly better measurements, but frankly I can't really hear any difference.
>
> OTOH, I know a member on another forum who has decades of experience listening to many different amps and DACs. I have come to respect his wholly subjective impressions far more than most. He replaced his Topping D90 with the RME ADI and claims that they sound different and he prefers the latter -- but I'm not rushing out to do the same switch.
>
> View attachment 190139

I don't see any results here that appear to be anything but random. The highest rate of correct answers is 65%, and that is not enough to be statistically significant.

Pdxwayne

Major Contributor

- Joined

- Sep 15, 2020

- Messages

- 3,219

- Likes

- 1,172

> To get an idea, here is a spreadsheet simulation of a 10 coin toss repeated about 999 times. I had to run it about 3 times to get either an all heads or all tails (but then got 1 all tails and 2 all heads).
>
> In an ABX test each of these would equate to a person getting it right 10/10 times (say heads) and wrong 10/10 (tails) - even if there was no difference. So around 3 x 999 runs = 2997 runs, and 2 times it was guessed correctly 10/10 (heads)
>
> So based on this data, 0.066% of runs can generate 10/10 correct guessing even by blind chance.
>
> View attachment 190149

I see that the most significant one from post #3313 is 74/128.

Can you do another simulation like the one you did, but with 128 tosses per round instead of just 10? Repeat that for 100 rounds and share your results?

Thanks!
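A rough Python sketch of the requested simulation, for anyone curious. It assumes each of the 128 trials is an independent 50/50 guess; `binom_tail`, the seed and the variable names are illustrative and not taken from any post in this thread. It computes the exact one-sided chance of scoring at least 74/128 by guessing, then simulates 100 rounds of 128 guesses and counts how many reach 74.

```python
import random
from math import comb

def binom_tail(k, n, p=0.5):
    """Exact P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

# Chance of at least 74 correct out of 128 when purely guessing.
p_74_of_128 = binom_tail(74, 128)

# 100 simulated rounds of 128 coin-flip guesses each.
rng = random.Random(1)  # fixed seed only for repeatability
scores = [sum(rng.random() < 0.5 for _ in range(128)) for _ in range(100)]
rounds_at_least_74 = sum(s >= 74 for s in scores)

print(f"exact P(>= 74/128 by guessing) = {p_74_of_128:.4f}")
print(f"best simulated round: {max(scores)}/128")
print(f"simulated rounds scoring >= 74: {rounds_at_least_74} of 100")
```

The exact tail comes out at a few percent, so a handful of guessing-only rounds out of 100 reaching 74/128 would not be surprising.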

> I see that the most significant one from post #3313 is 74/128.
>
> Can you do another simulation but each trial is 128 times instead of just 10 times, like what you did? Repeat that for 100 rounds and share your results?
>
> Thanks!

Why? This is a textbook normal distribution. If Gorgonzola doesn't understand that, he needs to do more studying rather than you tossing work at someone else which will be meaningless to him.

Pdxwayne

Major Contributor


> Why? This is a textbook normal distribution. If Gorgonzola doesn't understand that, he needs to do more studying rather than you tossing work at someone else which will be meaningless to him.

Haha, indeed... So at the end he will most likely find 5 out of 100 that do better.

Instead of arguing about this, how about we discuss why different DAC, amp and headphone combos did not give the same clues when running timing tests?

Would you please try to explain my findings in my timing tests?

See https://www.audiosciencereview.com/...-would-be-the-desired-clue.30752/post-1083079

Thanks!


Pdxwayne

Major Contributor


> Why? This is a textbook normal distribution. If Gorgonzola doesn't understand that, he needs to do more studying rather than you tossing work at someone else which will be meaningless to him.

Thinking about it more, 74/128 is the combined result of 8 participants. We don't know the breakdown of the results.

But assume that their results are similar. What is the probability that all 8 of them would score at least 9/16?

Well, very significant!

Screenshot from https://stattrek.com/online-calculator/binomial.aspx
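The same calculation can be sketched in Python instead of the online calculator. Everything here rests on the assumption stated above, namely that each of the 8 participants did 16 independent trials and every one of them scored at least 9/16; the actual breakdown is not known. `binom_tail` is an illustrative helper, not something from the linked site.

```python
from math import comb

def binom_tail(k, n, p=0.5):
    """Exact P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

# One guessing participant scoring at least 9 out of 16.
p_one = binom_tail(9, 16)

# All 8 independent guessing participants doing so (hypothetical scenario).
p_all_eight = p_one ** 8

print(f"P(one participant >= 9/16) = {p_one:.4f}")
print(f"P(all 8 participants >= 9/16) = {p_all_eight:.6f}")
```

A single participant reaches 9/16 by guessing about 40% of the time, so the small joint probability (roughly 0.07%) comes entirely from requiring all 8 to do it. If the real breakdown is a mix of, say, 12/16 and 7/16 scores, this number does not apply.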

> Note that the probabilities column in the table are not in percentages, so 0.056 is actually 5.6% i.e. 56/1000 and not 6/1000 as you suggested.
>
> You can also easily check the calculations here (example for the 0.056 result you mention):
>
> View attachment 190147

You are correct. However, a 5.6% probability of a chance result in a psychoacoustic test seems very low. I don't have the statistical knowledge to aggregate all the results to demonstrate whether or not this single result falls within a normal distribution having a mean of 50/50 correct for the study as a whole.

So I will accept, based on statistical expertise greater than my own, that the results of the study as a whole were random. However, there were six different amps involved in the study. I wonder whether more trials of specific pairs of the amps would produce less random results than the study as a whole? My guess is ... could be. The question for me has never been whether amps in the universe of amps tend to sound the same, but whether two specific amps (both operating properly) can sound different.

One thing I strongly suspect is that the amps in the pairs with the lowest probabilities of random guesses (Counterpoint/NAD, Futterman/Hafler, Futterman/Pioneers) would measure quite differently from each other. At some point amps (or DACs) that measure differently are going to sound different to some portion of listeners.


> I don't see any results here that appear to be anything but random. Highest rate of correct answers is 65% and that is not enough to be statistically significant.

Hmm ... well, I won't dispute with you statisticians; however, I wonder how the participants feel about their guesses?

Robin L

Master Contributor

> Hmm ... well, I won't dispute with you statisticians; however, I wonder how the participants feel about their guesses?

How they feel doesn't really matter; the issue is audibility. And the test results indicate close to random guesses. Yeah, people's feelings get hurt when they find out that the $40,000 DAC performs as well as a $9 dongle. Hurt feelings all around, that's what ASR is all about.

billyjoebob

Senior Member

- Joined

- Oct 9, 2021

- Messages

- 307

- Likes

- 118

Was listener fatigue ever considered in any of these tests?

Pdxwayne

Major Contributor


> How they feel doesn't really matter, the issue is audibility. And the test results indicate close to random guesses. Yeah, people's feelings get hurt when they find out that the $40,000 DAC performs as well as a $9 dongle. Hurt feelings all around, that's what ASR is all about.

Do you have anything more to say about this one? In the end, the OP and his friend could pass 7 out of 8 rounds once the comparison was reduced to 2 DACs instead of 4 at the same time.

DAC ABX shootout - unable to distinguish between 10$ and 15k$

Hello All, First of all, thank you for the forum contributors who are sharing their knowledge here. I started reading ASR two month ago and just can't get enough of it. I am sharing my experience with my first ABX testing. Last Friday me, together with a friend performed a double blind test on...

www.audiosciencereview.com
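As a sanity check on what "7 out of 8" means statistically, here is a minimal sketch. It assumes (my assumption, not something stated in the linked thread) that each round counts as one independent 50/50 guess; if a round consisted of several trials, the numbers would differ. `binom_tail` is an illustrative helper.

```python
from math import comb

def binom_tail(k, n, p=0.5):
    """Exact P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

# Chance of passing at least 7 of 8 rounds by pure guessing.
p_7_of_8 = binom_tail(7, 8)
print(f"P(at least 7/8 by guessing) = {p_7_of_8:.4f}")
```

Under that assumption the chance is 9/256, about 3.5%: below the usual 5% threshold, but from only 8 rounds, so a repeat would be worth more than an argument about it.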


> Do you have anything more to say about this one? At the end, OP and his friend can pass 7 out of 8 rounds, once comparisons reduced to 2 DAC instead of 4.
>
> DAC ABX shootout - unable to distinguish between 10$ and 15k$
>
> Hello All, First of all, thank you for the forum contributors who are sharing their knowledge here. I started reading ASR two month ago and just can't get enough of it. I am sharing my experience with my first ABX testing. Last Friday me, together with a friend performed a double blind test on...
>
> www.audiosciencereview.com

They didn't level match properly though. Used a mic.

Pdxwayne

Major Contributor


> They didn't level match properly though. Used a mic.

Please read through to the end. Beginning was mic. End was matching voltages with scope.

Robin L

Master Contributor

> Please read through to the end. Beginning was mic. End was matching voltages with scope.

"Silly enough I can 100% reliably say which one is better when I see what system is connected."

Anyway, I didn't find the statistical nugget you cited. Do you have a post#?

Pdxwayne

Major Contributor


> "Silly enough I can 100% reliably say which one is better when I see what system is connected."
>
> Anyway, I didn't find the statistical nugget you cited. Do you have a post#?

What you quoted is from the first post. After that, many members provided testing suggestions, and the OP improved his testing method and reran the tests a few days later.

The statistics of the second round were described to me in this post:

DAC ABX shootout - unable to distinguish between 10$ and 15k$

I know its probably me, but I cannot fathom an apple dongle sounding the same as any Chord or Topping DAC. Yet... it does. The performance of that little thing is astonishingly good. Not state of the art measurement, but damn excellent measurements. When I did a comparison to an RME ADI-2 Pro...

www.audiosciencereview.com


Please ask more questions to the OP in that thread if you need further information.

; )

Anyway, I think the difference is likely due to the DAC+amp+headphone combinations used. I also observed differences between combos when doing online blind timing tests. You can see my chart here:

DAC and amp combos did not give same clues when running online blind tests. Why? What would be the desired clue?

When running online blind timing tests yesterday, I heard different clues when using multiple combination of dac and amps. I wonder why. I thought, as long as I am using transparent DAC and amp, I can mix and match them and any combo will sound the same. But not this case. Is it okay? More...

www.audiosciencereview.com


Robin L

Master Contributor

> What you quoted is from first post. After that, many members provided testing suggestions and OP improved his testing method and rerun tests a few days later.
>
> The statistics of second rounds were described to me at this post:
>
> DAC ABX shootout - unable to distinguish between 10$ and 15k$
>
> I know its probably me, but I cannot fathom an apple dongle sounding the same as any Chord or Topping DAC. Yet... it does. The performance of that little thing is astonishingly good. Not state of the art measurement, but damn excellent measurements. When I did a comparison to an RME ADI-2 Pro...
>
> www.audiosciencereview.com
>
> Please ask more questions to the OP in that thread if you need further information.
>
> ; )
>
> Anyway, I think the difference likely due to DAC+amp+headphones combinations used. I also observed differences with different combo when doing online blind timing tests. You can see my chart here:
>
> DAC and amp combos did not give same clues when running online blind tests. Why? What would be the desired clue?
>
> When running online blind timing tests yesterday, I heard different clues when using multiple combination of dac and amps. I wonder why. I thought, as long as I am using transparent DAC and amp, I can mix and match them and any combo will sound the same. But not this case. Is it okay? More...
>
> www.audiosciencereview.com

Links don't work, just give me the post #s on this thread, ok?
