I don't think so.

Funny... Amir and staticV3 are arguing the exact same point to each other, but from different perspectives.

Basically, amplifiers are both voltage- and current-limited (staticV3 sometimes posts nice plots about this aspect).
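A minimal sketch of what "voltage and current limited" means for a given load, assuming the amp has a fixed RMS voltage ceiling and a fixed RMS current ceiling (both numbers below are hypothetical). Ohm's law turns the current ceiling into a voltage ceiling (V = I × R), and whichever is lower wins:

```python
def max_output_voltage(v_limit_rms: float, i_limit_rms: float, load_ohms: float) -> float:
    """Largest RMS voltage the amp can hold across a load of `load_ohms`.

    The current limit is converted to a voltage limit with Ohm's law (V = I * R);
    the lower of the two limits is what the load actually sees.
    """
    return min(v_limit_rms, i_limit_rms * load_ohms)


def limiting_factor(v_limit_rms: float, i_limit_rms: float, load_ohms: float) -> str:
    """Report which limit the amp runs into first with this load."""
    return "current" if i_limit_rms * load_ohms < v_limit_rms else "voltage"


# Hypothetical amp: 2 V RMS maximum swing, 100 mA RMS maximum current.
for z in (16.0, 32.0, 300.0):
    v = max_output_voltage(2.0, 0.1, z)
    print(f"{z:>5.0f} ohm: {v:.2f} V max ({limiting_factor(2.0, 0.1, z)}-limited)")
```

With these made-up limits, anything below 2 V / 0.1 A = 20 Ω is current-limited and anything above it is voltage-limited.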

Drivers can have different impedances, different impedance curves (over a frequency range) and different sensitivities.

Effectivity is determined by the sensitivity and impedance (which can differ per frequency band).
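To make the sensitivity/impedance link concrete, here is a sketch of the usual conversion between a dB SPL/mW sensitivity and a dB SPL/V figure (how loud the headphone gets per volt). It assumes a purely resistive load: 1 V RMS across Z ohms dissipates 1/Z W, i.e. 1000/Z mW. The headphones in the example are hypothetical.

```python
import math


def db_spl_per_volt(sens_db_per_mw: float, impedance_ohms: float) -> float:
    """Convert a dB SPL/mW sensitivity into dB SPL/V at a given impedance.

    1 V RMS across `impedance_ohms` dissipates 1000/Z mW, so the per-volt
    figure is the per-milliwatt figure plus 10*log10(1000/Z).
    """
    return sens_db_per_mw + 10.0 * math.log10(1000.0 / impedance_ohms)


# Two hypothetical headphones with the same 100 dB SPL/mW sensitivity:
for z in (32.0, 300.0):
    print(f"{z:>5.0f} ohm: {db_spl_per_volt(100.0, z):.1f} dB SPL/V")
```

Same per-milliwatt sensitivity, yet roughly 115 vs 105 dB SPL/V. If you plug in the impedance at a particular frequency (and the per-milliwatt figure at that same frequency), you get the per-volt figure in that band, which is why it can differ per frequency band.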

Most amplifiers show increased distortion into lower impedances. So... with the same sensitivity (but different effectivity), higher-impedance headphones usually have the advantage. They also draw less power, which, when playing really loud, could extend how long a battery-fed device runs on one charge.
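The power point follows directly from Ohm's law. A minimal sketch, assuming the same drive voltage into both headphones and treating each as a plain resistance (the values are hypothetical; it also ignores the amp's own idle consumption, which can matter just as much for battery life):

```python
def load_current_and_power(v_rms: float, impedance_ohms: float) -> tuple[float, float]:
    """Current (A) and power (W) drawn by a resistive load at a given RMS voltage."""
    current = v_rms / impedance_ohms  # Ohm's law: I = V / Z
    power = v_rms * current           # P = V * I = V^2 / Z
    return current, power


# Same 1 V RMS drive into two hypothetical loads:
for z in (32.0, 300.0):
    i, p = load_current_and_power(1.0, z)
    print(f"{z:>5.0f} ohm: {i * 1000:.2f} mA, {p * 1000:.2f} mW")
```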

When the output is voltage-limited, only a more sensitive headphone can play louder. Those are often also low-impedance, so there's that.
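A sketch of the voltage-limited case, assuming sensitivity is given in dB SPL/V and the headphone stays linear up to that level. With the voltage ceiling fixed, the sensitivity term is the only thing left that can raise the maximum SPL. The 0.5 V ceiling and both sensitivities are hypothetical.

```python
import math


def max_spl(sens_db_per_v: float, v_max_rms: float) -> float:
    """Highest SPL reachable when the source runs out of voltage at v_max_rms.

    SPL scales with 20*log10 of the drive voltage relative to the 1 V
    reference used by a dB SPL/V sensitivity figure.
    """
    return sens_db_per_v + 20.0 * math.log10(v_max_rms)


# Hypothetical voltage-limited source: 0.5 V RMS maximum.
for name, sens in (("more sensitive", 115.0), ("less sensitive", 100.0)):
    print(f"{name}: {max_spl(sens, 0.5):.1f} dB SPL")
```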

The only thing [staticV3 & me] and [Amir & Zolall] agree on is Ohm's law, but that does not mean we are arguing the exact same point to each other.

What [staticV3 & me] are talking about, and applying Ohm's law to, is:

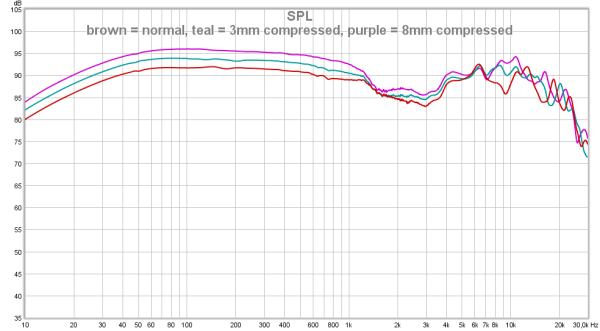

TOPIC A [the effect of different impedances at different frequencies within the same headphone + amp setup].
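A minimal sketch of TOPIC A, assuming the usual model where the amp's output impedance and the headphone form a voltage divider, V_hp / V_src = Z_hp / (Z_hp + Z_out), and that the headphone's SPL follows the voltage across its terminals. The impedance curve and both output impedances are hypothetical, and the curve is entered as plain magnitudes (a resistive simplification; the function also accepts complex impedances).

```python
import math


def level_change_db(z_headphone: complex, z_out: float) -> float:
    """dB change of the voltage across the headphone versus an ideal 0-ohm source.

    Output impedance and headphone form a series voltage divider:
    V_hp / V_src = Z_hp / (Z_hp + Z_out).
    """
    return 20.0 * math.log10(abs(z_headphone / (z_headphone + z_out)))


def response_deviation_db(curve: dict[float, complex], z_out: float, ref_hz: float) -> dict[float, float]:
    """Frequency-response change caused by z_out, normalised to ref_hz."""
    ref = level_change_db(curve[ref_hz], z_out)
    return {f: level_change_db(z, z_out) - ref for f, z in curve.items()}


# Hypothetical impedance of ONE headphone at a few frequencies (ohms),
# with a bass resonance peak, treated as purely resistive for simplicity.
curve = {20.0: 120.0, 100.0: 60.0, 1000.0: 32.0, 10000.0: 40.0}

for z_out in (0.1, 10.0):  # hypothetical amp output impedances
    dev = response_deviation_db(curve, z_out, ref_hz=1000.0)
    print(f"Z_out = {z_out} ohm: " + ", ".join(f"{f:g} Hz {d:+.2f} dB" for f, d in dev.items()))
```

Same headphone, same amp: with 10 Ω output impedance the 20 Hz region comes out about 1.7 dB louder relative to 1 kHz, while with 0.1 Ω the deviation stays within a few hundredths of a dB.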

What [Amir & Zolall] are talking about is:

TOPIC B [driving different headphones with different amps].
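For contrast, a sketch of TOPIC B: different headphones on different amps. It assumes one nominal impedance and one dB SPL/V sensitivity per headphone, plus a voltage and current ceiling per amp; all names and numbers are hypothetical. Because it uses a single nominal impedance, it says nothing about how a headphone's impedance curve shapes its response with a given amp.

```python
from dataclasses import dataclass


@dataclass
class Headphone:
    name: str
    impedance_ohms: float  # nominal impedance
    sens_db_per_v: float   # dB SPL at 1 V RMS


@dataclass
class Amp:
    name: str
    v_max_rms: float       # voltage ceiling, volts RMS
    i_max_rms: float       # current ceiling, amps RMS


def required_drive(hp: Headphone, target_spl: float) -> tuple[float, float]:
    """RMS voltage and current needed to reach target_spl with this headphone."""
    volts = 10.0 ** ((target_spl - hp.sens_db_per_v) / 20.0)
    return volts, volts / hp.impedance_ohms  # Ohm's law for the current


def can_drive(amp: Amp, hp: Headphone, target_spl: float) -> bool:
    """True if neither the amp's voltage nor its current ceiling is exceeded."""
    volts, amps = required_drive(hp, target_spl)
    return volts <= amp.v_max_rms and amps <= amp.i_max_rms


headphones = [Headphone("low-Z, sensitive", 32.0, 115.0),
              Headphone("high-Z, less sensitive", 300.0, 100.0)]
amps = [Amp("amp P", 2.0, 0.2), Amp("amp Q", 8.0, 0.015)]

for hp in headphones:
    v, i = required_drive(hp, 110.0)
    for amp in amps:
        verdict = "OK" if can_drive(amp, hp, 110.0) else "not enough"
        print(f"{hp.name} on {amp.name}: needs {v:.2f} V / {i * 1000:.1f} mA -> {verdict}")
```

With these made-up numbers each amp fails a different headphone (P runs out of voltage, Q runs out of current), which is a pairing question, not an impedance-curve question.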

The impedance curve of a specific headphone, which is what the original question was about, obviously relates to TOPIC A.

The fallacy in question comes from confusing TOPIC A with TOPIC B.

But TOPIC B, which is totally irrelevant here, keeps being brought up, either out of continued confusion or to confuse readers and cover up the fallacy (though I don't want to think that way).

Again, please keep in mind that the impedance curve of a specific headphone relates to TOPIC A, not TOPIC B.

I don't think I can do any better to make it clearer.