MQA creator Bob Stuart answers questions.

- Thread starter Tks

- Start date

- Joined

- Feb 23, 2016

- Messages

- 20,970

- Likes

- 38,112

Do you have any experience using reel to reel tape machines?

I learned about it back in the 1980s: https://en.wikipedia.org/wiki/Tape_bias. Contrary to popular opinion, the gap of the recording head is not the limiting factor; the gradient of the magnetic field at the trailing edge of the recording head is. Yes, nearly perfect azimuth is important, and setting it on a machine without a servo adjuster could be an unpleasant chore.

The gap at the playback head, yes, is a limiting factor. We replaced worn-out playback heads much more often than the recording heads. I recall the laser-cut gaps being on the order of several micrometers on high-end machines back then, and https://ccrma.stanford.edu/courses/192a/Lecture7-Magnetic_recording.pdf confirms that.

I'm talking about serious machines from the 1970s, like the Ampex ATR-100. See page 32 of https://www.americanradiohistory.com/Archive-DB-Magazine/70s/DB-1976-12.pdf, and page 36 of https://www.americanradiohistory.com/Archive-DB-Magazine/70s/DB-1977-02.pdf. Note the bias frequency: 432 kHz. Note the servo motor power: 1/4 HP. Note the discussion about phase coherency and testing with square waves.

ATR-100 SNR spec is not entirely clear: I've seen 68, 72, and 80 dB at 30 ips (depending on tape material and weighting?). Was this that much worse than the PCM decks of that era, such as 13-bit Sony PCM-1: http://www.thevintageknob.org/sony-PCM-1.html? In its marketing materials, Sony only dared to compare the PCM-1 with a 15 ips reel-to-reel.

Moving on to the 1980s, consider the Studer A820: http://www.theaudioarchive.com/TAA_Tape_Studer_A820.htm. SNR up to 77 dB (A-weighted). For a while, those monsters were still competitive with the direct PCM recorders. Yes, as I mentioned in the previous post, distortions and noise were always their weak spots. Yet were they as bad as those of the consumer-grade tape recorders? Clearly not.

What gets me is people not getting that analog SNR is not the same as digital SNR. In the analog case, as long as the noise floor at -77 .. -68 dB is masked by music, the music itself doesn't really have the amplitude resolution of just 13 or 14 bits as so many people think. It is analog: you can digitize it with 24 bits and this will be meaningful.
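For reference, the "13 or 14 bits" reading that the paragraph above pushes back on comes from the textbook relation between the SNR of an ideal quantizer and its bit depth, SNR = 6.02·N + 1.76 dB for a full-scale sine. A minimal sketch of that conversion follows; the function name is made up for illustration, and the code says nothing about whether the comparison is perceptually meaningful for an analog medium.

```python
def equivalent_bits(snr_db: float) -> float:
    """Bit depth N of an ideal quantizer whose full-scale-sine SNR equals snr_db.

    Uses the textbook relation SNR = 6.02 * N + 1.76 dB (an idealization,
    not a measurement of any tape machine).
    """
    return (snr_db - 1.76) / 6.02


if __name__ == "__main__":
    # SNR figures quoted in the thread for the ATR-100 and the A820.
    for snr in (68, 72, 77, 80):
        print(f"{snr} dB -> about {equivalent_bits(snr):.1f} bits")
```

Weighting curves and measurement bandwidth shift these figures by several dB, so the result is only a rough equivalence.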

Similarly with timing. Yes, electronics band-limits the signal, typically to 22 KHz on high-end machines. Yet again, being an analog system, it doesn't need the 10-20 ms for the dithered PCM samples to catch up with the true signal value with a precision of ~0.2 LSB, after a signal's component "jumps": what's recorded on the tape just "jumps" too, straight to a very close approximation of the true value.

Of course, if you just plug the output of a reel-to-reel deck into an ADC and push the play and record buttons, you are going to record the hiss on silent intervals. Gate it out, digitally if you must. The real-life music's dynamic range rarely exceeds 45 dB anyway. IMHO, 192/24 PCM carefully captured from a high-end analog tape master can be better sounding than a CD.

Theorem. You keep using that word. I do not think it means what you think it means.

And seriously, I don't think you have a good grasp of Fourier or Shannon, and until such a time that you do, you're still going to be all over the place with irrelevancies.

FWIW: Theorem and theory.

More or less what's being said here:

The solution for you is really simple. Play back your digital recordings (the ones that have a higher S/N ratio from the studio recording) and mix in some analog noise at around -70dB, which 'masks' the things you seem to worry about.

http://www.aes.org/aeshc/docs/3mtape/printthrough.pdf

There isn't an analog recording tape made that doesn't suffer from print through...

...Signal-to-print may be the constant that measures print-through potential, but the real print-through that makes you cringe is measured by the print-to-noise ratio. A tape with a poor signal-to-noise ratio will actually make you cringe less, because the noise on that tape will mask the print through you would otherwise hear. In other words if the signal-to-noise ratio is lower than the signal-to-print ratio, you would not hear the print through. However, you will definitely hear the noise.

The print-through is still there, of course, but buried in noise so it can't be measured. The assumption is that it therefore isn't there. But of course it *is* there, affecting what signal and timing emerges above the layer of supposedly pure, statistically neutral noise that supposedly isn't resulting in any systemic timing errors.

Nice demo above...

Yes, this dither convergence thing might be real in the sense that it can be analysed mathematically (as I was saying earlier, you can keep zooming in until the errors fill the screen unlike analogue which just isn't analysable that way) but the size of the problem is so small that even if you boost it by 60dB without the masking of the music it still can't be heard. It's just a question of getting things in proportion - which the zoomed-in view of theoretical digital errors fails to do.
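To put the "dither convergence" idea in proportion, here is a toy sketch under loud assumptions: a constant input value, TPDF dither of ±1 LSB peak, and plain averaging as a stand-in for whatever smoothing the ear or a filter performs. It is not a model of any real converter, and all names are hypothetical. It only shows that the averaged error of dithered samples shrinks as more samples are included.

```python
import random


def dithered_quantize(value_lsb: float, rng: random.Random) -> int:
    """Round to the nearest integer LSB after adding TPDF dither (+/-1 LSB peak)."""
    dither = rng.random() - rng.random()  # triangular PDF on (-1, 1)
    return round(value_lsb + dither)


def mean_error(value_lsb: float, n: int, seed: int = 0) -> float:
    """Average of n dithered samples minus the true value, in LSB."""
    rng = random.Random(seed)
    total = sum(dithered_quantize(value_lsb, rng) for _ in range(n))
    return total / n - value_lsb


if __name__ == "__main__":
    # 441 samples is 10 ms at 44.1 kHz; 44100 samples is one second.
    for n in (1, 441, 44100):
        print(f"n={n:6d}  mean error = {mean_error(0.3, n):+.4f} LSB")
```

The per-sample error has a standard deviation of about 0.5 LSB with this dither, so the averaged error falls roughly as 0.5/sqrt(n) LSB.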

What about that quote I linked to earlier? Is it correct in what it is saying?

Sounds like modulated noise, the very worst thing imaginable in a digital system - apparently. What effect does this have on timing?

Yes, what you are saying is correct. As is what RayDunzl said, and Blumlein 88, and Amir, which all repeated what I've been saying all along: analog has more distortions and noise than digital. Should I agree with you all about "way more distortions and noise"? Fine, I agree.

But what doesn't the analog have that the 44/16 has? Such a rigid informational bandwidth limitation! The analog spatial resolution goes down to micrometers on physical media, which translates to microseconds and microvolts at the output. If you calculate to what bit depth and sampling rate these resolutions correspond, and then multiply them, it could be a ginormous number of bits per second.

Some people simply prefer this tradeoff: a nearly-perfect tracking of the analog signal, unfortunately burdened with baggage of distortions and noise. My hypothesis is that this could be a rational explanation of why RTR and LP didn't yet follow the way of NTSC. The oft-cited alternative explanation - these people are stupid, and thus let themselves be fooled by unscrupulous marketers and salesmen - doesn't satisfy me.

Hmm. You seem to be saying that the noise and distortion has 'infinite' resolution, therefore it's benign. But if it's modulated noise, distortion or print-through etc. then just because it has 'infinite' resolution doesn't mean it isn't producing genuine, repeatable, signal-dependent timing errors (and all the other errors) that supposedly destroy the sense of space, etc. It's just that there isn't a finite fundamental, theoretical basis to its 'resolution'.

I don't buy this idea that analogue systems have evolved to make them "perceptually transparent" despite their obvious shortcomings. Analogue systems were developed by people watching moving coil meters, playing the same specs game as manufacturers do today. In order for there to be evolution based on pure perception, there would have to be feedback from Golden-eared listeners that resulted in changes to lathe settings, coil winding, metal composition, etc. that didn't result in better conventional specs or prosaic improvements like easier maintainability. If you can show how that happened, I would be interested.

solderdude

Grand Contributor

The analog spatial resolution goes down to micrometers on physical media, which translates to microseconds and microvolts at the output. If you calculate to what bit depth and sampling rate these resolutions correspond, and then multiply them, it could be a ginormous number of bits per second.

So you think 24-bit depth is not enough, and neither is 96kHz (or even 192kHz), which even with compression algorithms like FLAC already means ginormous bitrates?

You have obviously done the math (magnetic particles on tape and vinyl grains), so what would you, considering your new paradigm, calculate is needed (bitrate, bit depth, sampling method/frequency)?

It seems like you have been pondering/reading/examining/gathering evidence for this over many, many years. You MUST have a theory by now and have come up with minimal requirements/specs to fit in your paradigm.

It seems to me that MQA has a secret that no one can critically recognise or identify. Whether it is correct or not is irrelevant in terms of current internet charges. MQA is dead on download cost vs licensing cost.

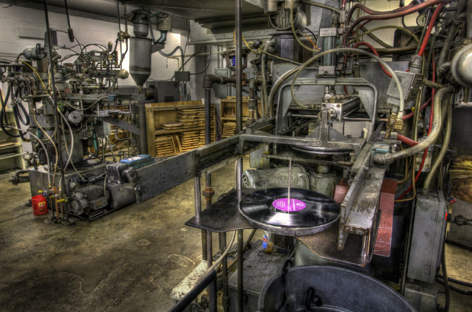

The reality of analogue audio:

And tape machines are on the same continuum: layer upon layer of non-random effects from saturation/hysteresis/drop-out/print-through/oxidised contacts/imperfect erasure/alignment/hum/magnetisation/dirt. To think it's pure benign Gaussian noise of infinite resolution is surely wishful thinking.

The analog spatial resolution goes down to micrometers on physical media, which translates to microseconds and microvolts at the output. If you calculate to what bit depth and sampling rate these resolutions correspond, and then multiply them, it could be a ginormous number of bits per second.

Microseconds timing is easily bested by 44/16 which encodes to below a microsecond resolution, despite your protestations. Increase this to 44/24 and the resolution goes to well below the level of any analog media, below a picosecond range.
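One common back-of-envelope way to arrive at figures like these (an assumption here, since the post does not say which derivation it relies on) is to ask how small a time shift of a full-scale sine changes a sample by one LSB at the steepest point of the waveform. The sketch below uses hypothetical names and ignores dither, which in practice lets timing be resolved below the one-LSB step.

```python
import math


def min_time_shift(bits: int, freq_hz: float) -> float:
    """Smallest time shift (seconds) of a full-scale sine at freq_hz that
    changes a sample by 1 LSB at the zero crossing.

    A full-scale sine has amplitude 2**(bits - 1) LSB, so its steepest slope
    is 2*pi*freq_hz*2**(bits - 1) LSB per second.
    """
    max_slope = 2 * math.pi * freq_hz * 2 ** (bits - 1)
    return 1.0 / max_slope


if __name__ == "__main__":
    for bits in (16, 24):
        dt = min_time_shift(bits, 22050.0)
        print(f"{bits}-bit, 22.05 kHz sine: {dt * 1e12:.2f} ps")
```

Under these assumptions the 16-bit figure comes out in the hundreds of picoseconds and the 24-bit figure under one picosecond, both far below a microsecond.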

solderdude

Grand Contributor

It seems to me that MQA has a secret that no one can critically recognise or identify. Whether it is correct or not is irrelevant in terms of current internet charges. MQA is dead on download cost vs licensing cost.

Perhaps as long as there are believers, customers and the money keeps rolling in it won't be dying until the next best format comes along.

Wow and flutter are not microsecond-level effects. And they aren't benign.

Apologies to other members for not quoting some of your questions. I will do my best to answer them all as well, in the course of answering selected questions. Otherwise the larger conversation gets fragmented and thus not easy to follow.

This morning I put together three things:

(A) My experiences with tape recorders. I haven't thought about this in a while, but thanks to Blumlein 88, I "went back in time" and recalled the long chain of magnetic machines I owned, starting with the first one I disassembled, at the age of 11 (after being put back together, it would play, but wouldn't properly record anymore - that's how I learned that the bias generator is an important part).

(B) My own experiences from the past, and the current seemingly ridiculous infatuation of certain audiophile circles with vacuum tubes and transformers. Exactly the same vacuum tubes and precision-made transformers that for me were associated with the electronics junkyards of the 1980s, overflowing with audio and TV hardware that nobody wanted to buy anymore, new or used, even for pennies. As of today, some vacuum tube models have been produced, without noticeable changes other than packaging and price, at the same factories, for ~70 years. What the heck!

(C) The buying public's seemingly insatiable hunger for portable Bluetooth speakers. Dozens of manufacturers, hundreds of models, breakneck pace of innovation. But I could find only two models, one each from two niche manufacturers, that I could consider somewhat hi-fi. The rest - comically yet unabashedly distorting audio junk, per objective measurements. How can the people who buy them even listen to them without flinching? Why did the upscale boutiques in San Francisco switch en masse from higher-end Bose systems to little JBL Bluetooth boom-barrels?

So here comes the insight. What is common between (A),(B), and (C)? Gross distortions! In (A), they are mostly odd-harmonics, as Cosmik so eloquently reminded me. In (B), they are mostly even harmonics. In (C), they are all harmonics, carefully crafted to induce, via nonlinearities of transducers, and aided by DSP algorithms, the Missing Fundamental effect (https://en.wikipedia.org/wiki/Missing_fundamental).
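The odd/even distinction above has a simple mathematical core that can be checked numerically: a symmetric nonlinearity (here a cubic term) adds only odd harmonics to a sine, while an asymmetric one (here a square term) adds even harmonics. The sketch below is a toy with arbitrary coefficients and made-up names; it does not model tape, tubes, or speakers, and the attribution of each kind of distortion to particular gear remains the poster's claim.

```python
import math


def harmonic_amplitude(samples, k):
    """Amplitude of the k-th harmonic of one exactly periodic cycle (single-bin DFT)."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n


N = 256
sine = [math.sin(2 * math.pi * i / N) for i in range(N)]
symmetric = [x + 0.1 * x ** 3 for x in sine]   # odd-order term only
asymmetric = [x + 0.1 * x ** 2 for x in sine]  # even-order term only

if __name__ == "__main__":
    for name, signal in (("symmetric", symmetric), ("asymmetric", asymmetric)):
        h2 = harmonic_amplitude(signal, 2)
        h3 = harmonic_amplitude(signal, 3)
        print(f"{name:10s}  2nd harmonic = {h2:.4f}  3rd harmonic = {h3:.4f}")
```

With these coefficients the cubic case shows a third harmonic and essentially no second, and the square case shows the reverse.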

Another hint for me was the perplexingly convoluted designs of some of the early transistor amplifiers, which were replacing in RTR and LP gear the still-fresh-in-memory vacuum-tube ones. Back then, I was thinking: "Seriously, fourteen stages of amplification? With diodes in a local feedback loop? What other than massive distortions are you going to get out from this?". But that was precisely the point! Systems as a whole did sound good.

If you put (A) and (B) together, you'll get (C). Odd harmonics + Even harmonics = All harmonics = illusion of a deep bass + perceptually arguably benign timbral deviations. In other words, there are two paths to perceptual high fidelity:

(1) Make all the distortions in the audio delivery chain vanishingly small. That's the path that the professional community, and part of the audiophile community, has taken. It is not simple, it is expensive, yet it is universal for all genres of music, and - I agree with Cosmik - easier for scientists and engineers to understand, model, and measure. 192/24 PCM and its compressed variations, in combination with seemingly unreasonably low THD and IMD at every step, relentlessly chased down by investigators like Amir, is the contemporary practical embodiment of this approach.

(2) Keep adding distortions until they perceptually cancel each other. The majority of consumer audio gear vendors, and another part of the audiophile community, took this turn. Despite being cheaper and easier to achieve as a first approximation, it is not universal in regard to genres of music, is very hard to model and measure, and keeping perceptual improvements coming gets prohibitively expensive, especially in terms of tinkering time spent, after a certain point. Nevertheless, the boutique owners seem happy playing new age and moderately paced urban contemporary on that gear.

The framework described above helps explain the mystery behind the analog vs digital controversy, still raging after all these decades. CD was one of the very first generations of products on path (1) that mass-market consumers could buy. High-end RTR/LP media and gear were the last generations of analog products on path (2). The CD is superior in many, yet not all, perceptual aspects to the high-end RTR/LP. And vice versa.

Due to differences in hearing systems, and other factors, some of which could be unrelated to the perceptual quality of sound, some people prefer CD to RTR/LP, while others have opposite preference. Yet others prefer CD for some genres of music, or even individual music pieces, and RTR/LP for other genres and pieces.

In my opinion, there is strong evidence indicating that 192/24 PCM, if delivered inexpensively, and yet in such a way that it won't be easily stolen, may persuade some of the folks currently pursuing path (2) to switch to path (1). MQA purports to be such a technology. That's why it is of interest to me personally. Yet it is not the only technology that can aid the (2)->(1) transition, in my opinion.

MRC01

Major Contributor

It would seem that this path is not that expensive, and doesn't require 192/24. For example, some of the inexpensive DACs from Topping measure extremely well. And whatever is missing from 44-16 is difficult for even the most sensitive, well-trained listeners to discern, and requires critical listening under controlled conditions with top-notch equipment.

I'm not saying 44-16 is perfectly transparent so we're done, but whatever gap 44-16 has is quite thin, and I'm skeptical that MQA does anything to fill that gap.

solderdude

Grand Contributor

Wow, that's a lot of words to basically say nothing relevant and make ill-founded remarks like:

Keep adding distortions until they perceptually cancel each other.

Do you have any evidence this is actually the case ?

The only gap MQA is designed to fill is the one in Bob Stuart's wallet. So far, it has failed to do that.

Just to simplify things for people who don't want to wade through this, I highlighted in red the things which are utter bullshit. The rest is irrelevant.

You may be able to design circuits where distortion in one part cancels distortion in another part, e.g. by adding them if they are 180 degrees out of phase. Some op-amp designers do this AFAIK. The result is what you mention under (1). This approach can only work within a specific unit. It cannot work such that one unit (e.g. a preamplifier) cancels distortions in another unit (e.g. a CD player), since for this the designer of the preamplifier must know which CD player will be used.

Otherwise adding distortion just leads to more distortion, and I really would like to see the scientific background for how this improves perceptual sound quality. I remember that somewhere Nelson Pass has said that adding 2nd order THD in an amplifier such that it is higher than 3rd order THD from the source improves perceptual sound quality. I don't know if this has been proven by proper DBTs.
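The point about cancellation only working when the designer knows both parts can be illustrated with a toy model. Assume, purely for illustration, two memoryless stages that each add a second-order term, y = x + a·x². If the second stage's coefficient is the exact negative of the first's, the second harmonic nearly vanishes (leaving a smaller third harmonic behind); if the coefficient is off by half, most of the second harmonic remains. Names and coefficients below are hypothetical.

```python
import math


def second_harmonic(samples):
    """Amplitude of the 2nd harmonic of one exactly periodic cycle."""
    n = len(samples)
    re = sum(s * math.cos(4 * math.pi * i / n) for i, s in enumerate(samples))
    im = sum(s * math.sin(4 * math.pi * i / n) for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n


def stage(x: float, a: float) -> float:
    """Toy memoryless stage with a second-order nonlinearity."""
    return x + a * x * x


A = 0.05  # arbitrary distortion coefficient of the first stage
N = 256
sine = [math.sin(2 * math.pi * i / N) for i in range(N)]

one_stage = [stage(x, A) for x in sine]
matched = [stage(stage(x, A), -A) for x in sine]           # exact opposite
mismatched = [stage(stage(x, A), -0.5 * A) for x in sine]  # off by half

if __name__ == "__main__":
    for name, sig in (("one stage", one_stage), ("matched", matched),
                      ("mismatched", mismatched)):
        print(f"{name:10s}  2nd harmonic = {second_harmonic(sig):.6f}")
```

Real stages have frequency-dependent, level-dependent nonlinearities, so this only shows the arithmetic of the idea, not that it is achievable across separate units.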
