JimB ..... Sorry, for some reason my brain was mushy after listening for a couple of hours and it was hard to explain.

My wife, a friend and I sat on the couch and I played the clips. So we listened to the same system at the same time. I played a minute or two of each track and went back and forth two or three times, playing the same parts of the tracks for sample A and B in each case. We decided this was more realistic for comparing what we thought we were hearing (i.e. does that vocal crescendo or bass drum hit sound the same in the two samples?) than listening to 3 to 8 minute samples in their entirety. We spent an hour, took a break (dinner, wine) and listened to the rest ... probably 2 1/2 hrs of listening total, moving faster toward the end.

Equipment: Tracks played from JRiver on a computer over HDMI to an Emotiva XMC-1 running Dirac Live in Stereo mode. Left/Right speakers are Magneplanar 3.7; two Outlaw subs (front/rear of the room). Nord One stereo amp (700 W into 4 ohms). Room is well-treated with bass traps and diffusion. Measured response is very uniform after many years of careful speaker placement, Dirac calibration and listening tests. All tracks were played at the same (moderate) listening level. Given the wide dynamic range of many of the tracks, in retrospect I should have played maybe 5 dB louder.

(BTW, I own many thousands of high-res tracks, from 96/24 to ridiculously high-rate PCM, plus DSD and some SACD. We mostly listen to these, though we sometimes listen to a streaming service or older ripped MP3s.)

The test says to pick the high-res version from each sample pair. We each used some criteria (without discussing) and marked our results on a score sheet. After results were compiled and my results submitted to Mark, I used Music Scope to determine which were the 96/24. I got 6 "right" out of 20. My wife and friend got 7 and 5 respectively. So .... for what it's worth, we pretty consistently all used our own criteria and it seems we could tell the difference in this single test, under these conditions ... BUT what we picked as the high-res was actually the low-res the majority of the time!

My wife and I did the previous test with a similar process in the same room with the same equipment, and we each got 5 out of 6 correct (actually picking the high-res that time). We both got the first four, then each got one of the last two.

All that said .... the engineer in me says if we did repeated tests our results would be

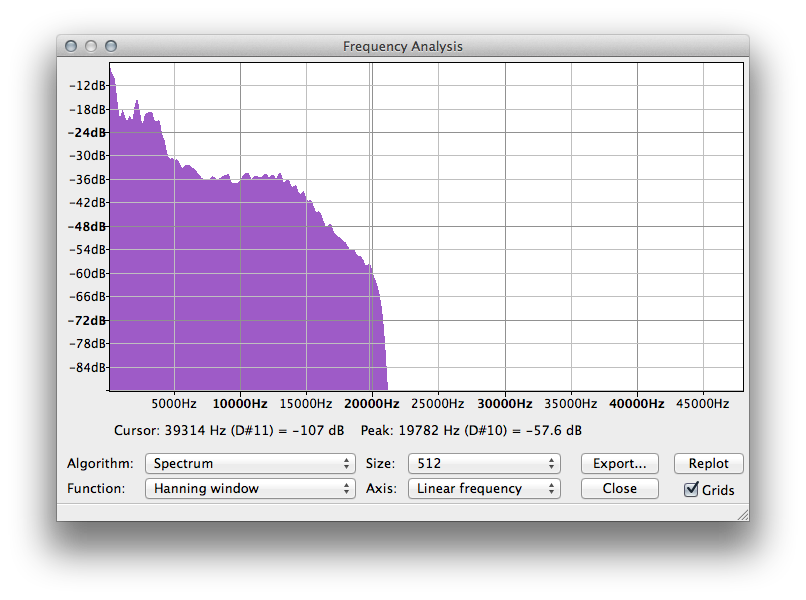

random ... as you found. At best I think it may be possible to tell the difference between samples, but I am amused by the fact that in this trial all three of us picked the low-res file most often. Could it be that there was an unintended, consistent, but unmeasurable difference in the prep of the files?
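As a rough check on the "random" hunch, here is a minimal Python sketch of how likely scores like these are under pure guessing. It assumes each trial is an independent coin flip (p = 0.5), which is a simplification; the function names and the labels in the dictionary are just illustrative.

```python
from math import comb

def prob_at_most(k, n, p=0.5):
    """P(X <= k) for X ~ Binomial(n, p): chance of k or fewer correct picks."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k + 1))

def prob_at_least(k, n, p=0.5):
    """P(X >= k) for X ~ Binomial(n, p): chance of k or more correct picks."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))

# Scores reported above for the 20-pair test (labels are illustrative).
scores_20 = {"JimB": 6, "wife": 7, "friend": 5}
for who, correct in scores_20.items():
    chance = prob_at_most(correct, 20)
    print(f"{who}: {correct}/20, P(<= {correct} by guessing) = {chance:.3f}")

# Earlier 6-pair test, where two of us each got 5 right.
print(f"earlier test: 5/6, P(>= 5 by guessing) = {prob_at_least(5, 6):.3f}")
```

These are one-sided tail probabilities for a single listener. The three of us heard the same playback together, so the three scores are not independent and should not simply be multiplied together.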

There are many ways to look at this question. What if, instead of this prep process, two identical recorders were used in a live session, one recording 44.1/16 and the other 96/24 from the same feed at the same time with identical levels? No up or down sampling later, or touching the files in any way. Would an A/B/X test just to determine if one can consistently hear a difference (not preference as inferred here) be more valid? I think so. Etc. ... many ways to get to the root of this.
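For an A/B/X test like that, one would need to decide up front how many correct identifications count as "consistently hearing a difference". A minimal sketch, assuming independent trials, a 50% guessing rate, and a conventional 0.05 threshold (the threshold and function names are my assumptions, not part of any test protocol mentioned here):

```python
from math import comb

def tail_prob(k, n):
    """Chance of k or more correct out of n trials when purely guessing (p = 0.5)."""
    return sum(comb(n, i) for i in range(k, n + 1)) / 2**n

def min_correct(n, alpha=0.05):
    """Smallest score whose guessing probability is at or below alpha.

    Returns None when even a perfect score cannot reach alpha
    (too few trials to conclude anything).
    """
    for k in range(n + 1):
        if tail_prob(k, n) <= alpha:
            return k
    return None

for n in (10, 16, 20):
    print(f"{n} trials: need at least {min_correct(n)} correct")
```

With very few trials no score is convincing, which is one reason a short session like our earlier 6-pair test cannot settle much on its own.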

I suspect that technically high quality recordings will be indistinguishable, though there's good reason to make use of the headroom offered by 96/24 in the production process.

SAL1950 ..... I read Mark's response in his blog post yesterday. Solomon was quite harsh and unnecessarily personal in his attack. The audio industry is infested with snake-oil salesmen, and the streaming market is predictably vicious in tossing every bit of mud and misinformation they can muster to grab their share of this ever-growing pot of gold ... physics and facts be damned.