I will repost some things that I just posted on another thread...

As to headphones, they fail to take into account head-related transfer functions / impulse responses and the auris externa (the outer ear) - see the lectures from Dr. Land at Cornell below...

Also see this - https://www.jneurosci.org/content/24/17/4163

And this:

https://pdfs.semanticscholar.org/0e76/923ed6c85fcdd8d9a2f269d5c7493b3c3abd.pdf

"Clearly, localization is not isolated to simply the sounds heard. Many more effects contribute to localization than that proposed by the duplex theory. Although Wightman & Kistler have shown that a virtual auditory space can be generated through headphone delivered stimulus, they are still lacking some key features. The ability to accurately reproduce elevation localization may be a problem for aircraft simulations. Other cues such as head movements and learning may also help in sound localization. For commercial applications where localization does not need such accuracy, an average HRTF can be created to externalize sounds."

Also see this

https://pages.stolaf.edu/wp-content/uploads/sites/406/2014/07/Westerbert-eg-al-2015-Localization.pdf


There's been many a patent that discusses trying to get headphones to accurately mimic human hearing and its interaction with the environment.

In addition - see this

https://core.ac.uk/download/pdf/33427652.pdf

I wrote this a while ago on the Hoffman forum:

Typically, bass is pretty much omnidirectional below about 80-100 Hz - the entire structure begins moving.

During studio construction one of the things we do with infinite baffle/soffit mounting designs is to isolate the cabinets from the structure to minimize early energy transfer - this keeps the structure from transmitting bass faster than air to the mix location. Sound travels faster thru solids - recall the ol' indian ear-on-the-rail thing?

Why does sound travel faster in solids than in liquids, and faster in liquids than in gases (air)?

One thing you want to avoid is the bass from the speaker coupling to the building structure and arriving at your ear sooner than the sound from the speakers. This can cause comb filtering, where you lose certain frequencies due to cancellation.
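To put rough numbers on that: if the structure-borne sound arrives some time ahead of the airborne sound and the two sum at similar level, the cancellations fall at odd multiples of 1/(2 x delay). A minimal Python sketch of that arithmetic follows. The 4 m path and the 343 m/s and 3000 m/s speeds are illustrative assumptions only, not measurements from any real room, and the function names are made up for this example.

```python
def arrival_gap_s(path_m, c_air=343.0, c_solid=3000.0):
    """Time by which structure-borne sound leads the airborne sound.

    Assumes both travel the same path length, which is a simplification.
    c_solid is a placeholder; real building materials vary widely.
    """
    return path_m / c_air - path_m / c_solid


def comb_notches_hz(delay_s, f_max=500.0):
    """Null frequencies when a signal is summed with an equal-level copy
    delayed by delay_s: f = (2k + 1) / (2 * delay_s), k = 0, 1, 2, ...
    """
    notches = []
    k = 0
    while True:
        f = (2 * k + 1) / (2.0 * delay_s)
        if f > f_max:
            break
        notches.append(f)
        k += 1
    return notches


gap = arrival_gap_s(4.0)  # hypothetical 4 m from monitor to mix position
print(f"gap = {gap * 1e3:.2f} ms")
print([round(f, 1) for f in comb_notches_hz(gap)])
```

With those placeholder numbers the first null lands just under 50 Hz, right in the range where the structure is doing the talking. In practice the two paths are rarely equal in level, so the nulls are partial dips rather than complete cancellations.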

Google recording studio monitor isolation and note the tons of isolation devices sold for this reason...

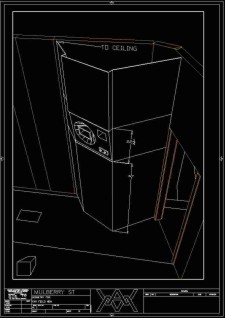

Here's a doghouse design for UREI 813's I did a while ago:

As to mixing - I rarely use pan pots for directional info in my mixes. I use various time-based methods to try and simulate the precedence effect as well as directional cues, and to simulate impulse responses / head-related transfer functions (HRTF).

Head-related transfer function - Wikipedia

"A pair of HRTFs for two ears can be used to synthesize a binaural sound that seems to come from a particular point in space."

One thing it does is to really open up the mono field, since instruments are now localized and can be sized depending on the early reflections I set up in something like a convolution reverb.
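For anyone who wants to see the core operation: synthesizing a binaural signal comes down to convolving the mono source with one impulse response per ear. Here's a minimal pure-Python sketch. The two impulse responses are toy values made up for illustration (a source off to the left), NOT measured HRIRs, and `convolve` and `binauralize` are hypothetical helper names.

```python
def convolve(x, h):
    """Direct-form linear convolution of two sequences."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        if xi == 0.0:
            continue
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


def binauralize(mono, hrir_left, hrir_right):
    """Convolve a mono signal with a left/right impulse-response pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Toy impulse responses (assumed, not measured): the left ear hears the
# source first and louder, the right ear 29 samples later and quieter.
# At 48 kHz, 29 samples is roughly 0.6 ms.
hrir_l = [1.0] + [0.0] * 63
hrir_r = [0.0] * 29 + [0.5] + [0.0] * 34

click = [1.0] + [0.0] * 255
left, right = binauralize(click, hrir_l, hrir_r)
print(len(left), left.index(max(left)), right.index(max(right)))
```

A real setup would load measured responses (the CIPIC database mentioned further down is one source) and would normally use an FFT-based convolution, since the direct loop above gets slow on full-length audio.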

A great write up here on the Convolvotron:

HRTF-Based Systems – The CIPIC Interface Laboratory Home Page

Part of a great resource for modern sound localization efforts for HMI audio:

The CIPIC Interface Laboratory Home Page – Electrical and Computer Engineering

As to low frequency information:

From Sound localization - Wikipedia:

Evaluation for low frequencies

For frequencies below 800 Hz, the dimensions of the head (ear distance 21.5 cm, corresponding to an interaural time delay of 625 µs) are smaller than the half wavelength of the sound waves. So the auditory system can determine phase delays between both ears without confusion. Interaural level differences are very low in this frequency range, especially below about 200 Hz, so a precise evaluation of the input direction is nearly impossible on the basis of level differences alone. As the frequency drops below 80 Hz it becomes difficult or impossible to use either time difference or level difference to determine a sound's lateral source, because the phase difference between the ears becomes too small for a directional evaluation.
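The numbers in that passage are easy to sanity-check. A quick Python sketch, assuming a speed of sound of 343 m/s (my assumption, the quoted text doesn't state one) and the 21.5 cm ear distance from the quote:

```python
C_AIR = 343.0  # m/s, assumed speed of sound at roughly room temperature


def max_itd_us(ear_distance_m, c=C_AIR):
    """Largest interaural time difference (source directly to one side), in microseconds."""
    return ear_distance_m / c * 1e6


def half_wavelength_m(freq_hz, c=C_AIR):
    """Half the wavelength of a tone at freq_hz, in metres."""
    return c / freq_hz / 2.0


def max_phase_diff_deg(freq_hz, ear_distance_m, c=C_AIR):
    """Largest interaural phase difference at freq_hz, in degrees."""
    return 360.0 * freq_hz * ear_distance_m / c


EAR_DISTANCE = 0.215  # m, from the quoted passage
print(f"max ITD: {max_itd_us(EAR_DISTANCE):.0f} us")
print(f"half wavelength at 800 Hz: {half_wavelength_m(800.0) * 100:.1f} cm")
for f in (800.0, 200.0, 80.0):
    print(f"{f:.0f} Hz: up to {max_phase_diff_deg(f, EAR_DISTANCE):.0f} deg")
```

With these assumptions the delay comes out close to the quoted 625 µs, the half wavelength at 800 Hz is almost exactly the ear spacing, and at 80 Hz the largest possible phase difference between the ears is under 20 degrees, which is the "too small" the passage is talking about.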

Interesting info here from Dr. Bruce Land on sound localization - end of #25 and into #26

Note the comment concerning using a bus on a DAW to mimic HRTF. Also note his reference to the CIPIC database.

This prevents the "ear pull" associated with unbalanced RMS levels across the ears. As Dr. Land mentions, your ear localizes based on time as well as amplitude. The interaural time difference, ITD (Interaural time difference - Wikipedia), is as critical as Interaural Level Differences (ILD). As he states, humans learn early on to derive directional cues from impulse responses at the two ears.
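As a rough illustration of placing a source with time rather than a pan pot, here is a minimal Python sketch that delays and slightly attenuates the far-ear channel. It is not my actual mix chain. The delay uses Woodworth's spherical-head approximation, and the 8.75 cm head radius, the 3 dB maximum level difference, and the `time_pan` name are all assumptions for the example.

```python
import math


def woodworth_itd_s(azimuth_deg, head_radius_m=0.0875, c=343.0):
    """Woodworth's spherical-head estimate of ITD for azimuths of 0 to 90 degrees."""
    theta = math.radians(azimuth_deg)
    return head_radius_m / c * (theta + math.sin(theta))


def time_pan(mono, azimuth_deg, fs=48000, max_ild_db=3.0):
    """Return (left, right) with the far ear delayed and a little quieter.

    Positive azimuth places the source to the right. max_ild_db is an
    arbitrary illustrative value, scaled by sin(azimuth) so that a centred
    source gets identical channels. It is not derived from any HRTF.
    """
    az = abs(azimuth_deg)
    delay = round(woodworth_itd_s(az) * fs)
    gain = 10.0 ** (-max_ild_db * math.sin(math.radians(az)) / 20.0)
    near = list(mono) + [0.0] * delay
    far = [0.0] * delay + [gain * s for s in mono]
    return (far, near) if azimuth_deg >= 0 else (near, far)


click = [1.0] + [0.0] * 99
left, right = time_pan(click, 90.0)
print(left.index(max(left)), right.index(max(right)))
```

A frequency-independent delay and gain like this is only the crudest version of the cue. A measured HRTF pair also carries the spectral shaping from the outer ear, which this sketch ignores completely.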

One thing that has to be said is that head-related transfer functions differ significantly between people - but note the chart where he mentions the one person with a -48 dB notch at 6 kHz - the curves up to around 5 kHz are fairly close and in the A-weighted range...

Another great lecture on sound localization from MIT:

20. Sound localization 1: Psychophysics and neural circuits

I used various time-based techniques on this - a remix of Whole Lotta Love from the original multitracks:

Remix of WLL

Watch/listen to the Comparison video...

Another technique is to use double tracking and artificial double tracking (ADT) which will spread the instrument/spectra across the panorama -

Automatic double tracking - Wikipedia

- tho this can lead to mono compatibility issues... some effects that do this use a bunch of bandpass filters, where you can set the delay for each band. Note what George Martin and Geoff Emerick mention about using older analog-style, tape-based ADT during the Anthology sessions. Again, these techniques reduce the unbalanced feeling across the head but still open up the stereo field to allow all the instruments to sit in the stereo image.
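To show the mono compatibility issue in numbers, here's a minimal Python sketch of the simplest possible version: dry signal on one side, a single fixed-delay copy on the other, then summed to mono. The 25 ms delay and 8 kHz sample rate are arbitrary choices for the example. Tape ADT used a slowly varying delay and the multiband effects use a separate delay per band, so treat this as a single-band simplification.

```python
import math


def adt_stereo(mono, delay_samples):
    """Dry signal on the left, a delayed copy on the right."""
    left = list(mono) + [0.0] * delay_samples
    right = [0.0] * delay_samples + list(mono)
    return left, right


def rms(x):
    return math.sqrt(sum(s * s for s in x) / len(x))


def mono_sum_gain_db(freq_hz, delay_ms, fs=8000, seconds=1.0):
    """Level of (L + R) / 2 relative to the dry signal, for a steady sine."""
    n = int(fs * seconds)
    d = round(delay_ms * 1e-3 * fs)
    dry = [math.sin(2 * math.pi * freq_hz * i / fs) for i in range(n)]
    left, right = adt_stereo(dry, d)
    mono = [(l + r) / 2 for l, r in zip(left, right)]
    steady = slice(d, n)  # only the region where both copies overlap
    return 20 * math.log10(max(rms(mono[steady]), 1e-12) / rms(dry[steady]))


for f in (20.0, 30.0, 40.0):
    print(f"{f:.0f} Hz: {mono_sum_gain_db(f, 25.0):.1f} dB in mono")
```

With a 25 ms delay, a 20 Hz tone is half a cycle out between the two sides and all but disappears in the mono sum, while 40 Hz comes through untouched. Splitting into bands with different delays moves those holes around per band rather than removing them.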

Double tracking - both natural and ADT - is prevalent in a lot of the metal mixes - for instance:

Remix of the Curse of the Twisted Tower

Note that the opening of the first clip was locked into what it is, since it didn't exist on the multitracks and was flown in on the original release. But on the other samples, compare the mixes and notice they don't sound as disjointed across the panorama as do the older, pan-only mixes. One of the band members commented on how he was able to hear his solos better.

This was followed up by this:

I would like to ask a sub question in this regard. Let's say we have a mono recording, for simplicity. Will it ever be possible for headphones in the future to make sure that the sound does not form inside the head but in the front with normal recordings? In nature there are very few sounds that we perceive inside our head. Except when we have phlegm in the ears or eat or pat on the forehead

There was an AES paper written about this a while ago:

http://www.aes.org/e-lib/browse.cfm?elib=2591

A paper on out of head Headphone Sound Externalization

http://research.spa.aalto.fi/publications/theses/liitola_mst.pdf

As I mentioned, there's a lot of research and patents that deal with various methods to externalize headphone audio. None that I know of is completely accurate in its representation of sound localization.

http://www.y2lab.com/en/project/3d_headphone_technology/

Video from that page:

Yamaha 3D Headphone Technology : Post-production Demo

https://ieeexplore.ieee.org/document/1461788