I think the whole 'damping factor' thing is marketing nonsense.

Hmm, now I feel compelled to explain my train of thought.

Electrical damping is done by current. The more current, the more 'braking' is going on. So it stands to reason that when a power source is short circuited the current is MUCH higher than when it sees a few Ohm or, heaven forbid, 120 Ohm or so.

Now here is the thing.

Let's assume the HD650 is used and we apply the '1/10th rule' we see everywhere.

An uncontrolled motion of the membrane is what apparently needs to be 'damped' by current.

That motion (that needs to be damped) generates a voltage by the voicecoil being in a magnetic field.

Let's assume that the internal resistance of that 'generator' (it literally works as a dynamic mic) is low, say 1 Ohm.

In this case the output current of that 'generator' is very high (thus greatly damped) when shorted by close to 0 Ohm.

The same generator will not deliver much current into a 10 Ohm 'load' (the amp's output R), so little damping is going on any more.

That's the theory around the damping factor.

When you do some math here, the difference in current (which does the actual damping) is HUGE... damping factor confirmed, right?

High damping current with a low output R amp and poor damping with a 10 Ohm output R amp.

One may have guessed it already... the source impedance of the 'generator' in the case of the HD650 is, alas, not close to 0 Ohm at all.

It's the DC resistance ... around 300 Ohm.

When one does the math and assumes the generated voltage is, say, 1V (for argument's sake), then the difference in damping current between a 0 Ohm amp and a 10 Ohm amp isn't big at all: 1/300 A versus 1/310 A in this case (3.3mA vs 3.2mA).

You guessed it... damping current differences of 3% won't be that audible.
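The arithmetic above can be checked with a few lines of Python. This is only a sketch of the simple resistive model used in this post: the 1V back EMF is the arbitrary value from above, and the function name `damping_current` is made up for the illustration.

```python
def damping_current(emf_volts, generator_ohms, amp_output_ohms):
    """Current driven by the voice coil's back EMF through the loop formed
    by its own resistance and the amp's output resistance (Ohm's law)."""
    return emf_volts / (generator_ohms + amp_output_ohms)


EMF = 1.0  # volts, arbitrary value for the sake of argument

cases = [
    ("hypothetical 1 Ohm generator", 1.0),
    ("HD650, around 300 Ohm DC resistance", 300.0),
]

for label, r_gen in cases:
    i_0 = damping_current(EMF, r_gen, 0.0)    # amp with 0 Ohm output R
    i_10 = damping_current(EMF, r_gen, 10.0)  # amp with 10 Ohm output R
    drop = 100.0 * (1.0 - i_10 / i_0)         # loss of damping current in %
    print(f"{label}: {i_0 * 1000:.2f} mA vs {i_10 * 1000:.2f} mA "
          f"({drop:.1f}% less)")
```

With the 1 Ohm generator the current collapses to about a tenth, with the 300 Ohm one it barely moves.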

What will become audible is a raised bass: the FR changes because of voltage division, and that is what 'muddies' the bass.

The generated back EMF is what raises the impedance. At the resonance frequency the driver draws less current at the same applied voltage, and since the voltage remains the same, the impedance rises.
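To put a rough number on that voltage division, here is a small Python sketch. The two impedance values are assumptions picked for illustration only (300 Ohm away from the resonance, 500 Ohm at the resonance peak), not measured data, and the headphone is treated as a plain resistance at each frequency.

```python
import math


def level_db(headphone_ohms, amp_output_ohms):
    """Level at the headphone relative to the amp's unloaded output, in dB."""
    return 20.0 * math.log10(headphone_ohms / (headphone_ohms + amp_output_ohms))


# Assumed, illustrative impedance magnitudes (not measured values)
Z_MIDBAND = 300.0    # Ohm, away from the resonance
Z_RESONANCE = 500.0  # Ohm, at the bass resonance peak

for r_out in (0.0, 10.0, 120.0):
    # How much more level the resonance region gets than the midband
    bump = level_db(Z_RESONANCE, r_out) - level_db(Z_MIDBAND, r_out)
    print(f"{r_out:5.0f} Ohm output R: bass raised by {bump:.2f} dB")
```

With these assumed numbers the bass hump is about 0.1 dB from a 10 Ohm output and about 1 dB from a 120 Ohm output.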

The fact that some people 'report' hearing differences in orthos may well be caused by differences in loudness or from the usual source of conflicting reports (brain thingy).

At least the above is my theory until proven otherwise.

I noticed John Siau dwells here (who has my greatest admiration) and has measured increased distortion when the output R is higher which appears to be the 'biggest' proof at the moment.

My 'counter' theory here is ... voltage division again.

You have 0 Ohm source resistance and measure at the output of the device. All voltages there are solely coming from the amp. The headphone's generator is shorted so the induced current by the voicecoil won't become visible at all.

Add a 10 Ohm resistance INSIDE that amplifier box/device and things become different.

On the output the actual signal will appear (of course), but signals generated by the voicecoil drive a current through the voicecoil + output R and thus create a voltage across both the voicecoil resistance and the output R.

Since the amp is measured as a black box with a 10 Ohm output R inside, both the applied signal (from the amp) and the part of the back EMF that ends up across the output R (divided between the voicecoil and the output R) will be present at the measured output of the amp.

As a distortion measurement is nothing more than measuring the difference between the input signal of the amp and the output signal (corrected for the gain), it is logical that one 'measures' a bigger difference when the output signal + attenuated back EMF from the driver is compared to the input signal.

This doesn't make the distortion that much higher, it only appears that way.
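The same reasoning as a small Python sketch, assuming the simple model from this post: the amp is an ideal voltage source followed by its output R, the driver is a back EMF source behind its DC resistance, and the analyzer sits on the terminal between the two. The 1V back EMF and the function name `back_emf_at_terminal` are just for illustration.

```python
def back_emf_at_terminal(emf_volts, coil_ohms, amp_output_ohms):
    """Part of the driver's back EMF that appears across the amp's output
    resistance, which is the point where the analyzer is connected."""
    return emf_volts * amp_output_ohms / (coil_ohms + amp_output_ohms)


EMF = 1.0       # volts, arbitrary back EMF from the driver
R_COIL = 300.0  # Ohm, the HD650 DC resistance mentioned earlier

for r_out in (0.0, 10.0, 120.0):
    v = back_emf_at_terminal(EMF, R_COIL, r_out)
    print(f"{r_out:5.0f} Ohm output R: "
          f"{v * 1000:.1f} mV of back EMF at the terminal")
```

At 0 Ohm nothing of the back EMF shows up at the measuring point, at 10 Ohm about 3% of it does. It is the same back EMF in both cases, only its visibility to the analyzer changes.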

Don't know if my reasoning is valid but that's my take on output R and the 'damping factor' story from yesteryear that does not seem to go away.

Are people wrong with the 1/10th rule of thumb ?

Not entirely as most headphones are designed to be driven from a voltage source (low output R).

Some (certainly not all) headphones have varying impedances. For lower imp. headphones the end result (change in FR due to voltage division) is much worse than for higher imp. headphones due to the voltage division ratio changing.

So yes, some headphones (those with varying impedances) will show a huge change in tonal balance (bass mostly, unless they are MA or such) where other headphones, even dynamics not just planars, have no variance in tonal balance/impedance.

As the actual effect on tonal balance in dB's is complex to calculate for most people, I always measure headphones driven from both a low output R and the (former standard) 120 Ohm output, and compensate for the difference in output level (which can be huge) so tonal balance differences become obvious. Again... this is due to voltage division mostly, but I agree that for low impedance headphones (below say 50 Ohm) the damping current will also be of influence, as that can easily halve or drop even further.
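A quick Python sketch of why the level compensation is needed and why low impedance headphones are hit harder. The headphone impedances in the list are example values chosen for illustration, and each headphone is treated as a plain resistance equal to its nominal impedance, which is a simplification.

```python
import math


def level_drop_db(headphone_ohms, amp_output_ohms):
    """Output level lost to voltage division, in dB (0 dB means no loss)."""
    return 20.0 * math.log10(headphone_ohms / (headphone_ohms + amp_output_ohms))


def damping_current_ratio(coil_ohms, amp_output_ohms):
    """Damping current relative to the 0 Ohm output R case (1.0 = unchanged)."""
    return coil_ohms / (coil_ohms + amp_output_ohms)


EXAMPLE_HEADPHONES = (32.0, 50.0, 300.0)  # Ohm, assumed example values
OUTPUT_RS = (10.0, 120.0)                 # Ohm

for z in EXAMPLE_HEADPHONES:
    for r_out in OUTPUT_RS:
        print(f"{z:5.0f} Ohm headphone, {r_out:5.0f} Ohm output R: "
              f"level {level_drop_db(z, r_out):6.1f} dB, "
              f"damping current x{damping_current_ratio(z, r_out):.2f}")
```

For the 300 Ohm case the damping current hardly changes from a 10 Ohm output, while a 32 Ohm headphone on 120 Ohm keeps only about a fifth of it and loses well over 10 dB of level.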

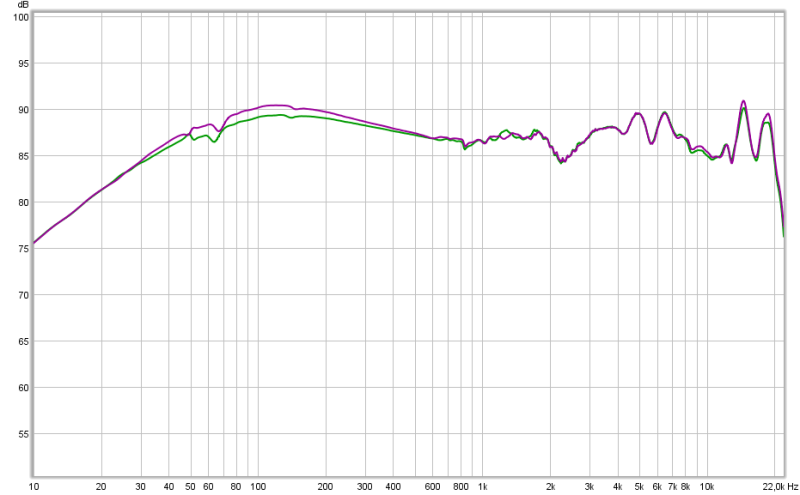

The HD650 tonal balance change when fed through 0 Ohm and 120 Ohm. 10 Ohm would be closest to 0 Ohm.

So... best to keep the output R low in most cases (John S is right about this), but sometimes a higher output R has positive effects on the sound, specifically with those headphones that were designed with the old standard in mind.

It's why my amp designs have selectable output R's in just 2 or 3 steps. So the owner is more 'flexible'.