Reproduced from an old post in another place and time... Caveats:

Based upon a kind member's comments (thanks Kal!), here is a little more background about this example:

This does not target any particular data protocol (e.g. S/PDIF, HDMI, DSD, etc.) or rate (bits/second). The concept applies to any digital stream over a transmission line, be it electrical or optical (an optical link is usually electrical at each end). Optical cables can also do funky things on top of what the transceivers at the ends of the cable do. The data rate is generic (and pretty low); the idea was to show what limited bandwidth can do to the signal, and you can scale to any rate desired. The intent is to show how cable bandwidth can affect the bits, which may impact the sound or picture. In general, with error correction and good clock circuits, digital links tend to either work or not. When they do not, you get dropouts that are pretty obvious (e.g. lost or garbled audio, sparkles or gaps/stutters in video), but that would be a link on the very edge of failure. Usually that means a cable that is too long or not rated for the data rate (e.g. using an older HDMI cable for a new 4K link).

On to the old post...

Can your digital cable impact the sound of your system? Like most things, the answer is “maybe”… Reading some of the cable advertisements I see all kinds of claims. Some make no sense to me, such as “use of non-ferrous materials isolates your cable from the deleterious effects of the Earth’s magnetic field”. Hmmm… Some appear credible but describe effects that I have difficulty believing have any audible impact, such as “the polarizing voltage reduces the effect of random cable charges”. True, a d.c. offset on a cable can help reduce the impact of trapped charge in the insulation, but these charges are typically a problem for signals on the order of microvolts (1 uV = 0.000001 V) or less. That is not a problem in an audio system even for the analog signals (1 uV is 120 dB below a 1 V signal), let alone the digital signals. But there are things that do matter and might cause problems in our systems.
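For anyone who wants to check the 120 dB figure, here is a minimal sketch of the arithmetic. The function name `level_db` is just an illustrative choice; the only thing it relies on is the usual voltage-ratio definition of the decibel, 20*log10(V/Vref).

```python
import math

def level_db(volts, reference_volts=1.0):
    """Voltage level in dB relative to a reference: 20*log10(V / Vref)."""
    return 20.0 * math.log10(volts / reference_volts)

# 1 uV compared with a 1 V signal
print(round(level_db(1e-6), 1))  # -120.0
```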

Previously we discussed how improper terminations can cause reflections that can corrupt a digital signal. This can cause jitter, i.e. time-varying edges, that depends upon the signal itself, which is really an analog signal from which we extract digital data (bits). As was discussed, this is rarely a concern for the digital system, as signal recovery circuits can reject a large amount of jitter and extensive error correction makes bit errors practically unknown. The problem arises when the clock extracted from the signal (bit stream) is used directly as the clock for a DAC. The DAC will pass any time-varying clock edges to the output just as if the signal itself were varying in time. It has no way of knowing the clock has moved, and the result is output jitter. With a big enough variation we can hear it as distortion, a rise in the noise floor, or both.
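To get a rough feel for how a clock timing error turns into an amplitude error, here is a small sketch that is not part of the original post. It assumes a full-scale sine wave and a small timing error, so the error is about the steepest slope of the sine (2*pi*f*A) times the timing error. The function names and the 1 ns example value are assumptions for illustration only, not measurements of any real DAC.

```python
import math

def worst_case_sample_error(signal_hz, timing_error_s, amplitude=1.0):
    """Largest amplitude error for a sine output that is early or late by
    timing_error_s, using the steepest slope of A*sin(2*pi*f*t)."""
    return 2.0 * math.pi * signal_hz * amplitude * timing_error_s

def error_db(signal_hz, timing_error_s):
    """The same error expressed in dB relative to full scale (amplitude 1)."""
    return 20.0 * math.log10(worst_case_sample_error(signal_hz, timing_error_s))

# Hypothetical 1 ns timing error at two audio frequencies
for f in (1e3, 20e3):
    print(f"{f / 1e3:4.0f} kHz: {error_db(f, 1e-9):.1f} dB re full scale")
```

The point of the sketch is only the trend: the same timing error hurts more as the signal frequency goes up, because the signal is changing faster when the edge lands in the wrong place.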

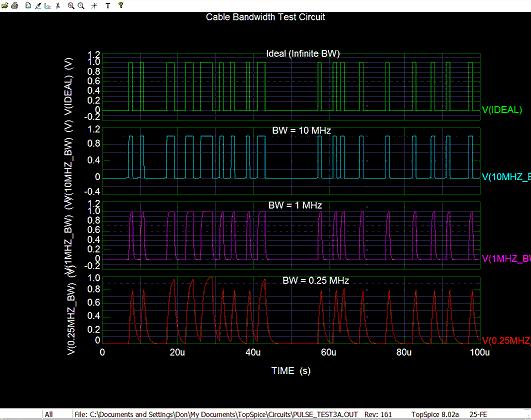

One thing all cables have is limited bandwidth. Poorly-designed cables, or even well-designed cables that are very long, may limit the signal bandwidth. This causes signal-dependent jitter. How? Look at the figure below showing an ideal bit stream and the same bit stream after band limiting. I used a 1 us bit period (unit interval) for convenience; this is a little less than half the rate of a CD’s bit stream.

There is almost no difference between the ideal and 10 MHz cases. Since 10 MHz is well above 1/1 us = 1 MHz we see hardly any change. Dropping to 1 MHz, some rounding of the signal has occurred, and at 0.25 MHz we see that rapid bit transitions (rapidly alternating 1’s and 0’s) no longer reach full-scale output. Looking closely you can see that the period between center crossings (when the signal crosses the 0.5 V level) changes depending upon how many 1’s or 0’s are in a row. With a number of bits in a row at the same level, the signal has time to reach full-scale (0 or 1). When the bits change more quickly, the signal does not fully reach 1 or 0. As a result, the slope is a little different, and the center-crossing is shifted slightly in time. This is signal-dependent jitter.
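The shifting center crossings can be reproduced with a few lines of code. The sketch below is not how the plots were made; it assumes a simple first-order (single-pole) low-pass as a stand-in for the cable, NRZ levels of 0 V and 1 V, and a made-up test pattern, so its numbers need not match the figures. It computes, for each bit where the signal crosses 0.5 V, how long after the bit boundary the crossing happens.

```python
import math

def crossing_delays(bits, bandwidth_hz, bit_period=1e-6, threshold=0.5):
    """Delay from each bit boundary to the following threshold crossing.

    Assumes 0 V / 1 V NRZ levels and a first-order low-pass with the given
    -3 dB bandwidth (illustrative assumptions, not the original filter).
    """
    tau = 1.0 / (2.0 * math.pi * bandwidth_hz)  # filter time constant
    decay = math.exp(-bit_period / tau)         # fraction left after one bit
    v = float(bits[0])                          # line settled at the first level
    delays = []
    for bit in bits:
        target = float(bit)
        v_end = target + (v - target) * decay   # voltage at the end of this bit
        if (v - threshold) * (v_end - threshold) < 0:  # crossed during this bit
            delays.append(tau * math.log((v - target) / (threshold - target)))
        v = v_end
    return delays

def peak_to_peak_jitter(bits, bandwidth_hz):
    """Spread between the earliest and latest crossing delays."""
    delays = crossing_delays(bits, bandwidth_hz)
    return max(delays) - min(delays) if delays else 0.0

# Hypothetical pattern: long runs mixed with rapid alternation
pattern = [0] * 6 + [1, 0, 1, 0, 1, 0] + [1] * 6 + [0, 1, 0, 1, 0]
for bw in (10e6, 1e6, 0.25e6):
    jitter_ns = peak_to_peak_jitter(pattern, bw) * 1e9
    print(f"{bw / 1e6:5.2f} MHz: {jitter_ns:.2f} ns p-p")
```

After a long run the signal starts its transition from a fully settled level, while during rapid alternation it starts from a partly settled level, so the crossing arrives at a different time. That difference is the signal-dependent jitter described above.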

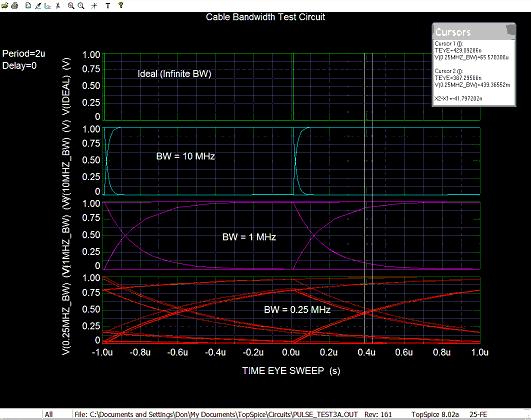

A better way to see this is to “map” all the unit-intervals on top of each other. That is, take the first 1 us unit interval (bit period) and plot it, then shift the next 1 us to the left so that it lies on top of the first, and so forth. This “folds” all the bit periods into the space of a single unit interval to create an eye diagram (because it looks sort of like an eye). See the next figure.
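The folding step itself is simple. Here is a minimal sketch, assuming the waveform is a list of uniformly spaced samples with a whole number of samples per unit interval; `fold_into_eye` and `samples_per_ui` are names made up for this example.

```python
def fold_into_eye(samples, samples_per_ui):
    """Split a uniformly sampled waveform into consecutive unit-interval
    segments so they can all be drawn on the same time axis."""
    n_ui = len(samples) // samples_per_ui  # drop any partial interval at the end
    return [samples[i * samples_per_ui:(i + 1) * samples_per_ui]
            for i in range(n_ui)]

# Toy waveform: 12 samples, 4 samples per unit interval
traces = fold_into_eye(list(range(12)), 4)
print(traces)  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]
```

Plotting every trace against the same 0 to 1 us axis, with whatever plotting tool you prefer, overlays the bit periods and produces the eye.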

The top (ideal) plot shows a wide-open eye with “perfect” edges. As the bandwidth drops to 10 MHz we see the edges now curve somewhat, but there is effectively no jitter seen. That is, the lines cross at the center at one point, no “spreading”. With 1 MHz bandwidth the slower (curving) edges are very obvious, but still the crossing happens essentially at a single point. In fact jitter has increased but it is not really visible.

At 0.25 MHz there is noticeable jitter, over 41 ns peak-to-peak. That is, the center crossings no longer fall at a single point in time, but are spread over about 41 ns. Why? Look at the 1 MHz plot and notice that when the signal changes, rising or falling, it still (barely) manages to reach the top or bottom before the next crossing begins. That is, the bits reach full-scale before the end of the 1 us unit interval (bit period). However, with only 0.25 MHz bandwidth, if the bits change quickly there is not time for the preceding bit to reach full-scale before it begins to change again. You can see this in the eye where the signal starts to fall (or rise) before it reaches the top or bottom (1 V or 0 V) of the plot. This shifts the time it crosses the center (threshold). If the DAC’s clock recovery circuit does not completely reject this change, the clock period will vary with the signal, and we get jitter that causes distortion at the output of our DAC.
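A quick way to see why 0.25 MHz is not enough is to ask how far a step gets toward full scale in one bit period. The sketch below again assumes a first-order low-pass, which is my simplification and not necessarily the filter behind the plots, so treat the numbers as illustrative. For that filter the step response is 1 - exp(-t/tau) with tau = 1/(2*pi*bandwidth).

```python
import math

def settled_fraction(bandwidth_hz, bit_period=1e-6):
    """Fraction of a full-scale step completed after one bit period,
    for an assumed first-order low-pass with the given -3 dB bandwidth."""
    tau = 1.0 / (2.0 * math.pi * bandwidth_hz)  # filter time constant
    return 1.0 - math.exp(-bit_period / tau)

for bw in (10e6, 1e6, 0.25e6):
    print(f"{bw / 1e6:5.2f} MHz: {settled_fraction(bw) * 100:.1f} % of full scale")
```

With this assumed filter the 1 MHz case gets essentially all the way there within 1 us, while the 0.25 MHz case stops at roughly 79 % of full scale, so the next transition starts from a different level and crosses the threshold at a different time.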

How bad this sounds depends upon just how much bandwidth your (digital) cable has (a function of its design and length) and how well the clock recovery circuit rejects the jitter. It is impossible to reject all jitter from the clock recovered from the bit stream, but a good design can reduce it significantly. An asynchronous system that isolates the output clock from the input clock can essentially eliminate this jitter source.

HTH - Don

- Most if not all DACs today have their own (asynchronous) clock, so jitter on the incoming datastream has little impact on the output bits that get applied directly to the DAC;

- Jitter or amplitude variations won't cause data loss until they are very severe (many devices can recover the data cleanly from a closed eye at the input), and that is before counting the error correction built into most digital transmission schemes;

- The frequencies are to illustrate a point, and make it easy to plot -- they do not correspond to any particular transmission scheme;

- This is a very complex subject and this post only scratches the surface of what can corrupt an eye and how it is recovered; and,

- This is about eight years old, so as usual some references may be out of date.
