tallbeardedone

Active Member

- Joined

- Sep 3, 2022

- Messages

- 102

- Likes

- 221

Here's a physics fact: the intended 3-dimensional stereo soundstage is ONLY possible along the center line between precisely placed stereo speakers.

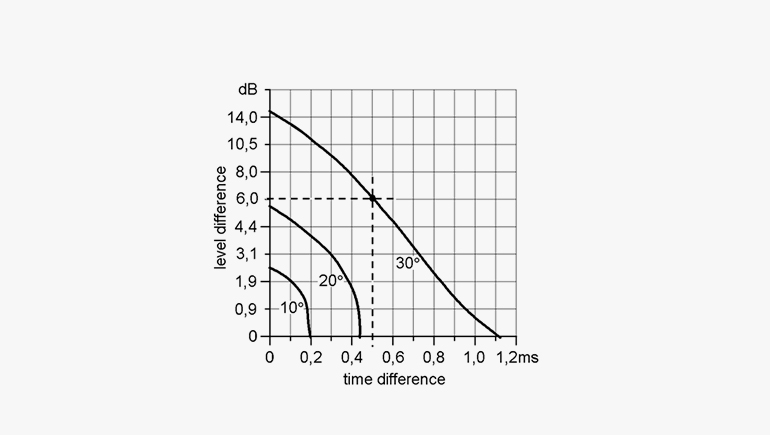

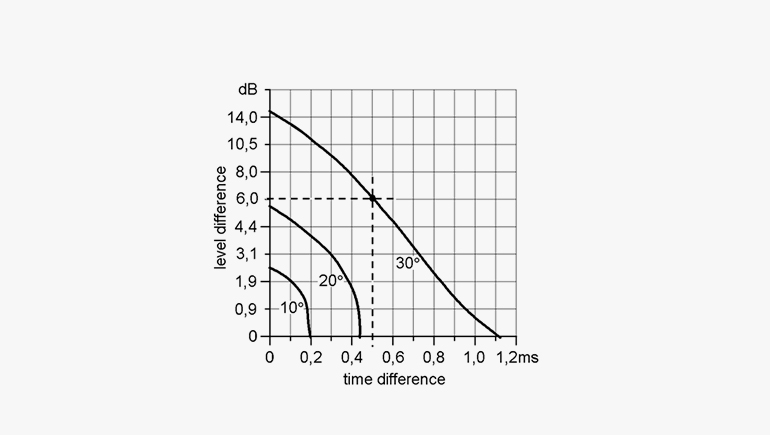

Here’s why: stereo reproduction uses a time-of-arrival delay, an intensity difference (volume difference) between channels, or both, to place a sound at a specific angle within the soundstage (see photo 1).


For example, to place the image in the center, there must be zero time delay between the sound waves from the left and right channels to your ears, or the sound from each speaker must be exactly the same volume, or a combination of the two. To make the sound source appear 30 degrees off-center, a time delay of approximately 1.1 ms, a level difference of approximately 15 dB, or a combination of the two (0.5 ms with a 6 dB level difference) must be used (see photo 2).

Long story short, the ONLY way to get the correct time delay or volume difference at your ears is to be EXACTLY mid-line between well-placed speakers. If you're off to the right or left, by the laws of physics, you are changing the time-delay and sound pressure difference at your ears, and the image/soundstage will shift accordingly.
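To put rough numbers on that shift, here's a minimal Python sketch of the geometry. Everything in it is my own assumption, not something from the DPA article: the function names, the 2.5 m speaker spacing, the 2.5 m listening distance, a speed of sound of 343 m/s, the listener treated as a single point (no head or ear spacing), and level falling off with the inverse of distance as it would from a point source with no room reflections. Real speakers in real rooms will differ, so treat the output as a ballpark.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumption: air at roughly 20 degrees C


def path_lengths_m(offset_m, spacing_m=2.5, distance_m=2.5):
    """Distances from the listener to the left and right speakers.

    offset_m   -- sideways shift off the center line (positive = toward the right speaker)
    spacing_m  -- distance between the two speakers (hypothetical default)
    distance_m -- distance from the listener back to the speaker plane (hypothetical default)
    """
    half = spacing_m / 2.0
    left = math.hypot(offset_m + half, distance_m)
    right = math.hypot(offset_m - half, distance_m)
    return left, right


def arrival_delay_ms(offset_m, spacing_m=2.5, distance_m=2.5):
    """How much later the left speaker's sound arrives than the right's, in ms."""
    left, right = path_lengths_m(offset_m, spacing_m, distance_m)
    return (left - right) / SPEED_OF_SOUND_M_S * 1000.0


def level_difference_db(offset_m, spacing_m=2.5, distance_m=2.5):
    """How much quieter the left speaker is than the right, in dB.

    Uses the inverse-distance law, which is a free-field simplification.
    """
    left, right = path_lengths_m(offset_m, spacing_m, distance_m)
    return 20.0 * math.log10(left / right)


if __name__ == "__main__":
    # Print the unintended delay and level error for a few seat positions.
    for offset in (0.0, 0.1, 0.3, 0.5):
        print(
            f"offset {offset:.1f} m: "
            f"delay {arrival_delay_ms(offset):.2f} ms, "
            f"level {level_difference_db(offset):.2f} dB"
        )
```

On the center line both values come out to zero. Move sideways and the delay grows quickly compared with the roughly 1.1 ms quoted above for a full 30-degree image shift, while the level difference stays small under these assumptions.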

source: https://www.dpamicrophones.com/mic-...v2UiPaHNb0uc7mi30HmoW2nimVctCU-c8wZBC2E5p_5JA

