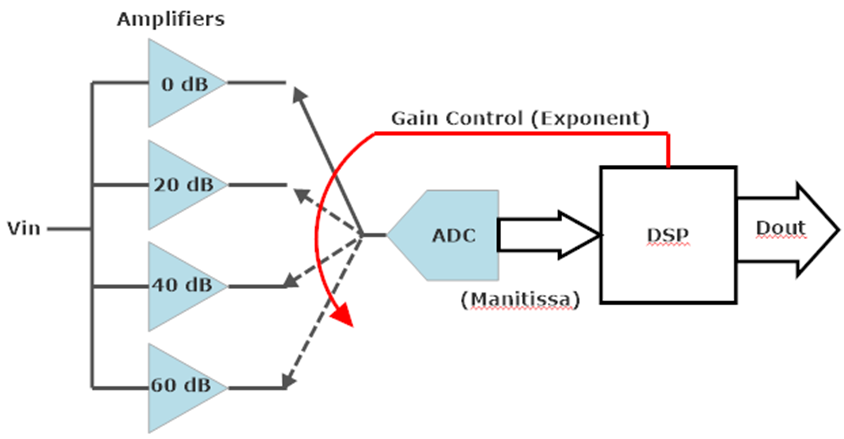

Here is an attempt to show how a floating-point ADC system might benefit a field recorder (or other device) needing to cover a wide dynamic range with better performance than a single ADC. The floating-point ADC system comprises four different gain stages (amplifiers) with switches into the ADC. The signal input Vin is applied to all four amplifiers simultaneously. The DSP determines which switch (gain stage) to use based upon the ADC’s (digital) output signal. It probably also performs calibration to ensure precise alignment among the four gain stages. The gain control setting is the exponent, and ADC output bits are the mantissa, of the floating-point output.
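As a rough illustration of the exponent/mantissa idea, here is a minimal Python sketch. It assumes the four gains are 0, 20, 40 and 60 dB, a 1 Vpk full scale and a signed 16-bit code. The names `select_exponent`, `encode` and `decode` are made up for this example, and choosing the gain per sample from the input value is a simplification of whatever the real DSP does from the ADC output. Note the "exponent" here steps by a factor of 10, not 2.

```python
GAINS_DB = [0, 20, 40, 60]   # assumed gain stages, one per switch position
FULL_SCALE_VPK = 1.0         # assumed ADC full-scale range
ADC_BITS = 16
MAX_CODE = 2 ** (ADC_BITS - 1) - 1  # largest signed code, 32767

def select_exponent(peak_vin):
    """Index of the highest gain that keeps the amplified peak within full scale."""
    chosen = 0
    for idx, gain_db in enumerate(GAINS_DB):
        if peak_vin * 10 ** (gain_db / 20) <= FULL_SCALE_VPK:
            chosen = idx
    return chosen

def encode(vin):
    """Return (exponent, mantissa): gain-stage index and signed ADC code."""
    exp = select_exponent(abs(vin))
    gain = 10 ** (GAINS_DB[exp] / 20)
    # Clip to full scale, as a real ADC would for an over-range input.
    scaled = max(-1.0, min(1.0, vin * gain / FULL_SCALE_VPK))
    return exp, round(scaled * MAX_CODE)

def decode(exp, mantissa):
    """Undo the gain to recover the input-referred voltage."""
    gain = 10 ** (GAINS_DB[exp] / 20)
    return mantissa / MAX_CODE * FULL_SCALE_VPK / gain

if __name__ == "__main__":
    for v in (0.5, 0.05, 0.005, 0.0005):
        e, m = encode(v)
        print(v, e, m, decode(e, m))
```

Each of the four test voltages lands in a different gain stage, and decoding recovers the input to within the (gain-scaled) quantization step.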

A gain of 20 dB is a voltage factor of 10, so if the ADC has a full-scale range of 1 Vpk, inputs of [1, 0.1, 0.01, 0.001] Vpk will apply 1 Vpk at the ADC’s inputs through the [0, 20, 40, 60] dB gain stages, respectively. That is, each additional 20 dB of gain means an input 1/10 as large will be amplified to 1 Vpk, the ADC’s full-scale range. For this example, a 16-bit ADC is designed to have -100 dB THD for a full-scale signal and quantization noise sets the SNR to around 98 dB (ideal).
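The arithmetic in that paragraph can be checked with a few lines of Python. This is only a sketch: the helper names are mine, and the SNR figure uses the standard ideal-quantizer formula for a full-scale sine wave, 6.02·N + 1.76 dB.

```python
ADC_BITS = 16
FULL_SCALE_VPK = 1.0
GAINS_DB = [0, 20, 40, 60]

def db_to_voltage_ratio(db):
    """Convert a gain in dB to a linear voltage ratio (20 dB -> 10x)."""
    return 10 ** (db / 20)

def ideal_snr_db(bits):
    """Ideal quantization-limited SNR for a full-scale sine wave."""
    return 6.02 * bits + 1.76

# Input level that each gain stage amplifies to exactly full scale.
full_scale_inputs = [FULL_SCALE_VPK / db_to_voltage_ratio(g) for g in GAINS_DB]

if __name__ == "__main__":
    print(full_scale_inputs)
    print(ideal_snr_db(ADC_BITS))
```

The list comes out as 1, 0.1, 0.01 and 0.001 Vpk, and the ideal SNR for 16 bits is a little over 98 dB.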

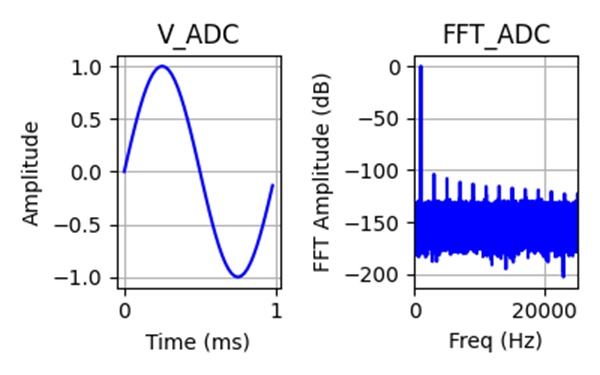

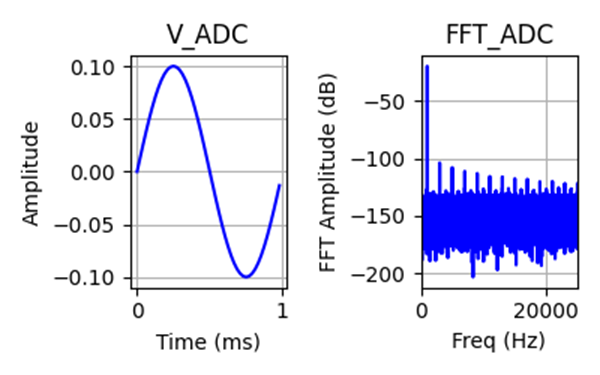

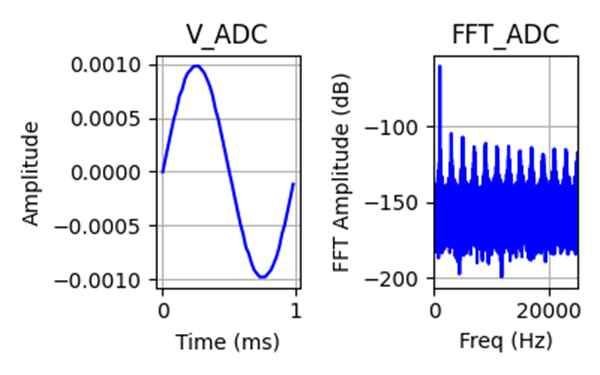

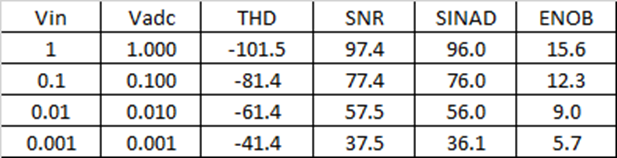

Now apply four signals ranging from full-scale (1 Vpk) down to 1 mVpk (-60 dB relative to full scale) in 20 dB (1/10th) steps and generate the results as if there were a single ADC with no gain stages: no gain switching, no floating-point operation.

As the signal gets smaller, it approaches the noise and distortion floor of the ADC, so the effective number of bits (ENOB) drops. At 1 Vpk, there is only slight reduction in performance from an ideal 16-bit converter, but as the signal gets smaller, the SINAD shows 20 dB drops tracking the signal level, which is reflected in the ENOB the ADC delivers to the system.
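The ENOB numbers can be approximated from the example's specs. The sketch below assumes the 98.08 dB ideal SNR and -100 dB THD combine as uncorrelated powers at full scale, and (as a simplification matching the description above) that the noise-plus-distortion floor stays fixed so SINAD falls dB-for-dB with the signal. A real converter's distortion usually shrinks with level, so treat this as a model of the plots, not of any particular part.

```python
import math

SNR_FS_DB = 6.02 * 16 + 1.76   # ideal 16-bit SNR at full scale, about 98.08 dB
THD_FS_DB = -100.0             # distortion relative to a full-scale signal

def sinad_full_scale_db(snr_db, thd_db):
    """Combine noise and distortion powers (relative to the signal) into SINAD."""
    noise_power = 10 ** (-snr_db / 10)
    distortion_power = 10 ** (thd_db / 10)
    return -10 * math.log10(noise_power + distortion_power)

def enob(sinad_db):
    """Effective number of bits from SINAD."""
    return (sinad_db - 1.76) / 6.02

def single_adc_enob(level_dbfs):
    """ENOB for a signal at level_dbfs, assuming a fixed noise+distortion floor."""
    return enob(sinad_full_scale_db(SNR_FS_DB, THD_FS_DB) + level_dbfs)

if __name__ == "__main__":
    for level in (0, -20, -40, -60):
        print(level, round(single_adc_enob(level), 1))
```

Under these assumptions the full-scale case gives about 15.6 bits and the -60 dB case about 5.7 bits, dropping roughly 3.3 bits per 20 dB step in between.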

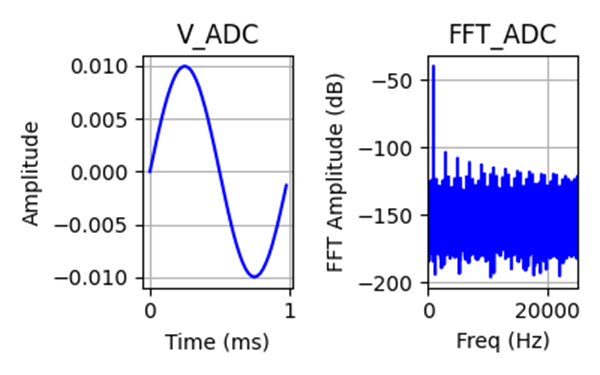

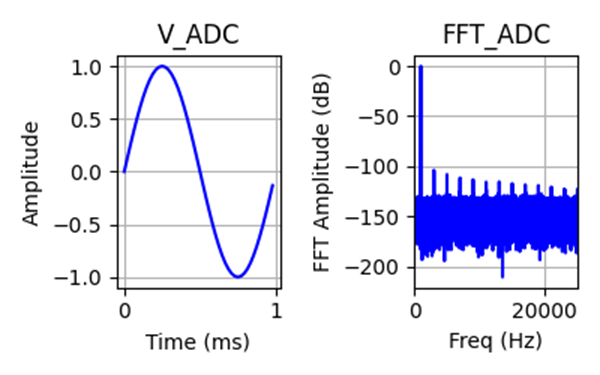

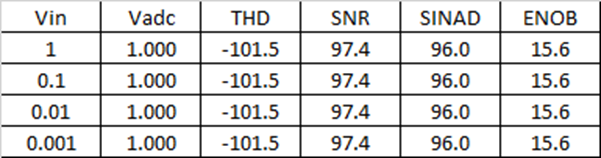

Now, to implement floating-point operation, switch gain as the signal drops, adding 20 dB (10x) gain at each step and thus maintaining full-scale input to the ADC. Not surprisingly, the performance is now the same for each input level, since all the gain stages are ideal (no added noise or distortion). In practice, they will add a little noise and distortion of their own, but the result will still be much better than using a single ADC. I only show one plot since, with the extra gain, the other three are the same.

For these test signals, the floating-point system provides 15.6 bits for each signal, compared to a range from 15.6 down to only 5.7 bits without switching gain before the ADC. If the signal falls between gain ranges, you will lose performance (effective bits) just as with a regular ADC, but since 20 dB is a little over 3 bits (20/6.02 ≈ 3.3), you only lose about 3 bits (from ~16 down to ~13 ENOB) before switching in more gain.

If you exceed the maximum input, the ADC will still clip, so designers may choose full-scale to provide headroom beyond what the input signal can reach (e.g. maximum output from the microphone). At the low end, as the signal drops below 1 mVpk, the number of bits will fall off conventionally, losing about one bit each time the signal drops in half (6 dB). That is, at 0.25 mVpk (1/4 the signal), or 12 dB lower than the minimum that can be amplified to full scale for the ADC, the best the ADC can produce is 14 effective bits (16 – 2 = 14 ENOB).

So, for a range from 1 mVpk to 1 Vpk, we maintain ADC performance of 13 to 16 bits (ideally) using this floating-point system. Beyond 1 Vpk, the system will still clip, and below 1 mVpk the system will lose 1 bit each time the signal falls in half (same as any ADC). In contrast, a single (non-floating-point) 16-bit ADC would range from about 6 to 16 effective bits over that same 1 mVpk to 1 Vpk range.
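To see the whole range at once, here is a small Python sketch comparing the two cases. It assumes ideal gain stages at 0, 20, 40 and 60 dB, 15.6 ENOB at full scale (the figure from the plots), and 6.02 dB per bit; the function names are just for illustration.

```python
GAINS_DB = [0, 20, 40, 60]   # assumed ideal gain stages
ENOB_FULL_SCALE = 15.6       # ENOB at full scale, taken from the example
DB_PER_BIT = 6.02

def enob_single(level_dbfs):
    """Single ADC, no gain switching: lose one bit per ~6 dB below full scale."""
    return ENOB_FULL_SCALE + level_dbfs / DB_PER_BIT

def enob_floating(level_dbfs):
    """Floating-point system: apply the largest gain that does not clip."""
    usable = [g for g in GAINS_DB if level_dbfs + g <= 0]
    gain = max(usable) if usable else 0   # above full scale the ADC just clips
    return ENOB_FULL_SCALE + (level_dbfs + gain) / DB_PER_BIT

if __name__ == "__main__":
    for level in (0, -10, -19.9, -20, -40, -60, -72):
        print(level, round(enob_single(level), 1), round(enob_floating(level), 1))
```

With exact 6.02 dB/bit arithmetic, the worst case just before a gain switch comes out nearer 12.3 bits than 13, which is the "about 3 bits" rounding in the text. At -72 dB (0.25 mVpk) the floating-point system is 2 bits below its full-scale figure, while the single ADC is already about 12 bits down.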

HTH – Don
