> For IEMs, @jaakkopasanen does not even use the 2019v2IE Harman target, but lowers the bass by 2 dB. I don't know why, but this 1) obviously distorts the compliance score and 2) has no objective justification. The region is also limited to 40 Hz to 10 kHz. So when an IEM has weak sub-bass or extremely unsmooth and spiky treble above 10 kHz (which many have, for example the Chu, but not the Variations or the Truthear Nova), this is simply neglected. In my view, by doing this, with none of it clearly stated but only extractable from the Python source code, he is doing a big disservice to the research.

Misinformation on many levels. First, the preference scores on the AutoEQ Ranking page reference Harman IE 2019 for IEMs, nothing else. Only the separate EQ presets use a lightly modified Harman target, which is fine. Automatic EQ doesn't become more reliable just for adhering to a particular target in this context. This makes your erroneous remark about the frequency range irrelevant. In fact, 10 kHz+ was never on the table for 711 couplers per IEC standards and manufacturer specs, which is why Sean Olive's model didn't consider it. So AutoEQ would be doing it incorrectly if they changed it to what you want.


Countering misinformation about AutoEQ

- Thread starter markanini

- Start date

MacClintock

Addicted to Fun and Learning

- Joined

- May 24, 2023

- Messages

- 529

- Likes

- 968

> Misinformation on many levels. First, the preference scores on the AutoEQ Ranking page are referencing Harman IE 2019 for IEMs, nothing else.

Not true. Misinformation is what you are spreading. It is NOT the 2019v2IE Harman target; the bass is reduced by a shelf of about 2 dB. Educate yourself.

The IEM Harman Target 2019 sounds "off" to me. Is it just me?

I had some responses in mind, but I'm checking out of this argument and leaving this here: Back on topic, is the Harman target used in AutoEQ even correct? One outcome of the above exchange is that, in looking at the Truthear Zero on AutoEQ, I noticed it overshoots almost the entire bass shelf...

audiosciencereview.com

> Only the separate EQ presets are using a lightly modified Harman target, which is fine.

See above.

> Automatic EQ doesn't become more reliable just for adhering to a particular target in this context. This makes your erroneous remark about the frequency range irrelevant, in fact 10kHz+ was never on the table for 711 couplers per IEC standards and manufacturer specs, that's why Sean Olive's model didn't consider 10 kHz+. So AutoEQ would be doing it incorrectly if they changed it to what you want.

Even if the region above 10 kHz is not considered by the score (which is not from Sean Olive, only from @jaakkopasanen), it is still a relevant region. Thus, if an IEM measures well below 10 kHz but has many spikes or holes in the FR above it, it is still bad and not very Harman compliant.
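To make the band-limit point concrete, here is a minimal sketch with entirely made-up data. It assumes the score is driven by the standard deviation of the error (measurement minus target) inside a fixed band; the 40 Hz to 10 kHz limits are the ones mentioned in this thread, and the helper names `log_spaced` and `band_error_std` are hypothetical, not taken from AutoEq. It only illustrates that deviations outside the band cannot move a band-limited statistic; it says nothing about whether coupler data above 10 kHz is trustworthy.

```python
import math
import statistics


def log_spaced(f_min, f_max, points_per_octave=12):
    """Frequencies from f_min up to (at most) f_max, evenly spaced on a log axis."""
    n = int(math.log2(f_max / f_min) * points_per_octave) + 1
    return [f_min * 2 ** (i / points_per_octave) for i in range(n)]


def band_error_std(freqs, error_db, f_lo, f_hi):
    """Population standard deviation (dB) of the error inside [f_lo, f_hi]."""
    in_band = [e for f, e in zip(freqs, error_db) if f_lo <= f <= f_hi]
    return statistics.pstdev(in_band)


freqs = log_spaced(20.0, 20000.0)

# Made-up error curve: perfectly on target up to 10 kHz,
# then alternating +6 dB / -6 dB spikes above 10 kHz.
error_db = [
    0.0 if f <= 10000.0 else (6.0 if i % 2 else -6.0)
    for i, f in enumerate(freqs)
]

std_scored = band_error_std(freqs, error_db, 40.0, 10000.0)  # band used for scoring
std_full = band_error_std(freqs, error_db, 20.0, 20000.0)    # whole audio band

print(f"error std, 40 Hz to 10 kHz: {std_scored:.2f} dB")
print(f"error std, 20 Hz to 20 kHz: {std_full:.2f} dB")
```

In this toy case the band-limited deviation is zero while the full-band deviation is not, which is the effect being argued about in both directions above.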


I don't understand the problem. AutoEQ is fully customizable to produce EQs of any number of PEQs conforming to any target you like. If you don't like the defaults then make your own correction! Any software has to come with some default settings as a demonstration of its capabilities but they should only be considered a starting point for your own work ...

jaakkopasanen

Member

- Joined

- Jul 12, 2020

- Messages

- 87

- Likes

- 344

> Not true. Misinformation is what you are spreading. It is NOT the 2019v2IE Harman target; the bass is reduced by a shelf of about 2 dB. Educate yourself.

The scoring function is using the original targets, unmodified.


AutoEq/dbtools/update_result_indexes.py at master · jaakkopasanen/AutoEq

Automatic headphone equalization from frequency responses - jaakkopasanen/AutoEq

MacClintock


> The scoring function is using the original targets, unmodified.

Ok, thanks for clarifying this. None of it is clearly stated on GitHub or anywhere else, and having two different targets is confusing and not justified. Furthermore, the scoring function is limited to the region between 40 Hz and 10 kHz, which is why many IEMs with terrible treble (like the Chu) are up there in the rating. Also, the Truthear Zero:Red is rated much lower in this scoring, but has a higher or equally high rating compared with the Chu and the Salnotes Zero in the oratory measurements.


As far as headphones are concerned, at the top there are:

| Headphone | Score | STD (dB) | Slope |
| --- | --- | --- | --- |
| Mark Levinson No 5909 | 99 | 1.14 | 0.06 |
| Sennheiser HE 1 Orpheus 2 | 99 | 1.18 | -0.02 |
| Dyson Zone | 96 | 1.45 | 0.01 |
| Shure SRH440 | 96 | 1.31 | 0.11 |
| HIFIMAN Sundara (post-2020 earpads) | 95 | 1.54 | -0.01 |
| Philips Fidelio X2HR | | | |
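For readers wondering how the numbers in these rows relate: the three values per headphone appear to be the preference score, the standard deviation of the error from the target (dB), and the slope of that error. A rough sketch, assuming the score is the over-ear model from the Olive et al. research with the rounded coefficients 114.49, 12.62 and 15.52 (these coefficients are recalled from the published model, not stated anywhere in this thread, and `harman_over_ear_score` is a hypothetical name, not AutoEq's function), reproduces the listed scores from the other two columns:

```python
def harman_over_ear_score(std_db, slope):
    """Predicted preference rating from the error's standard deviation and slope.

    Coefficients are an assumption (rounded values of the published over-ear
    model); they are not quoted in this thread.
    """
    return 114.49 - 12.62 * std_db - 15.52 * abs(slope)


# (name, listed score, STD in dB, slope) copied from the rows above.
rows = [
    ("Mark Levinson No 5909", 99, 1.14, 0.06),
    ("Sennheiser HE 1 Orpheus 2", 99, 1.18, -0.02),
    ("Dyson Zone", 96, 1.45, 0.01),
    ("Shure SRH440", 96, 1.31, 0.11),
    ("HIFIMAN Sundara (post-2020 earpads)", 95, 1.54, -0.01),
]

for name, listed, std_db, slope in rows:
    score = harman_over_ear_score(std_db, slope)
    print(f"{name}: computed {score:.1f}, listed {listed}")
```

Note that only two properties of the frequency response error enter this formula. Distortion, channel matching, group delay and impedance are not inputs, which is the limitation both sides of the argument here acknowledge.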

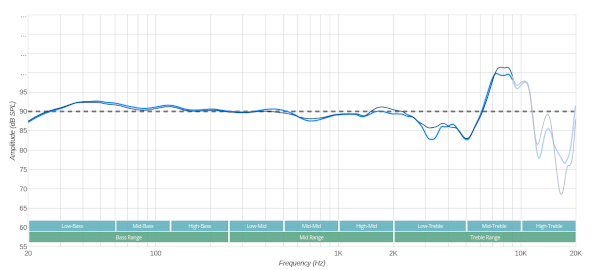

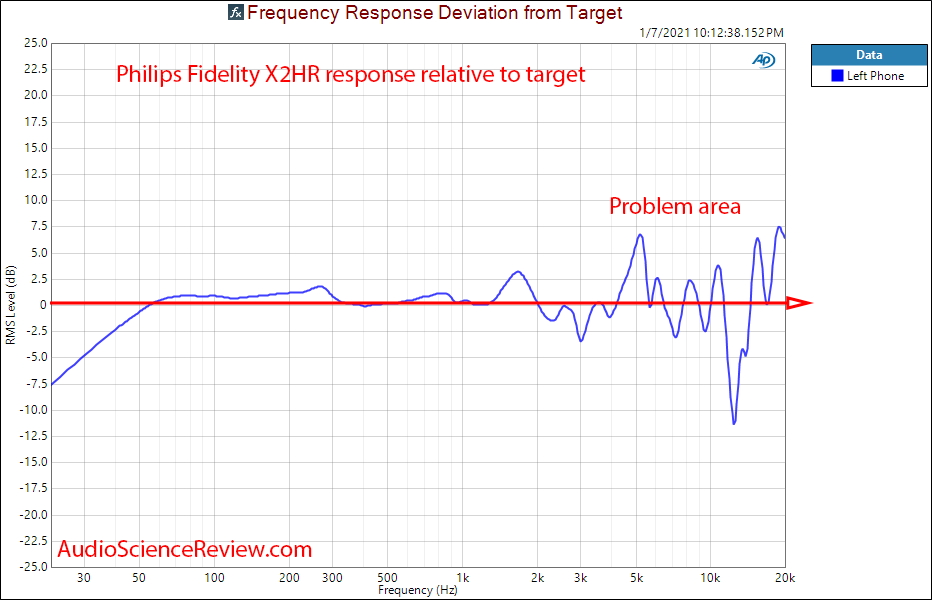

Anybody taking this seriously has lost his mind. Besides the HE1, all these headphones are mediocre or just good (Sundara, SRH440, 5909), if not plain trash. For example, according to Amir's measurements the Fidelio has high distortion, treble peaks, channel imbalance, strange group delay, and variable impedance, and cannot even be EQed properly. Yet it is 6th according to this "scoring". I know it is only meant to rate the tonal balance, but after seeing something like this, one should doubt the validity of this approach altogether.


- Joined

- Mar 29, 2021

- Messages

- 2,409

- Likes

- 4,165

> Anybody taking this seriously has lost his mind [...]

I'd think Sean Olive would take this seriously, as he is the lead author of this research. Do you think he lost his mind?

HarmonicTHD

Major Contributor

- Joined

- Mar 18, 2022

- Messages

- 3,326

- Likes

- 4,836

> Ok, thanks for clarifying this, as all of it is not clearly stated on the github or somewhere else [...] For example the Fidelio has according to Amir's measurements high distortion, treble peaks, channel imbalance, strange group delay, variable impedance and cannot even be EQed properly. [...]

It clearly states what it does: compliance with the Harman target, no more, no less.

You are now adding other characteristics which of course are not considered in the score, as clearly stated. Yes, those other characteristics are as important, and that’s why no one knowledgeable will select headphones on Harman target compliance alone, especially as one can EQ to it.

jaakkopasanen


> Ok, thanks for clarifying this, as all of it is not clearly stated on the github or somewhere else and having two different targets is confusing and not justified. [...]

I simply implemented the preference prediction score as it is defined in the research. I won't comment too much about the validity and completeness of the research, but the score does correlate very well with double blind listening tests.

> I know, it is only meant to rate the tonal balance, but after seeing something like this, one should doubt the validity of this approach altogether.

It seems to me that you are free to do exactly that.

MacClintock


> I simply implemented the preference prediction score as it is defined in the research.

That actually seems to be the problem. People see it and take it for granted, thinking it really has predictive power for the sound quality of a headphone, which it doesn't.

> I won't comment too much about the validity and completeness of the research, but the score does correlate very well with double blind listening tests.

Could you please show me a publication where the Dyson Zone and the Fidelio X2HR perform that well? Or are you just talking about some virtual headphones that were EQed to their FR? Because that way you would neglect high distortion, treble peaks, channel imbalance, strange group delay, variable impedance, and the fact that some of these cannot be EQed properly.

solderdude

Grand Contributor

Dyson:

aside from the huge (+10dB) treble peak (in the 'sharpness' frequency band) it seems to have an otherwise excellent tonality.

X2HR (to me) with a little help in the treble and bass lowered a bit sounded quite good to me.

tonality wise, overall and smoothed, this seems to be the correct tonality.

But yes... sounds nothing like the HE1 and Sundara is bass shy compared to the others.

And I agree... the ratings, based on some numbers on tonal balance measured on a specific fixture, are not related to sound quality but have some relation to tonality.


MacClintock


> It clearly states what it does: compliance with the Harman target, no more, no less.
>
> You are now adding other characteristics which of course are not considered in the score, as clearly stated. Yes, those other characteristics are as important, and that’s why no one knowledgeable

Unfortunately, not everybody is knowledgeable.

> will select headphones on Harman target compliance alone, especially as one can EQ to it.

Not every headphone can be EQed easily; see the Fidelio example.

MacClintock


> And I agree... the ratings, based on some numbers on tonal balance measured on a specific fixture, are not related to sound quality but have some relation to tonality.

Thank you, this is exactly my point. In fact, in many cases they bear just some "faint resemblance" to sound quality.

MacClintock


> I'd think Sean Olive would take this seriously, as he is the lead author of this research. Do you think he lost his mind?

Sean Olive, whose research I hold in high esteem, is well aware of the limits of the scoring system, especially if it is taken as a pure indicator of sound quality. Or do you think he walks around with the Dyson Zone, sometimes replacing it with the Fidelio and the Chu?


- Joined

- Mar 29, 2021

- Messages

- 2,409

- Likes

- 4,165

> Sean Olive, whose research I hold in high esteem, is well aware of the limits of the scoring system, especially if it is taken as a pure indicator of sound quality. Or do you think he walks around with the Dyson Zone, sometimes replacing it with the Fidelio and the Chu?

The apparent absurdity of that mental image demonstrates nothing other than the fact that, instead of making an actual statement, you are just resorting to argumentum ad absurdum.

Do you know what are the limitations of the scoring system?

And enlighten us please, how does "group delay" correlate with preference?

And what exactly is the effect of "high distortion"?

Tell me please, which of those headphones that score 95 and higher in the predictive scoring system have such high distortion that they are objectively not preferable?

With sources if possible please.


MacClintock


> The apparent absurdity of that mental image demonstrates nothing other than the fact that, instead of making an actual statement, you are just resorting to argumentum ad absurdum.
>
> Do you know what are the limitations of the scoring system?

I gave you the arguments, all above in previous postings.

> And enlighten us please, how does "group delay" correlate with preference?

Messy group delay may cause resonances, polarity issues, strange soundstage, etc.

> And what exactly is the effect of "high distortion"?

Just do a listening test, if you really don't know the basics of hifi.

> Tell me please, which of those headphones that score 95 and higher in the predictive scoring system have such high distortion that they are objectively not preferable?
>
> With sources if possible please.

As I mentioned earlier, the Fidelio, ranked no. 6, has quite high distortion and Amir didn't like it at all, even after EQ. He also did not like the no. 1, the 5909. What more is needed to discredit this mindless use of the ranking system?

- Joined

- Mar 29, 2021

- Messages

- 2,409

- Likes

- 4,165

> As I mentioned earlier, the Fidelio, ranked no. 6, has quite high distortion and Amir didn't like it at all, even after EQ. He also did not like the no. 1, the 5909. What more is needed to discredit this mindless use of the ranking system?

That is it?

The basis of your strong criticism of the research, going as far as referring to people who take it seriously as having lost their minds, is "Amir did not like it" - is that it?

I'll tell you what's more needed: some actual, objective proof.

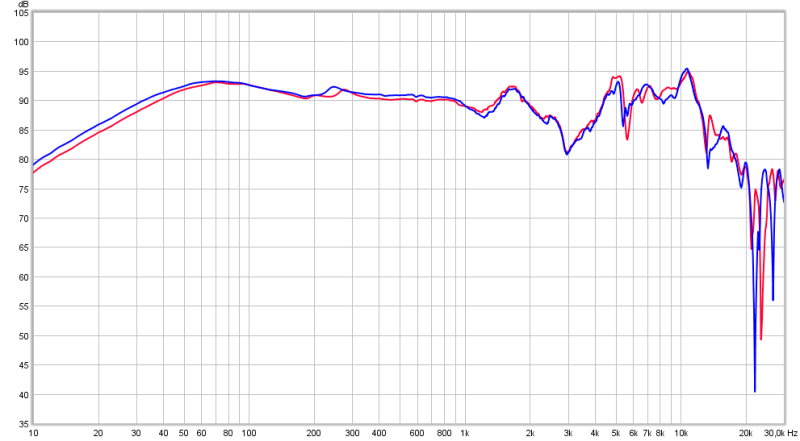

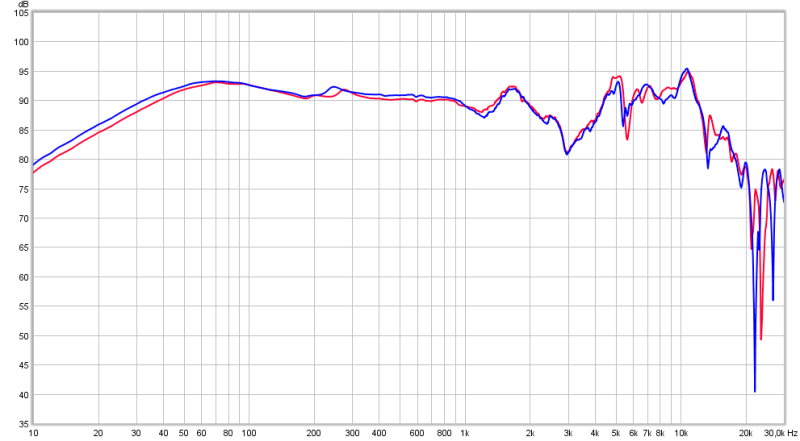

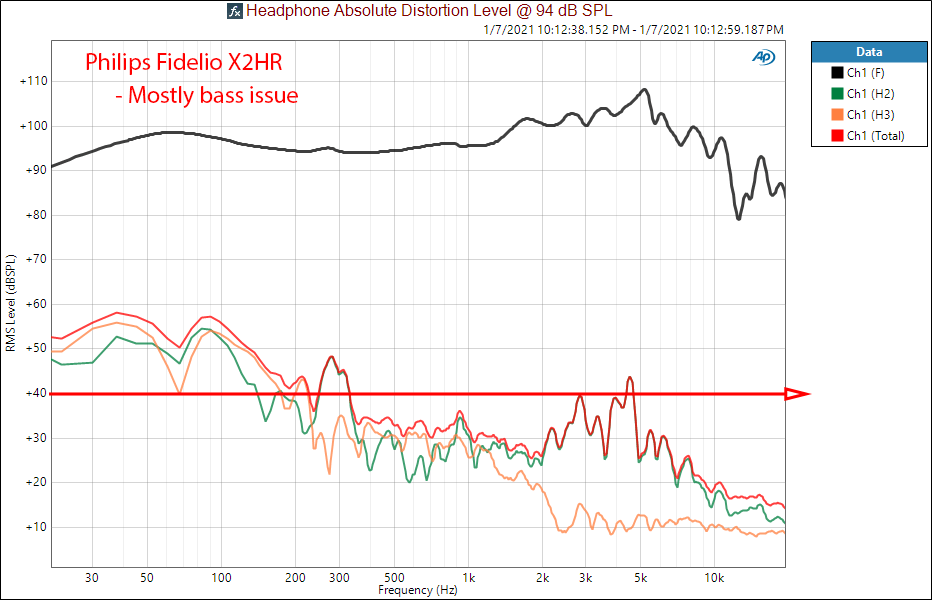

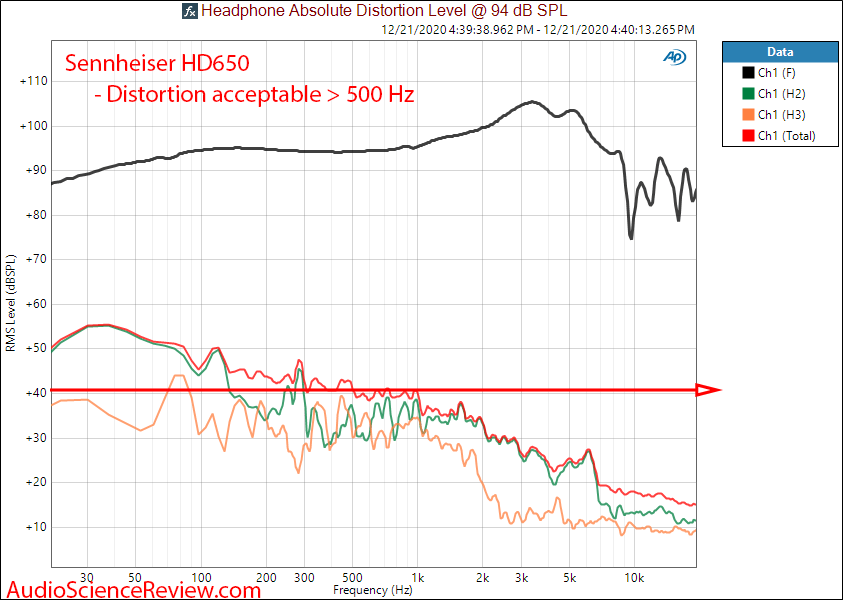

You said high distortion. Here are the distortion level graphs for Fidelio and HD650 side by side. What makes HD650's distortion acceptable and Fidelio's "high"?

The big EQ will be in the bass, where the Fidelio has much better extension, down to 50 Hz, compared to the HD650, whose response starts to droop off at around 100 Hz. As for the little bump at around 5 kHz, there is no real reason to think it is audible, but it will be toned down by the EQ anyway. The same goes for the distortion in the 270 Hz range. So I'd argue that the Fidelio EQ'ed to target will have less distortion than the HD650 EQ'ed to target. Do you disagree?

You said messy group delay. Here are the GD graphs for the Fidelio and the HD650 side by side. Which one is messier, and how does it affect sound quality or preference? Explain please.

If there is more, do explain. What makes Fidelio objectively a bad headphone, please, treat me as a total novice and spell it all out for me.


MacClintock


> That is it?
>
> The basis of your strong criticism of the research, going as far as referring to people who take it seriously as having lost their minds, is "Amir did not like it" - is that it?

He did not like it for reasons, and it is for these reasons that the Fidelio is objectively a bad or at best mediocre headphone.

In contrast to the HD 650, the Fidelio also has a series of resonance peaks in the treble, which are generally almost impossible to EQ properly.

> I'll tell you what's more needed: some actual, objective proof.
>
> You said high distortion. Here are the distortion level graphs for Fidelio and HD650 side by side. What makes HD650's distortion acceptable and Fidelio's "high"?
>
> Big EQ will be on the bass where Fidelio has a lot better extension down to 50Hz compared to HD650 which has a response that starts to droop off at around 100Hz. And the little bump at around 5K, no real reason to think it is audible, but it will be toned down in the EQ anyway. Same for distortion at 270Hz range. So I'd argue that Fidelio EQ'ed to target will have less distortion than HD650 EQ'ed to target. Do you disagree?
>
> If there is more, do explain. What makes Fidelio objectively a bad headphone, please,

Yes, distortion is overall higher on the Fidelio, just look at the graphs, especially in the bass where both need a boost.

It's funny how you selectively post some graphs. The others, which don't support your claim, you discard, namely the gross channel imbalance (2-4 dB over a wide frequency range), the spiky resonance peaks in the FR, and the non-constant impedance. The first makes for an unpleasant listening experience in general, and the latter makes it impossible to accurately EQ to a given target, exactly what Amir experienced.

> treat me as a total novice and spell it all out for me.

That is not difficult as you seem to be one.


@MacClintock, what's your beef with: the ranking table or the people who treat it like gospel? From the above posts you seem completely aware of the limitations of the statistical model, so I'm not sure why you're so bothered that some headphones you don't like appear at the top. Not sure what you're suggesting Jaakko do instead. The fact that some people aren't knowledgeable enough to make proper use of tools/info/etc. is unfortunately an issue in all walks of life.

- Joined

- Oct 25, 2019

- Messages

- 11,112

- Likes

- 14,777

> @MacClintock, what's your beef with: the ranking table or the people who treat it like gospel? From the above posts you seem completely aware of the limitations of the statistical model, so I'm not sure why you're so bothered that some headphones you don't like appear at the top. Not sure what you're suggesting Jaakko do instead. The fact that some people aren't knowledgeable enough to make proper use of tools/info/etc. is unfortunately an issue in all walks of life.

Suspect it's both. If the table (or indeed the scores Oratory puts on his PDFs) didn't exist, then people wouldn't run around quoting scores as if they meant any more than how likely a headphone's stock tonality is to be "liked" by the largest number of people. TBH, I think consumers can get more value from the rankings by using them to rule out poor-sounding headphones from their purchasing decisions than by assuming the ones at the top of the list are automatically the ones to look at.

I reckon the scoring has considerably more value for manufacturers, especially if they measure and calculate during the design/ proto phase.
