There are quite a few very tech-savvy people here on ASR, so I'm copying my post from another forum here for feedback and recommendations. Apologies if you have already seen this elsewhere.

The goal of the experiment is to let others hear what my speaker system sounds like in my listening room. The idea is to capture the impulse response (IR) at my listening position, so that anyone can apply my captured IR files to their own music playback. To avoid layering your own system/room response on top of mine, the listening should be done on quality headphones. Here are the details:

My measured impulse response files are linked below. Please try applying them to your headphone playback with a convolution engine and let me know what you think. I recommend not applying cross-feed initially, as that's how I listen. See if this helps you visualize/hear into my listening environment. I'm very curious about your feedback!
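For anyone without a convolution engine handy, here is a minimal sketch of what the engine does with these files, written with NumPy. It is not how HQPlayer works internally, just the basic operation: the left channel is convolved with the left IR and the right channel with the right IR, with no cross-feed between them. The function names `fft_convolve` and `apply_room_ir` are made up for this example, and it assumes the music and both IRs are already loaded as float arrays at the same sample rate (resample first if they differ).

```python
import numpy as np


def fft_convolve(signal, ir):
    """Linear convolution of two 1-D arrays, computed via the FFT."""
    n = len(signal) + len(ir) - 1          # length of the full convolution
    nfft = 1 << (n - 1).bit_length()       # next power of two >= n
    spectrum = np.fft.rfft(signal, nfft) * np.fft.rfft(ir, nfft)
    return np.fft.irfft(spectrum, nfft)[:n]


def apply_room_ir(stereo, ir_left, ir_right):
    """Apply a per-channel room IR to stereo audio, without cross-feed.

    stereo:   float array of shape (num_samples, 2), columns = left, right
    ir_left:  1-D float array, IR for the left channel
    ir_right: 1-D float array, IR for the right channel
    (Assumption: all three share the same sample rate.)
    """
    n_out = stereo.shape[0] + max(len(ir_left), len(ir_right)) - 1
    out = np.zeros((n_out, 2))

    left = fft_convolve(stereo[:, 0], ir_left)
    right = fft_convolve(stereo[:, 1], ir_right)
    out[:len(left), 0] = left
    out[:len(right), 1] = right

    # Scale down only if the result would clip in a [-1, 1] float format.
    peak = np.max(np.abs(out))
    if peak > 1.0:
        out /= peak
    return out
```

The output is longer than the input by the IR length minus one sample, which is the reverberant tail of the room ringing out after the music stops.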

The IR was captured at my listening position by playing a sine sweep from REW and recording it with a calibrated Behringer mic. REW was also used to create the IR files. The room is my basement: somewhat cluttered, with irregular wall/door openings but no windows. The floor is thinly carpeted cement. The speakers are about 5 m from the listening position, with about 4 m between them and very slight toe-in. The hung ceiling is about 8.5 ft high. Speakers for this test were PSB Stratus Gold, and headphones were HE-560.

To me, the sound is much richer and more 3-D with the IR applied than without it. It's a bit bass-heavy for my taste, but not excessively so. Tonal quality is very similar to my speaker playback, and I hear a lot of the spatial dimensions that must be part of the reverberant space in my listening room. Whether or not this accurately represents my room is hard to say, but I find that the sound is much closer to the in-room quality than without the IR "correction".

Actual IR files:

Left IR

Right IR

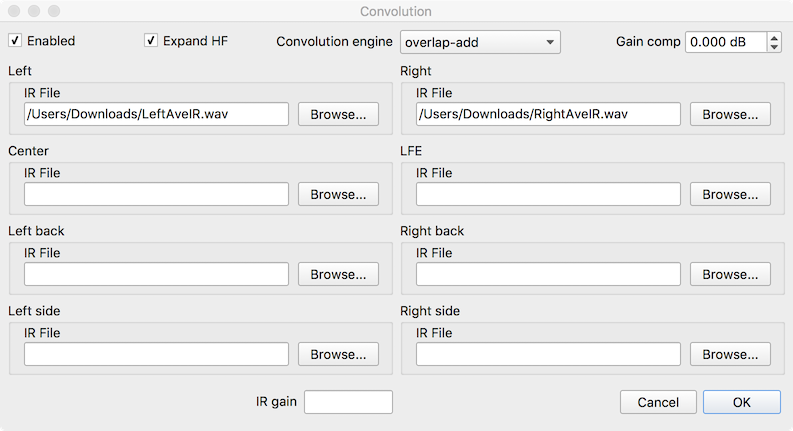

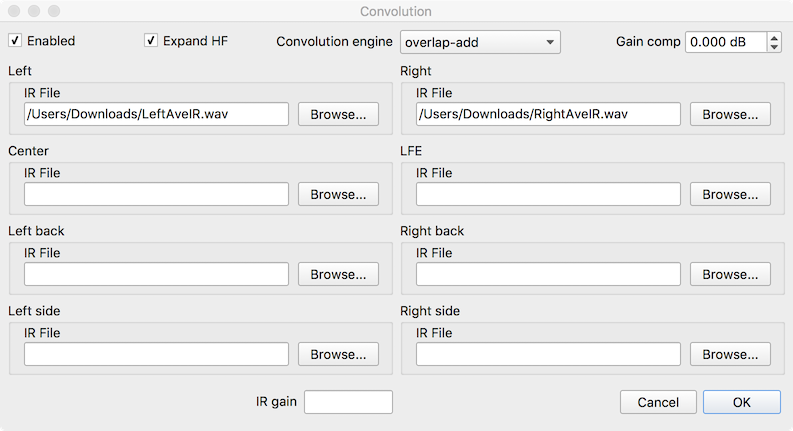

Here's my HQPlayer convolver configuration:

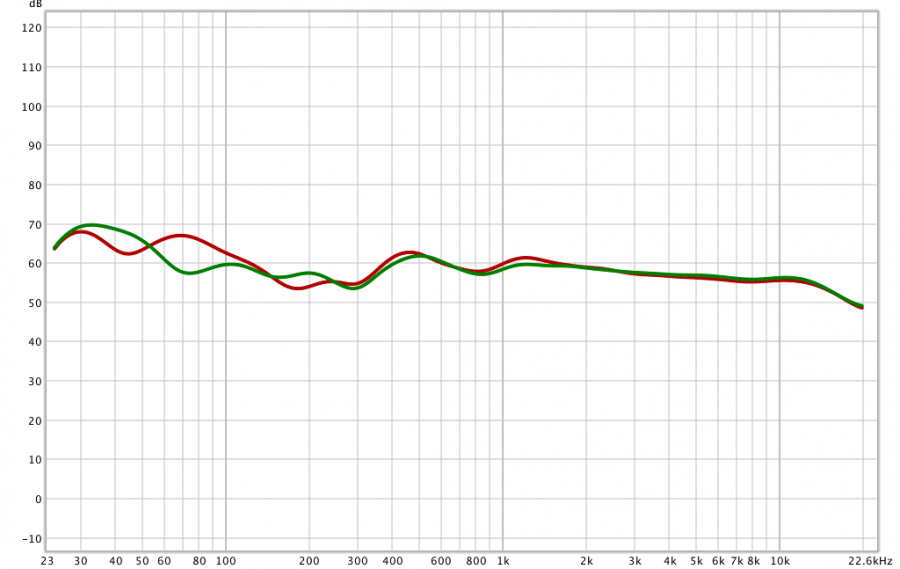

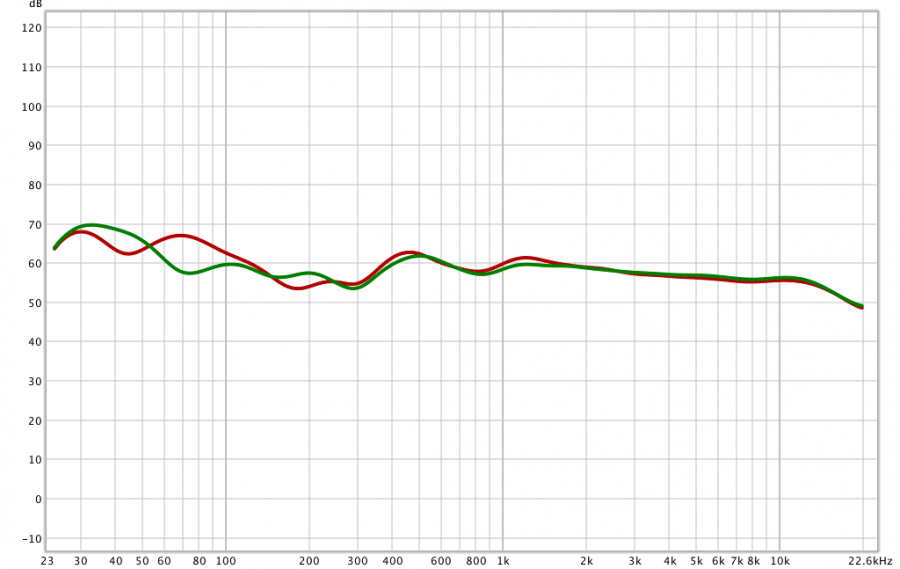

Measured response (left is red):

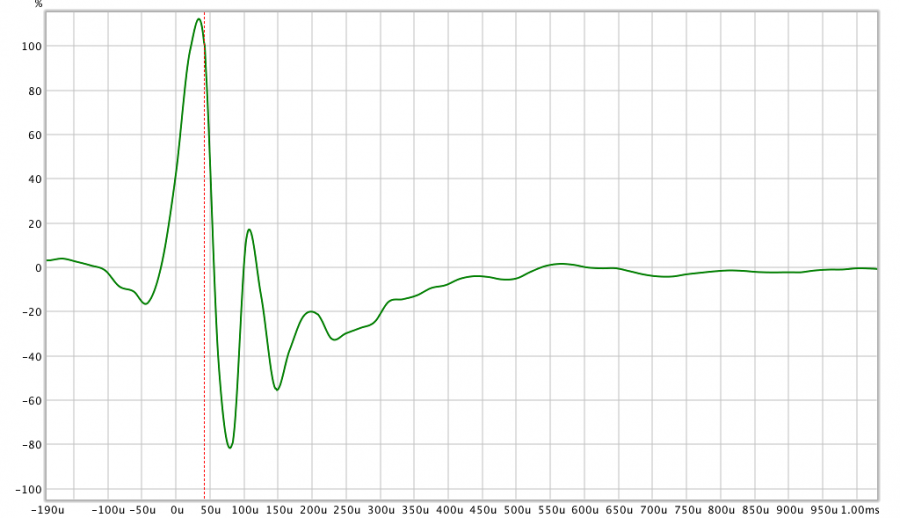

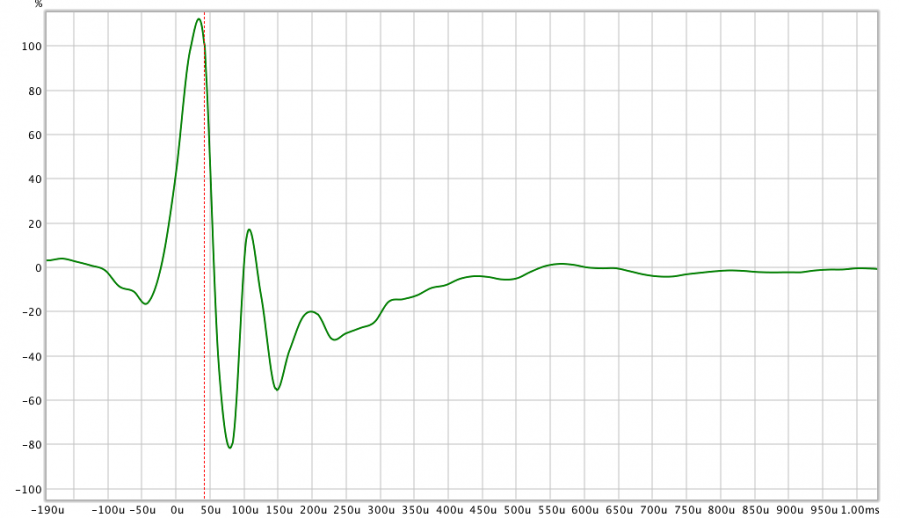

IR (left):

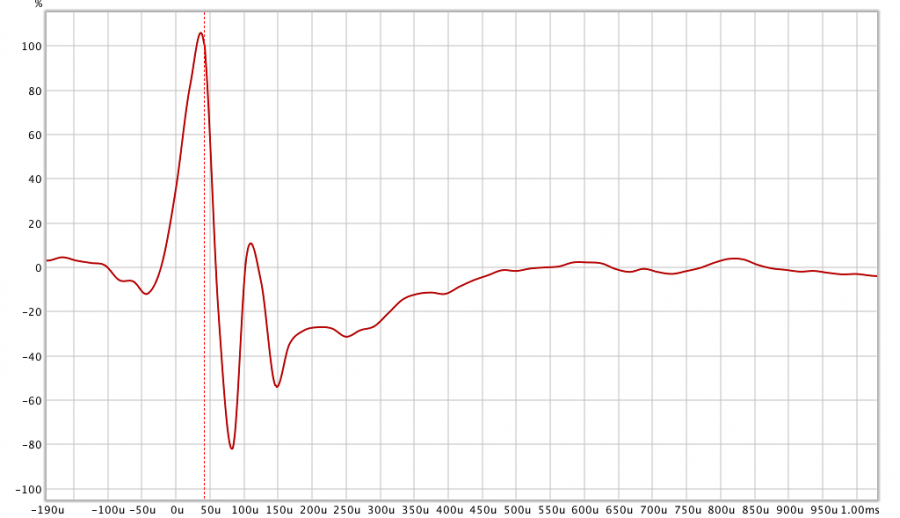

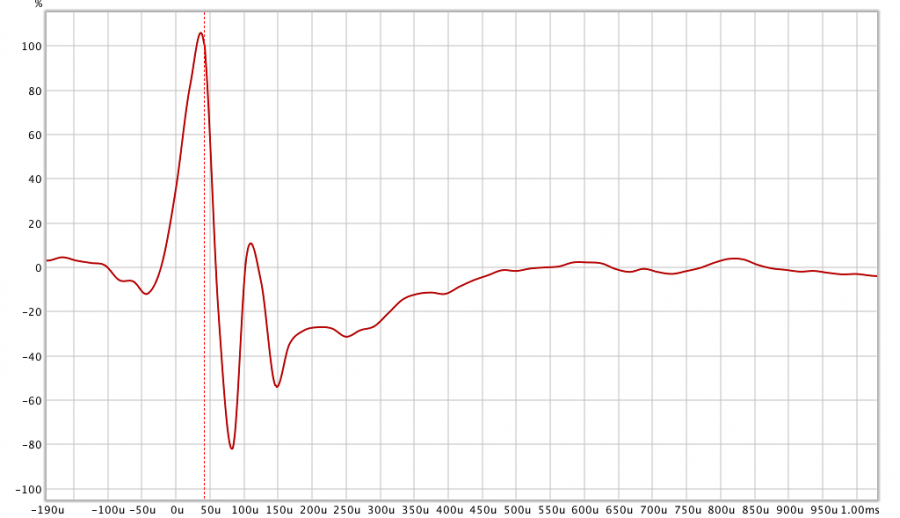

IR (right):
