Can YOU tell the difference between FLAC & MP3? - Part 2

- Thread starter audiofilet


OP

audiofilet

Member

- Joined: Sep 9, 2021

- Messages: 79

- Likes: 38

- Thread Starter

- #22

Huge Deep Purple fan here, that's an automatic Like.

Kudos for testing this subject again. Testing will be hugely dependent on the kind of music/track you're using. Classical music does not stress the MP3 encoder much. Try distorted/non-linear heavy guitar music. You might notice a difference.

My go-to test track is "Hush" by Deep Purple, with its opening "drummer" section played on guitar.

Yeah, I definitely preferred the jazz track by Holly Cole over the piano track.

I play the violin myself so it was easy for me to recognize the notes and compare them.

I'm currently repeating the test with AAC. Any other music suggestions?

Sgt. Ear Ache

Major Contributor

Here's another suggestion for a test...

Step 1 - place the 320 kb/s files in one folder, and the high-res files in another.

Step 2 - don't listen to anything for a few hours.

Step 3 - have someone cue up one or the other folder for you so you don't know which one you are hearing.

Step 4 - listen to the music in the folder and ID it as either MP3 or high-res.

Step 5 - repeat the test the next day.

Do that for a couple of weeks, and at the end see how often you got it right.
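The bookkeeping for that protocol is easy to script. A minimal sketch, assuming the helper lets a seeded random generator (rather than habit) decide which folder to cue each day; the function names and the "mp3"/"hires" labels are hypothetical:

```python
import random

def make_schedule(days, seed=None):
    """Randomly pick which folder ("mp3" or "hires") the helper cues up on each day."""
    rng = random.Random(seed)
    return [rng.choice(["mp3", "hires"]) for _ in range(days)]

def score(schedule, answers):
    """Count the days on which the listener's ID matched what was actually played."""
    if len(schedule) != len(answers):
        raise ValueError("need exactly one answer per day")
    return sum(played == guess for played, guess in zip(schedule, answers))

# Two weeks of testing; these answers are made up purely for illustration.
schedule = make_schedule(14, seed=1)
answers = ["mp3", "hires"] * 7
print(score(schedule, answers), "of", len(schedule), "correct")
```

Each day is drawn independently, so the two formats won't necessarily come up equally often; that is deliberate, since a fixed alternating pattern could be guessed.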

audio2design

Major Contributor

- Joined: Nov 29, 2020

- Messages: 1,769

- Likes: 1,864

Experiment: ABX Test to determine whether I can detect an audible difference between 192 kHz/24-bit FLAC & 320 kb/s MP3

The Sundaras were awesome. That was around the time the Liquid Spark died, so I couldn't test them with it. I used oratory1990's optimal Harman parametric EQ settings. Same issue regarding open-back, but I felt pretty confident.

What equipment was used to level match and at what frequency?
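For reference, level matching boils down to equalizing the loudness of the two versions before comparing them. A rough sketch, assuming both files have already been decoded to float samples at the same rate and time-aligned; it matches by broadband RMS, which is only one possible choice and not necessarily what was used in this test, and the 1 kHz test tone is a hypothetical example signal:

```python
import math

def rms(samples):
    """Root-mean-square level of a sequence of float samples (full scale = 1.0)."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def level_difference_db(a, b):
    """How many dB louder clip a is than clip b, by RMS."""
    return 20 * math.log10(rms(a) / rms(b))

def matched_gain(a, b):
    """Linear gain to apply to clip b so its RMS level equals clip a's."""
    return rms(a) / rms(b)

# Hypothetical check: one second of a 1 kHz tone at 48 kHz, and the same tone at half amplitude.
fs, f = 48000, 1000
tone = [math.sin(2 * math.pi * f * n / fs) for n in range(fs)]
quieter = [0.5 * x for x in tone]
print(round(level_difference_db(tone, quieter), 2))  # 6.02
```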

OP

audiofilet

Member

- Joined: Sep 9, 2021

- Messages: 79

- Likes: 38

- Thread Starter

- #25

Yeah, that's exactly what the ABX test does: you play either Song X or Song Y and have to determine whether it's the lossless or the compressed one. Actually, it uses A, B and X, Y.

Here's another suggestion for a test...

Step 1 - place the 320kb files in one folder, and the high res files in another.

Step 2 - don't listen to anything for a few hours.

Step 3 - have someone cue up one or the other folders for you so you don't know which it is you are hearing.

Step 4 - listen to the music in the folder and ID it as either mp3 or high res.

Step 5 - repeat the test the next day

Do that for a couple weeks and at the end see how often you got it right.

audio2design

Major Contributor

- Joined: Nov 29, 2020

- Messages: 1,769

- Likes: 1,864

It's questionable whether ABX is the best method for MP3/FLAC detection. ABX testing is the preferred method for testing for an unknown difference. That is not actually what you are testing for: you are testing for a known difference (at least for a trained listener). A simple classifier test (paired comparison), i.e. putting A and B into the proper bins, is adequate and likely superior, as there is less temporal distance between comparisons, depending on how you did your ABX (i.e. was X fixed, and were you allowed to repeatedly compare A and B to X until you were confident in your determination?).

If you are simply playing 30 seconds of A, 30 seconds of B, then 30 seconds of X and trying to pick correctly, then you are probably testing YOUR ability to know how an MP3 may differ (an ability that may be low) more than whether there is a detectable audible difference. User-controlled paired comparison (typical on the Internet: "can you pick the MP3?") and user-controlled ABX, where X is fixed and A or B can be rapidly compared under user control, will have far better discriminating capability than a basic "play A, play B, play X, pick which is X" test with untrained testers who don't know what they are looking for.

You have illustrated that, under casual listening, it is difficult to discriminate.

ABX is a formal test method, but one needs to understand how to apply it and what it is measuring.

audio2design

Major Contributor

- Joined: Nov 29, 2020

- Messages: 1,769

- Likes: 1,864

Yeah, that's exactly what the ABX test does: you play either Song X or Song Y and have to determine whether it's the lossless or the compressed one. Actually, it uses A, B and X, Y.

That's not how ABX works. In ABX, A, B and X tracks are played and you have to indicate whether X matches A or B. You wouldn't know which is the compressed or uncompressed one.
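To make the distinction concrete, here is a small simulation of the ABX procedure as described: A and B are labelled, X is secretly one of them, and the listener only has to say which one X matches. The `hear_prob` parameter (the chance a listener genuinely hears the difference on a given trial, guessing otherwise) is a hypothetical modelling assumption, not something measured in this thread:

```python
import random

def run_abx(n_trials, hear_prob, seed=None):
    """Simulate n ABX trials and return how many were answered correctly.

    On each trial X is secretly A or B. With probability hear_prob the
    (hypothetical) listener genuinely hears which one X matches; otherwise
    they guess 50/50.
    """
    rng = random.Random(seed)
    correct = 0
    for _ in range(n_trials):
        x_matches = rng.choice("AB")       # hidden identity of X
        if rng.random() < hear_prob:
            answer = x_matches             # difference actually heard
        else:
            answer = rng.choice("AB")      # pure guess
        correct += (answer == x_matches)
    return correct

# A pure guesser versus a listener who hears the difference on half the trials.
print(run_abx(40, 0.0, seed=7), "/ 40")
print(run_abx(40, 0.5, seed=7), "/ 40")
```

Under this model the expected score is hear_prob + (1 - hear_prob)/2, so a listener who truly hears the difference on half the trials should average about 75% correct, while a pure guesser hovers around 50%.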

Sgt. Ear Ache

Major Contributor

Yeah, that's exactly what the ABX test does: you play either Song X or Song Y and have to determine whether it's the lossless or the compressed one. Actually, it uses A, B and X, Y.

Yeah, I know what an ABX test does. What I'm suggesting is that you do a test that is basically the same as a "normal" audiophile subjectivist test, only done blind.

What ABX tests have pretty much always proven to me, whenever I've taken them, is how ludicrous the sorts of assessments made in general audiophile comparisons/reviews (and the conclusions drawn from them) are...


JRS

Major Contributor

As much as I believe your efforts are misguided, I respect you for taking the time to do it right--well, almost, but good enough. You are being too tough on yourself--you don't need 9/10 to reach significance, 8/10 will just about do (one-sided p = 56/1024 ≈ 0.055, right at the usual cutoff). Typically p = 0.05 is considered sufficient evidence--what this means is that there is a 5 percent or smaller chance of getting a result at least this good if the two outcomes (choosing either A or B) are equally probable, as would be the case with a heads/tails coin flip. Now, if there is one principle in statistics that everyone should know, it is the law of large numbers. To illustrate, consider doing 10,000 trials. Headache, right? Well, if the two are equally probable, the number of, say, tails will be very close to 5000. With a standard deviation of only 50, even a deviation of 100, as in 5100-4900, is a two-sigma event: it happens only about 1 time in 20.
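The coin-flip arithmetic can be checked exactly. A minimal sketch for a fair 50/50 guess (one-sided test, counting the observed score and everything better); the function name is just for illustration:

```python
from math import comb

def p_at_least(k, n):
    """One-sided p-value: chance of k or more correct out of n by pure 50/50 guessing."""
    total = 0
    c = comb(n, k)                 # number of ways to get exactly k right
    for i in range(k, n + 1):
        total += c
        c = c * (n - i) // (i + 1) # step from C(n, i) to C(n, i + 1), exact in integers
    return total / 2**n            # integer count over 2**n keeps this exact for large n

for k in (8, 9, 10):
    print(f"{k}/10 correct: p = {p_at_least(k, 10):.4f}")
# 8/10 correct: p = 0.0547
# 9/10 correct: p = 0.0107
# 10/10 correct: p = 0.0010

# Chance that 10,000 fair flips land 100 or more away from 5000, in either direction.
print(f"{2 * p_at_least(5100, 10000):.3f}")
```

The same function gives `p_at_least(4, 4) == 0.0625` for the four-heads example.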

The more trials, the greater the power, that is, the less likely it is that a result will be a fluke. If we were to flip a coin 4 times and it came up heads each time, it would almost reach "significance" (less than 0.05): it is 1/16, or 0.0625.

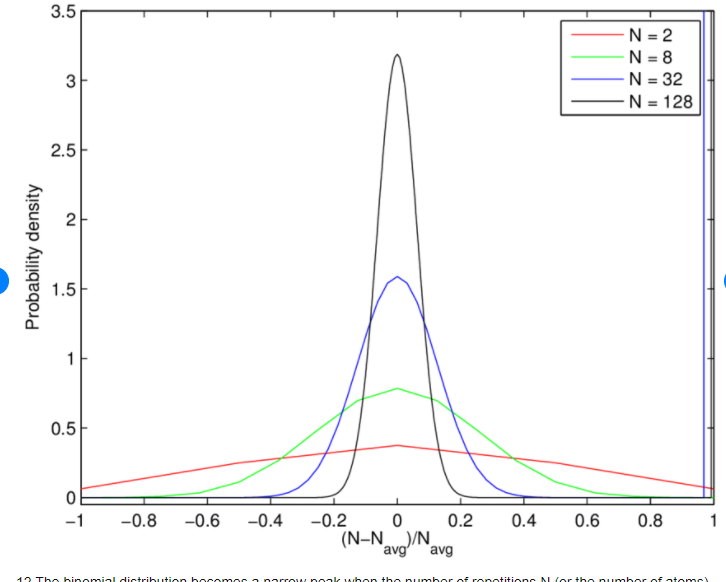

So for 40 trials, you only need 26 or more right to reach significance. Nowhere is it truer than in math that a picture is worth a thousand words. Look at the difference between the n=8 curve and the n=128 curve: it gets spikier as N increases. That's the law of large numbers--more and more of the outcomes (were we to repeat the trial, say, 100 times) are close to the expected mean of 1/2 × 128 = 64. They have normalized the curve--in this case, -0.2 and +0.2 would be at roughly 51 and 77. Let's look at your case below:
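Here is a sketch of where that cutoff comes from: walk down from a perfect score, adding up the exact binomial tail, and stop at the first score whose p-value exceeds 0.05. The one-sided α = 0.05 is the convention the post uses; the function name is hypothetical:

```python
from math import comb

def min_correct(n, alpha=0.05):
    """Smallest score k out of n whose one-sided guessing p-value is <= alpha.

    Returns None when even n/n would not reach alpha (e.g. 4 coin flips).
    """
    total = 2**n
    tail = 0                      # number of outcomes with a score of k or more
    for k in range(n, -1, -1):    # walk down from a perfect score
        tail += comb(n, k)
        if tail / total > alpha:  # k no longer qualifies, so k + 1 was the cutoff
            return k + 1 if k + 1 <= n else None
    return 0                      # only reached if alpha >= 1 (any score qualifies)

for n in (10, 16, 40, 128):
    print(n, "trials ->", min_correct(n), "correct needed")
```

By this exact calculation the 40-trial cutoff is 26 (p ≈ 0.040); 25 correct gives p ≈ 0.077, which does not clear the bar.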

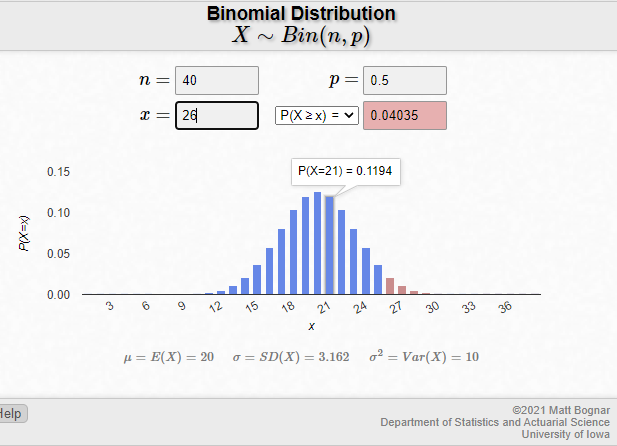

What you are seeing is a bar chart representing the probability of each particular outcome. The bar at 20 is the tallest--seems reasonable. But now go to 26 (the first brown bar) and read across to the y axis, and you see it's tiny--maybe 0.02. But we don't want to restrict ourselves to getting exactly 26 right; we want to include every outcome that is even better: 27, 28, 29, ... 40. If you're with me this far, the probability of exactly 29 is minute, and 30 can't even be seen; 31, 32, 33 ... 40 are also invisible. So, long story short: you get 30/40, you is smoking it. You get 35, I want whatever you're smoking.
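To read the chart numerically, a small sketch that prints the bar heights and the upper-tail sums for 40 trials of pure guessing (the same binomial model the chart shows; the names are illustrative):

```python
from math import comb

N = 40  # trials in the OP's test

def pmf(k, n=N):
    """Height of one bar: probability of exactly k correct by pure guessing."""
    return comb(n, k) / 2**n

def tail(k, n=N):
    """Probability of k or more correct: that bar plus every bar to its right."""
    return sum(pmf(i, n) for i in range(k, n + 1))

for k in (20, 26, 29, 30, 35):
    print(f"{k:2d}: exactly {pmf(k):.2e}, at least {tail(k):.2e}")
```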

Now, as payback for the free statistics guide: why is it that even with a low bar like 26, people can't do it? Oh, I mean once in a while he/she might, but not reliably.

Is it possible that there is no difference?

JSmith

Master Contributor

you get 30/40 you is smoking it

Nup... 5 sigma, 3×10⁻⁷, thanks, or it didn't happen. /s
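For scale, the particle-physics "5 sigma" threshold being joked about here is the one-sided tail of a normal distribution beyond five standard deviations. A quick check using only the standard library:

```python
import math

def normal_upper_tail(z):
    """P(Z >= z) for a standard normal variable, via the complementary error function."""
    return 0.5 * math.erfc(z / math.sqrt(2))

print(f"5 sigma: {normal_upper_tail(5):.2e}")  # 5 sigma: 2.87e-07
print(f"2 sigma: {normal_upper_tail(2):.3f}")
```

By comparison, 30/40 by guessing has p ≈ 0.0011, roughly three sigma, so "smoking it" still falls well short of the physics bar.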

Is it possible that there is no difference?

Perceptually, quite likely:

Objectivists vs. Subjectivists - Who's right?

I am actually a good example for this, haha. To be perfectly honest, after performing countless ABX tests over a span of ~13 hours, I do believe that my original, untested observation, regarding the difference between lossless and compressed formats, may have been slightly exaggerated, yet not...

audiosciencereview.com

JSmith

JRS

Major Contributor

Nup... 5 sigma, 3×10⁻⁷, thanks, or it didn't happen. /s

That always struck me as a damn high bar, but if you are claiming to have glimpsed God (the Higgs boson), you'd best have some extraordinary evidence. In the end, I was less than bowled over to discover that, after analyzing so many exabytes of data, enough events within a certain energy band had been identified to claim discovery. I wanted to see a selfie of the team with God, not a lot of pomp and ceremony celebrating electron trails in a computer. I was very moved by the fact that Higgs was there--in fact, I cried, so even nerds have feelings. At least with LIGO there was a squiggle to admire...

danadam

Major Contributor

- Joined: Jan 20, 2017

- Messages: 1,356

- Likes: 2,150

So for 40 trials, you only need 26 or more right to reach significance.

But what will be the effect size?

It seems like nobody is ever talking about the effect size.
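As a sketch of what reporting an effect size could look like for a two-choice test, the snippet below gives the observed proportion correct, Cohen's h against the 0.5 guessing rate, and a Wilson 95% interval. The "heard fraction" d = 2p - 1 assumes a hypothetical hear-it-or-guess model (every trial that isn't genuinely heard is a coin flip); it is an illustration, not something from the thread:

```python
import math

def effect_sizes(k, n):
    """Simple effect-size summaries for k correct out of n two-choice trials."""
    p_hat = k / n
    # Cohen's h: difference between two proportions on the arcsine scale (here vs. 0.5).
    h = 2 * math.asin(math.sqrt(p_hat)) - 2 * math.asin(math.sqrt(0.5))
    # Hypothetical hear-or-guess model: p = d + (1 - d) / 2, so d = 2p - 1.
    d = 2 * p_hat - 1
    return p_hat, h, d

def wilson_interval(k, n, z=1.96):
    """Approximate 95% Wilson score interval for the true proportion correct."""
    p_hat = k / n
    denom = 1 + z**2 / n
    centre = (p_hat + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

p_hat, h, d = effect_sizes(26, 40)
lo, hi = wilson_interval(26, 40)
print(f"26/40: p_hat={p_hat:.3f}, h={h:.2f}, d={d:.2f}, 95% CI=({lo:.2f}, {hi:.2f})")
```

For 26/40 the interval runs from roughly 0.50 to 0.78, a reminder that 40 trials can clear the one-sided significance bar while still saying very little about how big the effect is.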

JRS

Major Contributor

But what will be the effect size?

It seems like nobody is ever talking about the effect size.

Effects come in all sizes and, unfortunately, shapes. Certainly it would be easy to consider each trial of 40 a separate test, and then to look at the distribution of those results while comparing to the null result. I think it would be far more interesting to weight the different trials--the OP has said as much: sometimes he felt like he was guessing, while on others he had tuned in to some nuance and felt more confident. Perhaps he could assign weights such as 1-4, representing no clue, a hint of one, a solid gut feeling, and, at 4, "yeah, I'm certain I'm hearing a difference". Then what would be interesting is to correlate the result on a given trial with the confidence level. If the correlation were strong, then I believe we would be able to say something about a phenomenon that could include the genuine ability to detect the differences. I haven't looked over the details, but we'd need to be certain that there are no other differences besides sampling rate. If this test hadn't been done to death already with nothing but null results, I'd be all over this. Again, more power could be injected by taking a sample of 25 able-eared enthusiasts to do the same test. If we found 2 or 3 who consistently outperformed their peers, then we'd have something to hang a hat on. And we know that they are out there--maybe it was the Harman data, but there was one listener who clearly had golden ears. But that was a much rougher comparison--damn, I wish I could remember where I saw that. In any case, it wasn't at the level of sampling rates.

Audioisnobiggie

Member

- Joined: Apr 2, 2023

- Messages: 6

- Likes: 0

MP3's are exactly like porn, and you can also have way more of them that way, since it's just that. But don’t worry, you don't need to have a problem with that in music. FLAC still sounds noisier from being decompressed first. Only WAV files from solid state can make you the slackers you're supposed to be being while you listen, suckers. Only WAV files could possibly be exact playback of the original copy. But don't forget, although digital skips the recording medium, it records only timeless samples to be played back over time. Garbage, nobody will care about people anymore with digital. They'll always have not wanting people to find out problems, anyways.

JSmith

Master Contributor

MP3's are exactly like porn, and you can also have way more of them that way, since it's just that.

Sorry if I misunderstand, but are you saying you collect porn?

JSmith
