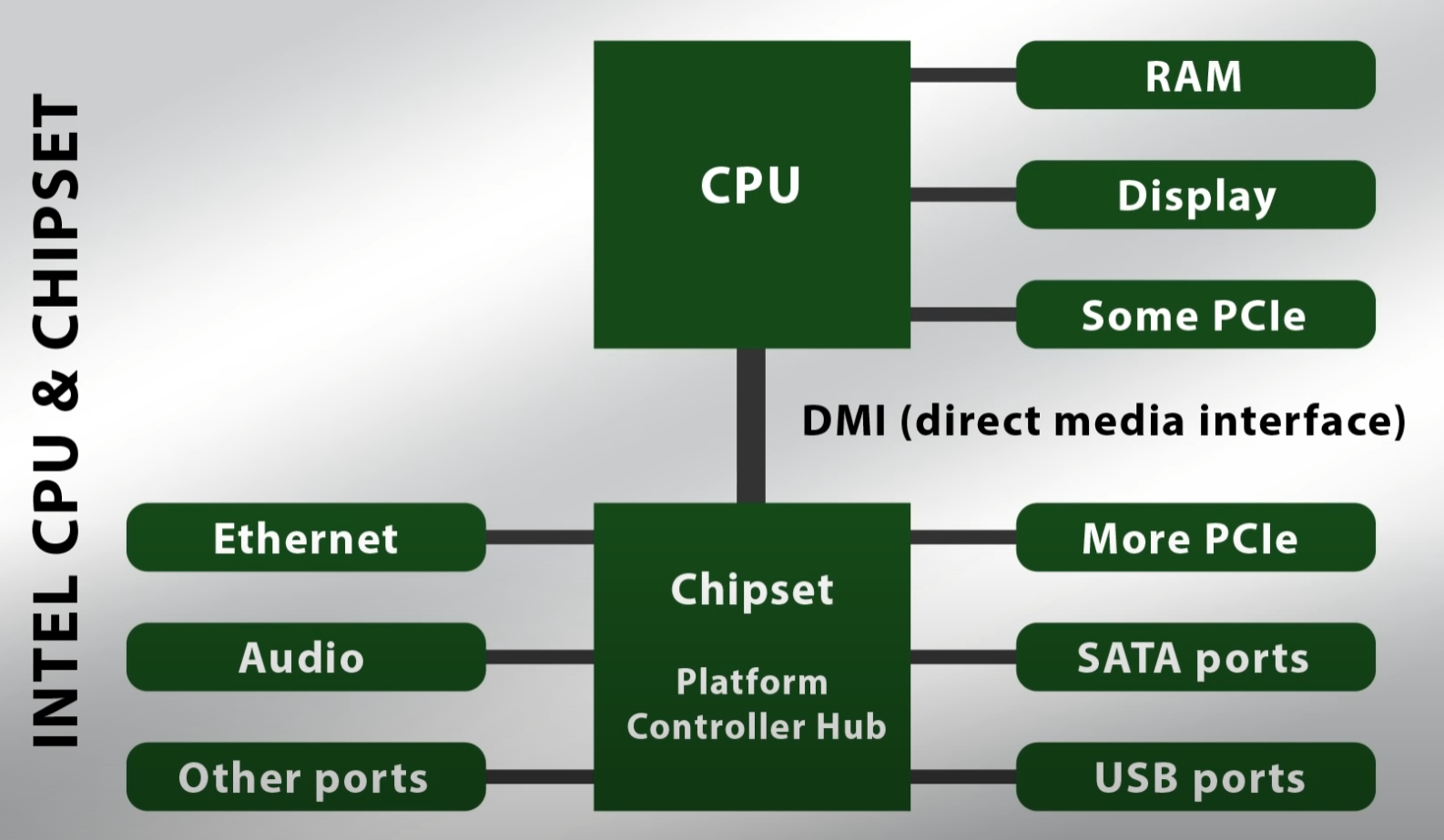

So what is DMI and why is it important? DMI is the bandwidth tunnel between your motherboard's chipset (PCH/southbridge) and your CPU. DMI is important because its bandwidth is divided between your USB ports, non-CPU-linked PCIe slots, SATA ports and others. Knowing this will help you understand why your USB DAC stutters when you are, for example, running 2 PCIe 3.0 NVMe M.2 drives in RAID 0 through the DMI 3.0 bus.

First, we gotta talk about PCIe lanes. PCIe lanes are the individual data links a chipset and a CPU can handle at once. For example, a 6th generation Intel CPU (something like an i3-6100) has 16 PCIe lanes. Those CPU PCIe lanes are used for the top CPU-linked PCIe 3.0 x16 slot. A modern Intel i3-12100 has 20 PCIe lanes: 16 for the top CPU-linked PCIe 5.0/4.0 x16 slot and the remaining 4 for the CPU-linked PCIe 4.0 NVMe slot. CPU PCIe lanes can be split with other CPU-linked slots if the board has them. The chipset has its own PCIe lanes, but some are already shared with USB ports and such. Although some motherboards may appear to have multiple x16 slots, in reality the extra ones are x4 most of the time.

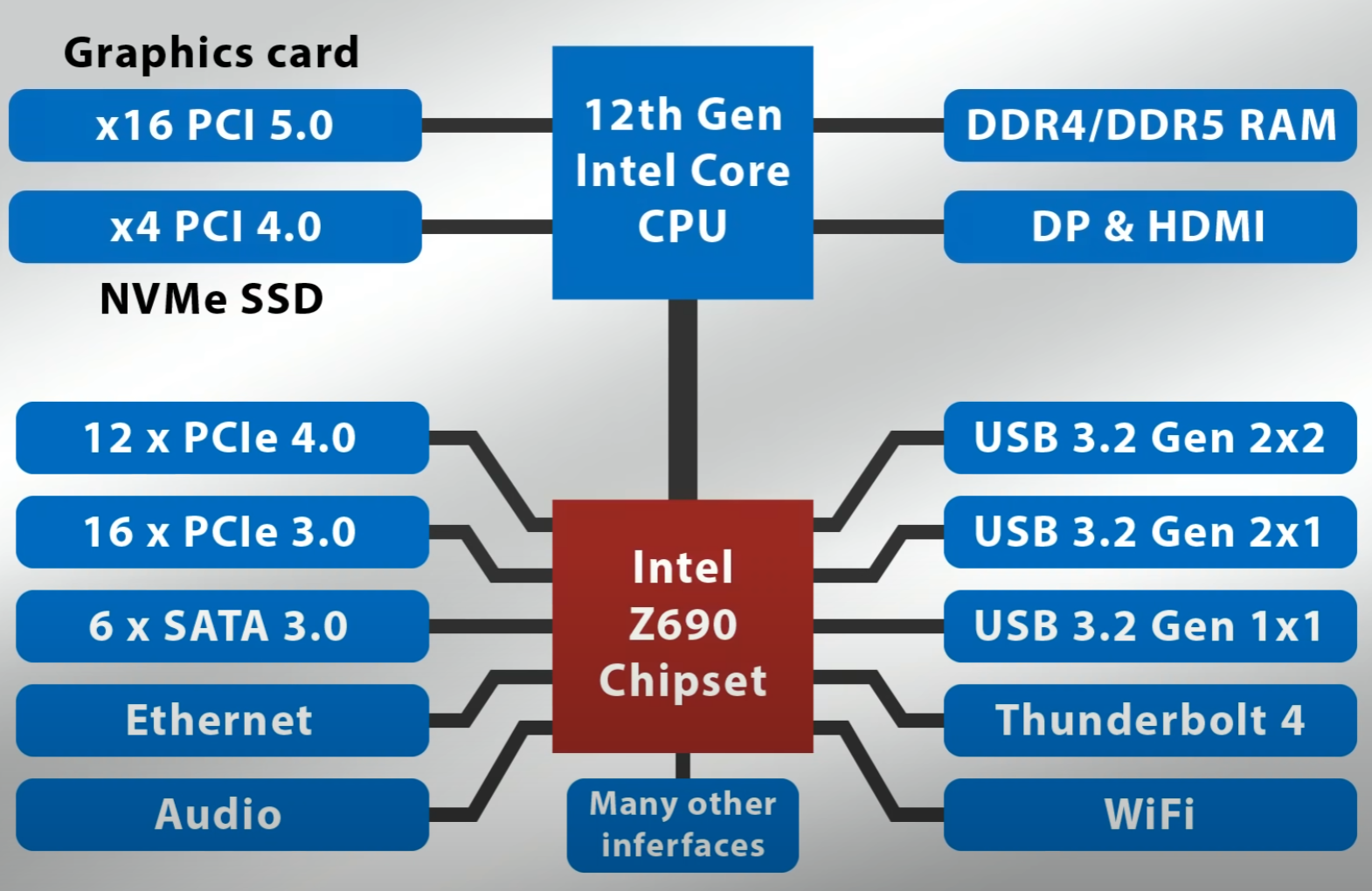

A chart of a modern Intel system (From "ExplainingComputers"):

Bandwidth in this thread means the total maximum speed if everything was running at once.

If you want a CPU-linked PCIe slot (one that bypasses DMI), your top PCIe slot is one, but people usually use it for their GPU. Even though your CPU-linked and non-CPU-linked PCIe slots are not the same, they still share PCIe lanes (the number of lanes depends on your CPU/chipset and how they're divided/used).

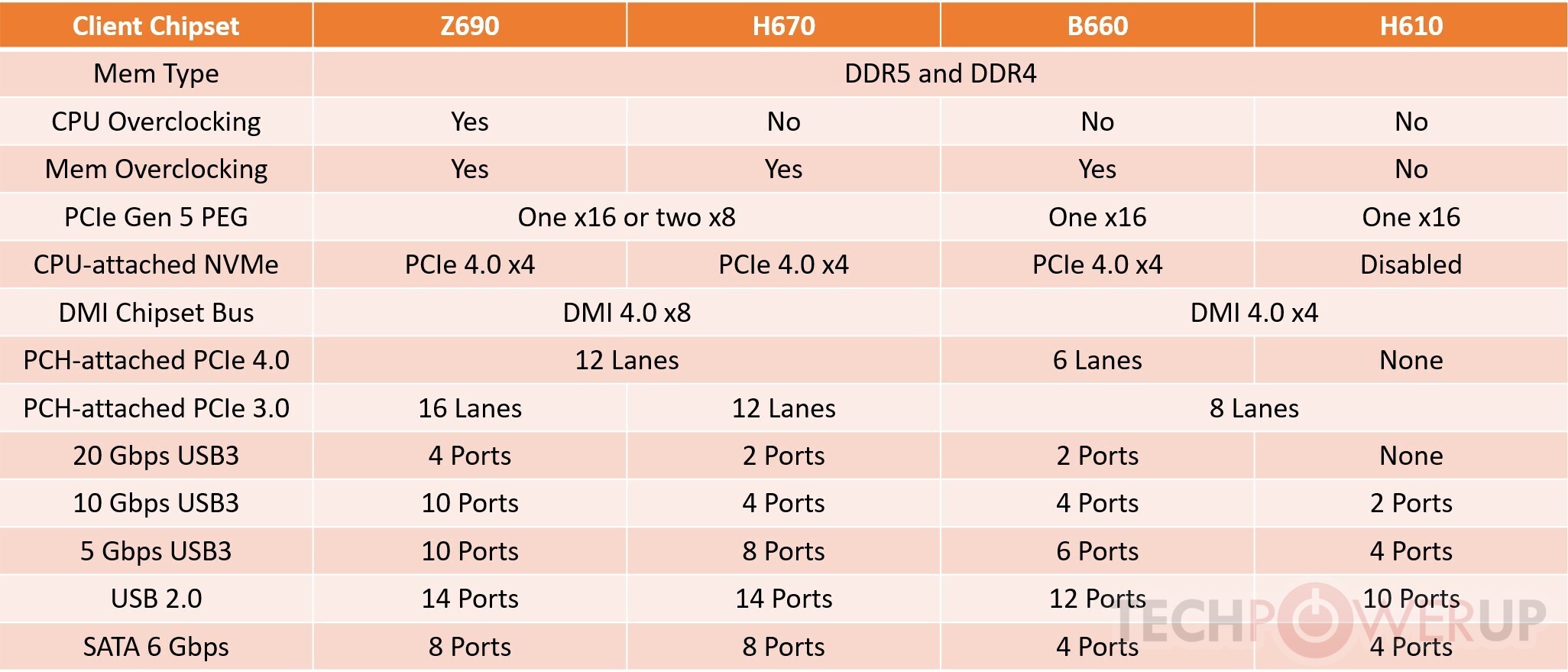

For the longest time (2015 - 2021), Intel used DMI 3.0 on motherboards (4,000 megabytes/sec, i.e. PCIe 3.0 x4 speeds), which is very slow. If you bought a very cheap motherboard in that era, you probably still got DMI 2.0 (2,000 megabytes/sec, i.e. PCIe 2.0 x4 speeds). With DMI 4.0 (Intel 600 series), and depending on your motherboard, speeds are between 8,000 megabytes/sec and 16,000 megabytes/sec.
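To see where those numbers come from, here is a rough sketch in Python. It assumes the rounded per-lane figures this thread uses (real links land a little lower), and it treats DMI as equivalent to a PCIe link of the matching generation. The names `MB_PER_LANE` and `link_bandwidth` are made up for illustration.

```python
# Rounded one-direction throughput per lane in MB/s, keyed by PCIe generation.
# Assumption: these are the round numbers used in this thread; real-world
# figures are slightly lower (PCIe 3.0 is closer to 985 MB/s per lane).
MB_PER_LANE = {2: 500, 3: 1000, 4: 2000, 5: 4000}


def link_bandwidth(gen: int, lanes: int) -> int:
    """Approximate bandwidth in MB/s of a link with the given generation and width."""
    return MB_PER_LANE[gen] * lanes


# Treat each DMI version as a PCIe link of the matching generation.
dmi_links = {
    "DMI 2.0 x4": link_bandwidth(2, 4),
    "DMI 3.0 x4": link_bandwidth(3, 4),
    "DMI 4.0 x4": link_bandwidth(4, 4),
    "DMI 4.0 x8": link_bandwidth(4, 8),
}

for name, mbps in dmi_links.items():
    print(f"{name}: {mbps:,} MB/s")
```

The x4 and x8 rows for DMI 4.0 are the two ends of the 8,000 to 16,000 megabytes/sec range mentioned above.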

Chart of Intel 600-series chipset differences (Picture from "TechPowerUp"):

Chart of an Intel 600-series system using DMI 4.0 (Picture from "ExplainingComputers"):

DMI 3.0 motherboards can generally have 1 PCIe 3.0 NVMe SSD (non-CPU linked), which means it can technically take up your whole DMI bandwidth and you'll rarely reach the max speed of your NVMe SSD. Also, in the DMI 3.0 era, if you wanted a CPU-linked PCIe 3.0 NVMe SSD alongside your GPU, your GPU's bandwidth would be cut in half from x16 to x8 (due to the fixed lane configurations Intel CPUs support). Luckily, most PCIe 3.0 GPUs don't suffer a performance loss because they don't use the full x16. When you add a SATA card to your system (connected to one of the non-CPU-linked slots), it's essentially like adding more SATA ports directly to the motherboard, and the major downside is that the bandwidth is divided even more.
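Here is a small Python sketch of what "the bandwidth is divided" means in practice. It assumes a simple proportional split once total demand goes over the DMI limit, which is only a model to make the point; real hardware arbitrates traffic in more complicated ways. The function name `share_dmi` and the device demands are hypothetical.

```python
def share_dmi(demands, dmi_limit):
    """Scale every device down proportionally when total demand exceeds the DMI limit."""
    total = sum(demands.values())
    if total <= dmi_limit:
        # Everything fits, so nobody is slowed down.
        return dict(demands)
    return {name: mbps * dmi_limit / total for name, mbps in demands.items()}


DMI_3_LIMIT = 4000  # MB/s, the rounded DMI 3.0 figure from this thread

# Hypothetical peak demands in MB/s, chosen only for illustration.
demands = {"nvme_ssd": 3500, "sata_ssd": 500, "usb_devices": 1000}

for name, mbps in share_dmi(demands, DMI_3_LIMIT).items():
    print(f"{name}: {mbps:.0f} MB/s")
```

With these made-up numbers the devices ask for 5,000 MB/s in total, so everything behind the chipset ends up slower than its rated speed, including the USB devices.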

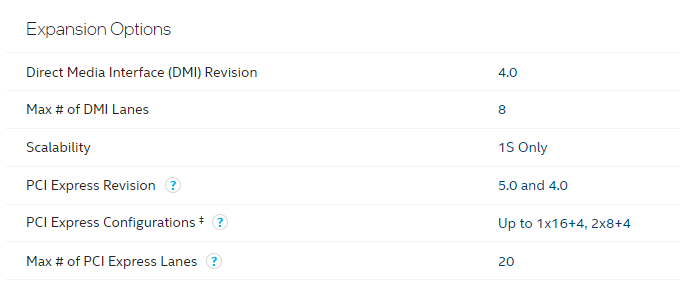

A picture of the "Expansion Options" of an i7-12700:

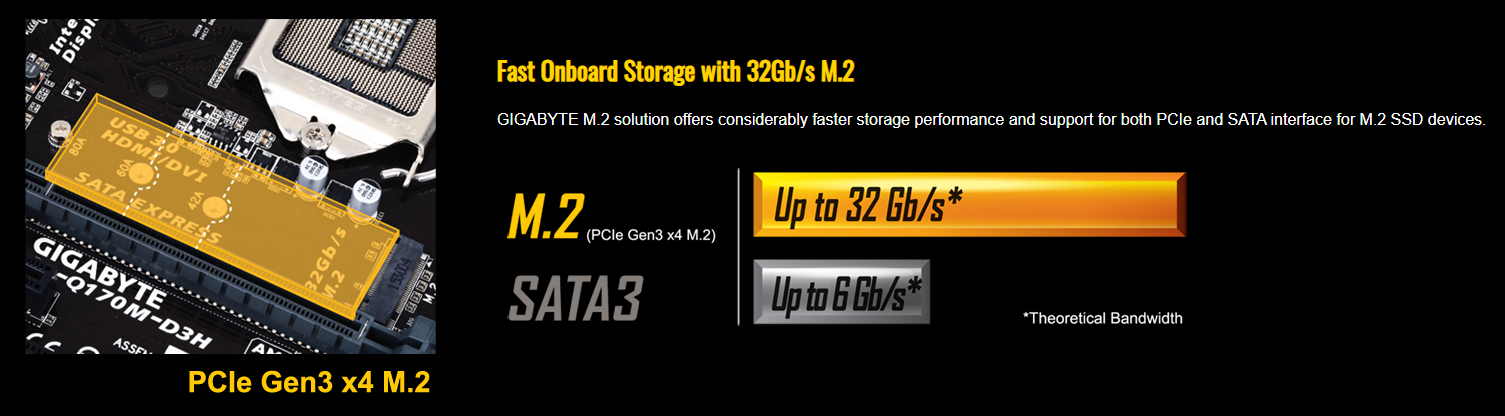

Picture of a Gigabyte MB that has a Non-CPU linked M.2 Slot:

*32 Gigabits/sec = 4,000 megabytes/sec, which is the DMI 3.0 Max Bandwidth limit.

If you have an Nvidia SLI board from the DMI 3.0 era, then you can have 2 or more slots talk directly to the CPU rather than just 1. If you use both slots, each slot runs at x8; if you're only using 1, it runs at x16. There are modern non-SLI motherboards that have 2 CPU-linked slots, but companies rarely advertise this feature and you have to be knowledgeable about motherboards to spot it.

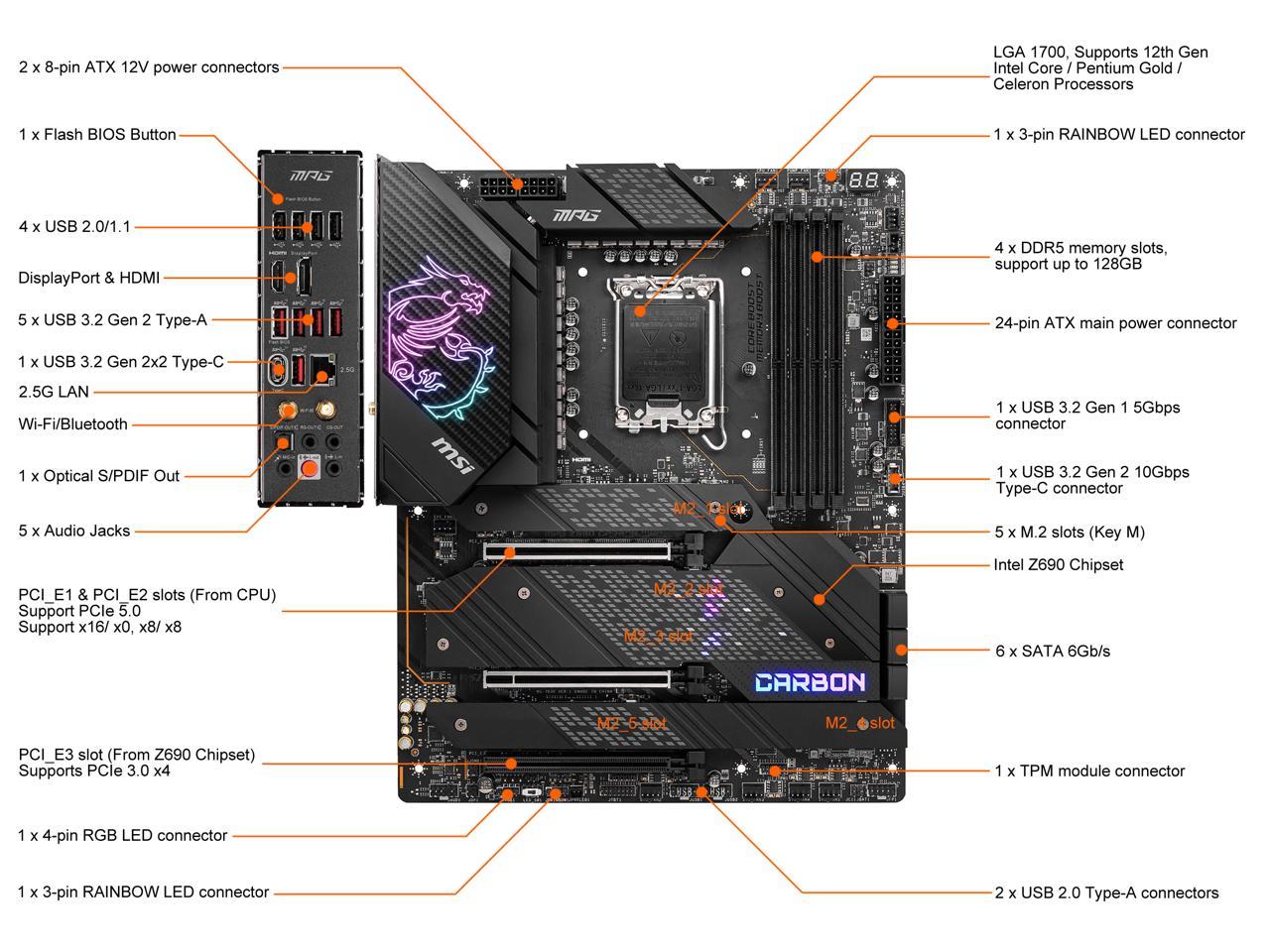

Picture of an MSI MPG Z690 that has two CPU-linked slots (Newegg):

These days, PCIe 4.0 motherboards (both the ones that still use DMI 3.0 and the ones that use DMI 4.0) can have 1 PCIe 4.0 NVMe SSD directly linked to the CPU, just like the top PCIe slot. Beware that there are "PCIe hybrid" motherboards, meaning your top PCIe slot and top NVMe slot are CPU-linked and PCIe 4.0 while your other, non-CPU-linked slots are PCIe 3.0. There are also PCIe 5.0 hybrid motherboards (the top slot is PCIe 5.0 while the other slots are 4.0 or even 3.0). Dual PCIe 5.0 slot MBs can split bandwidth (x8/x8), which means you could run (if your MB supports bifurcation) 2 PCIe 5.0 M.2 NVMe SSDs in RAID 0, which is 32,000 megabytes/sec total.
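The 32,000 megabytes/sec figure can be checked with a quick Python sketch. It is an idealized model: it assumes a rounded 4,000 MB/s per PCIe 5.0 lane, assumes RAID 0 holds every member to the speed of the slowest one, and uses hypothetical drives that can saturate their x4 links. `raid0_throughput` is an illustrative name, not a real tool.

```python
PCIE5_MB_PER_LANE = 4000  # rounded MB/s per PCIe 5.0 lane (assumption)


def raid0_throughput(drive_speeds, link_limits):
    """Idealized RAID 0 speed in MB/s: every member is held to the slowest one.

    drive_speeds: rated speed of each drive in MB/s
    link_limits: bandwidth of the link each drive sits on in MB/s
    """
    effective = [min(drive, link) for drive, link in zip(drive_speeds, link_limits)]
    return min(effective) * len(effective)


x4_link = 4 * PCIE5_MB_PER_LANE  # 16,000 MB/s available to each M.2 drive

# Two hypothetical drives fast enough to saturate their x4 links.
best_case = raid0_throughput([16000, 16000], [x4_link, x4_link])
print(f"Best case: {best_case:,} MB/s")
```

Real drives and real RAID overhead will come in under this ceiling.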

Currently there is no major advantage in jumping from a SATA SSD to an NVMe SSD. In terms of value, though, buying a SATA 2.5-inch SSD over an NVMe SSD is a bad idea since the cost is the same, so you might as well get the NVMe SSD. In the future, with Microsoft's DirectStorage API, games could supposedly load at least 10x faster on current hardware, somehow even on SATA 2.5-inch SSDs.

Theoretically, a USB PCIe card that's connected to an Intel CPU-linked slot could perform better than the on-board USB ports. The limiting factors I can imagine are PCIe lanes and your USB PCIe card's controller. This would be perfect for DACs, since they wouldn't have to worry about DPC-latency-hungry Ethernet controllers and other USB devices. I don't have much knowledge of AMD systems, but I have heard that some USB ports do actually connect to the CPU directly.

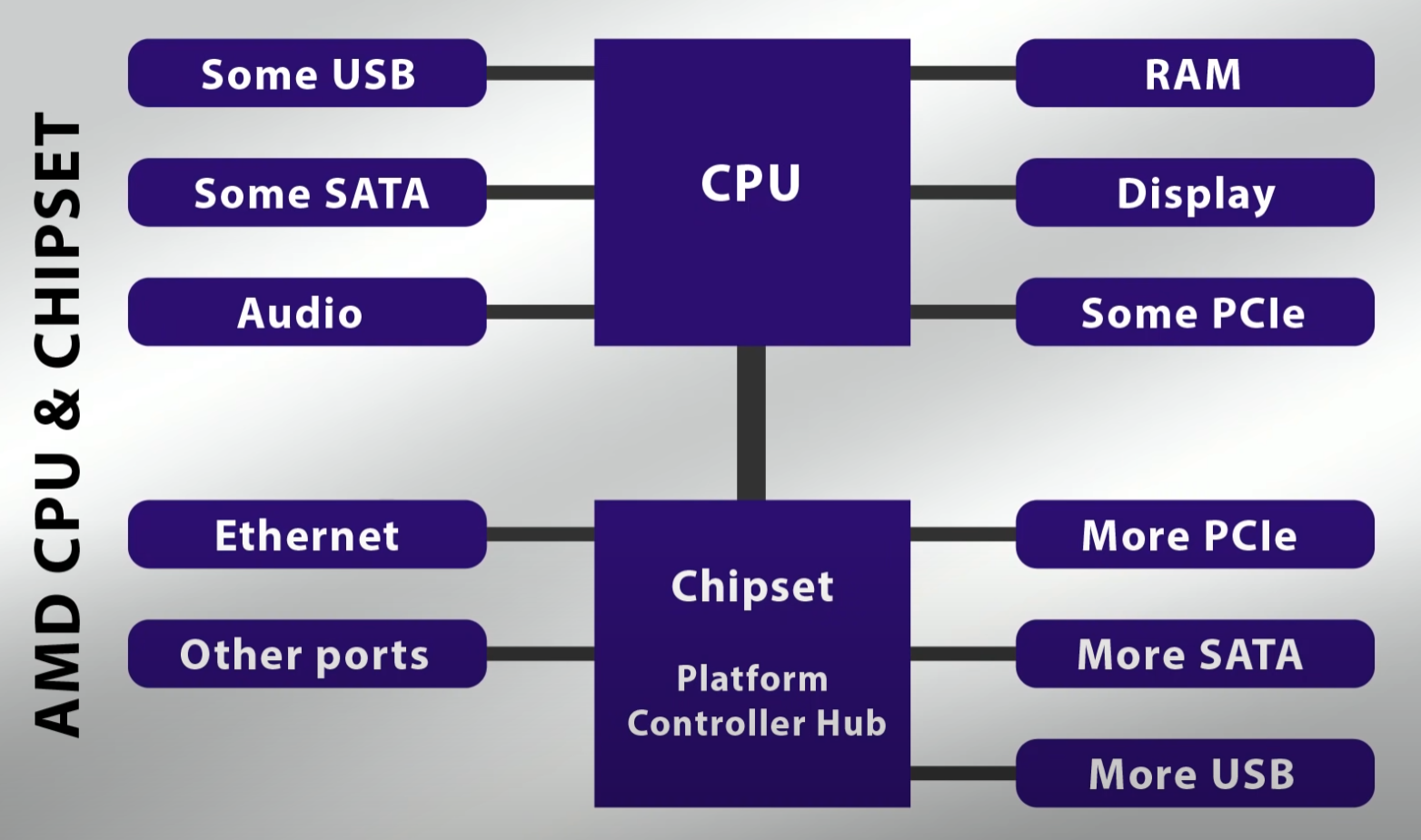

A chart of a modern AMD system (by "ExplainingComputers"):

Edit (SATA M.2s): I didn't mention SATA M.2s because they're technically the worst value for consumers who want an SSD. SATA M.2s take up the same space as an NVMe M.2 but have SATA3 speeds while disabling 1-2 SATA ports. SATA M.2s cost the same as a SATA 2.5-inch SSD and an NVMe SSD. It's not always dumb to buy a SATA 2.5-inch SSD, though: SATA 2.5-inch SSDs can be used in old consoles and computers, while SATA M.2s take up a valuable slot on your motherboard which could've been used for a same-price NVMe SSD with 3x+ the speed. There is literally no good use for SATA M.2s.

Edit (Chipset Drivers): Every motherboard comes with chipset drivers, but nobody notices. Windows 10 does a great job of providing basic drivers for your chipset. It's similar to the "memory support list" for your motherboard: you should get the "correct" tested memory, but you don't have to. If you have actual chipset drivers installed (via Windows Update), they're mostly outdated, like your video card drivers. What's the point of getting the proper drivers? Stability and potentially more performance.

Edit (CPU Graphics/Integrated Graphics): These days it's a smart idea to install your integrated graphics drivers alongside your Nvidia/AMD video card drivers. There are a few benefits to having both installed (if you've got integrated graphics and video outputs on your motherboard).

The benefits:

- Resource dividing. A good example would be using your integrated graphics to record gameplay that's being rendered by your video card. Windows or you can choose which GPU to use. With proper balancing, you can gain FPS in your video games.

- Easy video passthrough. With modern Windows you can pass your video card's graphics through your motherboard's video outputs, or vice versa. This feature is great for people who have broken or even no video ports on their video card.

Edit (RAID NVMe PCIe 3.0 M.2s): With some Nvidia SLI PCIe 3.0 motherboards (ones that have 2 or more CPU-linked slots), you can't RAID NVMe drives because of their age (well, really because of bifurcation). Despite the 2nd slot on these boards offering x8 support, it can only detect 1 drive, even though technically it could run 2 PCIe 3.0 drives at full speed. The reason we can't do this on these specific boards is the lack of support for bifurcation. Bifurcation allows the computer to split up a slot (usually x16 into x4/x4/x4/x4, or x8 into x4/x4). If you've got a PCIe 4.0 motherboard, you probably have bifurcation support, but in PCIe 3.0 land it's rare. There is one product that can use 2 PCIe 3.0 NVMe drives at full speed without bifurcation, but it's pricey: the WD Black AN1500. The WD Black AN1500 almost reaches the speed of 1 PCIe 4.0 NVMe drive by using RAID 0 with 2 PCIe 3.0 NVMe drives on an actual x8 slot on your motherboard. The value of this product (1TB for $220) is very sad considering the Samsung 980 Pro is faster and a lot cheaper (1TB for $120), although the Samsung is PCIe 4.0. At the end of the day, well, currently, the benefit of having a 1,000+ MB/sec drive over a SATA SSD is not that noticeable. Current games do not utilize those high speeds, but future games that use Microsoft's DirectStorage API (similar to the PS5's) will benefit from these high-speed SSDs.
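As a rough illustration of why bifurcation matters, here is a Python sketch. It models a passive adapter card carrying x4 NVMe drives and assumes that without bifurcation the slot is a single link, so only the first drive shows up. `parse_split` and `usable_drives` are hypothetical helper names, not part of any real BIOS or tool.

```python
from typing import List, Optional


def parse_split(split: str) -> List[int]:
    """Turn a string like 'x4/x4/x4/x4' into lane counts [4, 4, 4, 4]."""
    return [int(part.lstrip("x")) for part in split.split("/")]


def usable_drives(slot_lanes: int, drives_on_card: int, split: Optional[str]) -> int:
    """How many x4 NVMe drives on a passive adapter card the board can see.

    split=None models a board with no bifurcation support: the slot is one
    link, so only the first drive is detected.
    """
    if split is None:
        return min(drives_on_card, 1)
    lanes = parse_split(split)
    if sum(lanes) > slot_lanes:
        raise ValueError(f"{split} needs more than {slot_lanes} lanes")
    # Each x4 (or wider) link can host one drive at full x4 speed.
    x4_links = sum(1 for width in lanes if width >= 4)
    return min(drives_on_card, x4_links)


print(usable_drives(8, 2, None))            # older board, x8 slot, no bifurcation
print(usable_drives(8, 2, "x4/x4"))         # x8 slot split into two x4 links
print(usable_drives(16, 4, "x4/x4/x4/x4"))  # x16 slot split four ways
```

The first call is the SLI-board situation described above: the lanes are there, but the second drive is never detected.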

X570 and Z690, which is better?

In this section, we'll go over the bandwidth limitations, manufacturer limitations, etc., of each chipset. Heads-up: with X570 we'll be using the "latest" CPU, and we'll be using motherboards that include 2 CPU-linked PCIe slots.

Z690:

For any Z690 MB, you'll get 1 CPU-linked (PCIe 4.0) M.2 slot, at least 2 DMI-linked (PCIe 4.0) M.2 slots and 1 PCIe 5.0 x16 slot. If a Z690 MB features 3 or more DMI-linked PCIe 4.0 M.2 slots, the bandwidth is split between the PCIe 4.0 M.2s. If there are 4 DMI-linked PCIe 4.0 M.2 slots and you're using 4 PCIe 3.0 M.2s, your bandwidth is not divided. Also, on Z690 MBs the bottom x4 PCIe slot is usually tied to a DMI-linked PCIe 4.0 M.2 slot, so if you populate the M.2 slot, either the x4 slot is disabled or both the x4 slot and the M.2 run at half speed.
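The "divided or not divided" claim is just arithmetic against the DMI 4.0 x8 limit. A minimal Python sketch, assuming the rounded per-lane numbers from this thread and that every M.2 drive is x4 and running flat out at the same time (`m2_demand` is a made-up helper name):

```python
MB_PER_LANE = {3: 1000, 4: 2000}  # rounded MB/s per lane (assumption)
DMI_4_X8 = 8 * MB_PER_LANE[4]     # 16,000 MB/s, the Z690 chipset link


def m2_demand(gen: int, count: int, lanes: int = 4) -> int:
    """Combined peak demand in MB/s of `count` M.2 drives of one PCIe generation."""
    return MB_PER_LANE[gen] * lanes * count


for gen in (4, 3):
    demand = m2_demand(gen, count=4)
    verdict = "divided" if demand > DMI_4_X8 else "not divided"
    print(f"4x PCIe {gen}.0 M.2: {demand:,} MB/s vs DMI {DMI_4_X8:,} MB/s -> {verdict}")
```

Four PCIe 4.0 drives ask for twice what the link can carry, while four PCIe 3.0 drives fit exactly, which matches the two cases above.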

Z690 uses DMI 4.0 (equivalent to PCIe 4.0 x8, or 16,000 megabytes/sec), while Z590 uses DMI 3.0 (equivalent to PCIe 3.0 x4, or 4,000 megabytes/sec, with a 10th-gen CPU; an 11th-gen CPU widens the link to x8, or about 8,000 megabytes/sec). In terms of value, Z590 is an awful deal if you care about chipset bandwidth, M.2s and/or PCIe devices.

If you spend more on a Z690 MB, you can get 2 PCIe 5.0 x16 slots, which can run in x16/x0 or x8/x8. Sadly, you cannot divide the x8/x8 into x8/x4+x4, and that's due to Intel, not the motherboard makers. However, you are technically future-proofed for 1 PCIe 5.0 M.2 SSD with an adapter (or 2 if you're not using a GPU), and since it is also CPU-linked, in theory, with the right future APIs, you could load games/programs faster than a PS5. It would've been cool to run 2 PCIe 5.0 M.2s (with a GPU installed) or 4 PCIe 5.0 M.2s, but you can thank Intel for this weird limitation that technically shouldn't exist.

X570:
