Signal processing methods can be complex to understand, which leads to misconceptions. None is more a victim of that than upsampling, or interpolation. I am sure you have heard people say they play their HD content on a 4K display and it looks "almost 4K." Same with audio: there is this notion that upsampling content to a higher sample rate will result in more resolution. Alas, both of these are completely false.

Nothing in the process of interpolation creates more information/detail. Nothing. The algorithms (methods) used have no intelligence whatsoever with which to guess what is supposed to be there. Instead they rely on mathematical methods that generate more pixels (image/video) or PCM samples (audio) from the ones already present.

The definition of an ideal interpolator or resampler is one that creates these new samples but generates no new distortion. That's right: the best an interpolator can do is leave fidelity unchanged. Anything short of ideal can only reduce fidelity, not increase it!

If I asked you what number lies between 1 and 3, what would you say? 2? Nope. Who says that number is exactly halfway? Yet that is what a simple mathematical method would produce. By using more samples we may be able to better that guess, but ultimately the process is quite "dumb." It makes no attempt to understand the sequence of numbers and work out the missing values as, say, a human would.
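To make the "what is between 1 and 3" point concrete, here is a minimal sketch. The three signals are hypothetical examples of my own choosing; all of them pass through 1 and 3 at the two sample points, yet each has a different true value in between. Linear interpolation answers 2 for every one of them.

```python
def linear_midpoint(a, b):
    """Linear interpolation halfway between two neighbouring samples."""
    return a + 0.5 * (b - a)

# Hypothetical signals: each equals 1 at t=0 and 3 at t=1.
signals = {
    "straight line": lambda t: 1 + 2 * t,
    "exponential": lambda t: 3 ** t,
    "parabola": lambda t: 1 + 2 * t * t,
}

# The interpolator only ever sees the two samples, never the signal itself.
guess = linear_midpoint(1, 3)

for name, f in signals.items():
    assert f(0) == 1 and f(1) == 3  # same samples in every case
    print(f"{name:14s} true midpoint = {f(0.5):.3f}, interpolated = {guess:.3f}")
```

Only the straight line is guessed correctly. The interpolator has no way of knowing which of these signals produced the samples.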

Let's visualize this with an image. Here is a picture I took of the sake barrels ("Kazaridaru") outside the Meiji Shrine in Tokyo, Japan:

Its dimensions are 1024 pixels wide by 648 pixels high. Now let's cut the resolution of that image in half in each dimension and then "upsample" it back to 1024x648. If the process could make up the information that was lost when we reduced its size, the new image should look just like the one above:

I hope you see that we did not get there. The dimensions of the image are the same as before but clearly the image is softer. In image/video, a softer image means it has less high-frequency information. That is exactly what happens when we chop 88.2 kHz high-res audio down to 44.1 kHz. Half the bandwidth is lost in that conversion. Upsampling back to 88.2 kHz will not get us that lost detail back. It is the same process as what happened to the above image.
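The audio round trip can be sketched in a few lines of plain Python. This is an illustration under my own assumptions, not any particular resampler: a 64-sample test signal treated as if sampled at 88.2 kHz, two test tones at roughly 6.9 kHz and 33.1 kHz, and an ideal brick-wall filter built from a naive DFT. All function names are made up for this example.

```python
import cmath
import math

def dft(x):
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(X):
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
            for t in range(n)]

def lowpass_half(x):
    """Brick-wall low-pass: zero every bin at or above a quarter of the sample rate."""
    n = len(x)
    X = dft(x)
    for k in range(n // 4, n - n // 4 + 1):
        X[k] = 0
    return idft(X)

def downsample2(x):
    """Band-limit, then keep every second sample (88.2 kHz -> 44.1 kHz)."""
    return lowpass_half(x)[::2]

def upsample2(y):
    """Insert zeros between samples, then low-pass (44.1 kHz -> 88.2 kHz)."""
    stuffed = [0.0] * (2 * len(y))
    stuffed[::2] = y
    return [2 * v for v in lowpass_half(stuffed)]  # gain of 2 restores the level

def tone_amplitude(x, k):
    """Amplitude of the sinusoid sitting in DFT bin k."""
    n = len(x)
    return 2 * abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))) / n

N = 64
LOW_BIN, HIGH_BIN = 5, 24  # about 6.9 kHz and 33.1 kHz at 88.2 kHz
x = [math.sin(2 * math.pi * LOW_BIN * t / N) + 0.5 * math.sin(2 * math.pi * HIGH_BIN * t / N)
     for t in range(N)]

roundtrip = upsample2(downsample2(x))

for label, sig in (("original", x), ("round trip", roundtrip)):
    print(f"{label:10s} low tone = {tone_amplitude(sig, LOW_BIN):.3f}, "
          f"high tone = {tone_amplitude(sig, HIGH_BIN):.3f}")
```

The round-trip signal has the same number of samples as the original, and the low tone comes back intact, but the tone above 22.05 kHz is gone for good. Upsampling filled in samples; it did not bring the lost bandwidth back.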

This is not the full story though. Let's look at the same transformation to half size and then back up, this time with a different interpolation/upsampling algorithm:

Notice the image is now sharper than the previous enlargement. In some way it seems that we got some of our lost resolution back. But we did not. What happened was that we took the lower resolution image and amplified its high-frequency content, giving the illusion of it having more resolution. In audio terms, it is like turning up the treble. You will get a brighter sound, but it recovers no more detail than what is already in the music.

Note that there is a trade-off in doing this. If you look around the Chinese characters, there is a white halo. That is distortion we created by boosting the high frequencies as we enlarged the image. Now you see how true my earlier definition of interpolation/resampling was: we can do harm in the process of conversion.

That harm, though, may seem like a benefit. If you step back far enough from your monitor, you may not notice the artifacts but still see the benefit of a sharper image. Same with audio. A resampling/upsampling algorithm can make the sound different, boosting or reducing its highs, and subjectively make it sound better to us. But again, in no case does it actually recover information that was lost. We are simply manipulating the information we have.
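The halo can be reproduced on a single row of pixels. I do not know which algorithm produced the sharper enlargement above, so this sketch substitutes a simple unsharp mask (add back a multiple of the difference between the signal and a blurred copy) as a stand-in for any method that boosts high frequencies. The pixel values, the 3-tap blur, and the `amount` parameter are all assumptions for illustration.

```python
def blur3(x):
    """3-tap low-pass [0.25, 0.5, 0.25], repeating the edge pixels at the borders."""
    n = len(x)
    return [0.25 * x[max(i - 1, 0)] + 0.5 * x[i] + 0.25 * x[min(i + 1, n - 1)]
            for i in range(n)]

def unsharp(x, amount=1.5):
    """Boost high frequencies: add back `amount` times (signal - blurred signal)."""
    return [v + amount * (v - b) for v, b in zip(x, blur3(x))]

# A soft edge from dark (50) to bright (200), as an enlargement might produce.
edge = [50, 50, 50, 50, 100, 150, 200, 200, 200, 200]
sharp = unsharp(edge)

print("before:", edge)
print("after: ", sharp)
print("range before:", min(edge), "to", max(edge))
print("range after: ", min(sharp), "to", max(sharp))
```

The edge gets steeper, which reads as "sharper," but the values on either side now dip below the darkest original pixel and overshoot the brightest one. That overshoot is the halo: brightness that was never in the picture.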

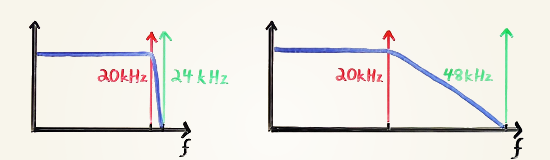

Another way to understand this topic is that interpolation is actually a low-pass filter! Yes, that is all it is. Let's look at the simplest way we can enlarge an image, which is to simply double up the pixels:

Notice that we now have a very sharp image but obviously something is wrong with it. It is all "pixelated." Those blocks introduce new high-frequency information that is not supposed to be there (the sharp edges of the blocks). A low-pass filter can remedy that by filing off those sharp edges, turning the result into the first enlargement we had.
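Here is the same idea on one row of pixels, as a minimal sketch. It assumes the soft "first enlargement" behaves like plain linear interpolation, and it uses the simplest low-pass filter there is, a two-tap average. The function names and pixel values are hypothetical.

```python
def double_up(x):
    """Pixel doubling: repeat every sample twice."""
    return [v for v in x for _ in range(2)]

def average_pairs(x):
    """Two-tap low-pass filter: average each sample with its right-hand neighbour."""
    return [0.5 * (a + b) for a, b in zip(x, x[1:])]

def linear_2x(x):
    """Direct 2x linear interpolation: insert the midpoint between neighbours."""
    out = []
    for a, b in zip(x, x[1:]):
        out += [a, 0.5 * (a + b)]
    out.append(x[-1])
    return out

row = [0, 4, 8, 4]
blocky = double_up(row)          # hard steps between blocks
smooth = average_pairs(blocky)   # steps filed off by the low-pass filter

print("doubled: ", blocky)
print("filtered:", smooth)
print("linear:  ", linear_2x(row))
```

Filtering the doubled-up row gives exactly the same numbers as interpolating linearly in the first place. The interpolator and "pixel doubling followed by a low-pass filter" are the same operation, and neither one adds any detail that was not in the original four samples.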
