Interference · Member · Joined Mar 14, 2018 · Messages: 88 · Likes: 112

Hi all. I am spending some learning time testing my good old E-MU 0404 soundcard in loopback. The card has combo inputs:

- TRS, Zin = 1 MOhm, FS = 12 dBV (~ 4 Vrms)

- XLR, Zin = 1.5 kOhm, FS = 6 dBV (~ 2 Vrms)
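For reference, the dBV figures above can be cross-checked against the Vrms values with a few lines of Python. This is only a sketch of the standard conversion (dBV is dB relative to 1 Vrms); the function names are my own, not from any tool.

```python
import math


def dbv_to_vrms(dbv: float) -> float:
    """Convert a level in dBV (dB relative to 1 Vrms) to volts RMS."""
    return 10 ** (dbv / 20)


def vrms_to_dbv(vrms: float) -> float:
    """Convert a voltage in volts RMS to dBV."""
    return 20 * math.log10(vrms)


# Full-scale levels quoted for the two inputs.
for name, fs_dbv in (("TRS", 12.0), ("XLR", 6.0)):
    print(f"{name}: {fs_dbv:.0f} dBV = {dbv_to_vrms(fs_dbv):.2f} Vrms")

# The output level measured with the multimeter, expressed in dBV.
print(f"2.07 Vrms = {vrms_to_dbv(2.07):.2f} dBV")
```

So the measured 2.07 Vrms output sits slightly above the 6 dBV full scale quoted for the XLR input.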

With a TRS-to-RCA adapter, the output measures 2.07 Vrms on my multimeter, and stays within a few parts per thousand from 50 to 1000 Hz. The line output impedance is 560 Ohm. Using this signal as a calibration reference, I estimated the input full scale to be a little lower than spec, while the output is a bit higher than spec; this probably has to do with the gain stages.

Coming to the loopback measurements, the first thing I discovered is that cables do matter. Comparing a cheap generic cable against an S/PDIF-rated (and therefore shielded) coaxial cable shows noise floor differences as high as 10 dB, especially between 2 and 10 kHz. This probably won't matter at all when reproducing sound with a digital line chain, but it is critical for measurements and could affect low-level signals.

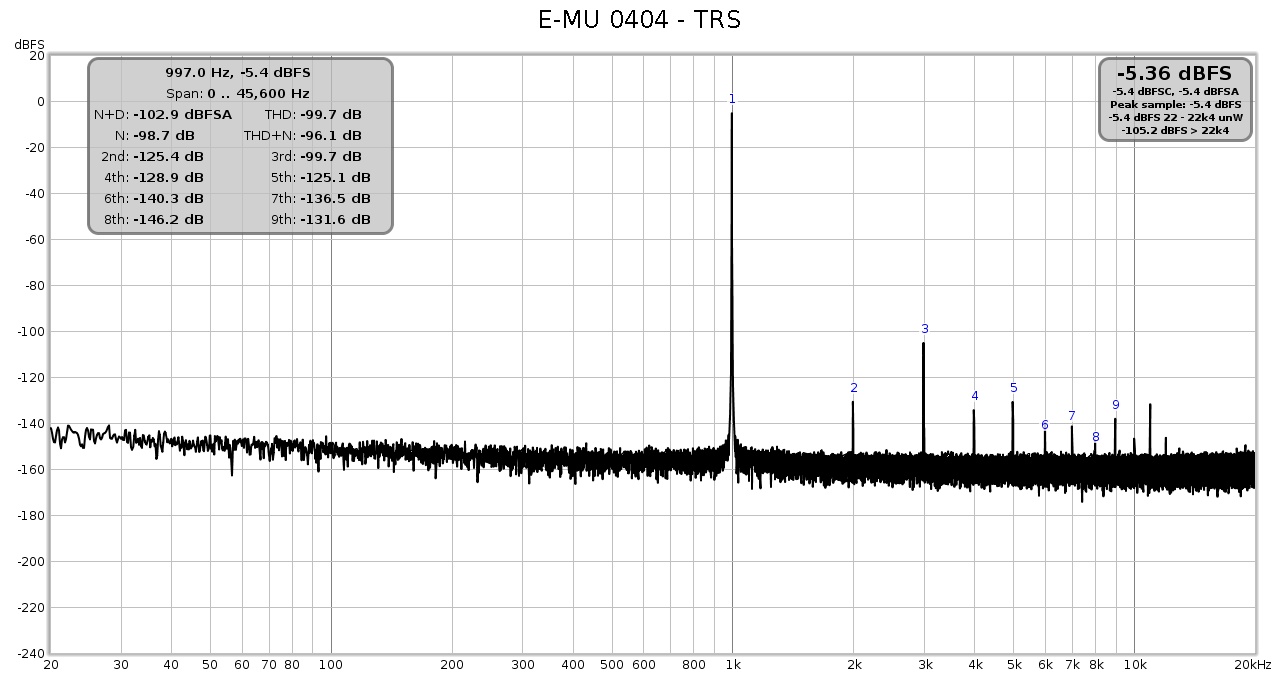

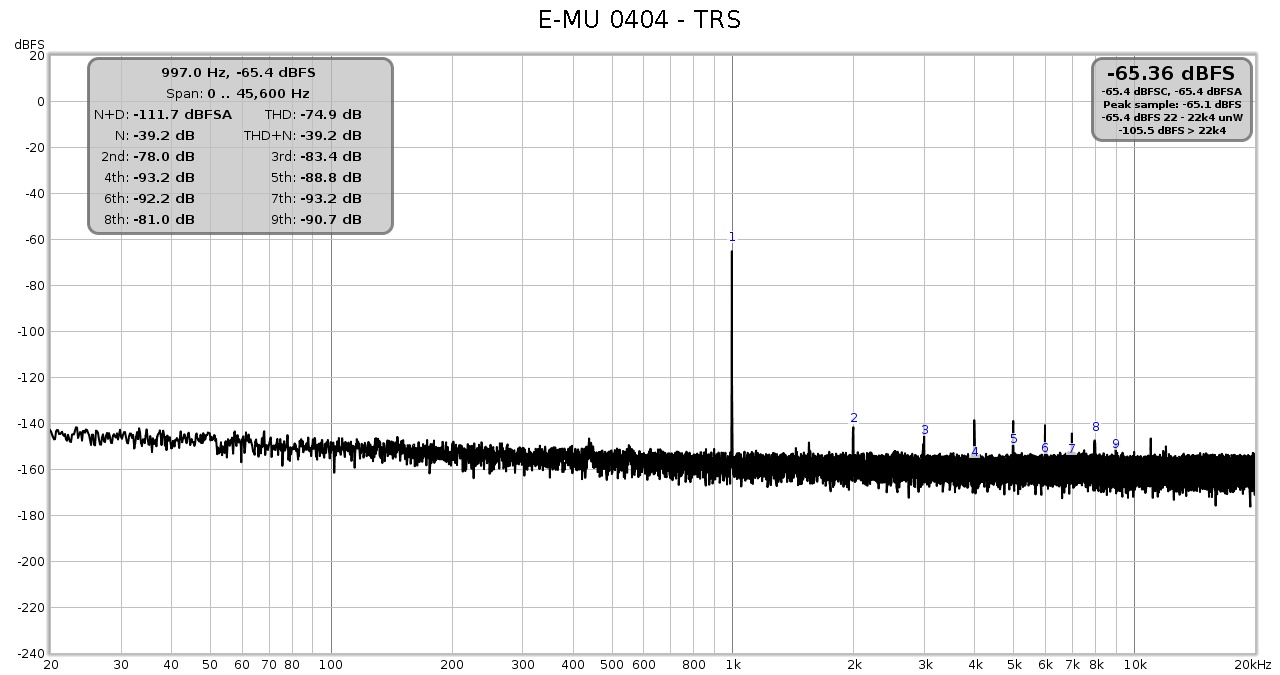

Now to the loopback measurements themselves (all with the good cable). I ran loopback tests at 0.0 dBFS and at -60 dBFS for the two input configurations. Note that:

- FS differs between the two inputs, so only relative measurements matter;

- the XLR input impedance is low, so it forms a divider with the 560 Ohm output impedance and the signal is scaled by 1.5 / (1.5 + 0.56), about 0.73 (roughly -2.8 dB).
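The loading effect in the second note can be worked out for both inputs. A minimal sketch, assuming the output behaves as an ideal source behind a purely resistive 560 Ohm and the inputs are purely resistive too (the function name is mine):

```python
import math


def divider_gain(z_in_ohm: float, z_out_ohm: float) -> float:
    """Voltage ratio at the input when a source with output impedance
    z_out_ohm drives an input with impedance z_in_ohm."""
    return z_in_ohm / (z_in_ohm + z_out_ohm)


Z_OUT = 560.0  # line output impedance, Ohm

for name, z_in in (("TRS", 1.0e6), ("XLR", 1.5e3)):
    gain = divider_gain(z_in, Z_OUT)
    gain_db = 20 * math.log10(gain)
    print(f"{name}: gain = {gain:.4f} ({gain_db:+.2f} dB)")
```

The TRS input barely loads the output, while the XLR input loses a little under 3 dB, which has to be kept in mind when comparing absolute levels between the two.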

0.0 dBFS

-60.0 dBFS

Observations and questions

- XLR input is ~2-3 dB quieter than the TRS input. I'm not sure whether this is consistent with the lower input impedance (1 MOhm in parallel with 560 Ohm shouldn't add much noise); it could also be down to the different gain stage. Either way, this is not too puzzling;

- distortion is ~2-3 dB lower for XLR when the signal is at full scale, but ~5 dB lower for TRS when the signal is at -60 dBFS. Note that the XLR input is closer to saturation with the 0.0 dBFS signal.
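As a rough sanity check on the noise point, the thermal (Johnson) noise of the source resistance each input sees can be estimated. This is only a sketch under assumptions that are mine: a 20 kHz bandwidth, 300 K, and purely resistive impedances. It ignores the noise of the gain stages themselves.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def parallel(r1_ohm: float, r2_ohm: float) -> float:
    """Equivalent resistance of two resistors in parallel."""
    return r1_ohm * r2_ohm / (r1_ohm + r2_ohm)


def johnson_noise_vrms(r_ohm: float, bandwidth_hz: float = 20e3,
                       temp_k: float = 300.0) -> float:
    """RMS thermal noise voltage of a resistor: sqrt(4 k T R B)."""
    return math.sqrt(4 * K_B * temp_k * r_ohm * bandwidth_hz)


Z_OUT = 560.0  # line output impedance, Ohm

for name, z_in in (("TRS", 1.0e6), ("XLR", 1.5e3)):
    r_eq = parallel(z_in, Z_OUT)
    v_n = johnson_noise_vrms(r_eq)
    print(f"{name}: R = {r_eq:.1f} Ohm, "
          f"noise = {v_n * 1e6:.2f} uVrms ({20 * math.log10(v_n):.1f} dBV)")
```

Under these assumptions both inputs see well under a microvolt of thermal noise from the source resistance, and the two cases differ by less than 1.5 dB, so the resistances alone look unlikely to account for the whole difference.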

Can anybody give an interpretation of this? I am curious.
