What is the exact wording?

If he wants to exchange with you and us about sighted vs. blind testing and his research.




The frailty of Sighted Listening Tests

- Thread starter patate91


- Thread Starter

- #42

What is the exact wording?

We are a couple of people on ASR forum that have an exchange with Amir about sighted speakers evaluation.

I wanted to know if you would be interested to exchange with us. I think a lot of people could benefits from this.

Regards

I think of what an optometrist does when you get an eye exam. You look at the letters on the wall. He makes a change: "Is that better or worse?" A sighted test. Even an untrained person can go from blurry vision to vision good enough to function in the everyday world this way.

Then there's this:

https://www.eyeglassguide.com/my-vi...rmines the,into a prescription for eyeglasses.

KaiserSoze

Addicted to Fun and Learning

- Joined

- Jun 8, 2020

- Messages

- 699

- Likes

- 592

Importantly from Olive's article:

"Finally, the tests found that experienced and inexperienced listeners (both male and female) tended to prefer the same loudspeakers, which has been confirmed in a more recent, larger study. The experienced listeners were simply more consistent in their responses. As it turned out, the experienced listeners were no more or no less immune to the effects of visual biases than inexperienced listeners."

So a "trained" listener simply comes to conclusions faster and is more consistent, but training doesn't appear to affect their biases. Perhaps there is a different training bias that this article doesn't discuss; if anyone has a link or information on that, it would be helpful.

I've read this before (the quote from Olive's article). There is something about it that bugs me. Experienced listeners are just as strongly affected by bias as everyone else, but they are more consistent. It would seem that experienced listeners have consistent biases whereas inexperienced listeners have inconsistent biases. Does not bias imply preference, and does not preference imply consistency? If experienced listeners are biased and also more consistent, experienced listeners are more biased. I don't see any way around simple logical inference, but then why didn't Olive say plainly that experienced listeners are more biased? The only way I see around the problem is if by "consistent" he means that they are more consistent in preferring better-measuring speakers, but in this case they are less biased than inexperienced listeners. Am I the only person who sees a problem with this?

- Joined

- Feb 23, 2016

- Messages

- 20,759

- Likes

- 37,600

Your post doesn't make any sense. The bias sighted had no bearing on the consistent performance blind. The bias isn't the source of the consistency.

- Thread Starter

- #46

I've read this before (the quote from Olive's article). There is something about it that bugs me. Experienced listeners are just as strongly affected by bias as everyone else, but they are more consistent. It would seem that experienced listeners have consistent biases whereas inexperienced listeners have inconsistent biases. Does not bias imply preference, and does not preference imply consistency? If experienced listeners are biased and also more consistent, experienced listeners are more biased. I don't see any way around simple logical inference, but then why didn't Olive say plainly that experienced listeners are more biased? The only way I see around the problem is if by "consistent" he means that they are more consistent in preferring better-measuring speakers, but in this case they are less biased than inexperienced listeners. Am I the only person who sees a problem with this?

Trained listeners know what to listen for and are easier to "manage". They can help a lot and save valuable time and money. In other words, they are more "efficient". But as individuals they are as much subject to bias as untrained listeners. There are certainly untrained listeners who can be very unreliable. Consistency is not directly linked with bias.

KaiserSoze

Addicted to Fun and Learning

- Joined

- Jun 8, 2020

- Messages

- 699

- Likes

- 592

Now this uncovers a very interesting problem. The only way you can know whether the auto-refractor does what it is supposed to do is by setting up the phoropter and allowing the subject to compare visual acuity for the Rx values given by the auto-refractor vs. other Rx numbers that differ slightly from the numbers given by the auto-refractor. Since the phoropter is relied on to validate the auto-refractor, the phoropter can never be replaced by the auto-refractor. This observation isn't just some clever mind puzzle or whatever. This is the reality.

KaiserSoze

Addicted to Fun and Learning

- Joined

- Jun 8, 2020

- Messages

- 699

- Likes

- 592

Your post doesn't make any sense. The bias sighted had no bearing on the consistent performance blind. The bias isn't the source of the consistency.

Well, it was a given that if I posted what I posted it wouldn't take long at all before someone would come along and write something like, "Your post doesn't make any sense." Further predictable was the fact that the first person to do this would demonstrate the inability to write a syntactically correct sentence. What I wrote makes perfect sense, and is not concerned with anything other than what Olive wrote in that quote. If you have any honest interest in knowing whether it really makes sense, you're going to have to first learn to comprehend English and then look only at the quote, nothing else. I have pointed out the reason that it is logically suspicious, and the reason I gave makes perfect, 100% sense.

- Joined

- Feb 23, 2016

- Messages

- 20,759

- Likes

- 37,600

Results of posting from a phone. Shortcut some logical inferences I thought you'd be able to follow. Maybe I'll post in more specificity later when it's not on a phone.

- Joined

- May 18, 2020

- Messages

- 1,285

- Likes

- 2,938

Well, it was a given that if I posted what I posted it wouldn't take long at all before someone would come along and write something like, "Your post doesn't make any sense." Further predictable was the fact that the first person to do this would demonstrate the inability to write a syntactically correct sentence. What I wrote makes perfect sense, and is not concerned with anything other than what Olive wrote in that quote. If you have any honest interest in knowing whether it really makes sense, you're going to have to first learn to comprehend English and then look only at the quote, nothing else. I have pointed out the reason that it is logically suspicious, and the reason I gave makes perfect, 100% sense.

Well, if you want a second opinion, your post made no sense. You didn't understand Olive's quote, and thereby confused and conflated two separate things. First, experienced listeners, tested blind, enter preference scores similar to each other, whereas inexperienced listeners, tested blind, enter relatively scattered scores, but when summed and averaged and processed, both groups' scores put the same speaker in the same relative position in the preference hierarchy.

Then came a period (or a full stop, depending on your geographical persuasion) which means the next sentence isn't necessarily connected to the previous, as indeed in this case it wasn't, because second: when tested sighted, both groups displayed the same degree of vulnerability to sighted bias.

The two thoughts were not connected. One did not follow on from the other. No doubt the quote has been mangled by editing and repetition, but one as syntactically sophisticated as you should have no trouble picking it apart.

And finally (not that he needs me to defend him) I thought Blumlein's all-thumbs one-liner was a model of economy, elegance, and grammatical and syntactical accuracy.
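To make the distinction above concrete, here is a minimal Python toy simulation. Everything in it is an assumption for illustration, not data from Olive's study: the four speaker scores, the noise levels standing in for "experienced" and "inexperienced" listeners, and the size of the sighted bias toward speaker "D" are all made up. Each rating is modeled as the speaker's true score, plus a per-speaker bias offset shared by the whole group, plus random noise. Consistency is the noise level and bias is the offset; the model sets them independently.

```python
import random
import statistics

# Hypothetical "true" (blind) preference scores for four speakers; made-up numbers.
TRUE_SCORES = {"A": 7.0, "B": 6.0, "C": 5.0, "D": 4.0}


def simulate_group(n_listeners, noise_sd, bias, rng):
    """Simulate ratings as true score + bias offset + per-rating noise.

    noise_sd stands in for (in)consistency: how scattered individual ratings are.
    bias is a per-speaker offset shared by everyone in the group (e.g. a boost
    from seeing a brand or price); it is set independently of noise_sd.
    """
    ratings = {s: [] for s in TRUE_SCORES}
    for _ in range(n_listeners):
        for speaker, true_score in TRUE_SCORES.items():
            offset = bias.get(speaker, 0.0)
            ratings[speaker].append(true_score + offset + rng.gauss(0.0, noise_sd))
    return ratings


def summarize(ratings):
    """Return (speakers ranked by mean rating, average per-speaker std deviation)."""
    means = {s: statistics.mean(r) for s, r in ratings.items()}
    ranking = sorted(means, key=means.get, reverse=True)
    avg_spread = statistics.mean(statistics.stdev(r) for r in ratings.values())
    return ranking, avg_spread


def run(seed=1, n_listeners=1000):
    rng = random.Random(seed)
    # Hypothetical conditions: blind (no offset) vs sighted (speaker D boosted).
    conditions = {"blind": {}, "sighted": {"D": 3.5}}
    # Hypothetical noise levels: experienced listeners are simply less scattered.
    groups = {"experienced": 0.3, "inexperienced": 1.5}
    results = {}
    for cond, bias in conditions.items():
        for group, sd in groups.items():
            results[(cond, group)] = summarize(simulate_group(n_listeners, sd, bias, rng))
    return results


if __name__ == "__main__":
    for (cond, group), (ranking, spread) in run().items():
        print(f"{cond:8s} {group:13s} ranking={ranking} spread={spread:.2f}")
```

With these made-up parameters, both groups produce the same ranking within each condition, the experienced group's ratings are far less scattered, and the hypothetical sighted offset reorders both groups' rankings in the same way. In this model, consistency and bias are separate knobs.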

I figured you would mis-position the discussion and that is precisely what you did.

The question is not whether random sighted testing by just anyone is valid; it is not. The question is specifically whether trained listeners can provide useful feedback even when their testing is sighted, and when they have the benefit of the objective measurements.

If you had stated the debate accurately, it could have gotten you an answer that he could provide in that message rather than pondering if he is going to come here to debate a bunch of subjectivists defending sighted listening.

I've read this before (the quote from Olive's article). There is something about it that bugs me. Experienced listeners are just as strongly affected by bias as everyone else, but they are more consistent. It would seem that experienced listeners have consistent biases whereas inexperienced listeners have inconsistent biases. Does not bias imply preference, and does not preference imply consistency? If experienced listeners are biased and also more consistent, experienced listeners are more biased. I don't see any way around simple logical inference, but then why didn't Olive say plainly that experienced listeners are more biased? The only way I see around the problem is if by "consistent" he means that they are more consistent in preferring better-measuring speakers, but in this case they are less biased than inexperienced listeners. Am I the only person who sees a problem with this?

I think this is mixing up two separate studies. The sighted vs. blind study simply showed how sighted bias can affect a listener's perception of sound quality and why the tests need to be done blind. The trained vs. untrained listeners study was done to show that Harman could use fewer trained listeners and achieve the same statistical significance as a much larger group of untrained listeners; the listening tests were still done blind, though. Neither of these studies claims that trained listeners are less affected by bias. In fact, there is a chart that shows the "experienced listeners" actually differentiated much more in the blind and sighted portions of the test. These were all Harman employees, so I'm not sure whether "experienced" means the same as their now-formal training, but the study does mention experience with this sort of listening test.

Here's that chart:

- Thread Starter

- #53

I figured you would mis-position the discussion and that is precisely what you did.

The question is not whether random sighted testing by just anyone is valid; it is not. The question is specifically whether trained listeners can provide useful feedback even when their testing is sighted, and when they have the benefit of the objective measurements.

If you had stated the debate accurately, it could have gotten you an answer that he could provide in that message rather than pondering if he is going to come here to debate a bunch of subjectivists defending sighted listening.

You're the one who said in other threads that, because of your training as a listener plus your experience, you were less subject to bias.

I hope people will help lead the exchange. But first, let's see if Dr. Olive accepts.

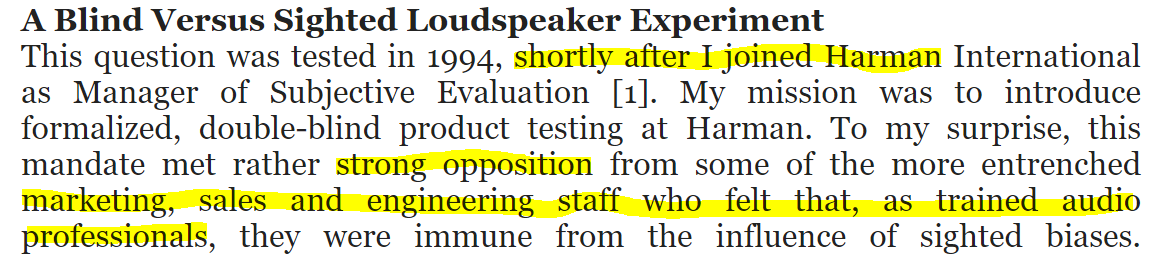

OK, let's dig into the study and clear out some confusion. I was going to tell this story as Sean told it to us in person, but I see that it is right there in his blog post:

Focusing on the first sentence: there was essentially a revolt against the new people coming in and saying that controlled tests are necessary for proper evaluation of speakers. The "old guard" immediately took this as a challenge to their expertise and stated that they knew what the speaker sounded like and had no need for any academics to tell them otherwise. So Sean and crew set out to prove them wrong.

Now the key part is the last section I have highlighted. The "trained audio professionals" were not trained/critical listeners in the sense I am talking about. They were existing sales, marketing, and engineering staff who had been in the audio business for a long time at Harman and elsewhere. Heaven knows we all agree that such jobs do not automatically qualify someone as a trained listener.

A trained listener in my book gets paid only to evaluate audio fidelity. He is properly taught what to look for, and he makes a living performing such tests. No one would have had such experience before Sean arrived, and such a person would certainly not hold a position in sales, marketing, etc.

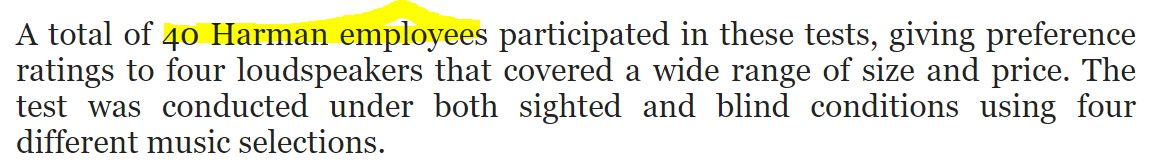

Here is the next bit:

All testers -- experienced or otherwise -- were Harman employees. No one like me, who has no stake in the company or its products, was in the study. Remember, the goal was to prove the Harman employees wrong.

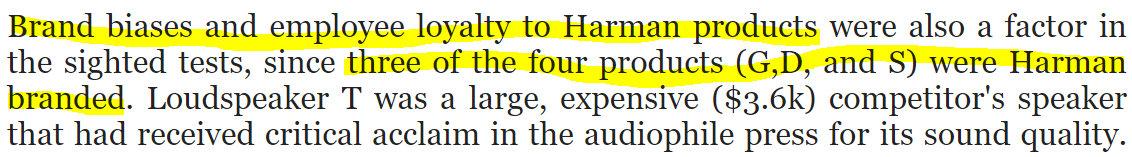

Here is the punchline and why you don't want to extrapolate from this study to what I am doing at ASR:

Of course Harman employees would have strong views regarding their own products in sighted tests that would disappear in blind tests. You all keep saying "we are talking about biases all humans have." Well, no. None of you have an employee relationship with the products being tested, and neither do I. None of us have pride of brand or engineering in the products being tested either. So what impacted these employees the most in sighted tests would not be in play in ours.

That is NOT to say that was the only bias. Seeing an expensive speaker like T and a cheap one, even from Harman, does bias people. Am I affected by that? Maybe sometimes. But I am on record recommending cheap $99 speakers as well as ugly DIY speakers from people I am not necessarily fond of. In other words, I have demonstrated isolation from such biases to a high degree.

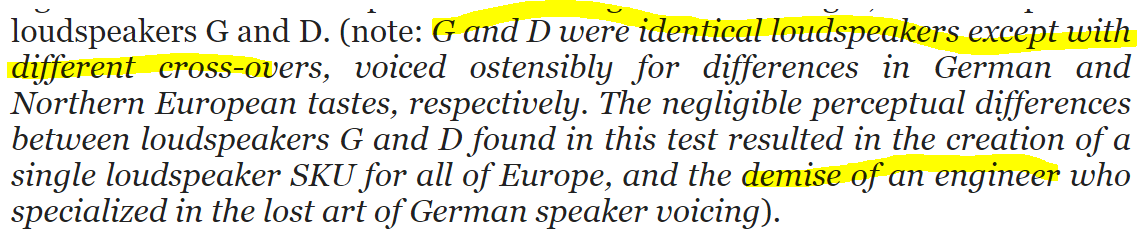

And of course there was silliness like this:

This is a very strong bias: people take positions in a company (e.g., "German people want a particular sound") and then go on to create such products. Separating themselves from their own children becomes very hard. That is why my compression team, despite having their own trained listeners/testers, would come to me for bit decisions for listening tests. I was not biased like the team that created the technology. As a result, I would routinely be harder on the technology and find flaws that needed to be fixed.

Likewise, that engineer needed to be proven wrong and let go.

Discussion and Conclusions

This study was not aimed at determining the usefulness of professionally and formally trained listeners in sighted evaluations. It was designed to solve an internal problem where people did not want to believe in controlled testing, period. What the researchers showed is that being in the audio industry doesn't make you good at evaluating speakers in sighted testing. This "industry experience" was described as the person having training, which is why people here keep referring to this study even though it doesn't apply.

There are also other differences. I come into most listening sessions fully armed with the objective performance of a speaker, which gives me a strong direction as to what the speaker may do and what faults it has. I am trained to listen critically, past the beauty of the music, across a wide range of domains and audio impairments. Someone like me was not tested in the study.

Note that, as I have corrected people countless times, I am not infallible. I may be biased, but the biggest source of error is that I am not sitting there comparing 4 speakers at once that get the same score. I do go back and forth manually at times, but that is cursory. So my reference for what is good is somewhat variable. A person in the Harman tests is in a better situation than I am, with four speakers to compare against each other.

So some amount of error exists in my subjective assessments. With a correlation of 75% or better with good measurements, whatever error there is is small. It has to be, or our measurements are quite wrong!


And yet you refused to say that (trained listeners) to Sean. You baited him as if this were the generic challenge of sighted versus blind, which it absolutely is not. If he comes and is disappointed with your representation and leaves, I know who to hold accountable.

- Thread Starter

- #56

And yet you refused to say that (trained listeners) to Sean. You baited him as if this were the generic challenge of sighted versus blind, which it absolutely is not. If he comes and is disappointed with your representation and leaves, I know who to hold accountable.

I shared the link to this thread too.


I'm curious why you don't respond to any of the points that Amir has made regarding the paper and blog post. He even helpfully bolded some statements / clarifications / conclusions. Since you cited the paper / blog post, do you agree with his analysis? Or do you disagree?

Discussion and Conclusions

This study was not aimed at determining the usefulness of professionally and formally trained listeners in sighted evaluations. It was designed to solve an internal problem where people did not want to believe in controlled testing, period. What the researchers showed is that being in the audio industry doesn't make you good at evaluating speakers in sighted testing. This "industry experience" was described as the person having training, which is why people here keep referring to this study even though it doesn't apply.

There are also other differences. I come into most listening sessions fully armed with the objective performance of a speaker, which gives me a strong direction as to what the speaker may do and what faults it has. I am trained to listen critically, past the beauty of the music, across a wide range of domains and audio impairments. Someone like me was not tested in the study.

Note that, as I have corrected people countless times, I am not infallible. I may be biased, but the biggest source of error is that I am not sitting there comparing 4 speakers at once that get the same score. I do go back and forth manually at times, but that is cursory. So my reference for what is good is somewhat variable. A person in the Harman tests is in a better situation than I am, with four speakers to compare against each other.

So some amount of error exists in my subjective assessments. With a correlation of 75% or better with good measurements, whatever error there is is small. It has to be, or our measurements are quite wrong!

Good points. The vague definition of "experienced listeners" is the weak part of the study, but the study is still the basis for Harman conducting its listening tests blind. Logic says that if Harman thought their trained listeners were just as reliable in sighted evaluations, they would have no need to run the tests blind.
