That's a lot of questions. I'll do my best.

... answers ....

O.K., now it is clear what you were looking for. It really wasn't clear to me before; it seemed open and vague.

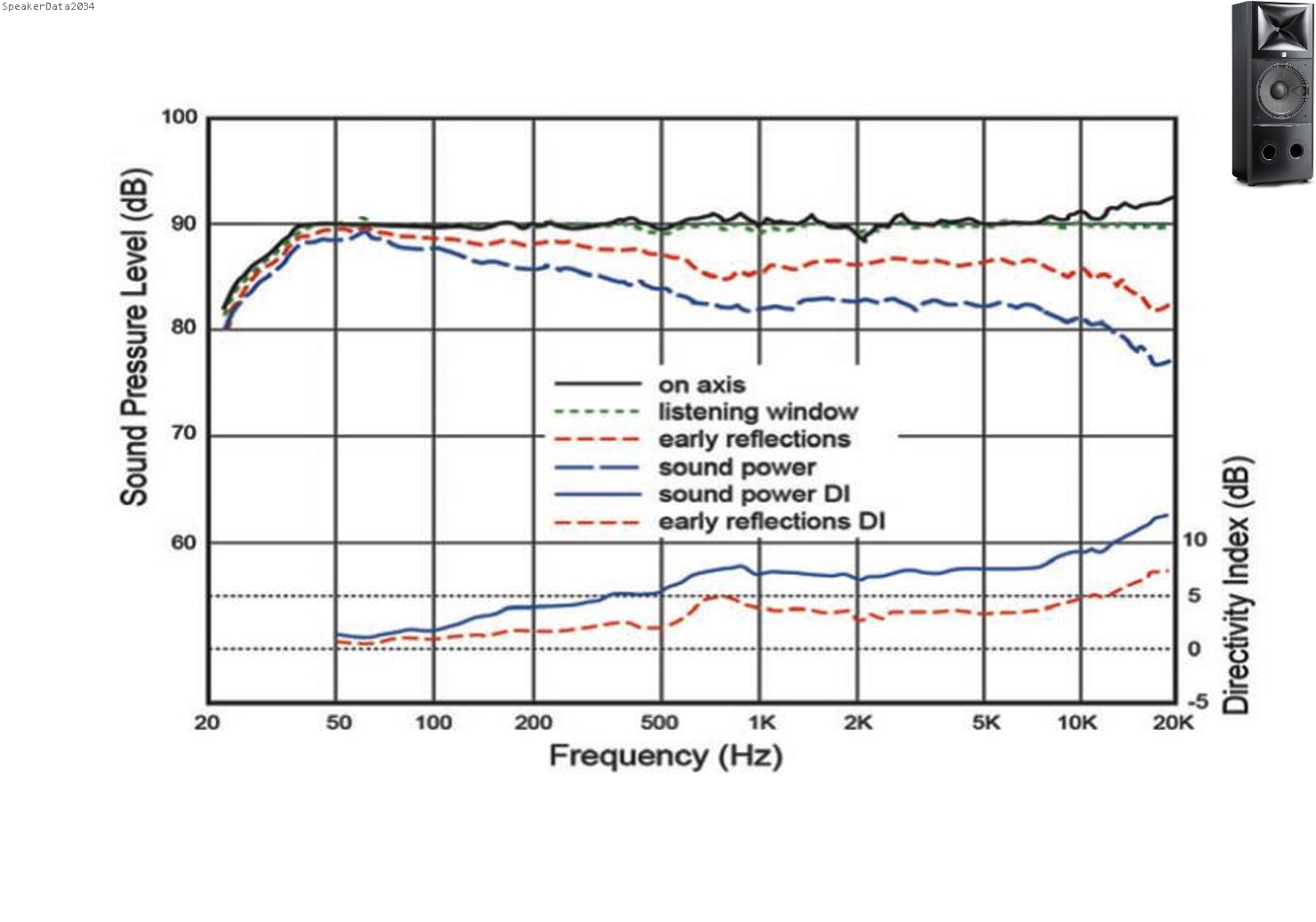

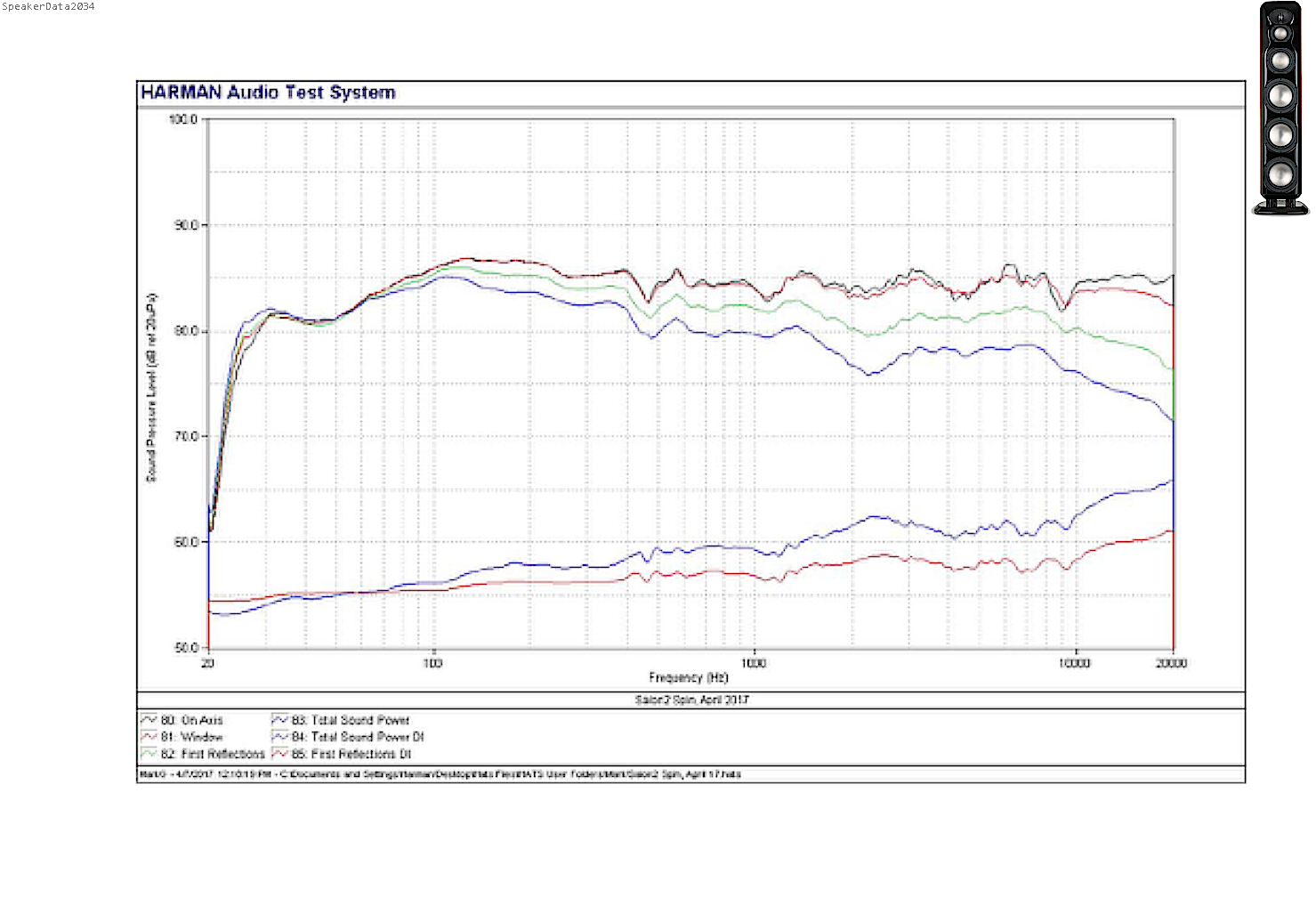

Suppose ASR only posted plots and perhaps some comments on the plots (talking 'bout the speakers).

I can tell you with absolute certainty that, while I understand how the plots are taken and what they show, and while the plots do tell me something about the tonal character and the directivity, I honestly cannot predict from all these plots how these speakers will sound in my room.

I can guesstimate how they will sound in an open field and how the tonal balance might change when I walk around them.

Can't say how it will sound in my room though. Way too inexperienced for that.

In this case I can look for reviews, perhaps even from people I kind of trust.

If Amir decided to post only plots, I would not be much wiser. When he also comments on the sound (in his room), he is just describing how he hears it. It is additional info that adds to the whole review.

I don't give a crap whether he does this sighted or blind, nor whether he compares it to anything and to what (I haven't heard his speakers, so I have no reference anyway).

His impressions and comments may tell me more than all the plots.

When other reviewers review speakers, you can be 100% certain the listening will be sighted. You can't be certain about the reviewer's motives or taste either (unless you know other things he reviewed and agree with those reviews).

Blind takes away some biases. That's all. Nice if you have something to prove.

As Olive said, there is a place for sighted listening as well.

I get the defensive stuff from Amir and why he feels compelled to spend time defending his integrity, and I get that there are sometimes discrepancies and inconsistencies. I don't know if it is worth a thread and many hours that could be spent on more useful things.

I suppose those who read the discussed papers and comments already know Olive's standpoint on this. If not, he hasn't made things clear enough.

Personally, I think it is interesting to debate the pros and cons of blind vs. sighted tests.

To me, it's a waste of time to argue about the site's owner and whether and how much he agrees with someone else.

I don't even care how good a listener he is. I really don't. I only care how something will sound to me in my house. I don't give a crap about other people's research either. I read it, digest it, and if it gets me curious I'll follow up on it, audition it, etc.

All the research I have read, and also my own experience, tells me that to test/compare with a high degree of accuracy a test should be:

Blind, level matched, done with well-chosen test material, well-defined test parameters and optimal conditions (checked by measurement), repeatable, statistically relevant, and it must be clear how the test was performed and how the conclusions were drawn.
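To put a number on the "statistical relevance" part, here is a rough sketch of my own, assuming a simple two-choice blind comparison (ABX style) where pure guessing is right half the time. The function names, the 0.05 threshold and the trial counts are just my assumptions for illustration, not anything taken from the papers under discussion.

```python
from math import comb


def p_value_at_least(correct: int, trials: int, chance: float = 0.5) -> float:
    """Probability of scoring `correct` or more out of `trials` by pure guessing
    (one-sided binomial tail)."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


def min_correct_needed(trials: int, alpha: float = 0.05, chance: float = 0.5):
    """Smallest score whose guessing probability is at or below `alpha`.
    Returns None if even a perfect score is not enough."""
    for correct in range(trials + 1):
        if p_value_at_least(correct, trials, chance) <= alpha:
            return correct
    return None


if __name__ == "__main__":
    # Hypothetical session lengths, chosen only as examples.
    for n in (10, 16):
        needed = min_correct_needed(n)
        print(n, "trials:", needed, "correct needed,",
              "p =", round(p_value_at_least(needed, n), 4))
```

Under those assumptions, a 16-trial session needs 12 correct answers before guessing becomes an unlikely explanation (roughly a 4% chance). A handful of trials cannot show much either way, which is why the number of trials has to be part of the test description.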

One can draw certain conclusions from such a test. It won't tell me whether I will like the speaker or whether it works well in my home. I may have a feeling that it might or might not.

A sighted test in my home will tell me all I want/need to know and is very easy and quick to do. That is when sighted has its place.

I don't care what others think about that or whether Amir is inconsistent now and then for whatever reason. I expect the man to be honest with himself and have no reason to think he is not.

All people that have an opinion have fans, followers, people that don't care, haters. That's life.

I can understand that others may feel differently about all this, but I cannot put myself in their shoes.