I have brought this up several times previously.

https://www.audiosciencereview.com/...ts-of-benchmark-dac1-usb-dac.3708/#post-88831

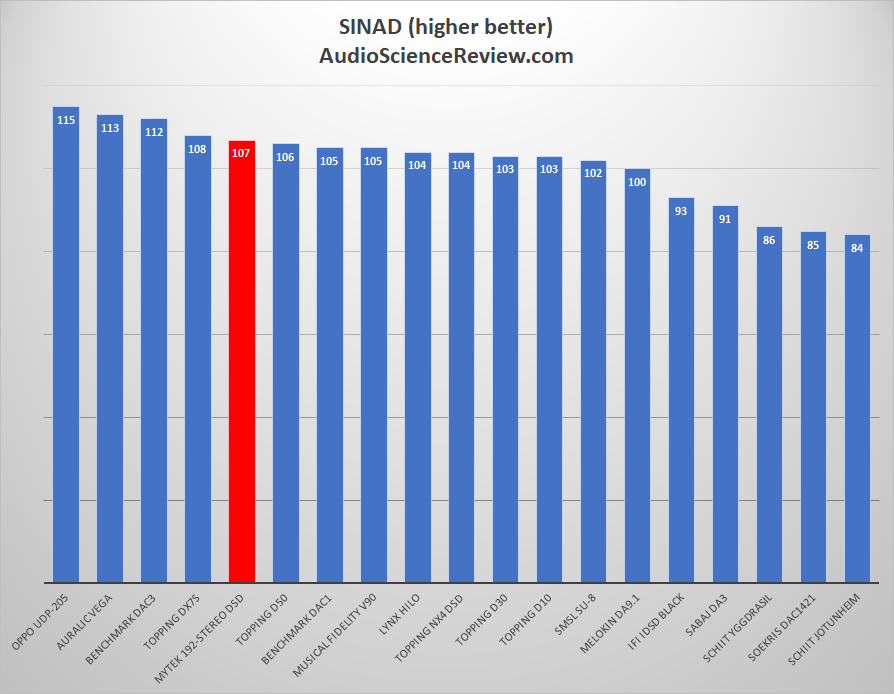

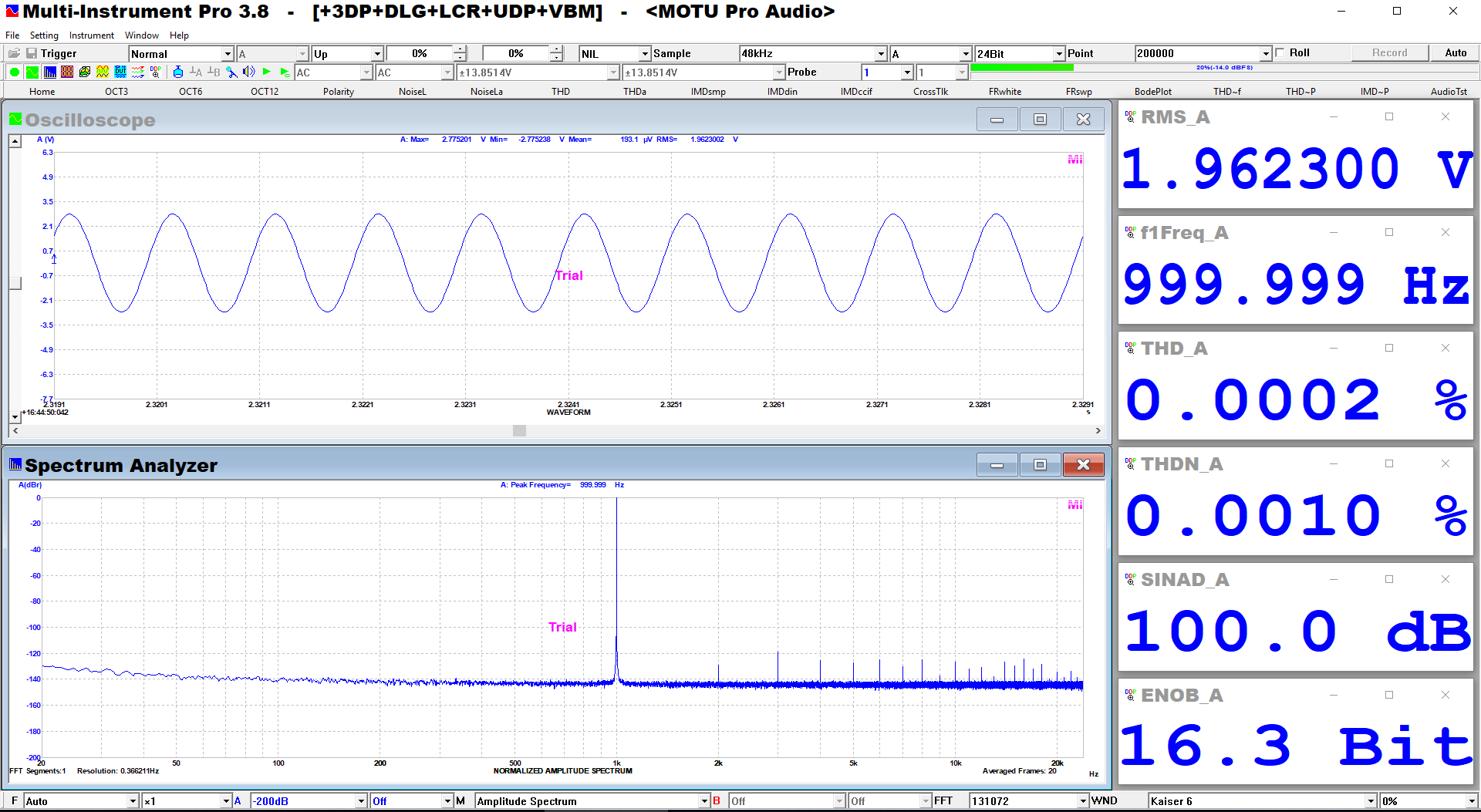

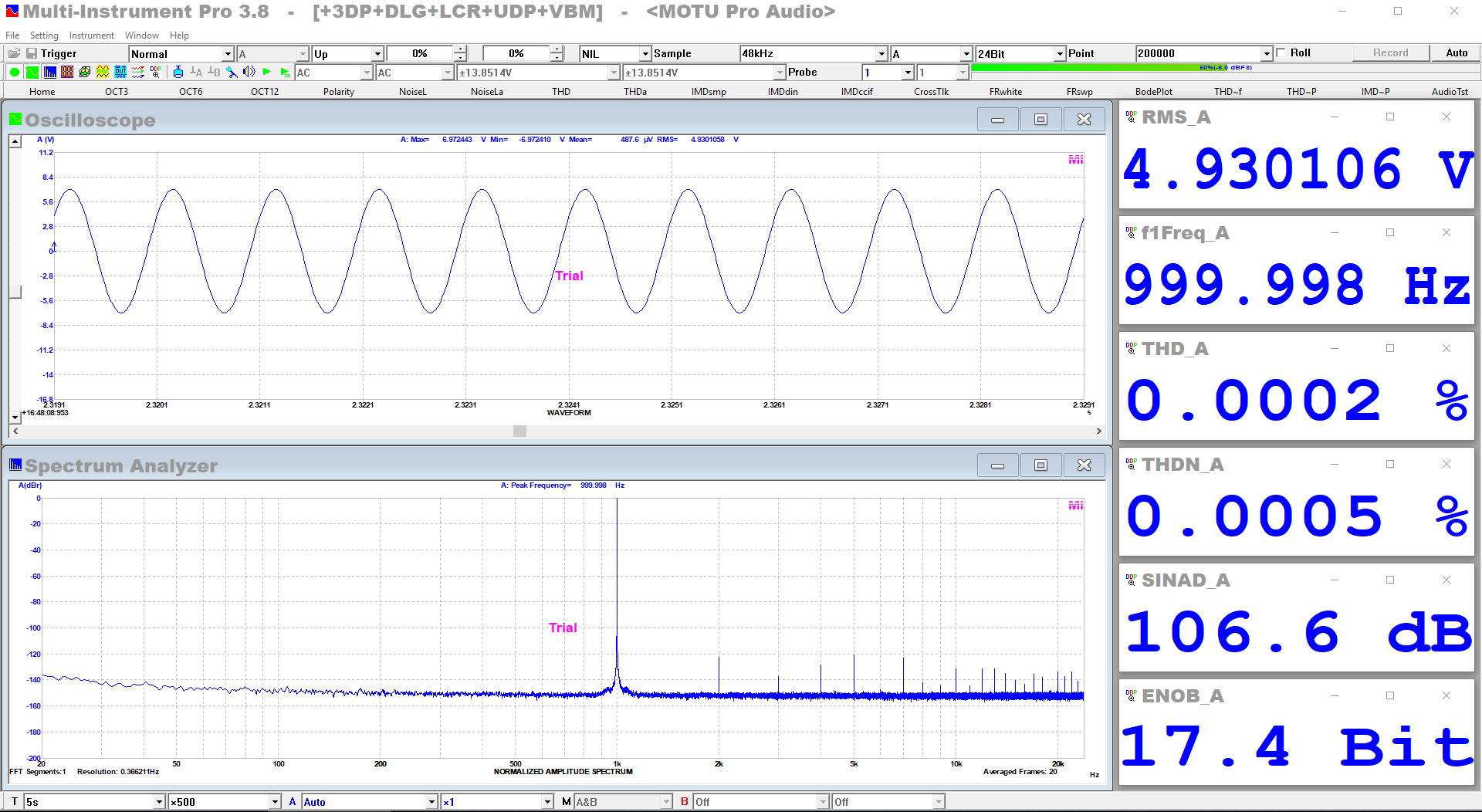

The S/N+D comparison bar graph is certainly not a fair or level playing field due to the widely different output levels. In short, it is rather meaningless. The commentary on SINAD numbers in reviews should take note of the relative level and call it for what it is, not just focus on the absolute number.

We also have a whole slew of D/A converters that appear inherently faulty and, it would seem, need help with tweaking their output levels to maximize S/N and minimize their THD/clipping near 0 dBFS.

With DACs, that is often -1 dBFS instead of 0 dBFS.

IMO, D/As with poor performance at or around 0 dBFS should be called out for their poor design and their numbers published as is. After all, it was only a few decades ago that 0 dBFS in all D/As gave the absolute best-case figures.

This is a problem. As of late I have started to think that in the Dashboard view I should show the best case scenario even if it means lowering the volume to get around extreme clipping and such. Open to feedback on this.

The concept of helping a product achieve its best S/N and THD figures seems ridiculous to me. What's next, redesigning Schiit products before testing them?

Where line-level devices put out obscenely high levels that are essentially unusable in normal operation, in an attempt to get industry-leading S/N numbers, those tactics should also be called out for what they are.

2.0V* has been a consumer level standard for digital since the introduction of the Compact Disc in 1983. At the time, there were various reasons for that number (minimum residual noise in line stages, the current output LSBs and of course, maximizing S/N). Previous to that, 'line' level for amplifier inputs, and most devices was 150mV for full power.

Pro level is a different story, with its own standards for level, and S/N should be referenced to those standards.

Headphone amplifier S/N should be referenced to their specified rated output level, not their maximum output achieved.

Amplifiers have always been referenced to 1 watt (2.83V@8ohm) and their specified rated power.
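To put numbers on the two amplifier reference points, here is a rough Python sketch. The function names and the 100 W example are my own placeholders, and it assumes the residual noise stays the same regardless of the output level you reference it to.

```python
import math

def voltage_for_power(power_w: float, load_ohms: float) -> float:
    """RMS voltage that delivers power_w into a resistive load."""
    return math.sqrt(power_w * load_ohms)

def snr_shift_db(rated_power_w: float, reference_power_w: float = 1.0) -> float:
    """How many dB higher the S/N reads at rated power than at the
    reference power, assuming the residual noise does not change."""
    return 10.0 * math.log10(rated_power_w / reference_power_w)

if __name__ == "__main__":
    # 1 watt into 8 ohms
    print(f"1 W into 8 ohms = {voltage_for_power(1.0, 8.0):.2f} V")
    # hypothetical 100 W amplifier
    print(f"S/N at 100 W reads {snr_shift_db(100.0):.1f} dB higher than at 1 W")
```

Same amplifier, same hiss, yet the two S/N figures differ by the full power ratio, which is why the reference level has to be stated alongside the number.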

The most important number is the residual noise in µV. Wideband and weighted. This is rarely specified. With more and more gear running SMPS supplies, the 22 kHz bandwidth limit hides a lot of sins. I work back from Amir's S/N numbers to the approximate total residual, as it is always the limiting factor. It's what gets amplified right through the chain and becomes the hiss you hear with your efficient speakers and high gain amplification. That noise is what buries your bits.
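For anyone who wants to do the same back-calculation, a minimal Python sketch follows. The function name and the example figures are made up for illustration, not taken from any review. It treats the published figure as noise only; if the number is SINAD, distortion is included, so the result is an upper bound on the actual noise.

```python
def residual_noise_uv(output_vrms: float, snr_db: float) -> float:
    """Estimate residual noise in microvolts RMS from an output level
    and an S/N (or SINAD) figure measured at that level."""
    return output_vrms * 10.0 ** (-snr_db / 20.0) * 1e6

if __name__ == "__main__":
    # Hypothetical devices: same published dB figure, different output levels.
    for name, vrms, snr in [("DAC A", 2.0, 110.0), ("DAC B", 4.0, 110.0)]:
        print(f"{name}: {vrms} V, {snr} dB -> {residual_noise_uv(vrms, snr):.1f} uV")
```

Two devices with the same dB figure but a 2:1 difference in output level have a 2:1 difference in residual noise, which is the point about the bar graph not being a level playing field.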

* Incidentally, a last-minute change to the consortium's specs called for an increase from 1.4V to 2.0V. The reasons for this are not in any of the early CD documentation and books that I have; however, I believe it was an attempt to let the already designed Toshiba 14-bit budget D/A converters** hit the minimum S/N spec the consortium (the Japanese manufacturers) wanted for worldwide release, and to not let Sony gain (pun intended) the upper hand in published specs. Consider that Sony had already released the CDP-101, with a 2.0V rated output, in the Japanese home market 6 months prior...

**TD6710N which appeared in several early budget machines.

Here is that last-minute change to production for the Hitachi DA-1000, dated March 1, 1983.

Basically, output buffer gain change and ~3dB of S/N & DR improvement for free, just in time for the worldwide debut of CD...
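The ~3dB is just the level ratio expressed in dB. A quick Python check, assuming the noise at the output stays put while full-scale level goes from 1.4V to 2.0V (the function name is only for illustration):

```python
import math

def level_change_db(old_vrms: float, new_vrms: float) -> float:
    """Change in level, in dB, when the output voltage goes from old to new."""
    return 20.0 * math.log10(new_vrms / old_vrms)

if __name__ == "__main__":
    gain = level_change_db(1.4, 2.0)
    print(f"1.4 V -> 2.0 V is {gain:.2f} dB")
```

If the noise floor really is unchanged, that same figure shows up directly as the S/N and DR improvement.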

So, as you can see, output level and S/N games have been going on for many decades.