
MQA Deep Dive - I published music on tidal to test MQA

- Thread starter GoldenOne


- Status

- Not open for further replies.

Huh? This is the first quote from them:

'"MQA has no DRM component or application outside the studio," it says. "We have no opinion on this beyond noting that DRM is a futile exercise for general music distribution." '

This tells me they absolutely understand that their format better not have DRM. And that they know the labels don't care about being told about that or they would not be quoted saying so.

Control ⊃ DRM ⊃ Copy-Protection

If you replace "⊃" by "=", then the statement is no longer true.

Grooved

Addicted to Fun and Learning

- Joined

- Feb 26, 2021

- Messages

- 679

- Likes

- 441

The MQA Tag Restorer app from MQA is still working and can still get those hidden MQA tags.

There are other ways to do it, but it's indeed the simple one. Will reject any non-MQA file.

awdeeoh

Member

- Joined

- Feb 24, 2020

- Messages

- 68

- Likes

- 28

I've yet to find any instance where the hifi version of an mqa track was not simply the mqa file without flagging.

If you're aware of any I'd be happy to check. 2lno or other purchase sites are a different situation (or at least I would sincerely hope they don't have the same issue.....)

Try the UMG ones.

Grooved

Addicted to Fun and Learning


... "MQA provides the opportunity to deliver the exact sound heard from the real master without actually putting the crown jewels out there to be stolen."

Don't forget the difference between the MQA file and the MQA technology. If this line were true, he should say "MQA technology" rather than "MQA", because the file by itself doesn't achieve that goal. And that assumes file plus MQA processing does achieve it, which I also doubt, for this main reason: almost all mixes and masters are done in PCM, and while you are mastering, you are listening to a PCM file being converted to analog to feed the amp and speakers. You make your adjustments based on that listening.

If you were to use another codec in the chain, there is a big chance that your adjustments would be different.

That settles the claim that MQA lets you hear what the artist/engineer intended. As things stand, it adds a small change that some may like and some won't, and that may work better on some tracks than on others, but it is a change to what the mastering studio created.

The only way MQA could end up sounding the way its creators want, and maybe better than PCM (part of the technology does have some potential), would be to work with the codec from the start of creation and production.

This is the only way it can improve something, and it is certainly the main goal of Stuart and his crew (inserting it in the whole chain).


- Joined

- Nov 6, 2018

- Messages

- 1,448

- Likes

- 4,813

As best I can tell, the agitation came from companies that competed with Meridian and decided they would adopt something from a competitor only over their dead body.

For those companies, what is/was the business case for adopting a new closed source proprietary lossy codec and licensing it from a third party?

For us, customers, why do we need a new lossy codec?

There is no plan because there is no DRM provision in MQA. Why don't we speculate that they are bank robbers too?

People in this thread are identifying the ability to turn the blue light on as proprietary and hence DRM. It is correct, though, that nothing is stopping the consumer from playing the music, blue light or not.

This raises the question, though: how does MQA plan on making money? Install a few million blue lights in consumer homes and then charge the labels and streaming services to light them up? I don’t think there are a few million standalone DACs in the world that can fit a blue light.

Or the converse? Convince the labels to encode using MQA so that consumers feel that they need to have blue lights or they are not getting the whole file?

The problem seems to be that they are trying to do both at once, which is a classic chicken-and-egg problem. They want labels and streaming services to pay to encode for the value-add of a blue light at the consumer end, while at the same time trying to convince the consumer that they need a blue light to complete their listening experience.

I see your point about the blue light now, Amir. This all comes down to a blue light that no one particularly wants to pay for.

JSmith

Master Contributor

They are lying to me!

Misleading paying consumers is no laughing matter and shouldn't be treated trivially... effectively it's bait and switch here to an extent. If you were lied to about a different kind of product I would expect you'd be returning same for a refund as not fit for purpose etc.

JSmith

Raindog123

Major Contributor

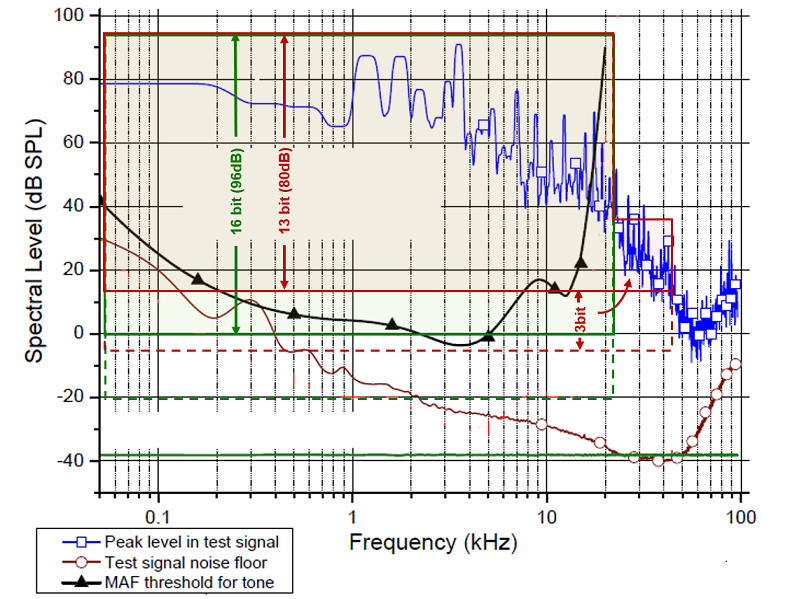

A quick “alternative” look at the CD (16/44ksps [22kHz audio]) vs MQA 1st unfold (to 88ksps/44kHz). It maps codec’s “information capacity” onto the “amount of data needed to represent the music”. And is based on Meridian's own data kindly pointed at by @amirm:

The blue line is the measured music spectrum “envelope” (throughout the entire song, the energy at each frequency is somewhere between its noise floor and this max). The brown line is measured noise floor (constant through the song, but would vary between recording setups). The black line is the minimum level a human ear can hear this frequency (MAF [minimum audible field]. See how it peaks at 20kHz!)

On top of Meridian’s measurements, I’ve superimposed (1) the [solid] green area depicting the amount/type of information stored in a Redbook (“CD”) encoded file - its 16 bits offer 96dB of dynamic range and 44ksps translate to 22kHz of audio bandwidth; (2) the solid red area is MQA’s 44kHz audio band – now reduced in dynamic range - as a few bits are “borrowed” to carry the 1st unfold (22-44kHz band) information. [Here, let’s just assume that such a computational transformation does exist and can be implemented.] With the help of the dither technique, the [quantization] noise floor can be further lowered – for both CD and MQA – that is represented by bottom dashed areas.

Now, a couple of observations. If the three curves measured by Meridian (the MQA folks) – the music spectrum envelope, music noise floor, and our hearing ability – do represent the real world, then (1) CD’s 16/44 (the green area) is fully adequate and sufficient to deliver everything – both dynamic and frequency ranges – that a human can hear. Well, duh. (2) While there is [inaudible, “ultrasonic”] energy above 22kHz in the spectrum of real music – as many sound sources and their harmonics do not have our ears’ limitations – (a) it is inaudible to humans, (b) a 16-bit MQA hardly has the dynamic range to accurately represent it (3 bits provide only about 18dB, so at least 24-bit MQA is needed), and (c) if after all this we still want this inaudible to us (!) 22+kHz band to be played back by our audio systems, a “normal hi-res” (24/96+) format provides both unrestricted dynamic and frequency ranges to deliver all the music information in there.

And a couple of questions I still have: (1) How accurate is the above Meridian data? (Eg, are there recordings requiring substantially higher dynamic ranges (both above and below 22kHz)? Is the MAF hearing-threshold curve valid?) (2) Can the MQA actually [dynamically, recording-based] “split” the base band (<22kHz) 24 bits [of each sample, in time domain] into these “non-MQA backward compatible” baseband portion and the “fold(s)” info? (Can not only the dynamic ranges but also absolute levels be represented? And is there a proof it actually works?) (3) Finally, again why do we care about inaudible ultrasonic band?
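The bit-depth and bandwidth figures in this post can be checked with a few lines of arithmetic. The sketch below uses the ideal-quantizer rule of roughly 6.02 dB per bit and the Nyquist limit of half the sample rate. The function names are made up for illustration, and the "13 bits left over" case is only an assumption about what borrowing 3 bits from a 16-bit container would mean, not a description of how MQA actually allocates bits.

```python
import math


def dynamic_range_db(bits: int) -> float:
    """Ideal dynamic range of an N-bit linear PCM quantizer: 20*log10(2**bits)."""
    return 20 * math.log10(2 ** bits)


def nyquist_hz(sample_rate_hz: float) -> float:
    """Highest frequency representable at a given sample rate."""
    return sample_rate_hz / 2


# Formats mentioned in the post.
for label, bits, rate in [("CD 16/44.1", 16, 44_100), ("hi-res 24/96", 24, 96_000)]:
    print(f"{label}: {dynamic_range_db(bits):.1f} dB, "
          f"bandwidth up to {nyquist_hz(rate) / 1000:.2f} kHz")

# Assumption for illustration: 3 of 16 bits are "borrowed" for the fold data.
print(f"3 borrowed bits alone: {dynamic_range_db(3):.1f} dB")
print(f"13 bits left for the baseband: {dynamic_range_db(13):.1f} dB")
```

Dither and noise shaping, which the post also mentions, can push the audible noise floor below these ideal figures, so the numbers are a baseline rather than a hard limit.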


bidn

Active Member

How about : Malevolent Qualityless Audiofraud ?

Huh? This is the first quote from them:

'"MQA has no DRM component or application outside the studio," it says. "We have no opinion on this beyond noting that DRM is a futile exercise for general music distribution." '

This tells me they absolutely understand that their format better not have DRM. And that they know the labels don't care about being told about that or they would not be quoted saying so.

Of course MQA folks know all of this because they are always in discussions with labels and music distributors. People raising objections otherwise seem to do so without the benefit of that knowledge.

Definition of DRM according to Wikipedia:

Digital rights management (DRM) tools or technological protection measures (TPM)[1] are a set of access control technologies for restricting the use of proprietary hardware and copyrighted works.[2] DRM technologies try to control the use, modification, and distribution of copyrighted works (such as software and multimedia content), as well as systems within devices that enforce these policies.

MQA strives to do all of these things! Surely the marketing is very clever here. Just claiming it’s not DRM technology doesn’t make it true, though. Obviously it’s a much lighter version of what we were given before mass-market streaming became popular. But that doesn’t really matter. The purpose is still the same. That is what the labels see. They are given a way back from the mistakes they made years ago. And they also see the clever marketing happening. It’s a typical “move along, nothing to see here” strategy.

valerianf

Addicted to Fun and Learning

It would be interesting to compare the same measurements between Tidal/MQA and Amazon music HD.

As Amazon music HD output 24 bit/192 kHz I am guessing that a clear winner will appear.


guenthi_r

Active Member

It would be interesting to compare the same measurements between Tidal/MQA and Amazon music HD.

As Amazon music HD output 24 bit/192 kHz I am guessing that a clear winner will appear.

Amazon Music HD delivers bit-perfect Redbook and hi-res PCM; I checked this with some random albums.

Raindog123

Major Contributor

The fervor seems religious to me. Worthy of a better cause. At the end of the day, this is a storm in a glass of water. The no-MQA logos in signatures are downright bizarre, in my opinion.

You are forgetting, we are largely talking about “a hobby”. With the exception of a few professional snake-oil spin-doctors here (and the jury is still out on the MQA flock), the rest of us have day jobs. And, boy, have you ever seen the enthusiasm of a Star Wars cosplayer, or a Manchester United fan!? As for “bizarre logos”, you have not seen my hockey jersey!


Don Hills

Addicted to Fun and Learning

Moby is MQA.

The problem is that the 'hifi' version is simply MQA but with the MQA flagging removed, meaning any tool that checks for MQA flagging will say it is not MQA. But actually it is.

...

The same happened with my tracks, the "HiFi" version had no MQA flagging at all and nothing would recognise it as MQA. But it was 100% bitperfect to the MQA release and was not the same as my master.

...

I'm confused... I don't see how this could be. You download the "Hifi" and "Master" versions, one turns on the MQA indicator when you play it, the other doesn't. The bitstream that turns on the MQA indicator is embedded in the audio, so how can the files be bit identical?
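One way to test a "bit-identical" claim like this is to compare the decoded PCM payloads directly, ignoring container metadata. The sketch below assumes both versions have been decoded to WAV; the helper names are hypothetical and the two in-memory files are synthetic stand-ins, not real Tidal downloads. It says nothing about how MQA signalling is actually embedded. It only shows that a single changed sample value is enough to make two payloads differ.

```python
import io
import struct
import wave


def make_wav(frames: bytes, rate: int = 44_100) -> bytes:
    """Build a mono 16-bit WAV file in memory (test fixture only)."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(rate)
        w.writeframes(frames)
    return buf.getvalue()


def read_pcm(wav_bytes: bytes) -> bytes:
    """Return only the raw PCM frames, dropping header and metadata."""
    with wave.open(io.BytesIO(wav_bytes), "rb") as w:
        return w.readframes(w.getnframes())


def first_difference(a: bytes, b: bytes):
    """Byte offset of the first differing PCM byte, or None if identical."""
    for i, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return i
    if len(a) != len(b):
        return min(len(a), len(b))
    return None


# Synthetic stand-ins: the second file differs by one least-significant bit.
plain = make_wav(struct.pack("<4h", 0, 1000, -1000, 32767))
altered = make_wav(struct.pack("<4h", 0, 1001, -1000, 32767))

print(first_difference(read_pcm(plain), read_pcm(plain)))
print(first_difference(read_pcm(plain), read_pcm(altered)))
```

If two downloads really return `None` here, any difference in how a decoder treats them would have to come from outside the PCM frames, such as container tags.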

bidn

Active Member

Definition of DRM according to Wikipedia:

MQA strives to do all of these things! Surely the marketing is very clever here. Just claiming it’s not DRM technology doesn’t make it true, though. Obviously it’s a much lighter version of what we were given before mass-market streaming became popular. But that doesn’t really matter. The purpose is still the same. That is what the labels see. They are given a way back from the mistakes they made years ago. And they also see the clever marketing happening. It’s a typical “move along, nothing to see here” strategy.

Very well said!

Numerous, if not all, MQA claims re. the side of audio customers are lies (and often even contradictory!...):

- it is high quality, lossless;

- preserving and giving access to the original masters;

- detecting and erasing noise in the original audio;

- preserving, controlling and showing data integrity that the customer gets the original music intended by the artists (blue light);

- having no DRM encryption

- and so on...

Very impressive! Don't they deserve gold medals for several categories?:

- highest number of false claims

- biggest audio scam

- most invasive scam re. the number of audio industry areas affected

- most obscure audio codec

- weirdest audio codec

...

I vote for Malevolent Qualityless Audiofraud !


When you find a fact as stark as the one this graph shows, and you are interested in learning something instead of just bashing MQA, the interesting thing to discuss is why you think that gap appears exactly at the Nyquist frequency of Redbook (other than the presumption of most people here that this MQA process is done by absolutely incompetent engineers, of course)...

My guess: MQA claims that it is trying to correct the aliasing problems found in sources when dealing with digital data (instead of analog sources) by applying some filter techniques. Perhaps, if they can't fix it because of the nature of the source presented, the algorithm assumes that it is better just to erase a small 1/3 octave supra-aural band instead of leaving those aliasing artifacts in. Is it that bad? From a religious "losslessness" credo it is; if you are instead interested in the aural results, perhaps it is not.

I could not let this one slide.

Notice anything strange except for the hole in the middle? No? Then let's do some "folding" and show you (I've done this one before, but one more won't hurt anyone):

See what's going on here? The HF is a perfect mirror image of the LF, just at a lower level and with a slope.

You know what they call that in circles outside of MQA: aliasing... of the worst kind! So please stop telling us that MQA somehow solves aliasing, because all evidence points towards the exact opposite.
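The mirror-image effect described above is what generic upsampling produces when the reconstruction filter is weak or absent. A minimal sketch, assuming nothing about MQA's actual processing: a single tone is upsampled 2x by zero-stuffing with no filter at all, and a naive DFT shows the tone plus its mirror image reflected about the old Nyquist frequency. The signal length, tone bin and threshold are arbitrary illustration values.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Naive O(n^2) DFT; fine for a few dozen samples."""
    n = len(signal)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * i / n)
                for i, x in enumerate(signal)))
        for k in range(n)
    ]


def zero_stuff(signal, factor=2):
    """Upsample by inserting zeros between samples, with NO filtering."""
    out = []
    for x in signal:
        out.append(x)
        out.extend([0.0] * (factor - 1))
    return out


N = 32          # samples at the original rate
TONE_BIN = 5    # tone sits at 5/32 of the original sample rate

original = [math.cos(2 * math.pi * TONE_BIN * i / N) for i in range(N)]
upsampled = zero_stuff(original)            # 64 samples at twice the rate
mags = dft_magnitudes(upsampled)

half = len(upsampled) // 2                  # new Nyquist bin (32)
old_nyquist = N // 2                        # old Nyquist bin (16)
peaks = [k for k in range(half + 1) if mags[k] > 1.0]

print("peaks below the new Nyquist:", peaks)   # -> [5, 27]
print("distance from old Nyquist:", [abs(k - old_nyquist) for k in peaks])
```

Bins 5 and 27 sit the same distance either side of bin 16, which is the mirror symmetry the post points at. A proper interpolation filter would attenuate the upper peak; a leaky one leaves it visible at a reduced, sloping level.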

@amirm: why do you condemn this kind of behaviour when reviewing DACs, but not when MQA does it? If you were given an upscaler to review that showed the exact same properties, I bet it would get a scolding review.

awdeeoh

Member


I'm confused... I don't see how this could be. You download the "Hifi" and "Master" versions, one turns on the MQA indicator when you play it, the other doesn't. The bitstream that turns on the MQA indicator is embedded in the audio, so how can the files be bit identical?

He is confused too, because the MQA tag is now hidden so well that the MediaInfo app can't even detect it.

Other tools can reveal the hidden tag, just as with a file ripped from an MQA-CD.

Tidal updated the MQA files.
