https://en.wikipedia.org/wiki/Binaural_recording

Binaural recording is intended for replay using headphones and will not translate properly over stereo speakers.

Conventional stereo recordings do not factor in natural ear spacing or the "head shadow" of the head and ears, since these effects occur naturally as a person listens, generating their own ITDs (interaural time differences) and ILDs (interaural level differences). Because loudspeaker crosstalk with conventional stereo interferes with binaural reproduction (the sound from each channel's speaker is heard by both ears rather than only by the ear on the corresponding side, as would be the case with headphones), either headphones are required, or crosstalk cancellation of signals intended for loudspeakers, such as Ambiophonics, is required. For listening over conventional stereo speakers or with MP3 players, a pinna-less dummy head, such as the sphere microphone or Ambiophone, may be preferable for quasi-binaural recording. As a general rule, for true binaural results, an audio recording and reproduction chain, from microphone to the listener's brain, should contain one and only one set of pinnae (preferably the listener's own) and one head shadow.

Not all cues required for exact localization of sound sources can be preserved with such a pinna-less head, but its recordings also work well for loudspeaker reproduction.
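The crosstalk-cancellation idea can be illustrated at a single frequency. A minimal sketch, assuming a symmetric speaker and listener layout in which each ear receives its near speaker through a transfer value `h_direct` and the far speaker through `h_cross` (both hypothetical numbers here): pre-filtering the binaural pair with the inverse of that 2x2 matrix delivers each channel to one ear only. This is a generic matrix-inversion illustration, not the specific algorithm used by Ambiophonics.

```python
import cmath

def crosstalk_canceller(h_direct, h_cross):
    """Inverse of the symmetric speaker-to-ear matrix [[h_direct, h_cross],
    [h_cross, h_direct]] at a single frequency."""
    det = h_direct * h_direct - h_cross * h_cross
    if abs(det) < 1e-12:
        # Near-singular: exact inversion would need huge gains at this frequency.
        raise ValueError("speaker-to-ear matrix is near-singular at this frequency")
    return [[h_direct / det, -h_cross / det],
            [-h_cross / det, h_direct / det]]

def speaker_feeds(bin_left, bin_right, canceller):
    """Pre-filter the binaural pair so each channel reaches only its own ear."""
    return (canceller[0][0] * bin_left + canceller[0][1] * bin_right,
            canceller[1][0] * bin_left + canceller[1][1] * bin_right)

def ear_signals(spk_left, spk_right, h_direct, h_cross):
    """Acoustic path: each ear hears its near speaker and, via crosstalk, the far one."""
    return (h_direct * spk_left + h_cross * spk_right,
            h_cross * spk_left + h_direct * spk_right)

# Illustrative (assumed) values: the far speaker arrives at half amplitude, phase-shifted.
H_DIRECT = 1.0 + 0j
H_CROSS = 0.5 * cmath.exp(-1j * 0.8)

canceller = crosstalk_canceller(H_DIRECT, H_CROSS)
left_spk, right_spk = speaker_feeds(1.0, 0.0, canceller)  # content meant for the left ear only
print(ear_signals(left_spk, right_spk, H_DIRECT, H_CROSS))  # approximately (1, 0)
```

Real systems would apply this per frequency bin and regularize wherever the determinant becomes small, which is why the sketch refuses a near-singular matrix instead of inverting it.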

Using a physical space to manipulate a sound and then re-recording it has been done with echo chambers in recording studios for many years. In 1959, Irving Townsend famously used an echo chamber during post-production of Miles Davis's album Kind of Blue. "[the effect of the echo chamber on Kind of Blue is] just a bit of sweetening. At 30th Street, a line was run from the mixing console down into a low-ceilinged, concrete basement room - about 12 by 15 feet in size - where we set up a speaker and a good omnidirectional microphone."[3]

Using an MRI scanner, Brüel & Kjær and DTU collected the geometries of a large population of human ears. The full ear canal geometry was captured, including the bony part adjoining the eardrum, and this data was post-processed to determine the average human ear canal geometry. Based on this, the High-frequency Head and Torso Simulator (HATS) Type 5128 provides a very realistic reproduction of the acoustic properties of the human ear across the full audible frequency range (up to 20 kHz).[6]

There are some complications with playing back binaural recordings through headphones. The sound picked up by a microphone placed in, or at the entrance of, the ear canal has a frequency spectrum very different from the one a free-standing microphone would pick up. The diffuse-field head-related transfer function (HRTF), that is, the frequency response at the eardrum averaged over sounds arriving from all possible directions, is highly irregular, with peaks and dips exceeding 10 dB. Frequencies from around 2 kHz to 5 kHz in particular are strongly amplified compared with free-field presentation.[8]
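Because the diffuse-field response is an average over directions, it can be sketched as a per-frequency average of measured HRTF magnitudes. A minimal sketch, assuming magnitudes already given in dB on a uniform grid of directions (so every direction gets equal weight); the input values are made up for illustration. The average is taken over power rather than over dB values.

```python
import math

def diffuse_field_average_db(responses_db):
    """Average per-direction magnitude responses (dB) in the power domain.

    responses_db: one list of dB values per measured direction, all with the
    same number of frequency bins. Every direction is weighted equally, which
    assumes a uniform measurement grid.
    """
    n_dirs = len(responses_db)
    n_bins = len(responses_db[0])
    average = []
    for k in range(n_bins):
        # Convert dB to power, average across directions, convert back to dB.
        mean_power = sum(10 ** (resp[k] / 10) for resp in responses_db) / n_dirs
        average.append(10 * math.log10(mean_power))
    return average

# Two made-up directions, two bins: a 12 dB peak appears in one direction only.
# The second bin averages to about 9.3 dB, not the 6 dB a plain mean of dB values gives.
print(diffuse_field_average_db([[0.0, 12.0], [0.0, 0.0]]))
```

Averaging power lets a strong peak in one direction dominate more than a plain dB mean would; a non-uniform measurement grid would need per-direction weights.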

In a September 2012 interview, Chris Pike of BBC R&D stated that "you may get good spatial impression but timbral coloration is often an issue".[10] Timbral coloration is mentioned in much spatial-enhancement research and is sometimes attributed to misused or insufficient HRTF data when reproducing binaural audio, or to the fact that a given listener simply does not respond well to the HRTF data that was collected. Francis Rumsey states in the 2011 article "Whose head is it anyway?"[11] that "badly implemented HRTFs can give rise to poor timbral quality, poor externalisation, and a host of other unwanted results".[11] Getting the HRTF data right is key to the success of the final product, and making the HRTF data set as extensive as possible may leave less room for errors such as timbral issues. The HRTFs used for Private Peaceful[9] were derived from impulse responses measured in a reverberant room in order to capture a sense of space, but the result is not strongly externalised and, as Pike pointed out, has obvious timbral issues.[10]
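Rendering binaural audio from HRTF data usually amounts to convolving a mono source with the left- and right-ear impulse responses measured for one direction; when the responses are measured in a reverberant room (binaural room impulse responses), the room is rendered as well. A minimal plain-Python sketch, where the short impulse responses are hypothetical stand-ins for measured data:

```python
def convolve(signal, impulse_response):
    """Direct-form linear convolution; output length is len(signal) + len(ir) - 1."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, x in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += x * h
    return out

def render_binaural(mono, ir_left, ir_right):
    """Place a mono source at the direction (and, for room responses, in the room)
    where the left/right impulse-response pair was measured."""
    return convolve(mono, ir_left), convolve(mono, ir_right)

# Toy impulse-response pair (hypothetical): the right ear hears the source two
# samples later and at half level, as for a source on the listener's left.
left, right = render_binaural([1.0, 0.5], [1.0, 0.0, 0.0], [0.0, 0.0, 0.5])
print(left)   # [1.0, 0.5, 0.0, 0.0]
print(right)  # [0.0, 0.0, 0.5, 0.25]
```

Direct convolution is chosen here for readability; measured responses are often thousands of samples long, where FFT-based convolution is the usual choice.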

Juha Merimaa of Sennheiser Research Laboratories in California discusses using HRTF filters and EQ to reduce timbral issues in his 2010 paper 'Modification of HRTF Filters to Reduce Timbral Effects in Binaural Synthesis, Part 2: Individual HRTFs'.[12] His research found that modifying HRTF filters to reduce timbral issues did not affect the spatial localisation previously achieved with the data when tested on a panel of listeners. This shows that there are ways of reducing timbral effects in audio processed with HRTF data, but they require further EQ manipulation of the audio. If this route is explored further, researchers will have to accept that the audio is being heavily manipulated to achieve a greater sense of spatial awareness, and that this further manipulation causes irreversible changes to the audio, which content creators may not be happy with. Researchers will need to consider how much manipulation is appropriate and to what extent, if any, it affects the end user's experience. It is also important to consider the room in which the BRIR (binaural room impulse response) and HRTF data were collected, as different rooms will influence the end results.
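One reason equalization can reduce coloration without disturbing localization is that an identical filter applied to both ears leaves the interaural level differences unchanged. A minimal sketch, assuming magnitude responses in dB per frequency bin for one direction; the correction curve is a hypothetical example (for instance, the negated diffuse-field average), and this is not a reconstruction of Merimaa's published method.

```python
def apply_common_eq(left_db, right_db, eq_db):
    """Add the same per-bin gain (dB) to both ears' magnitude responses."""
    return ([lv + g for lv, g in zip(left_db, eq_db)],
            [rv + g for rv, g in zip(right_db, eq_db)])

def ild_db(left_db, right_db):
    """Interaural level difference per bin, left minus right, in dB."""
    return [lv - rv for lv, rv in zip(left_db, right_db)]

# Made-up responses for one direction and a hypothetical correction curve.
left, right = [3.0, 9.0, -2.0], [1.0, 2.0, -6.0]
eq = [-2.0, -6.0, 1.0]
new_left, new_right = apply_common_eq(left, right, eq)
print(ild_db(left, right))          # [2.0, 7.0, 4.0]
print(ild_db(new_left, new_right))  # [2.0, 7.0, 4.0]
```

If the same curve is also applied to every direction, the spectral differences between directions survive as well; only the overall timbre shifts.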

When recording HRTF data sets, only a limited number of measurements can be taken for distribution, and end users will have to find the set that works best for them. The best HRTF data for any individual would be measured from their own pinnae, but this is not something content creators for mobile applications currently do. Because of this, timbral issues may be unavoidable when using non-personal HRTF data or distributing audio that has already been spatially processed. The most feasible route to improving spatial awareness in audio may be to explore head tracking, or methods of collecting HRTF data at the user's end.
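Head tracking keeps a virtual source fixed in the room as the listener turns: the renderer subtracts the head's yaw from the source azimuth and then uses the HRTF measured nearest to that relative direction. A minimal sketch, assuming yaw-only tracking, azimuths in degrees with one shared convention, a hypothetical 30-degree measurement grid, and nearest-neighbour selection rather than interpolation:

```python
def relative_azimuth(source_az_deg, head_yaw_deg):
    """Azimuth of a room-fixed source relative to where the head points,
    wrapped to (-180, 180]. Both angles use the same convention (assumed)."""
    rel = (source_az_deg - head_yaw_deg) % 360.0
    return rel - 360.0 if rel > 180.0 else rel

def circular_distance(a_deg, b_deg):
    """Smallest angle between two azimuths, in degrees."""
    d = abs(a_deg - b_deg) % 360.0
    return min(d, 360.0 - d)

def nearest_measured(az_deg, measured_az_deg):
    """Choose the measured HRTF azimuth closest to az_deg (no interpolation)."""
    return min(measured_az_deg, key=lambda m: circular_distance(az_deg, m))

GRID = list(range(0, 360, 30))  # hypothetical 30-degree measurement grid

# Source fixed at 30 degrees; the listener turns their head to 40 degrees.
rel = relative_azimuth(30.0, 40.0)
print(rel, nearest_measured(rel, GRID))  # -10.0 0
```

With a sparse grid like this, the chosen HRTF jumps as the head turns; interpolating between neighbouring measurements is the usual refinement.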

Many MP3 players and tablets are supplied with low-budget earphones, which can cause problems for spatially enhanced audio.

Ideal listening conditions will most likely be achieved with headphones designed and calibrated to give as flat a frequency response as possible, in order to reduce colouration of the audio the user is listening to.
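Calibrating headphones toward a flat response can be sketched as a per-frequency-bin correction: measure the headphone response at the ear, then apply the opposite gain. A minimal sketch, assuming magnitude measurements in dB relative to a flat 0 dB target; the boost and cut limits are hypothetical values, chosen because fully inverting a deep notch would demand large, fragile gain.

```python
def inverse_eq_db(measured_db, target_db=0.0, max_boost_db=6.0, max_cut_db=12.0):
    """Per-bin gain that moves a measured headphone response toward a flat target.

    Boost is capped so narrow notches are not filled with excessive gain;
    the limits are illustrative assumptions, not recommended values.
    """
    gains = []
    for m in measured_db:
        gain = target_db - m
        gains.append(max(-max_cut_db, min(max_boost_db, gain)))
    return gains

measured = [0.0, 4.0, -3.0, -15.0]  # made-up response, dB per frequency bin
eq = inverse_eq_db(measured)
corrected = [m + g for m, g in zip(measured, eq)]
print(eq)         # [0.0, -4.0, 3.0, 6.0]
print(corrected)  # [0.0, 0.0, 0.0, -9.0]
```

The last bin shows the trade-off: the capped boost leaves a residual dip rather than risking heavy amplification of a notch that may shift when the headphones are refitted.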

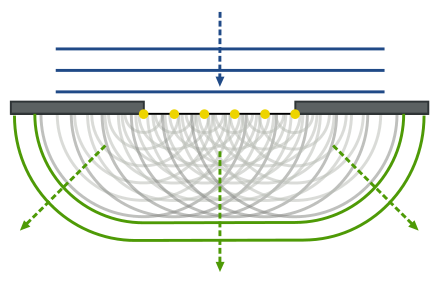

The microphones (Primo EM 172 capsules) are placed exactly at the eardrum position and spaced 235 mm apart, the average earlobe-to-earlobe distance. The sigmoid shape of the Kaan's ear canal compensates to a greater extent for the missing head.
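To put the 235 mm spacing in perspective, the sketch below compares the interaural time difference (ITD) of two free-standing microphones at that spacing with Woodworth's rigid-sphere approximation for a head of the same width. Both are textbook simplifications, not measurements of the Kaan; the speed of sound is assumed to be 343 m/s.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 degrees C (assumed)

def itd_spaced_pair(spacing_m, azimuth_deg):
    """ITD (s) for two free-standing microphones with no head between them."""
    return spacing_m * math.sin(math.radians(azimuth_deg)) / SPEED_OF_SOUND

def itd_woodworth(spacing_m, azimuth_deg):
    """Woodworth's rigid-sphere approximation (r/c)(theta + sin theta), with
    r = spacing / 2; intended for azimuths from 0 to 90 degrees."""
    r = spacing_m / 2
    theta = math.radians(azimuth_deg)
    return (r / SPEED_OF_SOUND) * (theta + math.sin(theta))

for az in (0, 45, 90):
    print(az,
          round(itd_spaced_pair(0.235, az) * 1e3, 3),  # ms, no head
          round(itd_woodworth(0.235, az) * 1e3, 3))    # ms, spherical head
```

At 90 degrees the spaced pair gives roughly 0.69 ms and the sphere roughly 0.88 ms, one way of seeing how the absence of a head changes the timing cues a recording captures.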

Lastly, the kinds of material that suit binaural recording do not typically have a high market value. Studio recordings would gain little from a binaural setup beyond natural cross-feed, as the spatial character of a studio is not very dynamic or interesting.

Recordings of interest include live orchestral performances and ambient "environmental" recordings of city sounds, nature, and similar subjects.