Evidence-based Speaker Designs

- Thread starter Ilkless

- Start date

- Joined

- Jun 19, 2018

- Messages

- 6,652

- Likes

- 9,403

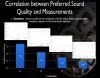

Ok. I’m having difficulty finding the raw data of DBT. I found two graphs from a Sean Olive blog from 2009:

http://seanolive.blogspot.com/2009/04/dishonesty-of-sighted-audio-product.html?m=1

Couple of quick thoughts:

1) Test subjects are not randomized. You have 40 Harman employees. Having owned Harman speakers, I know their attributes and would be able to pick them out in a DBT. Obviously Harman employees would have brand loyalty and they may already be aware of Toole design principles and how those speakers would behave. Also 40 test subjects would be considered small in a pharma test.

2) Four speakers is not nearly enough to accurately represent all the different kinds of design principles that are available on the market. I know this test is intended to show the merits of blind testing.

3) The error bars are huge. At least 3 of the 4 speakers could be the winner based on the bars.

If someone could point me to more raw data, I would greatly appreciate it.

Yeh, these are fair criticisms too I reckon. The study you're looking at there is just one of many, though.

To the best of my knowledge, this research began at the NRC in the 1980s and had nothing to do with Harman back then. The first studies I read are here and here.

Those studies involved 42 listeners who were musicians, recording industry professionals and "audiophiles", and 11 loudspeakers.

(On a side note, Toole addresses the mono/stereo issue in Part 2, saying, "highly rated loudspeakers received closely similar ratings in both stereo and mono tests, but loudspeaker with lower ratings tended to receive elevated ratings in stereo assessments." Make of that what you will. There's a much more in-depth discussion in Dr Toole's book FWIW.)

Also in the book is the following assertion (p. 54): "Olive has conducted numerous sound quality evaluations with listeners from Europe, Canada, Japan and China, and has yet to find any evidence of cultural bias."

Again, I would prefer to see the actual data, but that is Toole's conclusion in any case...
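On the error-bar point (3) in the quoted post, here is a minimal Python sketch of what "the bars overlap" means numerically. The ratings are made up for illustration and are not from Olive's or Toole's studies; the function names `mean_ci` and `intervals_overlap` are hypothetical, and the interval uses a normal approximation (z = 1.96) rather than a t-based one, which would be slightly wider for small panels.

```python
import math
import statistics


def mean_ci(ratings, z=1.96):
    """Return (mean, half_width) of an approximate 95% confidence interval.

    Assumption: normal approximation with z = 1.96.
    """
    m = statistics.mean(ratings)
    sem = statistics.stdev(ratings) / math.sqrt(len(ratings))
    return m, z * sem


def intervals_overlap(a, b):
    """True if two (mean, half_width) intervals overlap."""
    (mean_a, half_a), (mean_b, half_b) = a, b
    return abs(mean_a - mean_b) <= half_a + half_b


# Hypothetical 0-10 preference ratings, NOT data from any study.
speaker_a = [6, 7, 5, 8, 6, 7, 4, 9, 6, 7]
speaker_b = [5, 6, 6, 7, 4, 8, 5, 6, 7, 5]

ci_a = mean_ci(speaker_a)
ci_b = mean_ci(speaker_b)
print("A:", ci_a)
print("B:", ci_b)
print("overlap:", intervals_overlap(ci_a, ci_b))
```

Overlapping intervals alone don't settle whether a difference is significant, particularly when the same listeners rate every speaker, so a paired analysis on the raw data would be the more appropriate check.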

svart-hvitt

Major Contributor

- Joined

- Aug 31, 2017

- Messages

- 2,375

- Likes

- 1,253

Obviously not, but now you have articulated it more clearly.

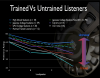

In the data from Toole and Olive trained listeners are superior at picking faults with speakers, however untrained listeners still come to broadly the same conclusions with the same preferences.

Just being a bit pedantic here. In my field, I often experience that younger researchers get credit for work and insight of previous generations. And that irritates me as I think history of science often can be as fascinating as science in itself.

On ASR I have read remarks that Toole's (and Olive's) research document a "training effect", as if the two invented the effect of training (in audio).

To give some perspective, in 1976, Blauert and Laws submitted a paper to be published in Journal of the Acoustical Society of America (http://www.mariobon.com/Articoli_storici_AES/1976_Group_delay_distortions.PDF). Here, Blauert and Laws write:

"Training sessions were performed during a period of 15 days at 9 a.m. each day and each session lasted 30 minutes. The subject received feedback after every judgment and had to repeat the judgment in case of failure. Each session began with a measurement. The results are plotted in Fig. 8.

After only one day of training we found a reduction in threshold values from 0.86 to 0.54 ms, and in the following session the asymptotic value of 0.4 ms has already been reached. The threshold values stabilized after eighth day.

We learned from these tests that even relatively short training is sufficient to make subjects familiar with the attributes of sound sensations which are correlated to group delay distortions. Subsequent tests were therefore always preceded by appropriate training sessions".

So it seems like insights into the effect of training in audio had been already documented by peers of Toole by the time Toole started to write his well-known papers on trained listeners. Please correct me if Toole really was the first to document the effect of training in audio. Besides, I guess what Blauert and Laws documented on the effect of training had already been documented in other disciplines than audio.

This seems like a small detail, but in a thread on evidence in audio we'd better - as already discussed above - have a wider perspective than one book and one man, even if this book and this man is probably the first - but not last - you should visit to learn audio.
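To put the Blauert and Laws figures quoted above in relative terms, here is a small arithmetic sketch in Python. Only the three threshold values (0.86, 0.54 and 0.4 ms) come from the quoted passage; the labels and the helper name `relative_reduction` are my own.

```python
def relative_reduction(baseline, value):
    """Percentage by which `value` lies below `baseline`."""
    return (baseline - value) / baseline * 100


# Threshold values (ms) as quoted from Blauert and Laws; labels are assumed.
thresholds_ms = {
    "before training": 0.86,
    "after one day": 0.54,
    "asymptote": 0.40,
}

baseline = thresholds_ms["before training"]
for label, value in thresholds_ms.items():
    pct = relative_reduction(baseline, value)
    print(f"{label}: {value:.2f} ms ({pct:.0f}% below baseline)")
```

So the first day of training accounts for roughly a 37% drop in the detection threshold, and the asymptote sits a little over 50% below the untrained value.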

DDF

Addicted to Fun and Learning

- Joined

- Dec 31, 2018

- Messages

- 617

- Likes

- 1,360

Obviously not, but now you have articulated it more clearly.

In the data from Toole and Olive trained listeners are superior at picking faults with speakers, however untrained listeners still come to broadly the same conclusions with the same preferences.

Yes, I’ve been familiar with the data since it was published in the AES papers. While they saw trends in relative scoring similar for untrained listeners, there were obvious and not infrequent outliers and the data isn’t really statistically significant. It in no way is at cross purposes with my observation.

DDF

Addicted to Fun and Learning

- Joined

- Dec 31, 2018

- Messages

- 617

- Likes

- 1,360

Is it the same idea as: users of Walkman got used to a certain tonal balance and they like speakers that match what they are used to?

I think that’s a drastic and hyperbolic example, but one’s exposure definitely affects one’s perception of what is accurate.

- Joined

- Jun 19, 2018

- Messages

- 6,652

- Likes

- 9,403

Yes, I’ve been familiar with the data since it was published in the AES papers. While they saw trends in relative scoring similar for untrained listeners, there were obvious and not infrequent outliers and the data isn’t really statistically significant. It in no way is at cross purposes with my observation.

Just to clarify which data we're talking about, would you mind citing the specific papers you're talking about here pls?

DDF

Addicted to Fun and Learning

- Joined

- Dec 31, 2018

- Messages

- 617

- Likes

- 1,360

Just to clarify which data we're talking about, would you mind citing the specific papers you're talking about here pls?

I’m traveling for work so don’t have access right now, but search the AES conf proceedings or the journal.

- Joined

- Jun 19, 2018

- Messages

- 6,652

- Likes

- 9,403

Higher distortion, doesn't match the sensitivity of the horn, doesn't necessarily have the same beamwidth either, and with a smaller horn the crossover causes phase anomalies and superposition in a sensitive area which is very audible IMO.

Higher distortion than what?

What's the problem in your view with a sensitivity mismatch?

The beamwidth can be matched quite easily (and is in every competent design).

Could you explain your last point please? What horn profiles (or do you mean all horn profiles)? "Smaller" relative to what? Causes phase anomalies how?

@Bjorn I would be very interested to hear your perspective on these questions when you get around to it

- Joined

- Jun 19, 2018

- Messages

- 6,652

- Likes

- 9,403

I’m traveling for work so don’t have access right now, but search the AES conf proceedings or the journal.

I'm not having much luck here I'm afraid. I'm an AES member but it's not entirely clear what I'm searching for. Would appreciate you posting the names of the papers when you're back from travelling

...or if you could just share the search terms you used to find them in the first place...

- Joined

- Jun 10, 2018

- Messages

- 6,189

- Likes

- 9,276

Please: His name is Toole.

You are correct, but people call me a "tool" and I don't know anything about designing speakers.

Erik

Active Member

- Joined

- Jul 1, 2018

- Messages

- 137

- Likes

- 271

The audio market knows that people have different desires, and even cultural differences, in what kind of (measured) reproduced sound they like.

For example, the tests were performed in the US. How do we know the tests would turn out the same in Europe or Africa? How do we know those populations don’t have a certain genetic makeup that would predispose them to favor a different set of speaker design principles?

Also in the book is the following assertion (p. 54): "Olive has conducted numerous sound quality evaluations with listeners from Europe, Canada, Japan and China, and has yet to find any evidence of cultural bias."

Again, I would prefer to see the actual data, but that is Toole's conclusion in any case...

The Influence of Listeners’ Experience, Age, and Culture on Headphone Sound Quality Preferences

www.aes.org/e-lib/browse.cfm?elib=17500

www.youtube.com/watch?v=sWkHIxmIqjo

Factors that Influence Listeners’ Preferred Bass and Treble Balance in Headphones

www.aes.org/e-lib/browse.cfm?elib=17940

www.youtube.com/watch?v=ySQV5OR71e4

If someone could point me to more raw data, I would greatly appreciate it.

- Joined

- Nov 29, 2017

- Messages

- 543

- Likes

- 1,618

This is addressing a side-topic that's sort of been dropped, but I think it's worth considering that "this standard of good sound does not predict purchasing decisions well" isn't actually a very robust criticism. Edit: Well, maybe unless you're talking market strategy as a manufacturer

On the face of it, it seems pretty coherent - after all, audio equipment is for audio, so if your chosen standards for quality are right, shouldn't they align with what people actually seem to prefer? In fact, however, I would argue that it'd be quite surprising if people did consistently group around the speakers (or any audio equipment really) that sounded best (to the exclusion of all other traits, at least).

We have to consider that when people buy audio equipment, "precisely how well does this align with my preferences for sound" isn't the sole criterion they apply. Aesthetics, status, perceived reliability/risk of failure, size, convenience of purchase, and a score of other factors will influence what people want to buy, even assuming that they knew exactly how every option sounded (which, obviously, they don't).

As a headphone person, the most obvious example of a non-sound factor that meaningfully influences my willingness to buy a given item is ergonomics - this stuff goes on my head, and stays there quite a while, so it needs to be comfortable. For speaker people, I'd suggest that if the latest, greatest, constant directivity, 0.001% THD, DSP-controlled wünderspeaker was available for only two installments of $99.99 and personally signed by Floyd Toole, but was 10 feet tall, 15 feet wide, and day glow orange, there would not be many takers. While this is a ludicrous extreme, I would suggest that those of us who care about sound enough to spend a lot of time reading books and having internet discussions about it might need to apply some extremes to the other aspects of audio equipment to get some perspective on how the value balance stacks up to people who aren't as passionate.

But, of course, both as manufacturers and as consumers, there's a good reason to see what people prefer on the sound side of things when all else is excluded - and that's precisely what we get from research like Toole and Olive's. It won't perfectly predict consumer behavior, because sound isn't the only thing people buy for (and consumers don't have perfect information, sadly), but it's a good guide both for designers and for people, like us, who are really interested in the sound (perhaps to the exclusion of all else).

Just being a bit pedantic here. In my field, I often experience that younger researchers get credit for work and insight of previous generations. And that irritates me as I think history of science often can be as fascinating as science in itself.

On ASR I have read remarks that Toole's (and Olive's) research document a "training effect", as if the two invented the effect of training (in audio).

To give some perspective, in 1976, Blauert and Laws submitted a paper to be published in Journal of the Acoustical Society of America (http://www.mariobon.com/Articoli_storici_AES/1976_Group_delay_distortions.PDF). Here, Blauert and Laws write:

"Training sessions were performed during a period of 15 days at 9 a.m. each day and each session lasted 30 minutes. The subject received feedback after every judgment and had to repeat the judgment in case of failure. Each session began with a measurement. The results are plotted in Fig. 8.

After only one day of training we found a reduction in threshold values from 0.86 to 0.54 ms, and in the following session the asymptotic value of 0.4 ms has already been reached. The threshold values stabilized after eighth day.

We learned from these tests that even relatively short training is sufficient to make subjects familiar with the attributes of sound sensations which are correlated to group delay distortions. Subsequent tests were therefore always preceded by appropriate training sessions".

So it seems like insights into the effect of training in audio had been already documented by peers of Toole by the time Toole started to write his well-known papers on trained listeners. Please correct me if Toole really was the first to document the effect of training in audio. Besides, I guess what Blauert and Laws documented on the effect of training had already been documented in other disciplines than audio.

This seems like a small detail, but in a thread on evidence in audio we'd better - as already discussed above - have a wider perspective than one book and one man, even if this book and this man is probably the first - but not last - you should visit to learn audio.

I don't think I have seen any comments here that say or even imply they invented the concept of training, just that they used it. Nonetheless the additional info is interesting.

But, of course, both as manufacturers and as consumers, there's a good reason to see what people prefer on the sound side of things when all else is excluded - and that's precisely what we get from research like Toole and Olive's. It won't perfectly predict consumer behavior, because sound isn't the only thing people buy for (and consumers don't have perfect information, sadly), but it's a good guide both for designers and for people, like us, who are really interested in the sound (perhaps to the exclusion of all else).

I think this sentiment is largely correct...if one is designing a speaker as a commercial project, then all else equal (roughly), favoring designs with relatively flat frequency response and consistent off axis is a good idea.

I watched the video of Toole's lecture above. He has some excellent arguments for what makes a "good" speaker, including the evidence from the listening tests. But there are some assumptions and matters of opinion he injects into the discussion along the way. (Look at medical research for contrast: researchers are careful not to extrapolate the implications of the research beyond what can be reasonably concluded. So if a researcher is testing a cancer drug in vitro, they report the results of that experiment. They can't jump to the efficacy of the drug in humans...)

I would be very interested to know what the correlation is between the objective aspects of sound, size, cost, visual design, and sales are!

Some considerations:

- Nobody listens to speakers blinded. You can only draw limited conclusions about a product if you don't test in relatively real-world conditions. Blinded tests cannot be used to fully test phenomena that involve visual elements. Visual input has an effect on sound, and this is not just placebo. (Prove this to yourself by sitting in front of your speakers listening closely, then close your eyes.) I'm pretty confident that visual elements of subjective speaker quality could be ascertained to some level of repeatability. As Toole points out, the effect is so strong that it can't be overcome by the intention of the listener.

- Blinded tests have limited meaning when testing subjective phenomena. For example, if you are testing medication, there will be an objective measurement that will prove efficacy or not. There is no objective way to prove that a person had a better subjective experience (maybe you could do an MRI). You can only rely on the subject's reported experience. In the case of the blinded speaker tests, the repeatability of the results is a proxy for an objective result. This cannot invalidate a given person's subjective experience in the way a blinded speaker wire test could. The differences in speaker sounds are real, and easily determined.

- The classic "audiophool" objection to AB/X testing is that differences can't be picked up in short term tests. This is indeed a ridiculous objection, when subjective reports of sonic improvement are often superlative, implying a substantiality that should be discernable quickly.

When considering speakers, I don't think you can as confidently extrapolate from short term listening tests. (Harman may have done long term listening tests, and the results might correlate exactly, I don't know.) I mention this because when evaluating speakers I can usually make a quick determination as to what I like that's close, but I have found that my impression changes over time.

- There's a big element left out of simply asking what makes a speaker "good" which is good for what? Different kinds of music, from different eras, are flattered by different types of speakers. So if someone listens to a wide range of styles, then a relatively neutral speaker might be the best. In my own case, for fun I listen to primarily rock music from the 60s, 70s, 80s, 90s.

As Toole mentions, studio monitoring deviated from some objective "norm" of quality for most of this time. The most common monitors would be large soffit mounted monitors or wooden boxes with 2 or 3 way systems. The engineers and producers of the time also mixed to the speakers that would be found in the "real world," which were largely wooden boxes! It is my opinion that the rock music of these eras sounds the best on speakers that have these characteristics.

- There is a subtle reason for this, but essentially it goes back to the idea of "transparency." Toole is "old school" and the concept of "hi fi" is rooted in the ability of a playback system to represent the original musical event faithfully. So he talks about an ideal studio monitor being one that would be "transparent" to...what? If you record a violin or voice it's pretty "clear." We know what these sound like, so we can evaluate whether it is being faithfully reproduced. But most modern music exists as a complete acoustic event only at the output of the studio monitors. So if you wanted to be "accurate" in your playback representation, then your best hope is having similar speakers.

Because this is not a realistic possibility, having relatively neutrally voiced speakers is a good strategy for having a speaker that on average does a good job across a lot of different recordings. But many people have a definite taste, and I think different styles of speakers lend themselves to different styles of music. As a simple example, if you have a speaker that can reproduce acoustic instruments with exquisite accuracy, that speaker will likely sound abrasively bright on recordings that have an excessive amount of high-frequency information in them. Which is a lot of recordings.

- I know rock music the best, and one of the huge issues with this is that rock music is produced by loud instruments, often highly distorted. Reproducing this accurately is not possible unless playback volume approaches a similar level (which is loud as fuck.) Recording engineers and producers work to create mixes that translate well across the playback systems that exist in the real world. But this is an illusion.

Even with rock, because of the need for mixes to translate, it is preferable to have a relatively neutral speaker as a monitor. But that is not because it is "accurate." It's because it gives you a chance to make a reasonably balanced recording that will translate well. I have a sense of how rock music should sound, and it is shockingly hard to get this effect in a well controlled, modern, flat speaker. On playback, a speaker cabinet with resonance helps embody the sound and can create the illusion of "rocking" more convincingly. I think part of this is that when a speaker is turned up enough to resonate the cabinet, that translates as "loud" on a psychoacoustic level.

- Toole also makes a comment that if you have a "good speaker" and the playback "sounds bad" you can be confident that it's a "bad recording." Well, bad recordings are the rule of the day. Some speakers can have a distinct voice that can sort of homogenize recordings, and mask deficiencies. This could be a very desirable quality. It's also the kind of thing that would require more extensive, long term testing, with specific inclusions of bad recordings to figure out what objective characteristics contribute to this.

I believe in science, and am in favor of improving the overall sound experience of the world in general. But when it comes to my personal listening, I have taste, and I hate listening to "accurate" speakers. The Neumann KH120 were so horrific to my ear that, even though I think I could have made them work as studio monitors, there is no reason to subject myself to this sound if I don't have to. I sold those suckers.

At our studio we also have a set of Genelec 8030s with a sub in our main recording room. I'm not sure if these measure well, but this system is strikingly accurate, in that the sound in the recording space is represented in the control room to an uncanny level. These are very easy speakers to work on; they are relatively uncolored, so I can stop worrying as much about whether what I'm hearing is what I'm getting. They are also pleasant sounding.

But I would never choose such speakers to listen to for fun. They have no discernable character. The sound is boring. The cabinets are so completely damped that you can barely hear them at all. This leads to a kind of "disembodied" sound which is unnerving. My pet theory is that we are just not evolved to hear disembodied sound. Without the sense of a resonant body, there is no "medium" there. We don't hear sounds, we hear things. If the speaker "disappears" what we are left with is often incoherent.

As far as the future of recorded and reproduced "music" is going, the horses are out of the barn. The notion of "hi fi" reproduction is ever less relevant. Producers of pop, rap, EDM mix to target specific playback systems which have no relationship to accuracy.

Music itself has crossed a singularity, in which it is decreasingly the product of muscles making movements, in real time, translating energy into physical mediums. Until we can jack the bitstream directly into the cerebral cortex, the need to translate these bits into acoustic energy will be relevant. Which makes for an interesting world

- Joined

- Jun 19, 2018

- Messages

- 6,652

- Likes

- 9,403

But, of course, both as manufacturers and as consumers, there's a good reason to see what people prefer on the sound side of things when all else is excluded - and that's precisely what we get from research like Toole and Olive's. It won't perfectly predict consumer behavior, because sound isn't the only thing people buy for (and consumers don't have perfect information, sadly), but it's a good guide both for designers and for people, like us, who are really interested in the sound (perhaps to the exclusion of all else).

Well put. I would add that probably the vast majority of audio purchases are made without any experience of how the thing sounds at all.

- Joined

- Nov 29, 2017

- Messages

- 543

- Likes

- 1,618

- Nobody listens to speakers blinded. You can only draw limited conclusions about a product if you don't test in relatively real-world conditions. Blinded tests cannot be used to fully test phenomena that involve visual elements. Visual input has an effect on sound, and this is not just placebo. (Prove this to yourself by sitting in front of your speakers listening closely, then close your eyes.) I'm pretty confident that visual elements of subjective speaker quality could be ascertained to some level of repeatability. As Toole points out, the effect is so strong that it can't be overcome by the intention of the listener.

I think one can actually go a bit beyond this statement. Again, I come from the world of headphones for the most part, and a foundational text of contemporary headphones is Günther Theile's seminal paper on diffuse field. One of the particularly interesting sections is on the so-called "SLD effect", wherein differently (visually) perceived acoustic sources are perceived to sound different, even as the measured response says otherwise. While I don't know how thoroughly this has been studied in the world of speakers, it would seem conceivable to me that this could meaningfully impact the perceived sound of a speaker.

In fact, does anyone happen to know if the work of Rudmose that Theile cites was followed up on/have any similar recommendations? I'm not as up on the speaker lit as I could be.

Beyond that and with regard to fidelity and intention, it's a question I grapple with a fair share of the time myself. My read of Olive, McMullin, and Welti's work in headphones is that 1, people generally agree on what they like (and perceive it as fairly neutral), particularly under blinded conditions, but 2, this seems to vary at least somewhat both between people, and, key, between test music programs (and listening levels). Here both Olive's (and I presume Toole's) hope of closing the circle of confusion, and the question of finding a metric for "good", become a tad cloudier to me.

With speakers, things seem even more complex, since you have the added variable of directivity fully in play. For a given power response, what's the "correct" ratio of direct to indirect sound? The one that matches the recording studio? The one that people like the most? And does that vary between people significantly¹?

IMO we have some good ideas about what makes a bad speaker (or headphone), and an evidence-based process of design will veer away from these, but I think there remains some interesting (albeit radically in minority) ground in the space that all of the bad options have fenced in to figure out what is best and how widely applicable it is

¹I'm not being entirely hypothetical here, does anyone have any data on that I can look at?

Some considerations:

- Nobody listens to speakers blinded. You can only draw limited conclusions about a product if you don't test in relatively real-world conditions. Blinded tests cannot be used to fully test phenomena that involve visual elements. Visual input has an effect on sound, and this is not just placebo. (Prove this to yourself by sitting in front of your speakers listening closely, then close your eyes.) I'm pretty confident that visual elements of subjective speaker quality could be ascertained to some level of repeatability. As Toole points out, the effect is so strong that it can't be overcome by the intention of the listener.

I'm not sure what you are saying here. Please correct me if I have misunderstood. You seem to be acknowledging the influence of sighted bias whilst implying you should assess sighted, as it's a part of the whole experience.

If my interpretation of what you are saying is correct, I couldn't disagree more.

The whole point of non-sighted assessment is to remove those biases to gain a consistent outcome. Your sighted bias is different to mine and different to the next person's. It's not a consistent factor.

You have to assess blind so that you only assess the aural information. Otherwise you have no idea where you are. Was it a good speaker or were you simply impressed by its looks or price or brand name? Perhaps I was impressed more?

- Blinded tests have limited meaning when testing subjective phenomena. For example, if you are testing medication, there will be an objective measurement that will prove efficacy or not. There is no objective way to prove that a person had a better subjective experience (maybe you could do an MRI). You can only rely on the subject's reported experience. In the case of the blinded speaker tests, the repeatability of the results is a proxy for an objective result. This cannot invalidate a given person's subjective experience in the way a blinded speaker wire test could. The differences in speaker sounds are real, and easily determined.

- There is a subtle reason for this, but essentially it goes back to the idea of "transparency." Toole is "old school" and the concept of "hi fi" is rooted in the ability of a playback system to represent the original musical event faithfully. So he talks about an ideal studio monitor being one that would be "transparent" to...what? If you record a violin or voice it's pretty "clear." We know what these sound like, so we can evaluate whether it is being faithfully reproduced. But most modern music exists as a complete acoustic event only at the output of the studio monitors. So if you wanted to be "accurate" in your playback representation, then your best hope is having similar speakers.

Thing is that the Toole et al. data concludes that people subjectively like a neutral speaker. It doesn't vary with musical style. I therefore think we do have an objective definition of the "better" subjective experience. I'm sure some individuals will scream "I don't fit that definition"... oh well, never mind.

However yes, I have made the point earlier that a typical music production is an artistic endeavor, musically and aurally. The artist/producer creates the sound they want. It has little to do with accuracy and audiophiles need to get over this. They have no idea how much the recording is messed with before it ends up in their home.

So yes, the studio monitor does need to be transparent, i.e. neutral: flat on-axis anechoic measurement with smooth off-axis response. Why would we expect it to conform to anything else? Massive low frequency boost with no off-axis high frequencies perhaps? Does that make any sense? Not to me. This should, as with video/film production, be embodied in a standard. Without such there will never be any consistency and we will remain in that circle of confusion.

Just to note that with the video production comparison, the "look" is still graded to whatever the director's artistic intent is (added noise, colour hues, etc.), but at least they have a consistent calibrated place to work from. This calibration can be extended all the way to the home reproduction screen.

With speakers, things seem even more complex, since you have the added variable of directivity fully in play. For a given power response, what's the "correct" ratio of direct to indirect sound? The one that matches the recording studio? The one that people like the most? And does that vary between people significantly¹?

This is the variable that's a little contentious to my mind. Well, not really contentious, but more difficult to have a specific definition of correct.

IIRC the Toole data suggests that people generally prefer a more spacious and enveloping sound, but this will vary, as you rightly say, depending on the speaker's directivity and of course the room absorption/reflectivity characteristics. I will check, but I think he personally has stated no particular preference for a certain characteristic, just that the off axis should be smooth.

From Floyd previously in this thread:

If you read my book and/or papers it will be found that most people listening for recreation have a preference for wide dispersion, as a way to attenuate the basic limitations of conventional stereo.
