Does Quality of Coax Input Matter for DACs?

- Thread starter amirm

somebodyelse

Major Contributor

- Joined

- Dec 5, 2018

- Messages

- 3,743

- Likes

- 3,028

> May sound like a smoke screen until you witness one when trying to interconnect three or four different types/mixes of interfaces, including analog audio.
>
> If you tell me again that ground loops don't exist (and/or "nothing of the sorts"), I shall ignore your comments. Cheers!

As @garbz pointed out, wired digital interconnects are often transformer isolated to avoid ground loop issues. IIRC it's mandatory for AES/EBU in the transmitter, and for some versions of the spec in the receiver too, although someone better versed in these may correct me. You can see the transformer in the internal pics of the DAC-SQ3 or even the £16 Fun Generation UA-202. It's not quite universal - see the HifiBerry Digi+ Standard which has an unpopulated footprint for the transformer, or various PC motherboards - but it's certainly not unusual.

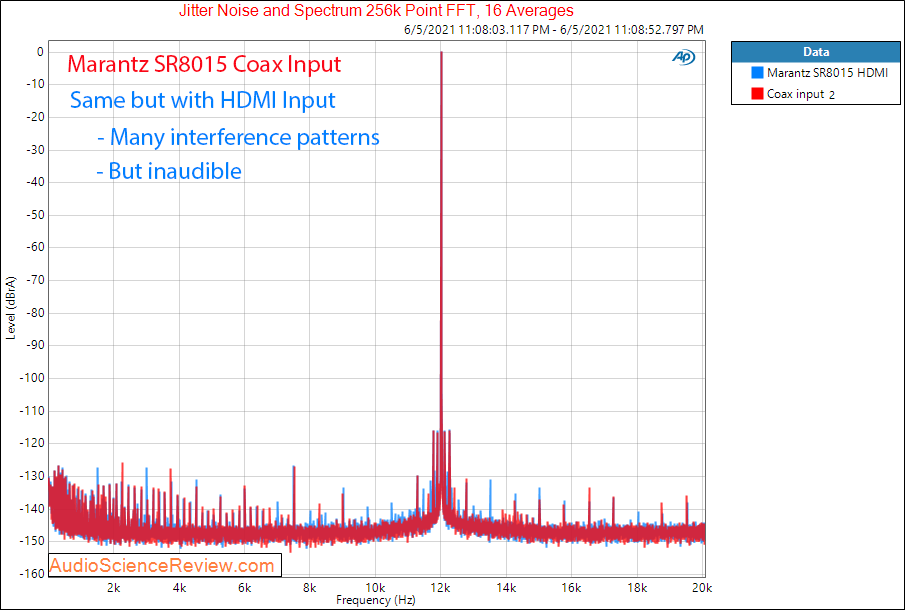

> I have measured coax input for some. Hard to generalize though. Here is an example:

I currently stream music from an HTPC to a Denon AVR4600 (SINAD of ~99ish in pre-out mode) via HDMI. I've wondered about the potential improvement a D10 or similar would provide as a USB-Coax converter compared to HDMI. From what I've read so far, am I right to assume there is likely no discernible improvement to be had? Any other benefit to getting away from HDMI?

Much appreciated for all the great content on ASR!

> Is BNC 75 ohms compatible with SPDIF 75 ohms?

There is no standard for this. You'll have to check the manual of your equipment. Tascam units seem to use 50ohm BNC but have a selectable termination resistance of none, 50ohm and 75ohm. Mutec seems to use 75ohm BNC predominantly with some devices providing both 75ohm and 50ohm outputs.

You can tell at a glance by looking at the connector. Mutec's REF 10 should make this obvious:

> All BNC connectors are mechanically compatible. In this instance, the slight impedance mismatch isn't going to be an issue. I'd use whatever I might have lying around and not give it a second thought.

But not electrically, which is the entire point of termination. Now here's a question for you. Jitter is basically a non-issue in audio, so the terminations involved here become trivial. But is it still a trivial matter when you spend $4000 on a master clock source to improve something already imperceptible only to then hamper the effort by choosing the wrong one? I think when we're talking about the use of master clocks we've moved far beyond "going to be an issue", and are well and truly in the realm of "is it ideal".

> ...the slight impedance mismatch...

...on the other hand; it would not cost you oodles if you get rid of the "mis" part, as a good practice!

Or offload the hi-grade hardware which relies on providing the best wordclock sync you are trying to achieve, while cheezing out on coax/RF signal integrity.

As always, YMMV.

Uh, you can easily get 75 ohm coax, and BNC's that fit. (or buy it made up) $10ish bucks for "more perfecter". At that price, why not? OH, yes, that would be a regular RF cable, not Audiophile pricing.

But I agree, in this use case, the mismatch shouldn't make much difference on a cable of a few feet. If I had a 3' 50ohm BNC-BNC lying on my bench, I probably wouldn't bother to order a 75ohm one.

To be clear, I have both 50 Ω and 75 Ω cables with proper connectors of various lengths, and when practical I'll use the correct type. If buying a cable for a specific purpose, I'd obviously get the correct one too. Still, I won't hesitate to use mismatched cables if that's more practical and the use case isn't too sensitive. Audio related things never are.

> Uh, you can easily get 75 ohm coax, and BNC's that fit.

You're missing something. To use a transmission line you need a constant impedance across the line. Having a 75ohm BNC plug mated to a 75ohm BNC socket doesn't help you if your cable is 50ohm, or your source is 50ohm.

If you're going for perfect or ideal you *need* to check compatibility with not just the cable, but between the two pieces of equipment you are connecting as well. Again this is why some equipment has multiple different outputs with different connectors and different terminations.
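For a sense of scale on the 50 ohm versus 75 ohm question, here is a minimal sketch using the standard transmission-line reflection formula. The function names are made up for this example, and it assumes ideal, purely resistive impedances with a single 50 ohm to 75 ohm discontinuity, which real cables and connectors only approximate.

```python
import math

def reflection_coefficient(z_load: float, z_line: float) -> float:
    """Voltage reflection coefficient where a line of impedance z_line meets z_load."""
    return (z_load - z_line) / (z_load + z_line)

def return_loss_db(gamma: float) -> float:
    """Return loss in dB; a larger number means less reflected power."""
    return -20.0 * math.log10(abs(gamma))

# 50 ohm cable feeding a 75 ohm termination (idealised, resistive only).
gamma = reflection_coefficient(z_load=75.0, z_line=50.0)
print(f"reflection coefficient: {gamma:+.2f}")
print(f"reflected power: {gamma ** 2:.1%}")
print(f"return loss: {return_loss_db(gamma):.1f} dB")
```

Under these assumptions about 20% of the incident voltage (4% of the power) is reflected at the discontinuity. Swapping the two values only flips the sign of the reflection, which is why the mismatch has to be checked at both the source and the load.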

audio2design

Major Contributor

- Joined

- Nov 29, 2020

- Messages

- 1,769

- Likes

- 1,830

> To be clear, I have both 50 Ω and 75 Ω cables with proper connectors of various lengths, and when practical I'll use the correct type. If buying a cable for a specific purpose, I'd obviously get the correct one too. Still, I won't hesitate to use mismatched cables if that's more practical and the use case isn't too sensitive. Audio related things never are.

While the edge speeds in SPDIF are slow, which some may conflate with relaxed requirements for impedance matching, the voltage levels and hysteresis are also low, which does present the potential for jitter, which is not theoretical, but has been illustrated. If you have a new competent DAC, it probably does not matter, and given the limits of human hearing, in most cases it would not matter anyway, but there is still the potential for additional jitter with poor termination and the end result with some DACs will be < 16 bit performance.
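To illustrate why slow edges and low voltage levels go together with timing sensitivity, here is a rough sketch that converts a small voltage disturbance at the receiver threshold into a timing shift. Everything numeric is an assumption for illustration: the 0.5 Vpp swing is the nominal consumer level, while the 10 mV disturbance and the rise times are hypothetical, and the edge is treated as a straight line between its 10% and 90% points.

```python
def timing_shift_s(disturbance_v: float, swing_vpp: float, rise_time_s: float) -> float:
    """Approximate edge-timing shift caused by a small voltage disturbance.

    Treats the 10-90 % portion of the edge as a straight line, so the
    slew rate is 0.8 * swing / rise_time.
    """
    slew_v_per_s = 0.8 * swing_vpp / rise_time_s
    return disturbance_v / slew_v_per_s

# Assumed values, for illustration only.
SWING_VPP = 0.5        # nominal consumer S/PDIF level into 75 ohms
DISTURBANCE_V = 0.010  # hypothetical 10 mV of reflection or noise at the threshold

for rise_ns in (5, 20, 50):
    shift = timing_shift_s(DISTURBANCE_V, SWING_VPP, rise_ns * 1e-9)
    print(f"rise time {rise_ns:>2} ns -> timing shift {shift * 1e12:.0f} ps")
```

The same disturbance moves the crossing point further in time as the edge gets slower, which is the mechanism being described. Whether that shift survives the receiver PLL and matters audibly is a separate question.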

You keep repeating that claim, yet you persistently refuse to give a single example of actual equipment that suffers measurably from this so-called problem.

> It is nothing of the sort. Most semi-competently designed DACs will have transformer isolated coax inputs which far outperform crappy Toslink, or they simply galvanically isolate digital and analogue signals between the receiver and the DAC chips. And by outperform I mean in terms of a reasonable looking waveform at the receiver and also the likelihood of longer cable runs or cheap junk cables actually simply working.
>
> The DAC-SQ3 which was used for this test is no exception.

No, this is a common trope that is repeated on audio forums but is incorrect.

Galvanic isolation of coax SPDIF is not perfect. First, there is the interwinding capacitance of the transformer to account for. The Newava S22083 has 15 pF from pri to sec. This is around 100 Ohms at 100 MHz.

Second, trying to galvanically isolate an interconnect that uses a shielded cable forces a compromise. Imagine you have a Class I appliance with metal enclosure. That enclosure is connected to PE as required for safety. When you add a BNC or RCA connector for your coax, you need to decide if it's an insulated BNC or one that connects to chassis. If it's insulated, you do still have isolation, but now you have an RF "hole" in your enclosure and you're bringing the coax shield into the chassis through it. If it's not an insulated connector, that solves this problem but you have just defeated the purpose of the isolation transformer.

TOSLINK provides better isolation and there is no audible issue with the increased jitter. The only disadvantage is probably max cable length as you mention. I guess some transceivers don't work at 192 kHz either.
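The "around 100 Ohms at 100 MHz" figure for 15 pF of interwinding capacitance can be checked with the usual capacitive reactance formula. This is a minimal sketch: the 15 pF value comes from the post above, while the other frequencies are picked only to show how quickly the isolation improves at lower frequencies, and the transformer is modelled as an ideal capacitor.

```python
import math

def capacitive_reactance_ohms(freq_hz: float, cap_farads: float) -> float:
    """Magnitude of the impedance of an ideal capacitor: 1 / (2 * pi * f * C)."""
    return 1.0 / (2.0 * math.pi * freq_hz * cap_farads)

INTERWINDING_C = 15e-12  # 15 pF primary to secondary, as quoted in the post

# 100 MHz is the frequency from the post; the others are illustrative.
for freq_hz in (50.0, 20e3, 1e6, 100e6):
    x_c = capacitive_reactance_ohms(freq_hz, INTERWINDING_C)
    print(f"{freq_hz:>12,.0f} Hz -> {x_c:,.0f} ohms")
```

At 100 MHz this comes out at roughly 106 ohms, consistent with the post. At mains and audio frequencies the same capacitance is many megohms, so the coupling path only becomes significant for RF noise.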

audio2design

> You keep repeating that claim, yet you persistently refuse to give a single example of actual equipment that suffers measurably from this so-called problem.

Because this is self evident, and has been known and accepted for as long as the interface has existed when developing SPDIF interfaces, though sometimes knowledge gets forgotten. The equipment would be every SPDIF interface (IC / discrete) ever developed, whether early Yamaha, Cirrus Logic, TI, Philips (NXP), or roll your own, etc. It is inherent cable/transceiver realities coupled with transmission line effects. SPDIF has limited edge speed (and not consistent in every implementation), cables can be poorly terminated, and the receiver will transition at a somewhat fixed voltage level. If that reflection hits near the transition point, it has the potential to create jitter. Remember, real world systems here, what we think of as "fixed" are much closer to stochastic when we are talking picoseconds.

In the audiophile world, this was even shown in 1993. I am sure there are naysayers, but the simple fact is, in a repeatable experiment, changing the direction of the cable changed the jitter (and that appears to be after the PLL).

https://www.stereophile.com/content/transport-delight-cd-transport-jitter-page-4

This was a great paper done in 1992 that looked at most issues with SPDIF, especially an AC-coupled one. I don't think people quite realize just how many inherent jitter sources there are at the H/W interface level. AC coupling of course removes one source of jitter and creates a new data dependent one.

https://audioworkshop.org/downloads/AES_EBU_SPDIF_DIGITAL_INTERFACEaes93.pdf

@DonH56 wrote a basic look at what termination does in 2019 it appears.

https://www.audiosciencereview.com/...igital-audio-cable-reflections-and-dacs.7159/

I have to admit, I was very surprised by what Stereophile measured in 1993. Not that there was a difference, but that it was large. @John_Siau indirectly gives us the reason why. Depending on the impedance mismatch, the reflected polarity will be different. If the reflection is destructive at the receiver, the effect is to slow the edge rate (slower than it already is). When you combine a slow edge into a real hardware receiver with other system level effects, jitter is going to go up, potentially way up, as illustrated in the Stereophile article where nanoseconds were measured on the word clock that would go directly to a DAC. That is more than enough to reduce the performance to < 16 bits. An effective difference in edge speed caused by termination and reflection coincident with the signal transitioning will make it more sensitive to periodic noise sources within the receiving device.

Where the big issue lies is that those periodic noise sources can be at frequencies less than the PLL cutoff frequency. This could be the cause of the Stereophile result. It is certainly the results of work I have done on the bench over the years.
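As a rough check on the "nanoseconds is enough for < 16 bits" statement, here is a sketch using the textbook sampling-jitter bound for a full-scale sine wave, SNR = -20·log10(2π·f·t_j), together with the usual 6.02·N + 1.76 dB rule for effective bits. It assumes random (rms) jitter and a worst-case full-scale 20 kHz tone; it does not model the specific Stereophile measurement, and the jitter values are illustrative.

```python
import math

def jitter_limited_snr_db(signal_hz: float, jitter_rms_s: float) -> float:
    """Best-case SNR of a full-scale sine sampled with the given rms clock jitter."""
    return -20.0 * math.log10(2.0 * math.pi * signal_hz * jitter_rms_s)

def effective_bits(snr_db: float) -> float:
    """Effective number of bits from SNR, using SNR = 6.02 * N + 1.76 dB."""
    return (snr_db - 1.76) / 6.02

TONE_HZ = 20_000.0  # worst case within the audio band

# Illustrative jitter values, not taken from any measurement.
for jitter_s in (100e-12, 1e-9, 5e-9):
    snr = jitter_limited_snr_db(TONE_HZ, jitter_s)
    print(f"{jitter_s * 1e12:>6.0f} ps rms -> {snr:5.1f} dB, about {effective_bits(snr):.1f} bits")
```

With these assumptions, about 100 ps rms is the point where a full-scale 20 kHz tone sits at roughly 16-bit performance, and 1 ns lands near 78 dB. Lower signal frequencies and lower levels are less affected, so this is an upper bound on the damage rather than a typical figure.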

audio2design

> No, this is a common trope that is repeated on audio forums but is incorrect.
>
> Galvanic isolation of coax SPDIF is not perfect. First, there is the interwinding capacitance of the transformer to account for. The Newava S22083 has 15 pF from pri to sec. This is around 100 Ohms at 100 MHz.
>
> Second, trying to galvanically isolate an interconnect that uses a shielded cable forces a compromise. Imagine you have a Class I appliance with metal enclosure. That enclosure is connected to PE as required for safety. When you add a BNC or RCA connector for your coax, you need to decide if it's an insulated BNC or one that connects to chassis. If it's insulated, you do still have isolation, but now you have an RF "hole" in your enclosure and you're bringing the coax shield into the chassis through it. If it's not an insulated connector, that solves this problem but you have just defeated the purpose of the isolation transformer.
>
> TOSLINK provides better isolation and there is no audible issue with the increased jitter. The only disadvantage is probably max cable length as you mention. I guess some transceivers don't work at 192 kHz either.

Admittedly I am having trouble "unwrapping" what you wrote.

Yes, 15 pF will be 100 ohms at 100 MHz, but how many 100 MHz sources of noise do you have that will induce even small amounts of common mode voltage? That common mode noise voltage will also need to present as a differential voltage at the receiver input where there is invariably a capacitor to shunt just such noise to ground. I can also use supplementary common mode filtering. Further, that 100 MHz noise would have to form a beat frequency with the underlying clock transitions to generate noise below the PLL cut-off. Not saying it can't happen, but it is what would have to happen.

A far worse issue with galvanic isolation is data induced jitter.

w.r.t. "RF holes", while that may be the case, it only needs to be the case at one end. You are also bringing in a very small piece of metal which only is an effective antenna at very high frequencies. On the transmitting end, there is no reason of course not to ground the BNC. On the receiving end, you can capacitively couple the shield to case with a small capacitance to eliminate RF, and get the side benefit of shunting the aforementioned common mode noise to ground.

tvrgeek

Major Contributor

> This has nothing to do with the scenario here and what is tested. Jitter is created by the quality of transmission of S/PDIF over Coax in DAC-SQ3. The filtering is occurring in Topping DX3 Pro+ receiver/ESS DAC it has. It is not fed USB audio to care one way or the other in this testing.

I am not sure on the technology as stated here. DACs buffer the data stream so the jitter is a function of the DAC clock, not the layer one transport. Think about it. It has to as R & L come in a serial stream! Now if some DAC implementation was so cheap it tried to lock a clock to the incoming stream, well shame on them. It will be all over the place and noise might affect it.

So, yea the quality of the coax, optical and USB matter, but they are a function of the DAC execution, not the host. I wonder if that is why the JDS I just plugged in does sound smoother than the Schiit. Better clock. If we believe anything OEMs say, both these companies have talked about interface implementation being more significant than the DAC chip used. From what the recent measurements you have made on some DACs, they must have very very good clocks. One was even advertised to have an oven.

Is there another way to look at this? I have not torn into the schematics of newer DACs.

audio2design

USB is buffered. SPDIF/TOSLINK not necessarily. PLL on the receiver, Async sample rate conversion, per clock pulse DAC methods immune to jitter, etc. all work to reduce jitter. Buffering is definitely a method, but introduces a delay, and brings in the system issue of how much delay, and what ratio between input word clock and output word clock do you use, so you don't get buffer under-run, but have accurate frequency as well. These days, over-run shouldn't be an issue, but without external ram on a processor at the lowest end could be.

There is 0 advantage to the oven. The only advantage in an OCXO is that the cut of the crystal and mounting are typically better which ultimately reduces phase noise (jitter). The actual heating increases jitter. The heating is for RF and other markets where absolute frequency is important. w.r.t. typical crystal accuracy, this does not even enter the equation for audio.

To make a clock more than good enough for audio, i.e. 0 audible artifacts, costs a few dollars in volume.
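The buffering trade-off mentioned above (how much delay versus how long before under-run) can be put into rough numbers. This is a back-of-envelope sketch only: it assumes a simple FIFO that starts half full, a fixed frequency offset between the incoming word clock and a free-running output clock, and no rate adaptation. The buffer size, sample rate and ppm offset are hypothetical, and the function names are invented for the example.

```python
import math

def drift_samples_per_s(sample_rate_hz: float, clock_offset_ppm: float) -> float:
    """Rate at which the FIFO fill level changes for a given clock mismatch."""
    return sample_rate_hz * clock_offset_ppm * 1e-6

def seconds_until_underrun(buffer_samples: int, sample_rate_hz: float,
                           clock_offset_ppm: float) -> float:
    """Time for a half-full FIFO to empty (or fill) at a constant clock offset."""
    drift = drift_samples_per_s(sample_rate_hz, abs(clock_offset_ppm))
    if drift == 0.0:
        return math.inf  # perfectly matched clocks never drift
    return (buffer_samples / 2) / drift

def latency_ms(buffer_samples: int, sample_rate_hz: float) -> float:
    """Delay added by running the FIFO at half full."""
    return 1000.0 * (buffer_samples / 2) / sample_rate_hz

# Hypothetical numbers: 4096-sample FIFO, 48 kHz audio, 100 ppm clock mismatch.
print(f"added latency: {latency_ms(4096, 48_000.0):.1f} ms")
print(f"time to under-run: {seconds_until_underrun(4096, 48_000.0, 100.0):.0f} s")
```

With these made-up values the buffer adds about 43 ms and still runs dry after roughly seven minutes, which is why a fixed buffer alone is not enough and designs fall back on a PLL, asynchronous sample rate conversion, or some way of steering the output clock.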

It's all fantastic info. But jitter seems not to be an audible problem in any real world implementations. Even DACs scoring low SINAD are not doing so because of jitter.

audio2design

Modern DAC chips have many mechanisms for alleviating the effects of jitter which I mentioned above. I would far more expect issues with boutique designs where either an old DAC chip is used, or the company is rolling their own. Amir has shown susceptibility to external jitter varies DAC to DAC and not all DACs have the same performance on all interfaces which indicates jitter, no matter the source, is still in play as that is a primary variable. If that DAC is fed with a high jitter source, i.e. a CD player, or finds itself in some other interface induced jitter issue (not USB of course), then that will show up in the output.
