Grooved

Addicted to Fun and Learning

- Joined: Feb 26, 2021
- Messages: 679
- Likes: 441

@dominikz

I mistakenly posted this in @Echoes's other ABX thread.

You were the one using the Cosmos ADC, so here is what I wrote:

One thing I forgot to ask you earlier about your previous test: can you run a test with DeltaWave comparing each of your previous recordings with the original file?

If I'm not mistaken (unless I saw it in another thread), you compared the two files with each other, but not each one with the original file.

I ask because, using the Cosmos ADC, you almost certainly had no clock sync between your DAC and the ADC.
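To give a rough idea of why the missing clock sync matters, here is a minimal sketch of how two free-running clocks drift apart over a recording. The 20 ppm mismatch is only an assumed example value, not a measured figure for the Cosmos ADC or for your DAC, and `drift_in_samples` is a hypothetical helper name, not anything from DeltaWave.

```python
def drift_in_samples(sample_rate_hz: float, ppm_offset: float, duration_s: float) -> float:
    """Accumulated offset, in samples, between two unsynchronized clocks.

    ppm_offset is the relative frequency mismatch in parts per million
    (assumed value for illustration; real devices differ).
    """
    return sample_rate_hz * (ppm_offset * 1e-6) * duration_s


if __name__ == "__main__":
    # Hypothetical case: 44.1 kHz capture, 20 ppm mismatch between DAC and ADC.
    for seconds in (1, 10, 60):
        offset = drift_in_samples(44_100, 20.0, seconds)
        print(f"{seconds:>3} s -> {offset:.2f} samples of drift")
```

Under that assumption the recording slides by a bit less than one sample per second against the original, so the misalignment keeps growing over the length of the file. That is the kind of error I would expect the "clock drift" option to matter for when comparing against the original.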

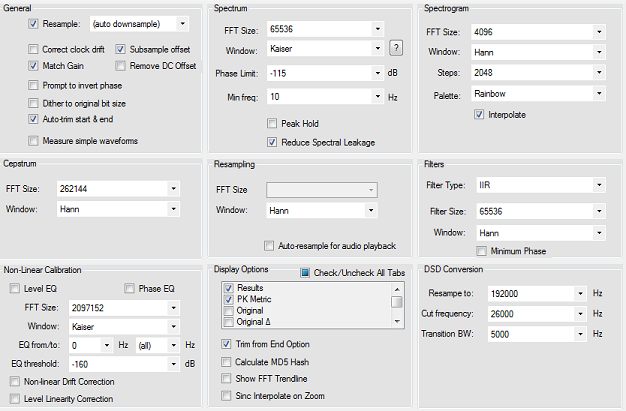

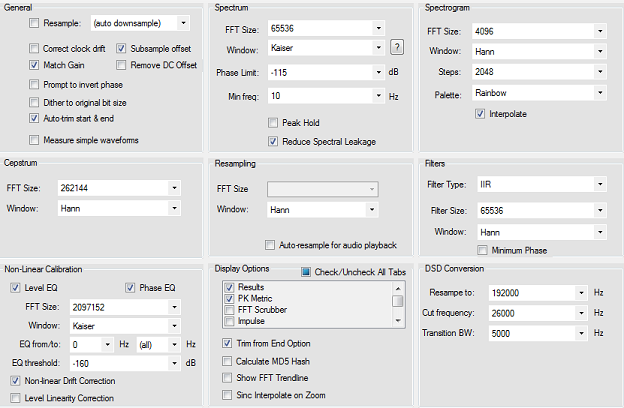

Try using these settings: (and with "clock drift" enabled to check if it changes something in this case)

and
