Current state of mainstream music scene (Billie Eilish taken Grammys by storm..)

- Thread starter pavuol

xr100

Addicted to Fun and Learning

There’s a lot to it, but one big change is the reliance in the most popular genres on digital instruments to create sounds. These instruments are almost entirely programmed, not performed in real time. It turns out that digital instruments are not very “expressive” as performance instruments.

Absolutely untrue. The first professional digital synth to sell in the thousands was the Yamaha DX7. It uses FM synthesis, which enables very large changes in the harmonic structure during the course of a note, as well as changes with velocity (how hard a key is struck) and the modulation wheel (the position of which affects a note that has already started). It also came with a breath controller (again, to control notes that have already been triggered). Many professional keyboards also have "aftertouch," which means the note is modulated (changed) depending on how hard the keys are pressed after the notes have already been triggered.

It's possible with digital synths to have an essentially arbitrarily complicated system of modulation which allows for as much expressiveness as desired.
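To make the velocity point concrete, here is a minimal sketch of a two-operator FM voice, written in the phase-modulation form commonly used for FM. It is not the DX7's actual algorithm; the function name `fm_note`, the 2:1 operator ratio, the decay rate, and the velocity-to-index mapping are all assumptions chosen for illustration. It only shows how a harder key strike can raise the modulation index and so brighten the tone, while the index decaying over time changes the harmonic structure during the note.

```python
import math

def fm_note(freq_hz, velocity, duration_s=0.5, sample_rate=8000,
            ratio=2.0, max_index=5.0):
    """Render one two-operator FM note as a list of floats in [-1, 1].

    velocity is MIDI-style (0..127). Hypothetical mapping: the peak
    modulation index scales linearly with velocity.
    """
    n = int(duration_s * sample_rate)
    peak_index = max_index * velocity / 127.0
    samples = []
    for i in range(n):
        t = i / sample_rate
        # The modulation index decays over the note, so the spectrum
        # starts bright and becomes duller as the note sustains.
        index = peak_index * math.exp(-4.0 * t)
        modulator = math.sin(2 * math.pi * freq_hz * ratio * t)
        # The modulator output is added to the carrier's phase.
        samples.append(math.sin(2 * math.pi * freq_hz * t + index * modulator))
    return samples

soft = fm_note(220.0, velocity=0)    # index 0: a plain sine wave
hard = fm_note(220.0, velocity=127)  # high index: many added sidebands
```

A mod wheel, breath controller, or aftertouch value could be fed into `index` in the same way while the note is sounding, which is the kind of after-the-fact control described above.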

It is true that the basic controller interface (and feedback, or lack thereof) hasn't really progressed beyond the piano-style keyboard (plus knobs). Different kinds of controller do exist, for example the Haken Continuum, but the market for "different" controllers has tended to be, shall we say, modest. The breath controller that came with the DX7 wasn't used much, either.

(Caveat: I admit I'm not entirely up to speed with the market, e.g. there are various controllers from the likes of Native Instruments.)

Digital instruments are also abstract themselves. They are embodied in matter, but there is no “analog” between the motion of the molecules in the instrument (a computer) and the sound that comes out of the speakers. They don’t actually make sound, they make numbers. In many cases, when listening to electronic music you are hearing first generation sound production.

In response to user input, they generate PCM streams which are a digitally coded form of the sound, yes.

BTW, synthesis actually began in the analogue world. (The old modular Moogs and the like barely kept in tune, or some have said they were a real pain to get and keep in tune, if you want "expressive!")

Also, "programming" can be done at different stages--when creating a sound itself (e.g. how it evolves and how it responds to modulation commands) or conversely inputting notes into a sequencer and adding modulation to control the sound in the sequencer, too.

Anyway, this is at risk of completely losing the plot. There have definitely been changes to the way music is made, but "studio" recordings are not "live" recordings. Every trick in the book has always been used just as soon as it was possible to do so, from compiling multiple vocal takes, double tracking vocals, to erasing the start of a bass guitar note to be in time with the kick, and long before "Auto-tune," adjusting tape speed to get vocals in tune!

For example, Blondie, apparently, were NOT happy with producer Mike Chapman when recording "Parallel Lines," as never ending takes were required to get everything perfectly timed.

As a quick reference to what I’m talking about, compare any modern pop to the greats of classic rock. Apples and oranges.

"Pop" music, at least by my definition, is not "rock" (i.e. guitar band) music.

e.g. This is pop music...

This is not:

OK, so I've cited "bubblegum" pop vs. "progressive" rock. But the point remains: Teenage girls don't buy Pink Floyd records and sing along to them with a hairbrush... wrong market!

xr100

Addicted to Fun and Learning

Initially, I thought she was rubbish.

Still wouldn't buy her album or attend a show, but she nailed this.

Overwrought, tedious and rhythmically too influenced by modern "Rn'B" for my taste. Sorry. :-(

xr100

Addicted to Fun and Learning

Anything is better than rap / hip-hop, no melody, lyrics about gangsters and asses.

Science Proves: Pop Music Has Actually Gotten Worse

I listened to J-Lo from the kitchen during the half-time show tonight...

And I'm watching the game with my back to it, while trying to make a nice score on YMH20, just in case something exciting seems to have happened.

I'll probably replay the half-time later tonight, because when I peeked it at least looked exciting.

OMG, so excited, Chiefs win! (He says, wearing his Mahomes jersey!)

Wasn't it a great game!

I'm not into current music, generally. I catch up a year or five later, picking out what I like. That said, sitting here, listening to Bad Guy, the second track on her album, I think this is legit quality music. Creative and fun. Her voice is all breathy and interesting. The production quality might not be A+. OK, it totally sounds home produced, but tons of great albums are poorly recorded. The production creativity is perfectly fine and interesting. It isn't Neptunes, but it's a sight more interesting than most pop.

That's a bit snobbish.

Anything is better than rap / hip-hop, no melody, lyrics about gangsters and asses. Billie is at least original.

xr100

Addicted to Fun and Learning

That's a bit snobbish.

That's rap/hip-hop? (Didn't listen to the whole thing, but...)

I think it is pretty unquestionable that rap/hip-hop has had a profound effect on popular music as a whole. For one, it has led to the typical use of repetitive 4 chord harmonic structures throughout verse/pre-chorus/chorus. Not that a good song can't be written that way, but it tends towards boring compared to "traditional" songwriting techniques such as shoving in a "surprise" pivot chord before the chorus and seamlessly changing key...

The Roots are one of the biggest names in hip hop... and they have been for the past 30 years. And yes, this is hip hop.

xr100

Addicted to Fun and Learning

The Roots are one of the biggest names in Hip hop...and they have been for the past 30 years. And yes this is hip hop.

Having listened to some more, yes, (alas) I can hear that...

So, sorry to come here late. I am one of the "almost" old farts that tries to keep up with modern music. One never knows when the next Zepp will come along. And I didn't know much about Eilish until I had dinner with my kids last week (28 and 24 years old). They enlightened me a bit about her. I first thought she was phony but was very attracted by the fact that she was trying to sell music without sex. Unlike those two 43 and 50 year old women last night (I digress).

My kids made me go back and listen to some of her music and I also watched some of the interviews of her (them). To be fair, it should be the Eilish siblings and not just Billie. Rolling Stone has a video (or more) of them showing how they compose the music and how they produce. You can see the equipment they have and also understand why the record doesn't sound better than it does. Look at these tiny monitors! But I think they are sincere in trying to make good music. You may like it or not, but I give them more credit now.

Here is one (just remember, she is 17/18).

Tks

Major Contributor

- Joined

- Apr 1, 2019

- Messages

- 3,221

- Likes

- 5,496

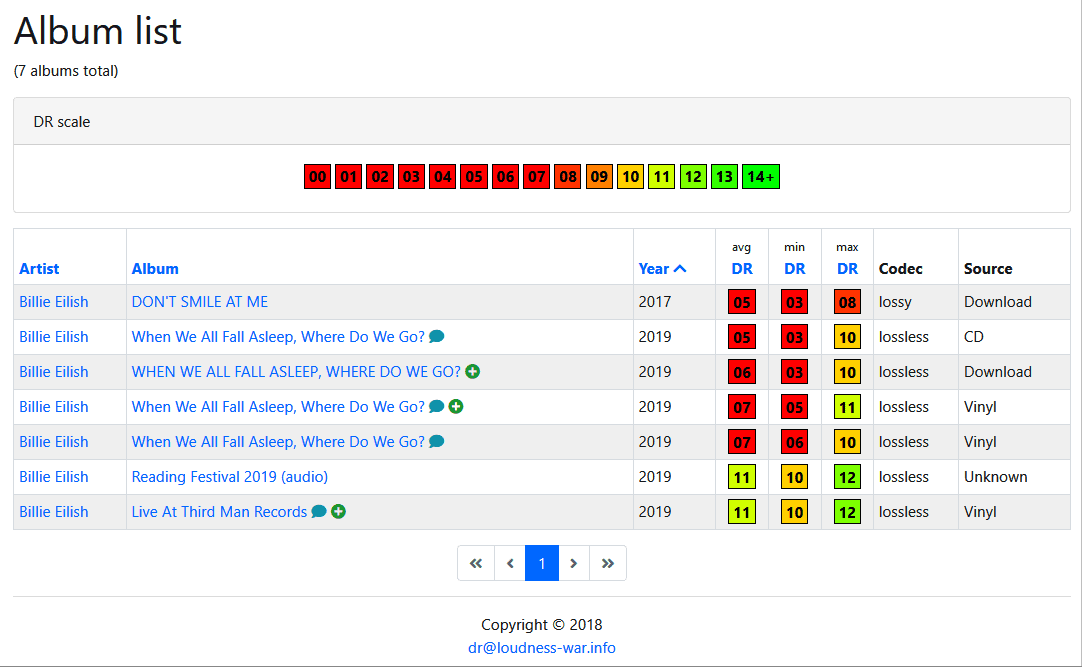

The biggest problem with modern music is just the massive amount of it released with crushed dynamics. Everything else I think is pretty much okay, and boundaries are constantly being pushed even in the mainstream (technically speaking, even if such instances aren't always something I find personally tasteful). But man... must so much music have so much compression constantly? I'm noticing it even in orchestral pieces for movie/video game soundtracks.

She is in good company. The other albums did not come above 11. Had to use Audacity to make it listenable.

Billie Eilish,

http://dr.loudness-war.info/album/list/year?artist=Billie+Eilish

Only the live recordings have good DR. For the others, the studio recordings, we only need a cheap audio system and a few watts to reach the SPL in the room.

Such is the current misfortune with commercial music. It is so compressed that only cheap audio equipment is required. It only matters that it sounds loud on mobile phones and YouTube, not the sound quality -> bad time for the audio industry will continue and worsen.
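For anyone who wants to see what "crushed dynamics" does to the numbers, here is a rough sketch. It computes the crest factor (peak level over RMS level, in dB), which is only a crude proxy and is not the algorithm behind the DR values in the database linked above. The helper names `crest_factor_db` and `hard_clip` are made up for this example, and hard clipping stands in for a real limiter or compressor.

```python
import math

def crest_factor_db(samples):
    """Peak-to-RMS ratio in dB; higher means more headroom between peaks and average level."""
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20 * math.log10(peak / rms)

def hard_clip(samples, gain, ceiling=1.0):
    """Boost the signal, then flatten everything above the ceiling (a crude 'loudness' treatment)."""
    return [max(-ceiling, min(ceiling, s * gain)) for s in samples]

# Ten whole cycles of a sine wave as a stand-in test signal.
sine = [math.sin(2 * math.pi * i / 100) for i in range(1000)]
clean_cf = crest_factor_db(sine)
crushed_cf = crest_factor_db(hard_clip(sine, gain=4.0))
```

The clipped version ends up louder on average but with a smaller crest factor, which is the same direction of change that low DR scores point to.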
