Your questions definitely were reasonable.

As @voodooless notes, the issue is twofold:

1. The M-Scaler's measured performance (jitter, SINAD, etc.), while not bad enough to produce any audible problems, is notably mediocre for a digital component like a DAC. (The M-Scaler isn't exactly a DAC, but that's the closest analogue in terms of expectations and benchmarks for measured performance.) So given its price and performance, it's not a good choice.

2. More fundamentally, the M-Scaler is claimed to "reconstruct" missing information by filling in transients lost with a digital source's original sample rate, by increasing the sample rate and thereby the "resolution" of the signal. There is no evidence that it does this - and that is because it CANNOT do this, for two reasons:

(a) Once a digital recording has been made at (or downsampled to) a given sample rate, like 44.1kHz for example, that's it: any missing information is permanently gone and cannot be reconstructed. Any upsampling just derives new samples from the existing ones (at its crudest, by duplicating them); it cannot add information that was never captured. It's not like frame interpolation with video, where a TV or processor can create new frames in between existing ones by combining the two existing frames and therefore create the impression of smoother motion (the so-called, and much-detested, "soap opera effect" when such processing is applied to 24 frame-per-second movies). A simple way to think about why audio cannot be thought of or dealt with like video in this way is to remember that it is possible to freeze-frame a video and step through each individual frame one by one. If extra frames have been added, you can clearly see that in such a scenario. But with audio you cannot freeze-frame samples and actually listen to the sound progressing sample by sample, and have your brain be able to detect or make any sense of it, because audio plays in time while video is displayed in space. You can take advantage of time to visually inspect a frozen video frame. You cannot take advantage of space to audibly inspect a frozen audio sample. If you load up a song into Audacity or any other audio app, select a single sample, and press Play, as far as I know you will either hear nothing, or else you might hear a momentary "tick," which you cannot even begin to make any sense of as a musical sound. (Of course you can VISUALLY inspect a single audio sample in an audio editing app, but that's not the same thing as being able to LISTEN to that sample in that way.)

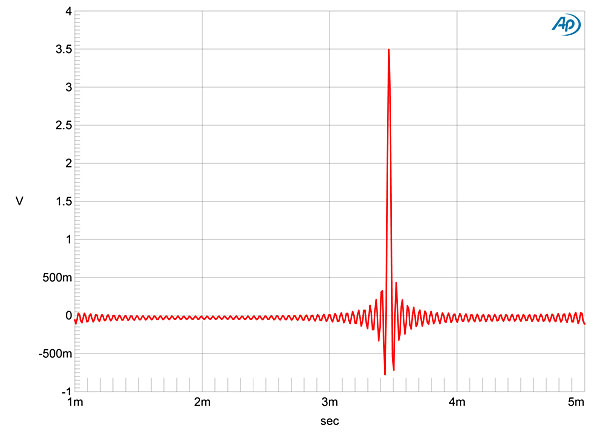
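For anyone who wants to check point (a) numerically, here is a rough sketch in plain Python. It is not how the M-Scaler or any real product works: `upsample_2x` is a hypothetical helper that does textbook band-limited 2x upsampling by zero-padding the spectrum of a short periodic test block, and the test signal is made up. The point is only that the upsampled signal passes through the original samples unchanged and contains nothing above the original Nyquist frequency.

```python
import cmath
import math


def dft(x):
    """Naive discrete Fourier transform (fine for short illustrative blocks)."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def idft(spectrum):
    """Naive inverse DFT, returning complex samples."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
        for t in range(n)
    ]


def upsample_2x(x):
    """Hypothetical helper: ideal 2x upsampling of a periodic block.

    Assumes an even-length block with no energy exactly at its Nyquist bin.
    The new high-frequency bins are filled with zeros, because the original
    samples say nothing about what might have been there.
    """
    n = len(x)
    half = n // 2
    spectrum = dft(x)
    padded = spectrum[:half] + [0j] * n + spectrum[half:]
    # Factor 2 compensates for the inverse DFT now dividing by 2n instead of n.
    return [2 * value.real for value in idft(padded)]


# Made-up test block: two tones, both below the original Nyquist bin (16).
N = 32
original = [
    math.sin(2 * math.pi * 3 * t / N) + 0.5 * math.cos(2 * math.pi * 10 * t / N)
    for t in range(N)
]
upsampled = upsample_2x(original)

# Every second upsampled sample is just one of the original samples.
max_sample_error = max(abs(upsampled[2 * i] - original[i]) for i in range(N))

# Energy in the "new" part of the spectrum (above the old Nyquist) is zero.
up_spectrum = dft(upsampled)
max_new_band = max(abs(up_spectrum[k]) for k in range(N // 2, N // 2 + N))

print("largest deviation from original samples:", max_sample_error)
print("largest magnitude in the newly added band:", max_new_band)
```

Both printed numbers should come out at floating-point noise level: the extra samples are fully determined by the ones already there.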

(b) Perhaps even more fundamentally, a higher sample rate BY DEFINITION cannot restore transients, because a sample rate of 44.1kHz is ALREADY capable of capturing the "fastest" transients that any human being can hear. This is a very basic and common misconception about digital audio. IMHO it's actually a quite understandable misconception for us non-experts, but for someone like Rob Watts it's either a stunning level of ignorance or a knowing act of deception. This becomes clear when we realize that the frequency of a sound literally IS its "speed." Any transient that is "too fast" to be encoded by a 44.1kHz sample rate is also, BY DEFINITION, at too high a frequency to be heard by humans.
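A quick back-of-the-envelope check of point (b), again as a plain Python sketch rather than anything authoritative. The 20kHz hearing limit is my assumption (the commonly cited figure), and the 30kHz tone is an arbitrary example. The code shows that the Nyquist frequency of a 44.1kHz stream sits above that limit, and that a tone above Nyquist produces exactly the same samples (up to sign) as a lower-frequency alias, which is why such content cannot be encoded at this rate at all.

```python
import math

SAMPLE_RATE_HZ = 44_100
HEARING_LIMIT_HZ = 20_000  # assumption: commonly cited upper limit of human hearing


def nyquist_hz(sample_rate_hz):
    """Highest frequency a given sample rate can represent."""
    return sample_rate_hz / 2


def sample_sine(freq_hz, sample_rate_hz, count):
    """Sample a unit-amplitude sine wave at the given rate."""
    return [
        math.sin(2 * math.pi * freq_hz * n / sample_rate_hz) for n in range(count)
    ]


# Arbitrary example tone above Nyquist, and its alias at 44,100 - 30,000 Hz.
too_fast = sample_sine(30_000, SAMPLE_RATE_HZ, 16)
alias = sample_sine(14_100, SAMPLE_RATE_HZ, 16)

# The two sample sets are mirror images: too_fast[n] == -alias[n].
max_mismatch = max(abs(a + b) for a, b in zip(too_fast, alias))

print("Nyquist frequency (Hz):", nyquist_hz(SAMPLE_RATE_HZ))
print("Nyquist above assumed hearing limit:", nyquist_hz(SAMPLE_RATE_HZ) > HEARING_LIMIT_HZ)
print("mismatch between 30kHz samples and inverted 14.1kHz samples:", max_mismatch)
```

So anything that really is "too fast" for 44.1kHz lives above 22.05kHz, which is already past the assumed limit of hearing.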

So the test was "doomed from the start," yes - but @amirm tested it because the only way you can scientifically address claims like the ones Chord makes for the M-Scaler is to test those claims. Testing a device that could not do what it says was not a great option - but it was a better option than the alternative, which would be to refuse to test it and then leave open an opportunity for others to say, "you claim the M-Scaler can't work but you won't even test it - that seems very unscientific and close-minded."