Below is what I replied to the Soundstage Network video, which was the impetus for the discussion over on the other site...

-----------------

I'll give my two cents, as a fellow reviewer who relies heavily on objective data to provide information.

For the record, I agree that listening should be done before measurements. We already know that ideally every step would be infinitely quadruple-blind. But that is just not feasible, at least not for regular folk like me. So I try to avoid the biases I can, to the highest degree I can.

When I get a speaker my review process is:

1) Before I get the speaker, I (try to) avoid as much information as I can. I know this seems counterintuitive to what a reviewer should do. You'd think you want a reviewer to be well versed in what they're about to review. But for me, that's just one more area of potential influence. If I study the design and try to discover the purpose, what others thought of it, etc., then I go into it with those preconceived notions.

2) I listen. I take a notepad, my iPhone or a laptop and I take notes. I've been DIY'ing, tuning and "judging" systems for over a decade and have steadily worked to align things I hear with frequencies. I don't claim to have a golden ear ... I don't have perfect pitch, I don't have a musician's background. But what I do is simply focus on the things I am familiar with. So with that and with some bit of logic, when I hear something in a song that stands out - for one reason or another - I am able to get a pretty reasonable estimate of the frequency at which it occurs. Maybe within an octave or so. Sometimes better. Sometimes worse. Sometimes it is not the fundamental that is the issue but the harmonic. I use a website called SoundGym for ear training. It's worth checking out if anyone out there wants to work on their listening skills as a reviewer. I write down the frequency range I think is the problem. For example, if I hear a lower male vocal resonance then I might say "resonance ~150Hz". Otherwise, me saying "midrange" doesn't help me when I'm trying to fine tune. In a way, I suppose it is very similar to the method you mentioned where listeners were instructed to draw out the response they think the speakers had as they were listening. But doing this also helps me to separate the speaker from the room, because if the anechoic response(s) doesn't indicate an issue then I can check the measured in-room/in-situ response to see if that might be the issue.

3) I measure the anechoic response and do my other measurements. The whole dang ordeal.

4) I look at my notes. I compare them with the measurements. I try to find things that correlate.

5) I re-listen with the measurements in mind. I sometimes use EQ to adjust the things I noted based on the measurements to see if that seems to resolve any issues I heard.

6) I do some research on forums and other reviews to see what others thought of the speaker and what questions folks have about the speaker that I can try to address.

*) Somewhere in there (after step 2) I will measure the in-room response with a spatial average. Usually I share this data as well but I've gotten to where I don't lately simply because I don't want to eat up the bandwidth and I don't see the benefit anymore.
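For anyone who wants to try the "compare notes with measurements" part themselves, here is a rough Python sketch of two pieces of it: averaging in-room curves from several mic positions, and checking whether a listening note like "resonance ~150Hz" lines up with something in the measured curve within about an octave. This is only an illustration under my own assumptions, not the exact tooling used for the reviews. The function names, the power (energy) averaging, the use of the curve's mean level as the reference, and the sample numbers are all made up for the example.

```python
import math


def spatial_average_db(curves_db):
    """Power-average magnitude curves (in dB) taken at several mic positions.

    curves_db: list of equal-length lists, one per mic position, all on the
    same frequency grid. Power averaging is an assumption here; other
    averaging schemes exist.
    """
    count = len(curves_db)
    averaged = []
    for levels in zip(*curves_db):
        mean_power = sum(10 ** (level / 10) for level in levels) / count
        averaged.append(10 * math.log10(mean_power))
    return averaged


def largest_deviation_near(freqs_hz, levels_db, noted_hz, window_octaves=1.0):
    """Find the biggest departure from the curve's mean level near a noted frequency.

    Searches within +/- window_octaves of noted_hz and returns
    (frequency_hz, deviation_db), or None if no measured point falls in range.
    Using the mean level as the reference is a simplification for illustration.
    """
    reference = sum(levels_db) / len(levels_db)
    low = noted_hz / 2 ** window_octaves
    high = noted_hz * 2 ** window_octaves
    in_window = [
        (freq, level - reference)
        for freq, level in zip(freqs_hz, levels_db)
        if low <= freq <= high
    ]
    if not in_window:
        return None
    return max(in_window, key=lambda pair: abs(pair[1]))


# Hypothetical data: two mic positions on a coarse frequency grid.
freqs = [50, 100, 160, 250, 500, 1000, 2000]
position_a = [80.0, 82.0, 88.0, 83.0, 82.0, 81.0, 80.0]
position_b = [79.0, 83.0, 87.0, 82.0, 82.0, 80.0, 81.0]

in_room = spatial_average_db([position_a, position_b])
# Listening note said "resonance ~150Hz"; look within an octave of that.
print(largest_deviation_near(freqs, in_room, noted_hz=150))
```

If the search comes back with a bump close to the noted frequency, that is a correlation worth re-listening for. If the anechoic data shows nothing there but the in-room average does, the room is the more likely suspect.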

I take all the above and... I tell people: "hey, here is what I did, what I heard, what I see in the measurements, and what I think all this means".

I know me. I know myself well enough, from years of experience building, measuring and manually tuning systems (usually only via DSP), to know that I will be influenced by what I see in the measurements. I found that measuring first and then listening is counterproductive when I'm trying to improve a system. What typically wound up happening is that I would start trying to "fix" the things the measurements showed to be a "problem". While that sometimes worked, it most often resulted in me over-correcting the response, or spending too much time worrying about things that were less problematic while missing other attributes that maybe should have gotten more attention. How does "tuning" a system relate to "evaluating" a system? Well, they're not the same, for sure. But the end goal is the same: to discover things you like or do not like, and then work to improve them. In a DIY design that's necessary. In a completed speaker, there really is nothing you can do. But the process still yields the same useful information.

That's my personal process. It is what seems logical to me and it has worked well for me, my friends and (hopefully) those who choose to read/watch my reviews as well.

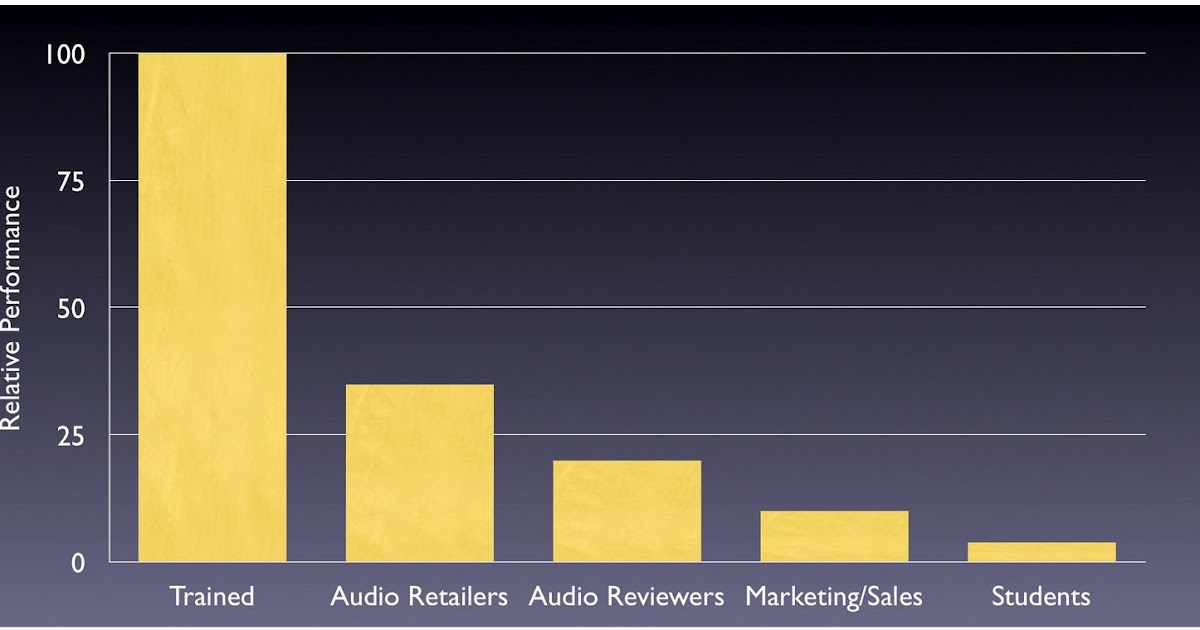

I am sure some will disagree with your video and therefore my methods as well. I can’t change how others review products. And frankly, I don’t care enough to belabor the point. I’ll worry about myself and provide my reviews in the way I feel is most helpful to my audience, and if there is pushback then I’ll reconsider my methodology. Having said that, I think it says a lot that when the studies were done on what listeners preferred, the listeners were not exposed to the measurements first but rather instructed to draw the response curve, and those curves were then compared to the data to find out what people generally liked/disliked. Per Dr. Toole: "When listening “blind,” without the biasing influences of price, size, brand and appearance, people turn out to be remarkably similar in [terms of] what they like and dislike." But, as I said above, without research funds to pay for the means necessary to do these kinds of reviews, we do the best we can. Therefore, it is logical that by adding another previously-known variable (in this case "measurements") to the queue, you are even further away from the goal of removing biases. If there is a reviewer out there with their own Harman-esque speaker comparator for double-blind listening evaluation, then they aren't sharing that info.

My long-winded two cents is now over.

- Erin