If everything is that sensitive it seems like the hardware truly sucks, maybe that's the industry standard in the recording industry, I don't know.

Correct, you don't know.

EquiTech 1.5RQ Balanced Power Review

- Thread starter amirm

- Start date

michaelahess

Member

- Joined: Oct 31, 2020
- Messages: 72
- Likes: 60

Correct, you don't know.

Seems like maybe that's something you need then. Someone to say "WTF?!?!" Why are we doing this stupid, dangerous, expensive workaround instead of holding the manufacturers of our equipment to a higher standard?

Take this times dozens of racks of gear, with hundreds of millions of dollars in equipment, copper, fiber, everything. Far more power than any audio recording studio would ever use. No noise issues on any of it. Your little board has a problem with MUCH more coarse signals? Come on...

This is a joke, right?

View attachment 141643

michaelahess

This is a joke right?

LOL nope. This was a datacenter that came under my responsibility. This is before it was rebuilt, and is only a small part of it. It was rebuilt because it looked like this...not because it didn't work. Thus making my point.

Audio is easy, engineers are lazy.

Geert

Major Contributor

- Joined: Mar 20, 2020
- Messages: 1,955
- Likes: 3,570

Take this times dozens of racks of gear, with hundreds of millions of dollars in equipment, copper, fiber, everything. Far more power than any audio recording studio would ever use. No noise issues on any of it.

You transmit analog audio via ethernet cables? I've never seen anyone measuring copper cable noise in a data center.

michaelahess

You transmit analog audio via ethernet cables? I've never seen anyone measuring copper cable noise in a data center.

You take all that gear and pass analog through it? Really? Well, there's your problem. Mics and recording devices should be digitized immediately in the recording room. EVERYTHING else should be in the digital realm until hitting the DAC to your monitor amps/speakers.

And yes, I've absolutely had to measure in data centers. COAX, as well as Ethernet. Noise isn't a thing to us. If it is found, it's a REALLY dumb thing that was done. Poor crimp, bad bond, kinked cable, a million and one things.

Geert

You take all that gear and pass analog through it? Really? Well there's your problem. Mic's and recording devices should be digitized immediately in the recording room. EVERYTHING else should be in the digital realm until hitting the DAC to your monitor amps/speakers.

Yes, most of that outboard gear runs analog signals. Why? Because studios have existed much longer than digital, so a lot of the popular audio equipment has no digital connections. And studios that use analog consoles don't digitize microphone inputs. Don't come here lecturing us about something you know absolutely nothing about.

Noise isn't a thing to us.

Indeed, as it is very rare to start with, and it needs to be very bad before it becomes a problem (has an impact on the network signal). Most network engineers are not capable of troubleshooting a ground loop in a sound studio.

Audio is easy, engineers are lazy.

A troll looking for attention, I get it now.

michaelahess

Just because it "has been" doesn't mean it "needs to" going forward.

But if you enjoy dealing with archaic backwards methods and technology, have at it. I'll continue to embrace the future and adapt with it instead of fighting against it.

And yeah I've dealt with tons of analog, maybe it's not clear but I was in the cable industry for a very long time. We learned very quickly to adopt digital everywhere possible. It's not rocket science. Quality skyrocketed and costs and man hours plummeted.

Analog in - Digital capture/processing - Analog out. That's how records are made today.

michaelahess

Analog in - Digital capture/processing - Analog out. That's how records are made today.

So it's just the hardware manufacturers that can't get their poop in a group? A UPS on either side would resolve the possibility of any noise. And you say analog out...but is it? Really?

I assume that besides high-res uncompressed digital files, you still do a pressing of a CD master, which is digital. Only literal records would require analog out. And seriously, who uses those for anything approaching professional grade in this day and age?

With copper prices getting higher, you might even get more money for it.

https://tradingeconomics.com/commodity/copper

Copper theft is a thing...

https://www.todayonline.com/singapore/man-stole-copper-wires-mall-finance-wifes-chemotherapy

Has been for a long time - I used to work for the British Transport Police and they have officers dedicated to dealing with cable theft.

Helicopter

Major Contributor

Has been for a long time - I used to work for the British Transport Police and they have officers dedicated to dealing with cable theft.

A big problem with the foreclosed buildings in Detroit is they don't have any copper in them, and were damaged by its removal.

Mic's and recording devices should be digitized immediately in the recording room. EVERYTHING else should be in the digital realm until hitting the DAC to your monitor amps/speakers.

In some sort of utopian fictional world maybe. The simple reality is that this doesn't happen. It isn't the current technology. I agree, it could be a better way. It would also be much more expensive, and would obsolete a massive amount of existing technology. So sure, in some fictional universe where there is infinite money, and infinite time to tear out everything and start afresh, one could do this.

But simply dismissing the current systems and the solutions to noise as snake oil is simply ignorant trolling. You have no idea how recording studios work or the requirements they meet.

And seriously, who uses those for anything approaching professional grade in this day and age?

Again, you have no clue about the industry or how existing real life studios work. No, not every studio is end to end DAW. Thinking that is simply naive.

Oh, and once again, the Equitech systems are not line conditioners. Stop comparing them to line conditioners. All it does is show you haven't read the thread and don't know what you are talking about. They address a different and quite real problem.

So it's just the hardware manufacturers that can't get their poop in a group? A UPS on either side would resolve the possibility of any noise. And you say analog out...but is it? Really?

I assume besides high rez uncompressed digital files, you still do a pressing of a CD master, which is digital. Only literal records would require analog out. And seriously, who uses those for anything approaching professional grade in this day and age?

We monitor what we are doing in analog, lol

Digital data is not as noise sensitive as an analog audio signal. A one's a one and a zero's a zero, we don't care about what's in between, and the digital signal swings between the two states are large. There's also error correction available to digital signals that is not available to analog ones. Of course, analog audio signals are slow changing compared to digital ones, so transmission of analog is not as tricky as sending something with 5 ns edges down a cable. They both have their own problems, all of which can be successfully dealt with.
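To put a rough picture on the "a one's a one and a zero's a zero" point, here is a minimal Python sketch. The 0/1 signal levels, the 0.5 decision threshold, and the bounded uniform noise are all assumptions made for illustration, not a model of any real link. It only shows that noise smaller than half the swing disappears at a digital receiver, while the same noise lands directly on an analog signal.

```python
import random

def add_noise(samples, noise_peak, rng):
    """Add bounded uniform noise (a crude stand-in for interference) to each sample."""
    return [s + rng.uniform(-noise_peak, noise_peak) for s in samples]

def recover_bits(samples, threshold=0.5):
    """A digital receiver only asks: is the sample above or below the threshold?"""
    return [1 if s > threshold else 0 for s in samples]

rng = random.Random(0)  # fixed seed so the run is repeatable

# Digital case: levels 0.0 and 1.0, noise peak of 0.3 (less than half the swing).
bits = [rng.randint(0, 1) for _ in range(1000)]
received = add_noise([float(b) for b in bits], noise_peak=0.3, rng=rng)
bit_errors = sum(a != b for a, b in zip(bits, recover_bits(received)))

# Analog case: the value itself is the information, so the noise stays in it.
analog = [rng.uniform(0.0, 1.0) for _ in range(1000)]
analog_received = add_noise(analog, noise_peak=0.3, rng=rng)
worst_analog_error = max(abs(a - b) for a, b in zip(analog, analog_received))

print("bit errors:", bit_errors)
print("worst analog error:", round(worst_analog_error, 3))
```

Once the noise peak exceeds half the swing, bit errors start to appear, which is where the error correction mentioned above comes in.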

Mic's and recording devices should be digitized immediately in the recording room. EVERYTHING else should be in the digital realm until hitting the DAC to your monitor amps/speakers.

Record making is an art form, not data distribution. Should painters stop painting portraits with oil and only use digital photography because it's more "accurate"?

Off topic, but one of my favourite areas. In the real world there isn't any such thing as a digital signal. One of the first lessons any digital designer learns is that everything is analog. Nice crisp waveforms representing ones and zeros are great for representation and explaining principles, but if you hook a scope up to your signals and they look like that, all it tells you is that you can go a lot faster, and are not trying hard enough.

Eventually everything returns to Shannon. The information-carrying capacity of a channel is defined by its signal-to-noise ratio and its bandwidth (strictly, the integral of log2(1 + S/N) over the bandwidth). This is true no matter what signal you run down the line. What actually gets sent down the wire looks a lot different to the simple idea of ones and zeros. That went out with serial lines decades ago. Now quadrature encoding is pretty standard, and the actual line driver and receiver systems are near science fiction.

Getting a gigabit signal to transit through a connector that was designed for nothing more than voice is a triumph. Every gigabit PHY contains adaptive active line driver logic and effectively a miniature TDR. What they are capable of doing to get the signal through is incredible. 10Gb systems defy the imagination. They work because they wring every last drop of capability out of the channel. They don't do this by sending nice sharp-edged bits down the wire; they do it by treating the system as a total mess of an analog system, characterising it to the nth degree, and pushing the edge of what Shannon says they can achieve by pulling every analog-level trick possible to find corners they can cram a few more bits into.
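For anyone who wants to play with the Shannon limit mentioned above, a minimal Python sketch follows. It uses the standard Shannon-Hartley formula C = B * log2(1 + S/N), plus a per-band sum as a crude approximation of the integral over frequency. The function names and the example bandwidth and SNR figures are hypothetical, chosen only for illustration, and do not describe any real cable or PHY.

```python
import math

def shannon_capacity(bandwidth_hz, snr_db):
    """Shannon-Hartley capacity in bits/s for a flat channel with the given SNR."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)

def capacity_over_bands(bands):
    """Approximate the integral of log2(1 + SNR(f)) df by summing flat sub-bands.

    `bands` is a list of (low_hz, high_hz, snr_db) tuples.
    """
    return sum(shannon_capacity(high - low, snr_db) for low, high, snr_db in bands)

# Hypothetical channel: good SNR at low frequencies, worse higher up.
example_bands = [
    (0.0, 50e6, 40.0),
    (50e6, 100e6, 25.0),
]

print("flat 100 MHz at 30 dB:", round(shannon_capacity(100e6, 30.0) / 1e6), "Mbit/s")
print("two-band example:", round(capacity_over_bands(example_bands) / 1e6), "Mbit/s")
```

The point of the per-band version is that real channels do not have a flat signal-to-noise ratio, so the capacity depends on how the SNR varies across the usable bandwidth.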

But of course the protocols include error correction, and TCP is robust against packet loss. So if you don't have a real time communication problem you can relax a bit and let that take care of problems. But if you have a real time problem, one where latency really matters, you need to be on top of things.

Latency is the big problem with any digital audio system. Domestic users hardly ever see this. People moan if the lip sync is a bit off. That is about it. You simply cannot perform audio recording if there is perceptible latency. The wheels fall off. This places a very hard and implacable boundary on what a digital system can achieve. One that no amount of fancy computational capacity can solve.
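As a back-of-envelope illustration of where that latency comes from, here is a minimal Python sketch. It assumes the simplest possible model, one buffer on the way in and one on the way out, with an optional lump figure for converters. The function names and the buffer sizes are hypothetical, and real systems add driver, network, and processing delays on top.

```python
def buffer_latency_ms(buffer_frames, sample_rate_hz):
    """Time needed to fill one buffer of audio, in milliseconds."""
    return 1000.0 * buffer_frames / sample_rate_hz

def round_trip_ms(buffer_frames, sample_rate_hz, converter_ms=0.0):
    """Simplified round trip: one input buffer, one output buffer, plus converters."""
    return 2 * buffer_latency_ms(buffer_frames, sample_rate_hz) + converter_ms

# Hypothetical buffer sizes at a 48 kHz sample rate.
for frames in (64, 256, 1024):
    print(frames, "frames:", round(round_trip_ms(frames, 48000), 2), "ms round trip")
```

The arithmetic shows why the boundary is a hard one: the delay is set by how many samples have to be collected before anything can be processed, and faster processing does not shrink that.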

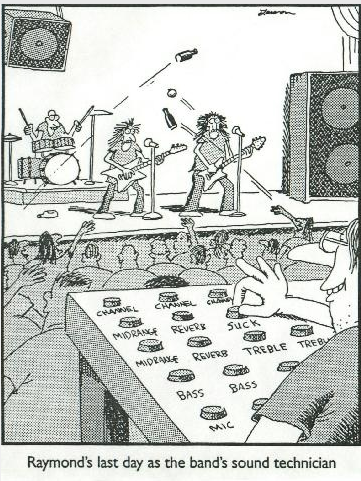

People may have heard stories about a live sound engineer taking revenge on a band that had annoyed him. Larson drew a famous cartoon.

There are various stories. One involves pitch shifting the foldback. But it is much much easier. Just delay the foldback slightly. The band will instantly suck.
