Thanks to Michael for bringing this paper to my attention. I already had it in my library but did not remember it.


Audio Capacitors. Myth or reality?

P. J. Duncan , P. S. Dodds , and N. Williams University of Salford, Greater Manchester, M5 4WT, UK. [email protected]

ICW Ltd, Wrexham, Wales, LL12 0PJ, UK. [email protected]

ICW Ltd, Wrexham, Wales, LL12 0PJ, UK. [email protected]

The paper hypothesizes that the mechanical resonance of a film capacitor, which occurs between 10 kHz and 30 kHz and so overlaps the audible band, could have a deleterious effect on audio when the capacitor is used in the crossover circuit of a speaker:

ABSTRACT

This paper gives an account of work carried out to assess the effects of metallised film polypropylene crossover capacitors on key sonic attributes of reproduced sound. The capacitors under investigation were found to be mechanically resonant within the audio frequency band, and results obtained from subjective listening tests have shown this to have a measurable effect on audio delivery. The listening test methodology employed in this study evolved from initial ABX type tests with set program material to the final A/B tests where trained test subjects used program material that they were familiar with. The main findings were that capacitors used in crossover circuitry can exhibit mechanical resonance, and that maximizing the listener's control over the listening situation and minimizing stress to the listener were necessary to obtain meaningful subjective test results.

Seems like a great study! Let's dig in to see if they deliver on that promise.

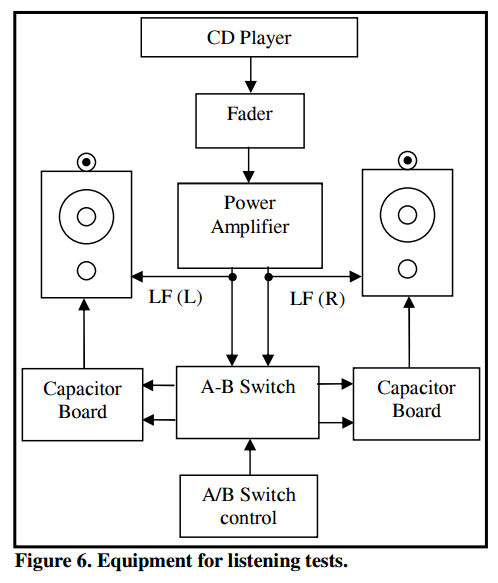

Let's focus on the listening part of the test. Here is the test setup:

The speakers in use are B&W 802s. The woofer is driven directly but the tweeter goes through an AB switch that selects between two different capacitors. They say they use the "bi-wire" connection of the B&W speakers, which to me sounds like the speaker's own crossover remained in the path. So I am guessing the capacitor under test was in series with that. This doesn't simulate what would happen if you changed a cap in the crossover. It simply adds another cap to the audio path, which would likely increase the filter slope of the tweeter.

They start off with an ABX test. As you may know with ABX you can hear capacitor A versus capacitor B and then a random A or B is played ("X") and you have to identify it. Initially they do a comparison of an electrolytic capacitor against film (both 4.7 microfarads). They used this for "training" which means it should be easy for listeners to tell them apart. They then followed that by testing one ordinary film capacitor against a fancier one:

"The initial ABX tests involved a panel of fourteen volunteer listeners comprising students and staff in the University. The listeners generally found the tests to be very difficult and quite tiring, and in general, the results from the initial ABX tests gave no usable outcome.

One surprising feature of the tests was that during the initial training part of the tests where the electrolytic capacitor was compared with a film capacitor, and the difference was fairly audible, many of the listeners were unable to correctly identify A or B. In the second part of the tests, where the differences in audio delivery from the two film capacitors was much more subtle, the panel was unable to correctly identify A or B and no useful results were obtained."

Translating that into English, the "easy" case turned out not to be so. What was obvious to the people who created the test was not obvious to the people taking the test blind. The difficulty of telling the caps apart went through the roof when the two capacitors were of the same kind, which is the actual purpose of this test. The testers failed to tell the capacitors apart in a double-blind ABX test.
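For anyone wondering how an ABX score is normally judged, here is a minimal sketch of the usual binomial check: how likely is it to get at least that many right by pure guessing? The paper does not report per-listener trial counts, so the scores below are hypothetical and `abx_p_value` is just a name I made up for illustration.

```python
from math import comb


def abx_p_value(correct: int, trials: int) -> float:
    """One-sided probability of scoring at least `correct` out of `trials`
    when every answer is a 50/50 guess (the null hypothesis in ABX)."""
    if not 0 <= correct <= trials:
        raise ValueError("correct must be between 0 and trials")
    # Count every outcome with `correct` or more right answers.
    favourable = sum(comb(trials, k) for k in range(correct, trials + 1))
    return favourable / 2 ** trials


# Hypothetical scores, NOT taken from the paper.
for correct, trials in [(9, 16), (12, 16), (14, 16)]:
    p = abx_p_value(correct, trials)
    print(f"{correct}/{trials} correct: p = {p:.4f}")
```

A small p-value (0.05 is the customary cutoff) says guessing alone is an unlikely explanation for the score. A score near half right is exactly what guessing produces.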

They regroup and declare two things:

1. Maybe some of the testers don't have proper training. This is a valid criticism and you do want to use the training phase to weed out people who are lost and can't tell obvious differences. The trick however is to know for sure the training outcome was predictable. That is, we want to have proof that audible difference exists and based on that filter out testers.

By way of example, if I want to test CD against high-res, one "control" for training would be to low-pass filter the CD down to 12 kHz. If someone can't tell that from the unfiltered CD, they sure as heck are not qualified to test CD against high-res.

The problem here is that we don't know that an electrolytic cap is indeed this bad or obvious to tell apart. The testers just assume so without any objective data or proof otherwise.

2. The experimenters blame the ABX test itself as faulty because the difference they could hear sighted could not be replicated blind using other testers. Well duh! That is why we do ABX tests: to see if sighted results can be replicated when knowledge is removed. If ABX tests don't agree with sighted ones, the arrow does NOT automatically point to the ABX tests being wrong. At a minimum it says that any audible difference must be very small. At worst it says no audible difference exists.

Sadly the organizers move on to a preference test of one capacitor against another. In their defense they cite ITU-R BS.1116, which is a highly recommended standard for determining fidelity differences between samples. BS.1116 was designed for testing lossy audio codecs where we "know" sonic differences exist. That is, we don't need to first use ABX testing to know there is a difference. That is a given.

Using that logic is a mistake in this context. It is not given at all that the caps of any sort sound different. They cannot as such skip the ABX phase and go to preference.

The problem with doing that is that you get false data. I can test two identical cans of soda and ask people which one tastes better, and one may come out ahead of the other. That alone means nothing. It is just the vagaries of human testers and too few trials.

The incredulity here for fancy caps versus standard is one where we don't believe there is any audible difference. That is the hypothesis that had to be proven or disproven. You can't assume an outcome of audible difference and then go and grade them as they did. Indeed their ABX testing showed that such reliable difference did NOT exist!

But they proceed with the AB preference test. Here, they use a reference capacitor and compare it to three others:

1. Elec is the electrolytic capacitor that is supposed to sound different.

2. Cap 1 is the same as reference so should sound identical.

3. Cap 2 is the fancy film capacitor which they hope sounds better.

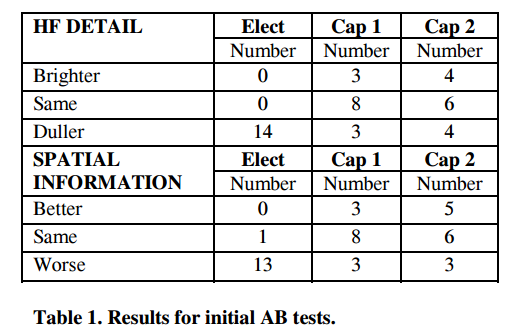

Here are the results:

Let's parse this as it is not easy.

The electrolytic capacitor was picked by 14 out of 14 testers/tests to sound duller, and by 13 out of 14 to have worse spatial information.

Cap 1, which is identical to its alternative in the AB test, should have generated 14 out of 14 people saying it is the same in both categories of preference. As is commonly the case, it did NOT generate that result. On the detail front, only 8 people thought it sounded the same as its identical twin. Three (3) people said it sounded brighter, and the same number (3) thought it sounded duller. Identical results were generated in the Spatial category, with just 8 people correctly identifying it as sounding the same.

You now see the problem I mentioned. Two identical caps being compared, yet people randomly give preference to one or the other.

The testers declare victory based on the results for the fancier film capacitor. There, six (6) people in both preference tests thought the caps sounded the same, two fewer than for the generic cap. One of those two people said the sound was duller. One said it was brighter. In other words, the people who shifted their votes did not agree on what they heard!

The spatial results did better. Here two people shifted their votes (presumably) from being the same to being better when comparing cap 1 to cap 2.

What does this mean? Nothing! A statistical analysis must be made to see whether either outcome, cap 1 or cap 2, is due to chance or not. None was provided in the paper. This is like doing math homework in high school and not showing your work!

That slight shift in preference does not speak at all to the validity of the outcome.
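To give an idea of the kind of check I am talking about, here is a rough sketch using Fisher's exact test on the "same" versus "different" votes quoted above: 8 of 14 said "same" for cap 1 and 6 of 14 said "same" for cap 2. This is my own back-of-the-envelope analysis, not anything from the paper. It also treats the two sets of votes as independent, which is a simplifying assumption since the same listeners rated both caps (a paired test would need the per-listener votes, which I don't have). The function name is made up for illustration.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher exact test for the 2x2 table [[a, b], [c, d]].

    Sums the probability of every table with the same margins that is
    no more likely than the observed one.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total = row1 + row2

    def ways(k: int) -> int:
        # Number of tables with k in the top-left cell (integer, so exact).
        return comb(row1, k) * comb(row2, col1 - k)

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    observed = ways(a)
    extreme = sum(ways(k) for k in range(lowest, highest + 1)
                  if ways(k) <= observed)
    return extreme / comb(total, col1)


# Rows: cap 1, cap 2. Columns: voted "same", voted "different".
p = fisher_exact_two_sided(8, 6, 6, 8)
print(f"p = {p:.3f}")
```

Under those assumptions the p-value comes out at roughly 0.7, nowhere near the usual 0.05 threshold. A shift of two votes out of fourteen is entirely consistent with chance.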

The last part of the paper, which I won't go into, again confuses the science of blind testing. It takes research showing that one trained listener is equivalent to 7 non-trained people, and performs some vague computation to exaggerate the preference ratings for the fancy cap.

Conclusion

My personal conclusion is that they proved one and only one thing: that when blind tested properly using ABX, listeners failed to hear a difference between the generic and fancy film capacitors. Maybe a different test would be more revealing. But for now, that is the only valid part of the paper. Preference tests are neither appropriate nor defensible when not backed by statistical analysis that can demonstrate whether listeners were voting randomly or not.
