DonR


Because double eliminates bias from creeping into the test. Triple doesn't further reduce something that has already been eliminated.

I can't decide whether to respond a) incorrect, or b) why not triple? Science is hard.


Uh huh.

The reason for the apparent contradiction is that the controls in a properly implemented blind test are designed to prevent any biases from influencing the result. Biases can alter the subject's perception so that they hear a difference even when none exists.

If the controls are NOT properly implemented, subjects are more likely to perceive a difference where none exists. However, poorly implemented controls will never hide a difference that does exist.

So that means if someone does not implement the controls properly but STILL fails to detect a difference in the test, it really doesn't matter much that the test setup was flawed. A flawed test setup won't prevent you from hearing a difference that genuinely exists.

So for a negative result, the test setup doesn't need to be questioned to the same extent, or at all: if I can't hear a difference even in a fully sighted test, then I can't hear it. Test controls are not needed.

I don't agree that this is a good reason not to question testing methodologies when the result confirms your hypothesis.


No, it is not being ignored when it confirms a hypothesis. It is being ignored when it can't have had an influence on the result.

Oh crap, I'd decided not to post that, because pedantry, but it must have still been in the reply box. I'll have to stand by it now though. Your post is nonsense (using the term idiomatically, not literally). Single, double and triple blind are all valid methodologically, and usage depends on a number of factors (what we are testing, what we need to control, etc.). Triple is absolutely necessary in certain test/analysis scenarios.

Uh huh.


Assuming good faith on the OP's part, and that I get your previous drift, it's correct that type III errors (where you still reach the correct result) aren't usually serious, but it isn't correct to say they can't occur. We are also assuming we aren't seeing a type I or type II error. But as the post above indicates, we don't know that yet.
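For an ABX run, the type I and type II error rates mentioned above can be put into numbers with a binomial model. The sketch below is a minimal illustration, not anything from the OP's test: the 16 trials, the 12-correct pass threshold and the 70% per-trial hit rate are hypothetical values chosen only to show the calculation, and it assumes trials are independent.

```python
from math import comb


def binom_tail(n: int, k: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def abx_error_rates(n_trials: int, pass_threshold: int, true_hit_rate: float) -> tuple[float, float]:
    """Return (type I, type II) error probabilities for one ABX run.

    Type I: a listener who is purely guessing (hit rate 0.5 per trial)
    still reaches the pass threshold, i.e. a false "I can hear it".
    Type II: a listener whose real per-trial hit rate is `true_hit_rate`
    falls short of the threshold, i.e. a real difference goes undetected.
    """
    type_1 = binom_tail(n_trials, pass_threshold, 0.5)
    type_2 = 1.0 - binom_tail(n_trials, pass_threshold, true_hit_rate)
    return type_1, type_2


# Hypothetical run: 16 trials, pass at 12 correct, listener right 70% of the time.
alpha, beta = abx_error_rates(16, 12, 0.7)
print(f"type I = {alpha:.3f}, type II = {beta:.3f}")
```

With these made-up numbers, a pure guesser passes a little under 4% of the time, while a listener who genuinely hears the difference on 70% of trials would still fail the run slightly more than half the time. The threshold trades one error against the other.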

If I understand the result correctly, did it show that most attempts couldn't tell a difference, but one out of 72 could? That a difference was heard by someone with very good hearing?

Perhaps you will find my online DAC ABX test interesting as well. That one compared an objectively flawed low-budget DAC (FiiO D03K) with one that has state-of-the-art (SOTA) performance (Topping E50), and so far the large majority of participants seem to be struggling to detect any difference.
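On the "one out of 72" question: when many listeners each run an ABX test, some will pass by luck even if nobody hears anything. A minimal sketch, assuming (hypothetically, since the thread doesn't say) that "72" means 72 independent runs of 16 trials each with a pass threshold of 12 correct:

```python
from math import comb
from typing import Optional


def guess_pass_prob(n_trials: int, pass_threshold: int) -> float:
    """Chance that a purely guessing listener (p = 0.5 per trial) scores
    at least `pass_threshold` correct out of `n_trials`."""
    hits = sum(comb(n_trials, i) for i in range(pass_threshold, n_trials + 1))
    return hits / 2**n_trials


def at_least_one_lucky_pass(n_runs: int, n_trials: int, pass_threshold: int) -> float:
    """Chance that at least one of `n_runs` independent guessing runs passes."""
    alpha = guess_pass_prob(n_trials, pass_threshold)
    return 1.0 - (1.0 - alpha) ** n_runs


def family_threshold(n_runs: int, n_trials: int, family_alpha: float = 0.05) -> Optional[int]:
    """Smallest per-run pass threshold that keeps the chance of any lucky
    pass across all runs at or below `family_alpha`."""
    for k in range(n_trials + 1):
        if at_least_one_lucky_pass(n_runs, n_trials, k) <= family_alpha:
            return k
    return None


# Hypothetical: 72 independent runs of 16 trials, each passing at 12 correct.
print(f"P(at least one lucky pass) = {at_least_one_lucky_pass(72, 16, 12):.2f}")
print("per-run threshold for a 5% family-wise rate:", family_threshold(72, 16))
```

Under those assumptions the chance that someone passes by luck is over 90%, and the per-run bar would have to rise to 15 of 16 to keep it below 5%. A single pass among many runs is a reason to retest that listener, not a conclusion in either direction.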

But we do not know that is true until we know the methodology. If the methodology did not test a differentiating feature, then that invalidates the conclusion of the ABX test. Or if the test structure made differentiation difficult or impossible, I would say that invalidates the conclusion of no differentiation.

Maybe they only played drum & bass, not female vocals. Or maybe they played 10-minute segments for A, B, C, and D, so by the time X came around, of course one could not clearly attribute it to A, B, C, or D. Or maybe they did ABX choosing pairwise, but did not cover all pairs equally.

So, I do not accept the premise that a flawed test that indicates no differentiation still implies no differentiation in a test without those flaws.
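The last concern above, pairwise ABX that doesn't cover all pairs equally, is easy to rule out when the schedule is generated up front. A minimal sketch, with hypothetical device labels, repetition count and trial layout; it only balances pair coverage and X assignment and says nothing about clip length or music choice:

```python
import itertools
import random
from collections import Counter


def balanced_abx_schedule(devices, reps_per_pair, seed=None):
    """Pairwise ABX schedule covering every pair of devices equally often.

    For each pair, X is each device of the pair in half of the repetitions,
    which device is presented as "A" is chosen at random, and the final
    trial order is shuffled.
    """
    if reps_per_pair % 2:
        raise ValueError("reps_per_pair must be even so X is balanced within each pair")
    rng = random.Random(seed)
    half = reps_per_pair // 2
    trials = []
    for first, second in itertools.combinations(devices, 2):
        for x in [first] * half + [second] * half:
            # Randomize which device of the pair is presented as A and which as B.
            a, b = (first, second) if rng.random() < 0.5 else (second, first)
            trials.append({"A": a, "B": b, "X": x})
    rng.shuffle(trials)
    return trials


# Hypothetical four-way comparison, four repetitions per pair.
devices = ["device_A", "device_B", "device_C", "device_D"]
schedule = balanced_abx_schedule(devices, reps_per_pair=4, seed=1)
pair_counts = Counter(frozenset((t["A"], t["B"])) for t in schedule)
print(len(schedule), "trials;", set(pair_counts.values()))
```

With four devices there are six pairs, so four repetitions per pair gives 24 trials, each pair appearing exactly four times with X split evenly between its two members.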

So, you are not curious whether using different headphones might get him different results? Or whether a certain song is better for testing? You just want the results as is?

What the OP reported was a test of specific devices, using his own ears, his own system, and music content that didn't sound different to him during that one particular test. For some reason there is a strong defensive tendency here to try to refute the results because they don't apply to everyone, to every device under the sun, used in every possible system, with every possible piece of music.

The OP didn't set out to prove that nobody can hear the differences, nor did he set out to prove that these differences can't be heard with any content, under any possible circumstances. So, let's treat this result as the single data point that it is: one that doesn't generalize to the whole world population and doesn't prove any generalizable hypothesis. Just a data point.


Uh huh.

1. ASR is not about doing science or confirming hypotheses -- it's about reporting objective measurements of audio devices

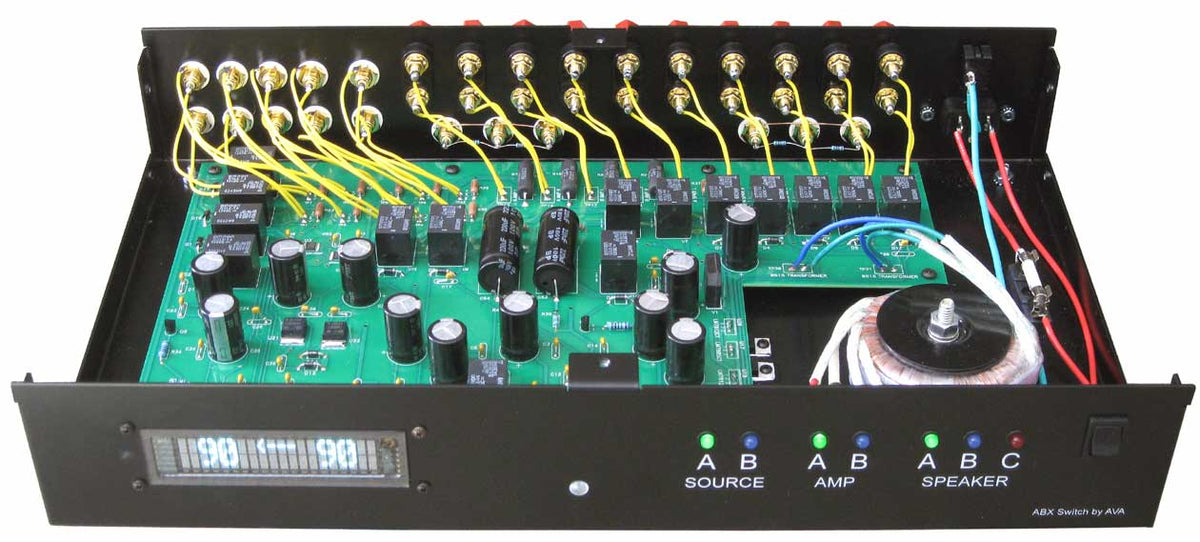

That looks like a helpful little device, and a damn sight cheaper than this baby.

BTW, I recently started using this 1:4 (or 4:1) switchbox from Nobosound.

Absolutely. That's why we are posting here on Audio Engineering Reports, and not over there with the crazies at Audio Science Review.

If OP is satisfied, why did he want to do another round?

To be blunt, I care about my own listening test results, and assume that others do the same. If the result satisfied the OP's curiosity, then I'm perfectly fine with the result he got.

Uh huh.